Modeling Image Structure with Factorized Phase-Coupled Boltzmann Machines

We describe a model for capturing the statistical structure of local amplitude and local spatial phase in natural images. The model is based on a recently developed, factorized third-order Boltzmann machine that was shown to be effective at capturing higher-order structure in images by modeling dependencies among squared filter outputs (Ranzato and Hinton, 2010). Here, we extend this model to $L_p$-spherically symmetric subspaces. In order to model local amplitude and phase structure in images, we focus on the case of two-dimensional subspaces and the $L_2$-norm. When trained on natural images, the model learns subspaces resembling quadrature-pair Gabor filters. We then introduce an additional set of hidden units that model the dependencies among subspace phases. These hidden units form a combinatorial mixture of phase-coupling distributions, concentrated in the sum and difference of phase pairs. When adapted to natural images, these distributions capture local spatial phase structure.

Research Summary

The paper introduces a probabilistic model that simultaneously captures local amplitude and local spatial phase statistics in natural images. Building on the factorized third‑order Boltzmann machine (F3BM) previously proposed by Ranzato and Hinton, the authors extend the architecture to operate on $L_p$‑spherically symmetric subspaces, focusing on two‑dimensional $L_2$‑norm subspaces. In this setting each subspace consists of a pair of linear filters whose responses can be interpreted as a complex number: the magnitude encodes local amplitude while the argument encodes local phase. The original F3BM already models dependencies among squared filter responses (i.e., amplitude energy). By embedding the filters in $L_2$ subspaces, the new model inherits this capability while also providing a natural representation for phase.
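The complex-number reading of a two-dimensional subspace can be illustrated with a minimal NumPy sketch. It is not the paper's code: the hand-built Gabor pair stands in for the learned filters, and the names `gabor_pair` and `amplitude_phase`, as well as all parameter values, are assumptions chosen for illustration.

```python
import numpy as np

def gabor_pair(size=16, wavelength=6.0, theta=0.0, sigma=3.0):
    """Build an (even, odd) quadrature pair of Gabor filters.

    All parameter values are illustrative assumptions, not values from the paper.
    """
    half = size // 2
    ys, xs = np.mgrid[-half:half, -half:half].astype(float)
    # Coordinate along the direction of the sinusoidal carrier.
    u = xs * np.cos(theta) + ys * np.sin(theta)
    envelope = np.exp(-(xs**2 + ys**2) / (2.0 * sigma**2))
    even = envelope * np.cos(2.0 * np.pi * u / wavelength)
    odd = envelope * np.sin(2.0 * np.pi * u / wavelength)
    return even, odd

def amplitude_phase(patch, even, odd):
    """Treat the two filter responses as the real and imaginary parts of one complex number."""
    z = np.sum(patch * even) + 1j * np.sum(patch * odd)
    # |z| is the L2 norm of the response pair (local amplitude);
    # arg(z) is the local phase.
    return np.abs(z), np.angle(z)

rng = np.random.default_rng(0)
patch = rng.standard_normal((16, 16))
even, odd = gabor_pair()
amp, phase = amplitude_phase(patch, even, odd)
```

In this sketch the squared amplitude equals the sum of the two squared filter responses, which is the quantity the original model already depends on, while the phase is unchanged when the patch is rescaled by a positive contrast factor.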

To model phase dependencies, the authors add a second set of hidden units that learn a mixture of “phase‑coupling” distributions. Each phase‑coupling component concentrates probability mass on particular sums and differences of phase pairs, effectively learning preferred relative phase relationships. The hidden units therefore act as a combinatorial mixture, allowing the model to represent a rich repertoire of phase interactions that are observed in natural scenes, such as the consistent phase offsets that occur at edges, corners, and other structured elements.
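One way to picture a phase-coupling component is as a cosine (von Mises-like) term on the sum or difference of two phases. The sketch below rests on that assumption; the summary does not give the paper's exact parameterization, so the functional form and the names `coupling_drive`, `hidden_prob`, `kappa`, and `mu` are hypothetical.

```python
import numpy as np

def coupling_drive(theta, pairs, kappa, mu, sign):
    """Total input to one hypothetical phase-coupling hidden unit.

    theta : (n,) array of subspace phases in radians
    pairs : list of (i, j) index pairs the unit couples
    kappa : (len(pairs),) coupling strengths (assumed form)
    mu    : (len(pairs),) preferred phase offsets (assumed form)
    sign  : +1 to couple phase sums, -1 to couple phase differences
    """
    i, j = np.asarray(pairs).T
    combined = theta[i] + sign * theta[j]
    # Each term is largest when the combined phase equals its preferred offset.
    return np.sum(kappa * np.cos(combined - mu))

def hidden_prob(theta, pairs, kappa, mu, sign, bias=0.0):
    """Probability that a binary hidden unit is on, assuming a logistic response to the drive."""
    return 1.0 / (1.0 + np.exp(-(bias + coupling_drive(theta, pairs, kappa, mu, sign))))

theta = np.array([0.9, 0.4, 2.0])
pairs = [(0, 1)]
kappa = np.array([2.0])
mu = np.array([0.5])
drive_diff = coupling_drive(theta, pairs, kappa, mu, sign=-1)
```

Under this assumed form, a difference coupling is unaffected by adding the same constant to every phase, whereas a sum coupling is not, so the two kinds of unit are sensitive to different aspects of the phase structure.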

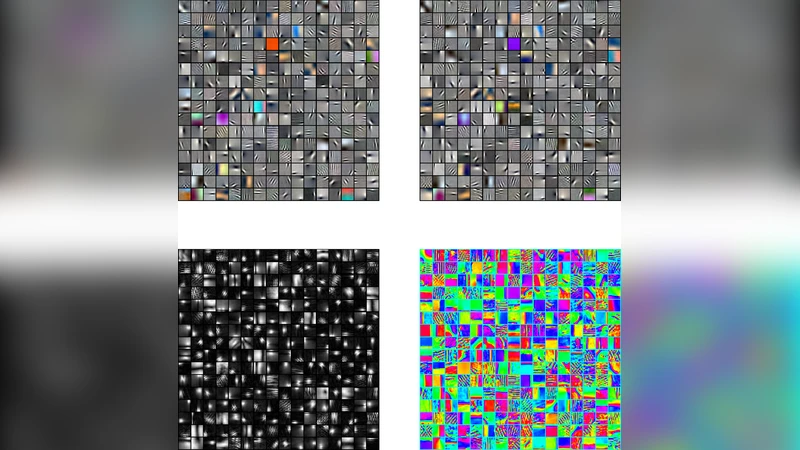

Training is performed on patches extracted from a large corpus of natural images using a contrastive‑divergence‑like algorithm. Both the filter parameters defining the subspaces and the weights of the phase‑coupling hidden units are learned jointly. After training, the learned subspace filters closely resemble quadrature‑pair Gabor filters, mirroring the orientation‑selective, band‑pass characteristics of early visual cortical neurons. The phase‑coupling hidden units exhibit strong activations for specific phase differences (e.g., 0° or 180°) and sums (e.g., 90°), indicating that the model has discovered the statistical regularities of phase alignment that underlie edge and texture structures.
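For readers unfamiliar with contrastive divergence, the sketch below shows a single CD-1 update for a plain binary restricted Boltzmann machine. This is a deliberately simpler stand-in, not the paper's factorized model or its sampler, and the function name `cd1_update` and the learning rate are assumptions.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def cd1_update(v0, W, b_vis, b_hid, rng, lr=0.01):
    """One CD-1 step for a plain binary RBM (illustrative stand-in, not the paper's model).

    v0 : (batch, n_vis) binary data; W : (n_vis, n_hid) weights.
    """
    # Positive phase: hidden probabilities and samples given the data.
    ph0 = sigmoid(v0 @ W + b_hid)
    h0 = (rng.random(ph0.shape) < ph0).astype(float)
    # Negative phase: one Gibbs step down to the visibles and back up.
    pv1 = sigmoid(h0 @ W.T + b_vis)
    v1 = (rng.random(pv1.shape) < pv1).astype(float)
    ph1 = sigmoid(v1 @ W + b_hid)
    n = v0.shape[0]
    # Approximate gradient: data statistics minus reconstruction statistics.
    W = W + lr * (v0.T @ ph0 - v1.T @ ph1) / n
    b_vis = b_vis + lr * (v0 - v1).mean(axis=0)
    b_hid = b_hid + lr * (ph0 - ph1).mean(axis=0)
    return W, b_vis, b_hid

rng = np.random.default_rng(0)
v0 = (rng.random((8, 6)) < 0.5).astype(float)
W = 0.01 * rng.standard_normal((6, 4))
W_new, b_vis_new, b_hid_new = cd1_update(v0, W, np.zeros(6), np.zeros(4), rng)
```

The same pattern of contrasting data-driven statistics with statistics from a short sampling chain would apply to every parameter group that is learned jointly, here the filters and the phase-coupling weights.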

Quantitative evaluation shows that the extended model outperforms the original F3BM in terms of log‑likelihood and reconstruction error, despite having a comparable number of parameters. The improvement is attributed to the explicit modeling of phase relationships, which adds expressive power without a proportional increase in model complexity.

The contributions of the paper are threefold: (1) a principled generalization of the factorized third‑order Boltzmann machine to $L_2$‑norm subspaces that jointly represents amplitude and phase; (2) the introduction of hidden units that learn a mixture of phase‑coupling distributions, thereby capturing higher‑order phase statistics in natural images; and (3) empirical evidence that the learned filters and phase patterns align with known properties of the early visual system, suggesting a biologically plausible computational mechanism. The authors suggest future directions such as extending to higher‑dimensional $L_p$ subspaces, incorporating non‑linear phase interactions, and applying the framework to video or multimodal data where temporal phase dynamics play a role.
