Multi-core: Adding a New Dimension to Computing

The invention of the transistor in 1948 started a new era in technology, known as solid-state electronics. Since then, sustained advances in electronics and fabrication techniques have shrunk devices steadily, fueling the quest for ever higher density and clock speed. That quest has come to a sudden halt because of fundamental bounds imposed by physical laws, yet the demand for more computational power persists. As a result, the microprocessor industry has started exploring a different dimension. The speed of a single processing unit (CPU) is no longer the main concern; the focus has shifted to increasing the number of independent processor cores in a single package. Such processors are commonly known as multi-core processors. Scaling performance through multiple cores has gained so much attention from academia and industry that not only desktops but also laptops, PDAs, cell phones, and even embedded devices now contain these processors. In this paper, we explore state-of-the-art technologies for multi-core processors and existing software tools that support parallelism. We also discuss present and future research trends in this field. From our survey, we conclude that the next few decades will be marked by the success of this “ubiquitous parallel processing”.

💡 Research Summary

The paper traces the evolution of semiconductor technology from the invention of the transistor in 1948 to the present day, emphasizing how relentless scaling of device dimensions and clock frequencies drove performance growth for several decades. By the early 2000s, however, fundamental physical limits (most notably the power wall, voltage scaling saturation, and thermal constraints) prevented further increases in clock speed without exceeding acceptable power budgets. This “frequency scaling wall” forced the microprocessor industry to abandon the traditional approach of a single, ever‑faster core and to explore a new design dimension: integrating multiple independent processing cores onto a single silicon die.
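The trade-off behind the power wall can be illustrated with the standard first-order model of dynamic CMOS power, P = αCV²f. The sketch below is a back-of-the-envelope calculation, not a result from the paper. The function name `dynamic_power`, the normalized units, and the assumption that supply voltage can be lowered in proportion to frequency are illustrative simplifications; leakage power and threshold-voltage limits are ignored.

```python
def dynamic_power(voltage, frequency, activity=1.0, capacitance=1.0):
    """First-order dynamic CMOS power: P = activity * C * V^2 * f (normalized units)."""
    return activity * capacitance * voltage ** 2 * frequency


# Baseline: one core at nominal voltage and frequency (both normalized to 1.0).
single_core_power = dynamic_power(voltage=1.0, frequency=1.0)

# Alternative: two cores, each clocked 20% slower.
# ASSUMPTION: voltage can be reduced by the same 20%, which real silicon
# only approximates.
scale = 0.8
dual_core_power = 2 * dynamic_power(voltage=scale, frequency=scale)

# Ideal throughput, assuming the workload splits perfectly across both cores.
dual_core_throughput = 2 * scale

print(f"power ratio:      {dual_core_power / single_core_power:.3f}")
print(f"throughput ratio: {dual_core_throughput:.3f}")
```

Under these assumptions, two slower cores draw roughly 2% more power than the single fast core while offering up to 1.6 times its throughput. That is the basic trade that makes multi-core designs attractive once frequency scaling stalls.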

The authors describe the two principal hardware philosophies that have emerged. The first, homogeneous multi‑core, packs several identical cores onto one die; each core has its own pipeline and L1/L2 caches, and the cores often share an L3 cache and a memory controller. Intel’s Core i series and AMD’s Ryzen line exemplify this model, scaling from dual‑core to octa‑core and beyond. The second, heterogeneous (“big.LITTLE”‑style) multi‑core, combines high‑performance cores with low‑power efficiency cores in the same package. ARM’s big.LITTLE, Qualcomm’s Kryo, and Apple’s M‑series processors illustrate how this approach balances peak performance with energy efficiency, especially for mobile and embedded platforms.

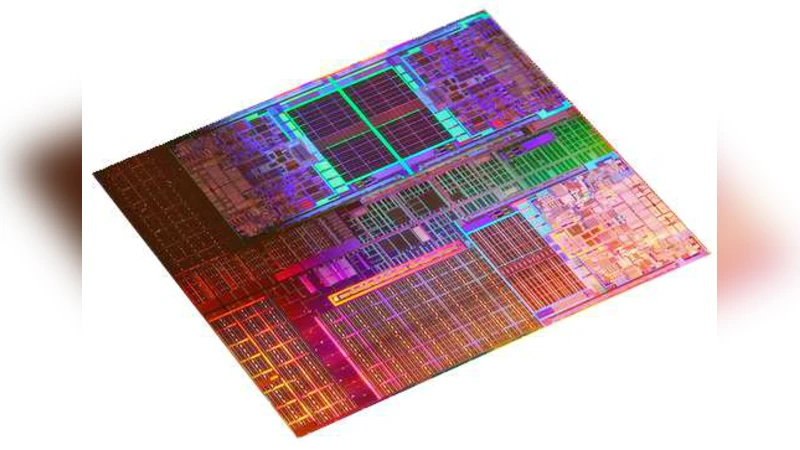

A major hardware challenge in multi‑core systems is the design of memory subsystems and interconnects. Non‑Uniform Memory Access (NUMA) architectures, cache‑coherence protocols (MESI, MOESI), and high‑bandwidth, low‑latency interconnects such as Intel’s Ultra Path Interconnect (UPI) and AMD’s Infinity Fabric are essential for maintaining data consistency and minimizing latency as core counts rise. The paper also highlights emerging 3‑D stacking technologies (Through‑Silicon Vias) and chip‑on‑chip packaging, which physically shorten inter‑die pathways and enable tighter integration of CPUs, memory, and specialized accelerators.
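To make the cache‑coherence idea concrete, here is a minimal sketch of MESI state transitions for a single cache line shared by several cores. It is a teaching model, not the paper's material or any vendor's implementation: the class name `CacheLine` and its methods are hypothetical, bus transactions and write‑backs are only noted in comments, and timing, data values, and evictions are left out.

```python
from enum import Enum


class State(Enum):
    M = "Modified"   # only valid copy, dirty with respect to memory
    E = "Exclusive"  # only valid copy, clean
    S = "Shared"     # one of possibly several clean copies
    I = "Invalid"    # no valid copy


class CacheLine:
    """Tracks the MESI state of one memory line in each core's private cache."""

    def __init__(self, num_cores):
        self.states = [State.I] * num_cores

    def read(self, core):
        if self.states[core] is not State.I:
            return  # read hit: M, E, and S all permit local reads
        # Read miss: a real protocol would broadcast a bus read here.
        others_have_copy = False
        for other, state in enumerate(self.states):
            if other != core and state is not State.I:
                others_have_copy = True
                # An M holder would write back first; M and E downgrade to S.
                self.states[other] = State.S
        self.states[core] = State.S if others_have_copy else State.E

    def write(self, core):
        # Writing requires exclusive ownership: every other copy is invalidated
        # (a no-op when the writer already holds the line in M or E).
        for other in range(len(self.states)):
            if other != core:
                self.states[other] = State.I
        self.states[core] = State.M


line = CacheLine(num_cores=2)
line.read(0)    # core 0 is the only holder
after_first_read = list(line.states)
line.read(1)    # both cores now share the line
after_second_read = list(line.states)
line.write(1)   # core 1 takes ownership, core 0 is invalidated
after_write = list(line.states)
```

The sequence shows why sharing gets more expensive as core counts rise: every write to a shared line forces invalidations in the other caches, and their next read of that line misses.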

On the software side, the authors argue that merely adding cores does not automatically yield performance gains; software must be explicitly parallel. Operating system schedulers have been re‑engineered to be core‑aware, with Linux’s Completely Fair Scheduler (CFS) balancing load across cores while honoring CPU affinity and cgroup controls. Parallel programming models such as OpenMP, Intel Threading Building Blocks (TBB), and the performance‑portability library Kokkos let developers express concurrency at a higher level than raw POSIX threads, which expose thread creation and synchronization directly. For distributed memory systems, MPI remains the de facto standard, while heterogeneous computing leverages CUDA, OpenCL, and newer standards such as SYCL to offload work to GPUs and AI accelerators. The paper notes the growing ecosystem of automatic parallelizing compilers and static analysis tools that attempt to transform legacy sequential code into multi‑threaded equivalents.
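The claim that extra cores do not automatically translate into speed can be quantified with Amdahl's law, which bounds the speedup of a program whose parallelizable fraction is p when run on n cores: S(n) = 1 / ((1 - p) + p / n). The summary does not cite this law; it is added here as a standard illustration, and the function name `amdahl_speedup` and the 90% figure are assumptions chosen for the example.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Upper bound on speedup when only part of a program can run in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Hypothetical workload in which 90% of the run time can be parallelized.
for cores in (1, 2, 4, 8, 64):
    print(f"{cores:3d} cores -> at most {amdahl_speedup(0.9, cores):.2f}x")
```

With 90% of the work parallelizable, eight cores give a speedup of about 4.7, and no number of cores can exceed 10, because the remaining serial 10% always runs on one core.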

Research trends surveyed include “many‑core” designs that extend core counts into the dozens or hundreds (e.g., Intel Xeon Phi, AMD EPYC) for high‑performance computing and data‑center workloads. The authors discuss the importance of scalable interconnect topologies, such as mesh and ring networks, and the role of software‑defined memory hierarchies in exploiting these massive core counts. They also explore emerging paradigms such as neuromorphic cores, which emulate spiking neural networks for ultra‑low‑power parallelism, and quantum co‑processors that promise exponential speed‑ups for specific algorithms. Integrating these novel compute units with traditional CPUs will require new programming models (e.g., OpenMP 5.0 extensions, Kokkos, and domain‑specific languages) and sophisticated runtime systems capable of dynamic workload placement across heterogeneous resources.
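One way to see why interconnect topology matters at high core counts is to compare hop distances on a ring and on a 2‑D mesh. The sketch below is an illustration under simplifying assumptions (64 nodes, a bidirectional ring, a mesh without wraparound links, shortest‑path routing, uniform traffic); the helper names are hypothetical and do not model any specific product.

```python
from itertools import product


def ring_hops(n):
    """Hop counts between all ordered pairs of distinct nodes on a bidirectional ring."""
    return [min(abs(a - b), n - abs(a - b))
            for a, b in product(range(n), repeat=2) if a != b]


def mesh_hops(rows, cols):
    """Hop counts between all ordered pairs of distinct nodes on a 2-D mesh."""
    nodes = list(product(range(rows), range(cols)))
    return [abs(r1 - r2) + abs(c1 - c2)  # Manhattan distance, no wraparound
            for (r1, c1), (r2, c2) in product(nodes, repeat=2)
            if (r1, c1) != (r2, c2)]


def summarize(hops):
    """Return (diameter, average hop count) for a list of pairwise distances."""
    return max(hops), sum(hops) / len(hops)


ring_diameter, ring_avg = summarize(ring_hops(64))
mesh_diameter, mesh_avg = summarize(mesh_hops(8, 8))

print(f"ring of 64: diameter {ring_diameter}, average {ring_avg:.2f} hops")
print(f"8x8 mesh:   diameter {mesh_diameter}, average {mesh_avg:.2f} hops")
```

For 64 nodes the worst‑case distance drops from 32 hops on the ring to 14 on the mesh, and the average from about 16 to about 5. Ring latency grows linearly with core count, while mesh latency grows with its square root, which is one reason rings are usually confined to smaller core counts.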

In conclusion, the paper asserts that the end of frequency scaling has inaugurated an era where performance growth is achieved primarily through parallelism. Both hardware and software ecosystems are converging toward ubiquitous parallel processing, making multi‑core and heterogeneous architectures the dominant paradigm for the next several decades. The authors predict that this shift will reshape everything from consumer devices to large‑scale scientific simulations, establishing parallelism as the default abstraction for all future computing systems.
