The Computational Complexity of Linear Optics

We give new evidence that quantum computers – moreover, rudimentary quantum computers built entirely out of linear-optical elements – cannot be efficiently simulated by classical computers. In particular, we define a model of computation in which identical photons are generated, sent through a linear-optical network, then nonadaptively measured to count the number of photons in each mode. This model is not known or believed to be universal for quantum computation, and indeed, we discuss the prospects for realizing the model using current technology. On the other hand, we prove that the model is able to solve sampling problems and search problems that are classically intractable under plausible assumptions. Our first result says that, if there exists a polynomial-time classical algorithm that samples from the same probability distribution as a linear-optical network, then P^#P = BPP^NP, and hence the polynomial hierarchy collapses to the third level. Unfortunately, this result assumes an extremely accurate simulation. Our main result suggests that even an approximate or noisy classical simulation would already imply a collapse of the polynomial hierarchy. For this, we need two unproven conjectures: the “Permanent-of-Gaussians Conjecture”, which says that it is #P-hard to approximate the permanent of a matrix A of independent N(0,1) Gaussian entries, with high probability over A; and the “Permanent Anti-Concentration Conjecture”, which says that |Per(A)| ≥ √(n!)/poly(n) with high probability over A. We present evidence for these conjectures, both of which seem interesting even apart from our application. This paper does not assume knowledge of quantum optics. Indeed, part of its goal is to develop the beautiful theory of noninteracting bosons underlying our model, and its connection to the permanent function, in a self-contained way accessible to theoretical computer scientists.

💡 Research Summary

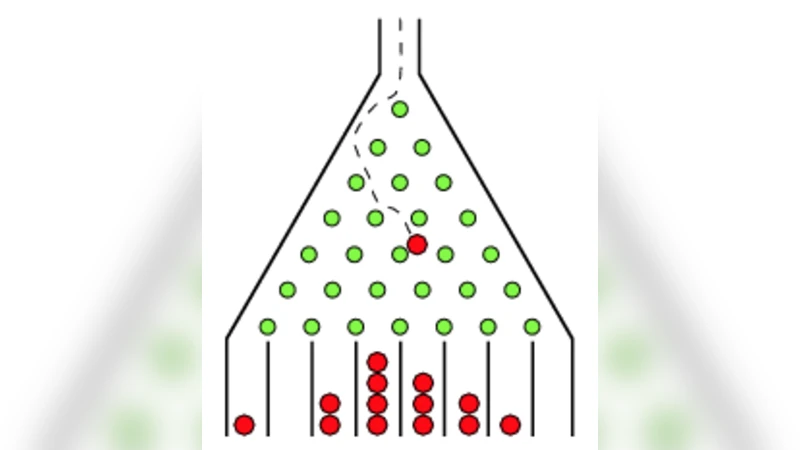

The paper introduces and rigorously analyzes a restricted quantum‑computing model known as BosonSampling, in which identical photons are generated, sent through a passive linear‑optical network (a unitary transformation realized by beam splitters and phase shifters), and then measured non‑adaptively to count photons in each output mode. Although this model is not believed to be universal for quantum computation—it lacks intermediate measurements, feed‑forward, and any non‑linear interaction—it nevertheless generates probability distributions that are intimately tied to the matrix permanent, a well‑known #P‑hard function.

The authors first formalize the model: given n photons injected into m≥n modes, the probability of observing a particular output occupation pattern S is proportional to the squared absolute value of the permanent of an n×n submatrix of the unitary describing the interferometer. Consequently, exact computation of output probabilities would require solving a #P‑hard problem.
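The relationship between output probabilities and permanents can be illustrated with a minimal Python sketch. It assumes one photon in each of the first n input modes and uses the standard normalization Pr[S] = |Per(A_S)|² / (s₁! ⋯ s_m!), where A_S repeats row i of the first n columns of the unitary s_i times. The function names (`permanent`, `haar_unitary`, `output_probability`, `occupation_patterns`) are hypothetical helpers written for this illustration, not code from the paper, and the brute-force approach is only practical for very small n.

```python
import itertools
import math

import numpy as np


def permanent(a):
    """Permanent via Ryser's formula; exponential time, fine for small n."""
    n = a.shape[0]
    if n == 0:
        return 1.0 + 0j
    total = 0j
    for r in range(1, n + 1):
        for cols in itertools.combinations(range(n), r):
            # Product over rows of the row sums restricted to the chosen columns.
            row_sums = a[:, list(cols)].sum(axis=1)
            total += (-1) ** r * np.prod(row_sums)
    return (-1) ** n * total


def haar_unitary(m, rng):
    """Haar-random m x m unitary via QR of a complex Gaussian matrix."""
    z = (rng.normal(size=(m, m)) + 1j * rng.normal(size=(m, m))) / math.sqrt(2)
    q, r = np.linalg.qr(z)
    d = np.diagonal(r)
    return q * (d / np.abs(d))  # fix column phases so the result is Haar-distributed


def output_probability(u, n, s):
    """Probability of occupation pattern s for photons entering modes 0..n-1."""
    rows = [i for i, count in enumerate(s) for _ in range(count)]
    sub = u[rows, :n]  # n x n matrix A_S with row i repeated s[i] times
    norm = math.prod(math.factorial(c) for c in s)
    return abs(permanent(sub)) ** 2 / norm


def occupation_patterns(n, m):
    """All ways to place n indistinguishable photons in m modes."""
    for combo in itertools.combinations_with_replacement(range(m), n):
        s = [0] * m
        for i in combo:
            s[i] += 1
        yield tuple(s)


rng = np.random.default_rng(0)
n, m = 2, 4
u = haar_unitary(m, rng)
probs = {s: output_probability(u, n, s) for s in occupation_patterns(n, m)}
print(sum(probs.values()))  # should be 1 up to floating-point error
```

Summing over all occupation patterns is a useful sanity check: the probabilities should add up to 1 for any unitary. The cost of evaluating even a single permanent grows exponentially with n under every known classical algorithm, which is the source of the hardness discussed next.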

From this observation they derive two major complexity‑theoretic results. The first, an “exact‑sampling” theorem, shows that if a classical polynomial‑time algorithm could sample from the exact BosonSampling distribution, then P^#P would equal BPP^NP, implying a collapse of the polynomial hierarchy (PH) to its third level. This is already strong evidence that the model cannot be simulated efficiently by classical means.
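For orientation, the collapse can be read off from a short chain of standard inclusions. The first step is Toda's theorem, the equality is the hypothetical consequence of an exact classical sampler, and the last step is the relativized form of BPP ⊆ Σ₂; this chain is supplied here as background and is only a sketch of the argument:

$$
\mathsf{PH} \;\subseteq\; \mathsf{P}^{\#\mathsf{P}} \;=\; \mathsf{BPP}^{\mathsf{NP}} \;\subseteq\; \Sigma_3^{\mathsf{P}}
$$

Since the whole hierarchy would then be contained in its third level, all higher levels coincide with it.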

Recognizing that physical experiments cannot achieve perfect precision, the authors extend the argument to approximate sampling. They introduce two unproven but plausible conjectures:

- Permanent‑of‑Gaussians Conjecture (PGC) – Approximating the permanent of an n×n matrix whose entries are independent complex Gaussian N(0,1) variables, within a small relative error, remains #P‑hard on average.

- Permanent Anti‑Concentration Conjecture (PACC) – With high probability over a random Gaussian matrix, the magnitude of its permanent is at least √(n!)/poly(n), i.e., it does not concentrate near zero.

Assuming both conjectures, the authors prove that the existence of a polynomial‑time classical algorithm whose output distribution lies within total variation distance 1/poly(n) of the true BosonSampling distribution would still imply a collapse of the PH. In other words, even a realistic (noisy or approximate) classical simulation of the model would have implausible complexity‑theoretic consequences.

The paper supplies substantial evidence for the conjectures. For PGC, it builds on known worst‑case hardness of the permanent and argues that average‑case hardness should persist for Gaussian ensembles, drawing analogies to random‑matrix theory and the hardness of approximating the permanent of matrices with entries drawn from a finite field. For PACC, the authors perform a probabilistic analysis showing that the permanent of a Gaussian matrix behaves like a sum of many independent random terms, leading to a distribution whose typical magnitude is on the order of √(n!). Numerical simulations for n up to 30 are consistent with the anti‑concentration bound holding with high probability.
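The √(n!) scale can be explored numerically for tiny matrices. The following Python sketch samples matrices with i.i.d. standard complex Gaussian entries and looks at the ratio |Per(X)|²/n!, whose expectation is exactly 1 for this ensemble. It is an illustration under stated assumptions, not the authors' experiment: the helper names, the matrix size, the number of trials, and the threshold 0.01 are all arbitrary choices made here, and the permanent is computed directly from its definition, which is only feasible for very small n.

```python
import itertools
import math

import numpy as np


def permanent_naive(a):
    """Permanent from the definition: sum over permutations of row-column products."""
    n = a.shape[0]
    rows = np.arange(n)
    return sum(np.prod(a[rows, list(sigma)])
               for sigma in itertools.permutations(range(n)))


def normalized_permanents(n, trials, rng):
    """Sample |Per(X)|^2 / n! for X with i.i.d. complex Gaussian entries of variance 1."""
    out = np.empty(trials)
    for t in range(trials):
        x = (rng.normal(size=(n, n)) + 1j * rng.normal(size=(n, n))) / math.sqrt(2)
        out[t] = abs(permanent_naive(x)) ** 2 / math.factorial(n)
    return out


rng = np.random.default_rng(0)
vals = normalized_permanents(3, 3000, rng)
print("mean of |Per|^2 / n!:", vals.mean())
# Fraction of samples whose normalized value falls below an arbitrary small threshold.
print("fraction below 0.01:", np.mean(vals < 0.01))
```

A small fraction of samples near zero is what anti‑concentration predicts, but an experiment at this size says nothing rigorous about how that fraction scales with n.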

Beyond the theoretical results, the authors discuss experimental feasibility. Contemporary photonic technology already provides (i) near‑deterministic single‑photon sources, (ii) low‑loss integrated waveguide interferometers capable of implementing arbitrary unitaries on hundreds of modes, and (iii) high‑efficiency, photon‑number‑resolving detectors. They argue that a BosonSampling experiment with 20–30 photons distributed over several hundred modes is within reach, and that such a scale would be sufficient to place the problem beyond the reach of any known classical algorithm, thereby offering a concrete route to demonstrate “quantum supremacy” without full‑scale universal quantum computers.

Finally, the paper outlines open directions: proving the two conjectures rigorously, extending the analysis to more realistic error models (photon loss, mode‑mismatch, detector dark counts), and exploring whether similar hardness results hold for other non‑interacting particle systems such as fermions. By bridging quantum optics, random matrix theory, and computational complexity, the work establishes BosonSampling as a compelling and experimentally accessible platform for probing the limits of classical computation.
