Exact block-wise optimization in group lasso and sparse group lasso for linear regression

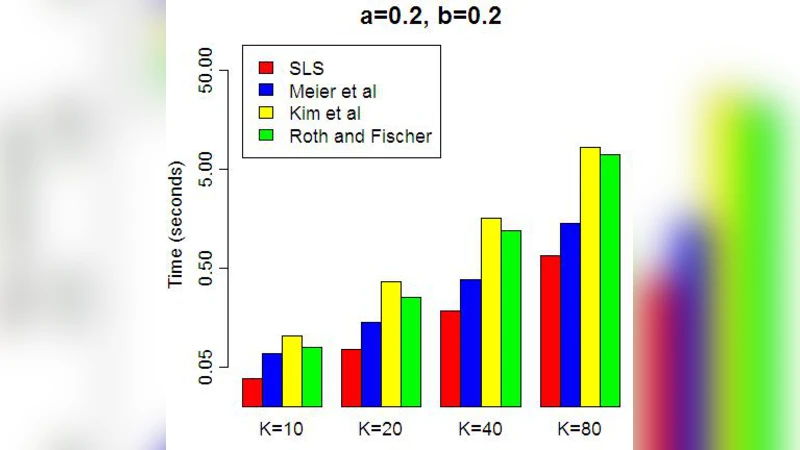

The group lasso is a penalized regression method used to promote sparsity at the group level in regression problems where the covariates are partitioned into groups. Existing methods for finding the group lasso estimator either use gradient projection methods to update the entire coefficient vector simultaneously at each step, or update one group of coefficients at a time using an inexact line search to approximate the optimal value for the group of coefficients when all other groups’ coefficients are fixed. We present a new method of computation for the group lasso in the linear regression case, the Single Line Search (SLS) algorithm, which operates by computing the exact optimal value for each group (when all other coefficients are fixed) with one univariate line search. We perform simulations demonstrating that the SLS algorithm is often more efficient than existing computational methods. We also extend the SLS algorithm to the sparse group lasso problem via the Signed Single Line Search (SSLS) algorithm, and give theoretical results to support both algorithms.

💡 Research Summary

The paper addresses the computational challenges of solving the group lasso and sparse group lasso problems in the context of linear regression, where predictors are pre‑partitioned into groups and sparsity is desired at the group level (and, for the sparse version, also at the individual coefficient level). Traditional algorithms fall into two broad categories. The first updates the entire coefficient vector simultaneously using gradient‑projection or proximal‑gradient schemes; the second adopts a block‑coordinate approach that updates one group at a time but relies on an inexact line search to approximate the optimal group update while keeping the remaining groups fixed. Both strategies can be computationally expensive in high‑dimensional settings: the former requires handling the full gradient and projection at each iteration, while the latter may need many inner iterations to obtain a sufficiently accurate line‑search solution, especially when groups are highly correlated.
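As a point of reference for the first category, the following is a minimal sketch of one full-vector proximal-gradient update. It is not the paper's algorithm and makes several assumptions that the summary does not state: the objective is taken to be \(\tfrac{1}{2}\|y - X\beta\|_2^2 + \lambda \sum_g \|\beta_g\|_2\) with unweighted groups, the step size is assumed to be no larger than the reciprocal of the largest eigenvalue of \(X^\top X\), and the function names `group_soft_threshold` and `prox_gradient_step` are hypothetical.

```python
import math


def group_soft_threshold(v, thresh):
    """Proximal operator of thresh * ||.||_2: shrink a whole block toward zero."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm <= thresh:
        # The entire group is set to zero; this is what creates group sparsity.
        return [0.0] * len(v)
    scale = 1.0 - thresh / norm
    return [scale * x for x in v]


def prox_gradient_step(X, y, beta, groups, lam, step):
    """One update of all coefficients (assumed objective:
    0.5 * ||y - X beta||^2 + lam * sum_g ||beta_g||_2)."""
    n, p = len(X), len(beta)
    # Residual and gradient of the quadratic loss over the full vector.
    resid = [y[i] - sum(X[i][j] * beta[j] for j in range(p)) for i in range(n)]
    grad = [-sum(X[i][j] * resid[i] for i in range(n)) for j in range(p)]
    # Plain gradient step, followed by block-wise shrinkage of each group.
    z = [beta[j] - step * grad[j] for j in range(p)]
    new_beta = list(z)
    for idx in groups:
        shrunk = group_soft_threshold([z[j] for j in idx], step * lam)
        for j, val in zip(idx, shrunk):
            new_beta[j] = val
    return new_beta


# Toy usage: identity design, two groups (illustrative data only).
X = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
y = [3.0, 4.0, 0.1]
beta = prox_gradient_step(X, y, [0.0, 0.0, 0.0], [[0, 1], [2]], lam=1.0, step=1.0)
print(beta)  # first group is shrunk, second group is zeroed out
```

In this toy case the first group has norm 5 and is scaled by 0.8, while the second group has norm 0.1, which falls below the threshold, so it is removed entirely. Each such step touches the full gradient, which is the per-iteration cost the summary attributes to this family of methods.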

To overcome these drawbacks, the authors propose the Single Line Search (SLS) algorithm for the ordinary group lasso. The key observation is that, with a fixed residual vector obtained from all other groups, the sub‑problem for a single group reduces to a one‑dimensional convex optimization problem in the norm of that group’s coefficient vector. By introducing a scalar variable \(t = \|\beta_g\|_2\), the original objective (quadratic loss plus the \(\lambda_2\|\beta_g\|_2\) penalty) becomes a univariate function \(f(t)\) that is smooth and strictly convex on (
