A constructive mean field analysis of multi population neural networks with random synaptic weights and stochastic inputs

We deal with the problem of bridging the gap between two scales in neuronal modeling. At the first (microscopic) scale, neurons are considered individually and their behavior described by stochastic differential equations that govern the time variations of their membrane potentials. They are coupled by synaptic connections acting on their resulting activity, a nonlinear function of their membrane potential. At the second (mesoscopic) scale, interacting populations of neurons are described individually by similar equations. The equations describing the dynamical and the stationary mean field behaviors are considered as functional equations on a set of stochastic processes. Using this new point of view allows us to prove that these equations are well-posed on any finite time interval and to provide a constructive method for effectively computing their unique solution. This method is proved to converge to the unique solution and we characterize its complexity and convergence rate. We also provide partial results for the stationary problem on infinite time intervals. These results shed some new light on such neural mass models as the one of Jansen and Rit \cite{jansen-rit:95}: their dynamics appears as a coarse approximation of the much richer dynamics that emerges from our analysis. Our numerical experiments confirm that the framework we propose and the numerical methods we derive from it provide a new and powerful tool for the exploration of neural behaviors at different scales.

💡 Research Summary

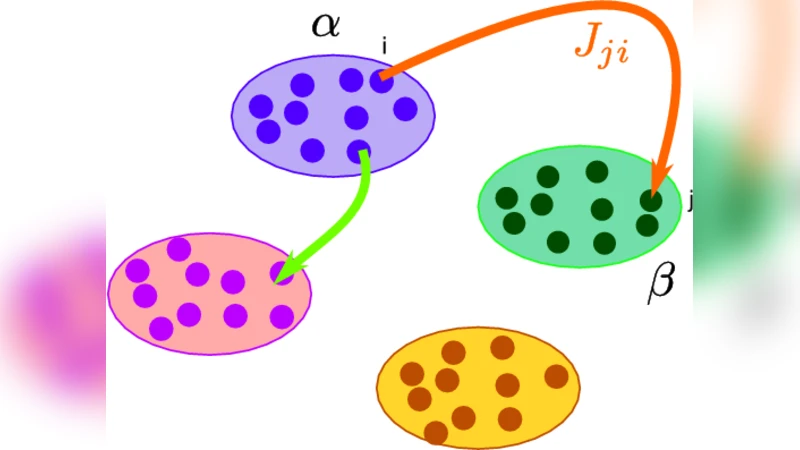

The paper addresses a fundamental gap in computational neuroscience: how to rigorously connect microscopic descriptions of individual neurons with mesoscopic descriptions of interacting neuronal populations. At the microscopic level each neuron’s membrane potential is modeled by a stochastic differential equation (SDE) that incorporates passive leakage, synaptic input, and stochastic external drive. Synaptic input is expressed as a weighted sum of the nonlinear firing rates of presynaptic neurons; the weights themselves are treated as random variables with a prescribed mean and variance, reflecting the heterogeneity observed in biological synapses.
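The microscopic model described above can be illustrated with a short simulation. This is a minimal sketch under stated assumptions, not the authors' implementation: it assumes a logistic sigmoid for the firing-rate nonlinearity, Gaussian synaptic weights whose mean and variance are scaled by the presynaptic population size, a constant external drive with additive white noise, and an Euler-Maruyama time discretization. The function name `simulate_network` and all parameter values are hypothetical.

```python
import numpy as np


def sigmoid(v):
    """Firing-rate nonlinearity (assumed logistic; the source does not fix its form)."""
    return 1.0 / (1.0 + np.exp(-v))


def simulate_network(sizes, j_mean, j_std, tau=1.0, drive=0.0,
                     noise=0.1, t_end=1.0, dt=0.01, seed=0):
    """Euler-Maruyama simulation of leaky neurons with random synaptic weights.

    sizes  : number of neurons in each population
    j_mean : (P, P) mean coupling from population column -> population row
    j_std  : (P, P) standard deviation of that coupling
    """
    rng = np.random.default_rng(seed)
    sizes = np.asarray(sizes)
    j_mean = np.asarray(j_mean, dtype=float)
    j_std = np.asarray(j_std, dtype=float)

    # Population label of every neuron, e.g. [0, 0, 0, 1, 1].
    labels = np.repeat(np.arange(len(sizes)), sizes)
    n = labels.size

    # Assumed scaling: mean ~ 1/N and variance ~ 1/N of the presynaptic population.
    n_pre = sizes[labels][None, :]
    mean = j_mean[labels][:, labels] / n_pre
    std = j_std[labels][:, labels] / np.sqrt(n_pre)
    weights = rng.normal(mean, std)  # drawn once, then frozen

    steps = int(round(t_end / dt))
    v = np.zeros((steps + 1, n))
    for k in range(steps):
        # Leak + weighted sum of presynaptic firing rates + external drive.
        drift = -v[k] / tau + weights @ sigmoid(v[k]) + drive
        v[k + 1] = v[k] + drift * dt + noise * np.sqrt(dt) * rng.standard_normal(n)
    return labels, v


# Two populations (50 and 30 neurons) with illustrative coupling values.
labels, v = simulate_network(
    sizes=[50, 30],
    j_mean=[[1.0, -2.0], [1.5, -0.5]],
    j_std=[[0.2, 0.2], [0.2, 0.2]],
)
# Empirical population averages: the quantities a mesoscopic description tracks.
pop_means = np.stack([v[:, labels == p].mean(axis=1) for p in range(2)], axis=1)
```

Averaging the membrane potentials within each population, as in `pop_means`, gives an empirical counterpart of the population-level processes that the mesoscopic description is meant to capture as the population sizes grow.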

The authors then group neurons that share the same statistical properties into distinct populations. For each population they introduce a stochastic process that represents the collective membrane potential dynamics. By viewing the set of population processes as a functional object, they formulate the “mean‑field” equations as functional equations on a space of stochastic processes. This viewpoint is novel: instead of deriving deterministic mean‑field limits via law‑of‑large‑numbers arguments, they keep the full stochastic character and treat the mean‑field map as an operator on a Banach space of square‑integrable processes.

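The central theoretical contribution is a rigorous proof that, on any finite time interval, the mean‑field equations are well‑posed: they admit a unique solution, and the constructive method proposed for computing it is proved to converge to that solution.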
