Rank k Cholesky Up/Down-dating on the GPU: gpucholmodV0.2

In this note we briefly describe our Cholesky modification algorithm for streaming multiprocessor architectures. Our implementation is available in C++ with Matlab binding, using CUDA to utilise the graphics processing unit (GPU). Limited speed ups are possible due to the bandwidth bound nature of the problem. Furthermore, a complex dependency pattern must be obeyed, requiring multiple kernels to be launched. Nonetheless, this makes for an interesting problem, and our approach can reduce the computation time by a factor of around 7 for matrices of size 5000 by 5000 and k=16, in comparison with the LINPACK suite running on a CPU of comparable vintage. Much larger problems can be handled however due to the O(n) scaling in required GPU memory of our method.

💡 Research Summary

The paper presents a GPU‑oriented algorithm for rank‑k Cholesky up‑dating and down‑dating, together with a concrete implementation called gpucholmodV0.2. The authors start by reviewing the classic problem: given a symmetric positive‑definite matrix A with its Cholesky factor L (so that A = L Lᵀ), a low‑rank modification A ← A ± U Uᵀ (where U ∈ ℝⁿˣᵏ) can be accommodated by updating L rather than recomputing the entire factorisation. On CPUs this operation is well‑studied, but its performance is limited by irregular memory accesses and poor cache utilisation, especially for large n.

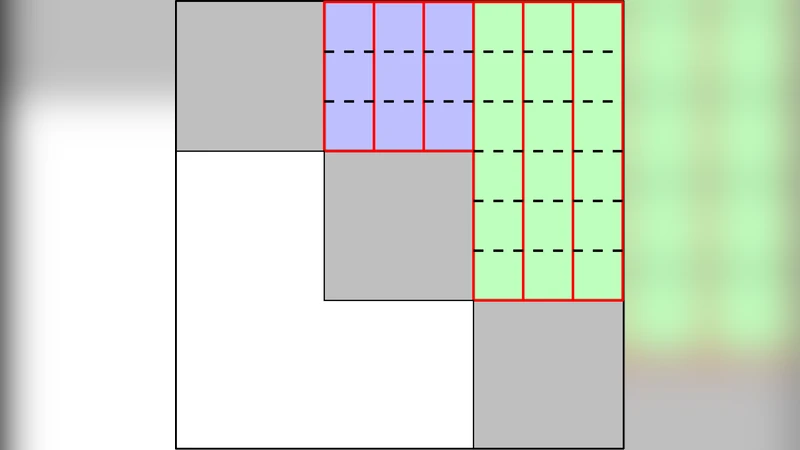

To exploit the massive parallelism of modern streaming‑multiprocessor (SM) GPUs, the authors redesign the algorithm in three CUDA kernels. The first kernel extracts the k columns of L that correspond to the modification and forms the dense “update matrix” W. The second kernel builds the small k × k matrix S = I + WᵀW and computes its Cholesky factor R (S = R Rᵀ). Because k is typically much smaller than n, this kernel can be executed efficiently using shared memory and even Tensor‑Core instructions on newer hardware. The third kernel applies the transformation L ← L – W R⁻¹ᵀ to obtain the updated Cholesky factor. The three kernels must be launched sequentially because each depends on the results of the previous one, forming a directed‑acyclic‑graph (DAG) of dependencies. The authors use CUDA streams to overlap data transfers and kernel execution where possible, but the overall pipeline remains bandwidth‑bound.

Memory usage is carefully controlled: the original L matrix is kept in global memory (O(n²) storage), while the auxiliary structures W (O(n k)), S (O(k²)) and R (O(k²)) add only linear overhead in n. Consequently, the method scales as O(n) in GPU memory, allowing matrices far larger than the device’s on‑chip memory. The authors also provide a MATLAB interface, making the library accessible to a broad scientific audience.

Performance experiments compare gpucholmodV0.2 against a reference LINPACK‑based CPU implementation on a comparable vintage workstation. For a 5000 × 5000 matrix with k = 16, the GPU version achieves roughly a 7× speed‑up. The authors note that the speed‑up plateaus as k grows because the algorithm’s arithmetic intensity does not increase proportionally; the dominant cost remains moving data between global memory and the SMs. Nevertheless, the linear memory scaling means that matrices of size 10⁴ × 10⁴ or larger can be processed on a single GPU, which would be infeasible on the tested CPU due to memory constraints.

The discussion section outlines several avenues for future work. Multi‑GPU extensions could partition the matrix across devices and use peer‑to‑peer communication to handle even larger problems. Exploiting newer architectural features such as Tensor Cores for the small k × k Cholesky factor could raise arithmetic intensity and reduce the bandwidth bottleneck. Adaptive strategies for very large k (e.g., k ≈ n/10) might involve hybrid CPU‑GPU pipelines or blocked algorithms that keep the most active data on‑chip. Finally, the authors envision real‑time streaming applications—such as Kalman filtering or online Gaussian process regression—where continuous low‑rank updates are required, and the presented pipeline could be integrated into a larger data‑flow system.

In summary, the paper delivers a practical, well‑engineered solution for rank‑k Cholesky updates on GPUs, demonstrates measurable speed‑ups on realistic problem sizes, and provides a clear roadmap for extending the approach to larger scales and newer hardware. The work is valuable for researchers and practitioners in numerical linear algebra, high‑performance computing, and any domain where fast, incremental matrix factorizations are essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment