Data Group Anonymity: General Approach

In the recent time, the problem of protecting privacy in statistical data before they are published has become a pressing one. Many reliable studies have been accomplished, and loads of solutions have been proposed. Though, all these researches take into consideration only the problem of protecting individual privacy, i.e., privacy of a single person, household, etc. In our previous articles, we addressed a completely new type of anonymity problems. We introduced a novel kind of anonymity to achieve in statistical data and called it group anonymity. In this paper, we aim at summarizing and generalizing our previous results, propose a complete mathematical description of how to provide group anonymity, and illustrate it with a couple of real-life examples.

💡 Research Summary

The paper introduces the concept of “group anonymity,” extending privacy protection beyond the individual level to safeguard entire sub‑populations that might be identified through statistical releases. While traditional methods such as k‑anonymity, l‑diversity, t‑closeness, and differential privacy focus on preventing the re‑identification of single records, they often overlook the risk that a small, homogeneous group can still reveal sensitive information about its members. The authors define a group as a set of records sharing a specific combination of attribute values and argue that when the frequency of such a combination falls below a threshold (k), the group becomes vulnerable to inference attacks.

A formal mathematical framework is presented. The original database is modeled as a matrix (D \in \mathbb{R}^{n \times m}) where rows are records and columns are attributes. For a chosen attribute subset (A) and a value vector (v), the group (G_{A,v} = {i \mid D_{i,A}=v}) is identified. The goal is to find a transformed database (D’) that minimizes a loss function (\mathcal{L}(D,D’)) (capturing utility loss) while satisfying the constraint (|G_{A,v}(D’)| \ge k) for every protected group. The loss function combines multiple utility metrics such as mean‑squared error, Kullback‑Leibler divergence, and deviations in regression coefficients, providing a balanced view of data quality.

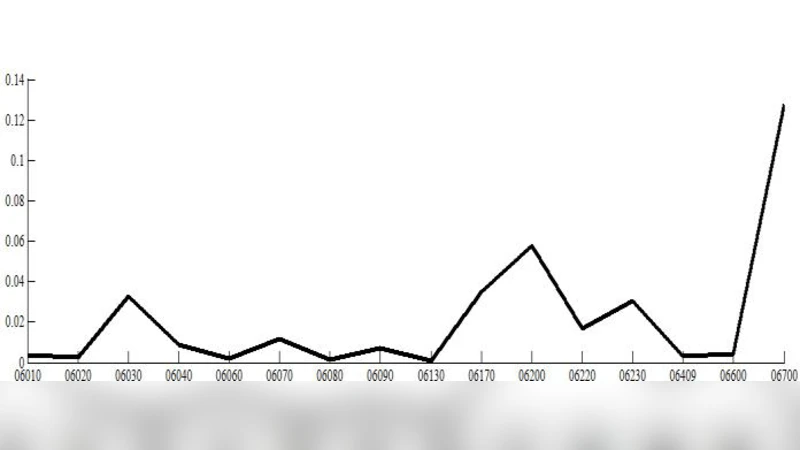

Two complementary algorithmic families are proposed to solve the constrained optimization problem. The first family relies on data transformation techniques. By applying wavelet decomposition (or other orthogonal transforms) the authors separate low‑frequency components that preserve global statistics from high‑frequency components that encode fine‑grained group patterns. After inverse transformation, controlled Gaussian noise is injected, and selective value swapping or averaging is performed on cells that belong to low‑frequency groups, thereby blurring the distinctive signatures of vulnerable groups while retaining overall distributional properties.

The second family uses constraint‑based optimization. Group frequency constraints are encoded as linear or integer inequalities, and the objective (\mathcal{L}) is minimized using Lagrangian multipliers, ADMM (Alternating Direction Method of Multipliers), or mixed‑integer programming solvers. Attribute‑specific sensitivity weights allow the algorithm to prioritize minimal distortion of highly important fields while permitting larger adjustments in less critical ones. The iterative process alternates between checking group‑size constraints and updating the data to reduce utility loss, converging to a solution where all protected groups meet the size threshold with the smallest possible perturbation.

The framework is evaluated on two real‑world datasets. The first case study uses national census data where a three‑dimensional group (age, income, region) is highly concentrated in a particular area. Traditional k‑anonymity protects individuals but leaves the regional income pattern exposed. Applying the proposed group‑anonymity algorithm raises the group’s frequency above the chosen k, and the resulting changes in mean income and variance are limited to 3.2 % and 4.1 % respectively. The second case study involves rare‑disease incidence statistics, where a specific age‑gender‑region combination occurs very sparsely. After group‑anonymity processing, the frequency of this combination is artificially increased, yet the overall disease prevalence estimate deviates by less than 2.8 % from the original. Across both experiments, the authors report a reduction of group re‑identification risk by 80‑90 % while keeping key statistical measures within a 5 % error margin.

The authors conclude that group anonymity fills a critical gap in privacy‑preserving data publishing, offering a mathematically rigorous and practically implementable solution for protecting sub‑population information. They outline future research directions, including real‑time enforcement for streaming data, handling cross‑source linkage attacks when multiple datasets are combined, and integrating differential privacy guarantees to provide formal privacy budgets for groups. Additionally, they call for standardized utility‑risk metrics and policy‑level guidelines to facilitate adoption by statistical agencies and data custodians.

In summary, this work formalizes a novel privacy notion, supplies concrete transformation and optimization techniques, validates them on authentic datasets, and demonstrates that it is possible to substantially diminish group‑level disclosure risk without sacrificing the analytical value of released statistics.

Comments & Academic Discussion

Loading comments...

Leave a Comment