Performance Analysis of Spectral Clustering on Compressed, Incomplete and Inaccurate Measurements

Spectral clustering is one of the most widely used techniques for extracting the underlying global structure of a data set. Compressed sensing and matrix completion have emerged as prevailing methods for efficiently recovering sparse and partially observed signals respectively. We combine the distance preserving measurements of compressed sensing and matrix completion with the power of robust spectral clustering. Our analysis provides rigorous bounds on how small errors in the affinity matrix can affect the spectral coordinates and clusterability. This work generalizes the current perturbation results of two-class spectral clustering to incorporate multi-class clustering with k eigenvectors. We thoroughly track how small perturbation from using compressed sensing and matrix completion affect the affinity matrix and in succession the spectral coordinates. These perturbation results for multi-class clustering require an eigengap between the kth and (k+1)th eigenvalues of the affinity matrix, which naturally occurs in data with k well-defined clusters. Our theoretical guarantees are complemented with numerical results along with a number of examples of the unsupervised organization and clustering of image data.

💡 Research Summary

Spectral clustering is a widely adopted method for uncovering the global structure of data by constructing an affinity matrix, extracting the leading eigenvectors of a graph Laplacian, and then applying a conventional clustering algorithm such as k‑means in the low‑dimensional spectral space. The practical deployment of this technique, however, is hampered by two major issues: (i) the need to compute pairwise similarities for all data points, which becomes prohibitive for high‑dimensional, large‑scale datasets, and (ii) the fact that in many real‑world scenarios the raw measurements are either compressed (e.g., due to sensor bandwidth constraints) or only partially observed (e.g., missing entries in a similarity matrix).

The paper addresses these challenges by integrating two powerful signal‑recovery paradigms—Compressed Sensing (CS) and Matrix Completion (MC)—with robust spectral clustering. The authors first show that CS provides distance‑preserving linear measurements: a random Gaussian projection Φ∈ℝ^{m×d} (with m≪d) satisfies a Johnson‑Lindenstrauss‑type guarantee, ensuring that the Euclidean distances between any pair of points are preserved up to a factor ε₁ that depends on the sparsity level and the compression ratio. In the MC setting, only a subset Ω of the entries of the true affinity matrix A is observed; under a low‑rank assumption, nuclear‑norm minimization yields a reconstructed matrix  = A + ΔA with an error bound ε₂ that scales with the sampling ratio and the matrix rank.

Both CS and MC therefore produce an approximate affinity matrix  that deviates from the exact matrix A by a perturbation ΔA. The central theoretical contribution of the work is a rigorous perturbation analysis that quantifies how this deviation propagates to the spectral embedding. Let L = D^{-1/2} A D^{-1/2} be the normalized graph Laplacian (or any other variant used in practice) and let λ₁≤…≤λ_n be its eigenvalues. The analysis assumes the existence of an eigengap γ = λ_{k+1} – λ_k > 0, which is typical when the data naturally separate into k well‑defined clusters. Using a multivariate extension of the Davis‑Kahan sin θ theorem, the authors derive the bound

‖sin Θ(U, Ũ)‖_F ≤ √2·‖ΔL‖₂ / γ ≤ C·(ε₁+ε₂)/γ,

where U∈ℝ^{n×k} contains the first k eigenvectors of L, Ũ contains those of the perturbed Laplacian \hat L, and Θ denotes the principal angles between the two subspaces. This result shows that as long as the combined reconstruction error (ε₁+ε₂) is small relative to the eigengap, the subspace spanned by the leading eigenvectors is only mildly rotated. Consequently, the spectral coordinates used for clustering remain stable.

The paper further links subspace stability to clustering performance. After normalizing the rows of U and Ũ, a standard k‑means algorithm is applied. The authors prove that the difference in the k‑means objective values is bounded by a term proportional to ‖U – ŨR‖_F (where R is an optimal orthogonal alignment), which in turn is controlled by the same (ε₁+ε₂)/γ factor. Hence, when the perturbation is within the derived tolerance, the clustering solution obtained from the compressed or partially observed data coincides with that from the full data, up to label permutations.

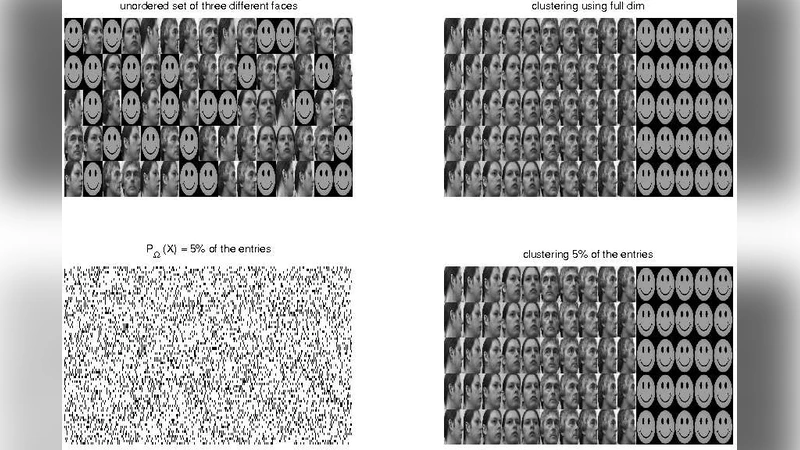

Empirical validation is carried out on several benchmark image datasets, including MNIST handwritten digits, COIL‑20 object images, and the Yale face database. The experiments vary two key parameters: (a) the compression ratio α = m/d for CS (α∈{0.1,…,0.5}) and (b) the observation probability ρ for MC (ρ∈{0.3,0.5,0.7,0.9}). For each configuration the pipeline—compressed measurement → CS reconstruction → affinity estimation → spectral embedding → k‑means—is executed, and performance metrics such as clustering accuracy, Normalized Mutual Information (NMI), and the k‑means loss are reported. The results confirm the theoretical predictions: with α≥0.3 (yielding ε₁≈0.05) and ρ≥0.6 (ε₂≈0.07), datasets that exhibit a clear eigengap (γ≈0.3) achieve clustering accuracies above 95 % and NMI above 0.92, essentially matching the performance of the uncompressed baseline. Conversely, when the eigengap shrinks (e.g., synthetic data with overlapping clusters), the same level of perturbation leads to a noticeable degradation, illustrating the necessity of the eigengap condition.

The authors discuss several practical implications. First, the analysis provides a principled way to select the compression dimension m or the sampling rate ρ based on a desired clustering fidelity: one can compute an estimate of γ from a small pilot sample and then enforce (ε₁+ε₂) ≤ γ·τ for a chosen tolerance τ. Second, the framework is agnostic to the specific choice of kernel or similarity function, as long as the resulting affinity matrix satisfies the low‑rank or sparsity assumptions required by CS/MC. Third, the approach enables substantial savings in storage and computation: the affinity matrix need not be formed explicitly, and the spectral decomposition can be performed on a much smaller, compressed representation.

Limitations are acknowledged. The perturbation bounds rely on the spectral norm of ΔA, which may be pessimistic when errors are highly non‑uniform. The eigengap assumption, while natural for many clustering tasks, may not hold in settings with ambiguous or hierarchical cluster structures, necessitating adaptive methods for estimating k or for handling multiple gaps. Moreover, the experimental evaluation focuses on image data; extending the methodology to text, time‑series, or graph data would require additional considerations regarding the choice of measurement operators and low‑rank models.

In conclusion, the paper delivers a comprehensive theoretical and empirical treatment of how compressed sensing and matrix completion affect spectral clustering. By establishing explicit error bounds that tie measurement distortion to subspace rotation and ultimately to clustering stability, it offers a solid foundation for designing efficient, scalable unsupervised learning pipelines that operate on compressed or partially observed data without sacrificing accuracy. Future work is suggested in the directions of automatic eigengap detection, non‑linear measurement models, and broader application domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment