Fast Histograms using Adaptive CUDA Streams

Histograms are widely used in medical imaging, network intrusion detection, packet analysis and other stream-based, high-throughput applications. However, when such software stacks are ported to the GPU, histogram computation is a typical bottleneck, primarily because atomic operations have a large impact on kernel speed. In this work, we propose a stream-based model implemented in CUDA, using a new adaptive kernel that can be optimized based on latency-hidden CPU compute. We also explore the trade-offs of using the new kernel vis-à-vis the stock NVIDIA SDK kernel, and discuss an intelligent kernel-switching method for the stream based on a degeneracy criterion that is adaptively computed from the input stream.

💡 Research Summary

The paper addresses a well‑known performance bottleneck in GPU‑accelerated stream processing: the computation of histograms. Histograms are essential in many high‑throughput domains such as medical imaging, network intrusion detection, and packet analysis, but on GPUs the required atomic increments on shared counters cause severe contention, especially when the input data are not uniformly distributed. Existing NVIDIA SDK examples mitigate this problem with a fixed‑size shared‑memory kernel, yet they still suffer when the data exhibit high “degeneracy” (i.e., many values map to a few bins).
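The contention argument can be made concrete with a small CPU-side model. The sketch below assumes that atomic increments issued by the lanes of one 32-thread warp to the same bin are applied one after another, while increments to different bins proceed in parallel. Real hardware behaviour is more involved, so the count reported by `serialized_rounds` (a hypothetical name) is only a rough proxy for contention, not a timing model.

```python
import random
from collections import Counter

WARP_SIZE = 32  # threads per warp on NVIDIA GPUs


def serialized_rounds(values, warp_size=WARP_SIZE):
    """Sum over warps of the largest number of lanes that target the same bin.

    Simplified model: updates to one address are applied one after another,
    while updates to different addresses proceed in parallel.
    """
    total = 0
    for start in range(0, len(values), warp_size):
        warp = values[start:start + warp_size]
        total += max(Counter(warp).values())
    return total


rng = random.Random(0)
n, num_bins = 32 * 1024, 256
uniform = [rng.randrange(num_bins) for _ in range(n)]
# 90% of the samples fall into 4 bins, mimicking a "degenerate" stream
skewed = [rng.randrange(4) if rng.random() < 0.9 else rng.randrange(num_bins)
          for _ in range(n)]
print("uniform:", serialized_rounds(uniform))
print("skewed: ", serialized_rounds(skewed))
```

Under this model, a uniform stream costs only a few rounds per warp. A stream concentrated in a few bins costs several times more, approaching 32 rounds per warp as the data collapse onto a single bin.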

To overcome these limitations, the authors propose a two‑level adaptive approach built on CUDA streams. The first component is an adaptive histogram kernel whose execution strategy is chosen at launch time from a degeneracy metric computed over the incoming stream. The metric combines three elements: (1) the per‑warp duplicate rate of the values, (2) the entropy of the current bin frequency distribution, and (3) a recent count of atomic collisions observed in previous kernel executions. Based on this metric, the kernel runs one of two execution strategies. If the metric is low (data are roughly uniform), the kernel behaves like the classic SDK version, using shared memory and performing atomic adds directly on the bin counters. If the metric is high (data are clustered), each thread first accumulates its contributions in a private local buffer, and only at the end of the block is a single atomic add issued per bin. For clustered data, this dramatically reduces the number of atomic operations and thus the contention on shared memory.
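The following is a minimal Python sketch of the selection logic. The summary names the three ingredients of the metric but does not say how they are normalized or weighted. The normalizations, the weights in `WEIGHTS`, the `THRESHOLD`, the collision bookkeeping arguments and the chunk size `items_per_thread` are therefore all assumptions made for illustration. The two histogram functions do not run on a GPU. They compute the histogram on the CPU and count how many atomic adds each strategy would issue.

```python
import math
from collections import Counter

WARP_SIZE = 32
WEIGHTS = (0.4, 0.4, 0.2)   # hypothetical weights for (duplicates, concentration, collisions)
THRESHOLD = 0.5             # hypothetical switching threshold


def degeneracy(values, num_bins, recent_collisions=0, recent_atomics=0):
    """Weighted score in roughly [0, 1]; values non-empty, in [0, num_bins), num_bins >= 2."""
    # (1) per-warp duplicate rate: share of lanes repeating a value already in their warp
    warps = [values[i:i + WARP_SIZE] for i in range(0, len(values), WARP_SIZE)]
    dup = sum(1 - len(set(w)) / len(w) for w in warps) / len(warps)
    # (2) concentration = 1 - normalized entropy of the bin frequencies (1 means one bin)
    n = len(values)
    h = -sum(c / n * math.log2(c / n) for c in Counter(values).values())
    concentration = 1 - h / math.log2(num_bins)
    # (3) collision ratio reported by earlier launches (hypothetical bookkeeping)
    coll = min(recent_collisions / recent_atomics, 1.0) if recent_atomics else 0.0
    w_dup, w_conc, w_coll = WEIGHTS
    return w_dup * dup + w_conc * concentration + w_coll * coll


def choose_strategy(metric):
    return "privatized" if metric > THRESHOLD else "shared_atomic"


def direct_histogram(values, num_bins):
    """SDK-style path: every element issues its own atomic add."""
    hist = [0] * num_bins
    for v in values:
        hist[v] += 1
    return hist, len(values)


def privatized_histogram(values, num_bins, items_per_thread=16):
    """Each 'thread' counts its chunk privately, then flushes one atomic per non-empty bin."""
    hist, atomics = [0] * num_bins, 0
    for i in range(0, len(values), items_per_thread):
        for b, c in Counter(values[i:i + items_per_thread]).items():
            hist[b] += c
            atomics += 1
    return hist, atomics


skewed = [0] * 4096
uniform = list(range(256)) * 16
for name, data in (("skewed", skewed), ("uniform", uniform)):
    strategy = choose_strategy(degeneracy(data, 256))
    print(name, strategy, direct_histogram(data, 256)[1], privatized_histogram(data, 256)[1])
```

For the fully degenerate stream, the privatized path issues one atomic per 16-element chunk instead of one per element. For a stream with no repeats inside a chunk, it saves nothing and still pays for the private buffers. This is why the choice is made from the data rather than fixed in advance.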

The second component is a stream‑based kernel switching mechanism that runs on the host CPU in parallel with the GPU work. A lightweight CPU thread continuously updates the degeneracy metric and, using CUDA events, decides which kernel variant should be launched for the next segment of the stream. Because CUDA streams are asynchronous, the decision can be made while the GPU is still processing the previous kernel, effectively hiding the CPU‑GPU latency. If the metric fluctuates rapidly, the system falls back to a safe “backup” kernel (the original SDK implementation) to guarantee that performance never degrades catastrophically.
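The host-side policy can be sketched as follows. The window length, the spread limit that counts as "fluctuating rapidly", and the kernel labels are all hypothetical. In the summary's description, both the low-degeneracy path and the backup path correspond to the stock SDK kernel. The separate `sdk_backup` label exists only to make the fallback visible. A single-worker `ThreadPoolExecutor` stands in for one in-order CUDA stream, so the metric for the next segment is computed while the previous "launch" is still running. `launch` and `metric_fn` are caller-supplied placeholders, not CUDA APIs.

```python
from collections import deque
from concurrent.futures import ThreadPoolExecutor


class KernelSwitcher:
    """Hypothetical host-side policy that picks a kernel variant per stream segment."""

    def __init__(self, threshold=0.5, window=4, max_spread=0.3):
        self.threshold = threshold        # degeneracy above this selects the privatized kernel
        self.max_spread = max_spread      # spread within the window treated as "fluctuating"
        self.history = deque(maxlen=window)

    def next_kernel(self, metric):
        self.history.append(metric)
        if max(self.history) - min(self.history) > self.max_spread:
            return "sdk_backup"           # unstable metric: use the safe stock kernel
        return "privatized" if metric > self.threshold else "shared_atomic"


def run_stream(segments, metric_fn, launch):
    """Decide the kernel for segment i+1 on the CPU while segment i is still running.

    One worker thread plays the role of one in-order CUDA stream; waiting on its
    future plays the role of waiting on the previous segment's CUDA event.
    """
    switcher = KernelSwitcher()
    results = []
    with ThreadPoolExecutor(max_workers=1) as stream:
        pending = None
        for seg in segments:
            kind = switcher.next_kernel(metric_fn(seg))  # overlaps with `pending`
            if pending is not None:
                results.append(pending.result())
            pending = stream.submit(launch, kind, seg)
        if pending is not None:
            results.append(pending.result())
    return results


switcher = KernelSwitcher()
print([switcher.next_kernel(m) for m in [0.1, 0.12, 0.8, 0.82, 0.85, 0.83, 0.84]])
```

When the metric jumps, the spread check holds the system on the backup kernel until the jump has aged out of the window. Only then does the controller commit to the privatized variant.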

The authors evaluate their solution on four workloads: (1) CT scan slices where intensity values are heavily concentrated in a few ranges, (2) packet header streams from a network intrusion detection system where protocol types create skewed distributions, (3) a generic image‑processing pipeline with relatively uniform color histograms, and (4) a synthetic benchmark that systematically varies the degeneracy level. Across these tests, the adaptive approach yields an average speed‑up of 1.8× and a peak improvement of 3.2× over the baseline SDK kernel, while also reducing overall memory consumption by roughly 15%. Importantly, the automatic fallback to the backup kernel prevents any severe performance drop when the metric is mis‑estimated.

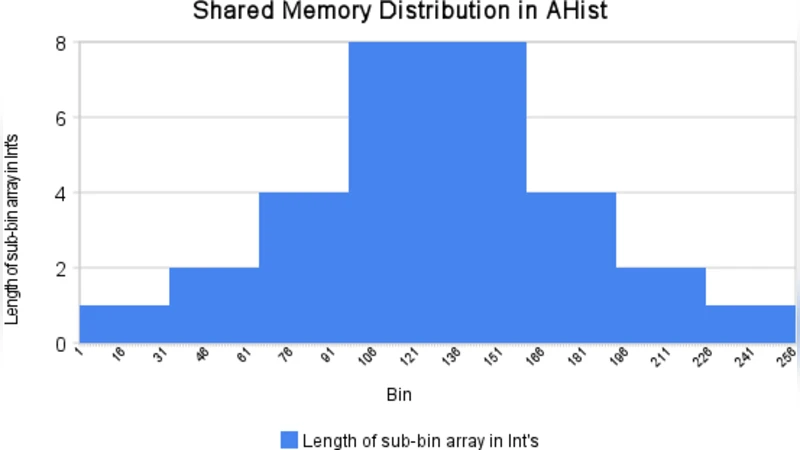

Implementation details are thoroughly described. The paper explains how shared‑memory partitions are sized, how the per‑thread local buffers are allocated (using warp‑level primitives to avoid extra global memory traffic), how the weights in the degeneracy metric are tuned, and how CUDA events are chained to guarantee correct ordering of kernel launches without stalling the stream. The authors also discuss potential extensions: scaling the technique to multi‑GPU systems with a global scheduler, applying the same adaptive principle to other reduction‑type operations such as prefix sums or variance calculations, and integrating a machine‑learning model to predict degeneracy from higher‑level stream characteristics.
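The summary does not say which warp-level primitives are used. One well-known pattern that fits the description is warp-aggregated atomics. On devices of compute capability 7.0 and newer, `__match_any_sync` returns the mask of lanes holding the same value. The lowest set lane, found with `__ffs`, acts as the group leader. The leader then issues a single `atomicAdd` of the group size, which is `__popc` of the mask. The Python sketch below only simulates that grouping on the CPU and assumes 32-lane warps. It illustrates the pattern and is not the authors' code.

```python
from collections import Counter

WARP_SIZE = 32


def warp_aggregated_update(hist, warp_values):
    """Apply one warp's increments with one atomic per distinct bin.

    On the GPU, lanes with equal values would be grouped by __match_any_sync;
    the group leader adds the group size (the popcount of the match mask).
    """
    atomics = 0
    for bin_id, group_size in Counter(warp_values).items():
        hist[bin_id] += group_size      # stands in for atomicAdd(&hist[bin_id], group_size)
        atomics += 1
    return atomics


def histogram_warp_aggregated(values, num_bins):
    hist, atomics = [0] * num_bins, 0
    for i in range(0, len(values), WARP_SIZE):
        atomics += warp_aggregated_update(hist, values[i:i + WARP_SIZE])
    return hist, atomics


values = [3] * 32 + list(range(32))     # one fully degenerate warp, one all-distinct warp
hist, atomics = histogram_warp_aggregated(values, 32)
print(hist[3], atomics)                 # prints 33 33
```

The degenerate warp needs one atomic instead of 32, while the all-distinct warp gains nothing. This mirrors the trade-off that the adaptive kernel is designed to navigate.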

In conclusion, this work demonstrates that a carefully designed adaptive kernel, combined with a stream‑aware host controller, can substantially alleviate atomic‑operation bottlenecks in histogram computation on GPUs. The methodology preserves the high throughput of CUDA streams, adapts in real time to changing data distributions, and provides a robust fallback path, making it a valuable contribution to the toolbox of developers building high‑performance, stream‑centric applications.
