Discussion of "Riemann manifold Langevin and Hamiltonian Monte Carlo methods" by M. Girolami and B. Calderhead

This technical report is the union of two contributions to the discussion of the Read Paper "Riemann manifold Langevin and Hamiltonian Monte Carlo methods" by B. Calderhead and M. Girolami, presented in front of the Royal Statistical Society on Octob…

Authors: Luke Bornn, Julien Cornebise, Gareth W. Peters

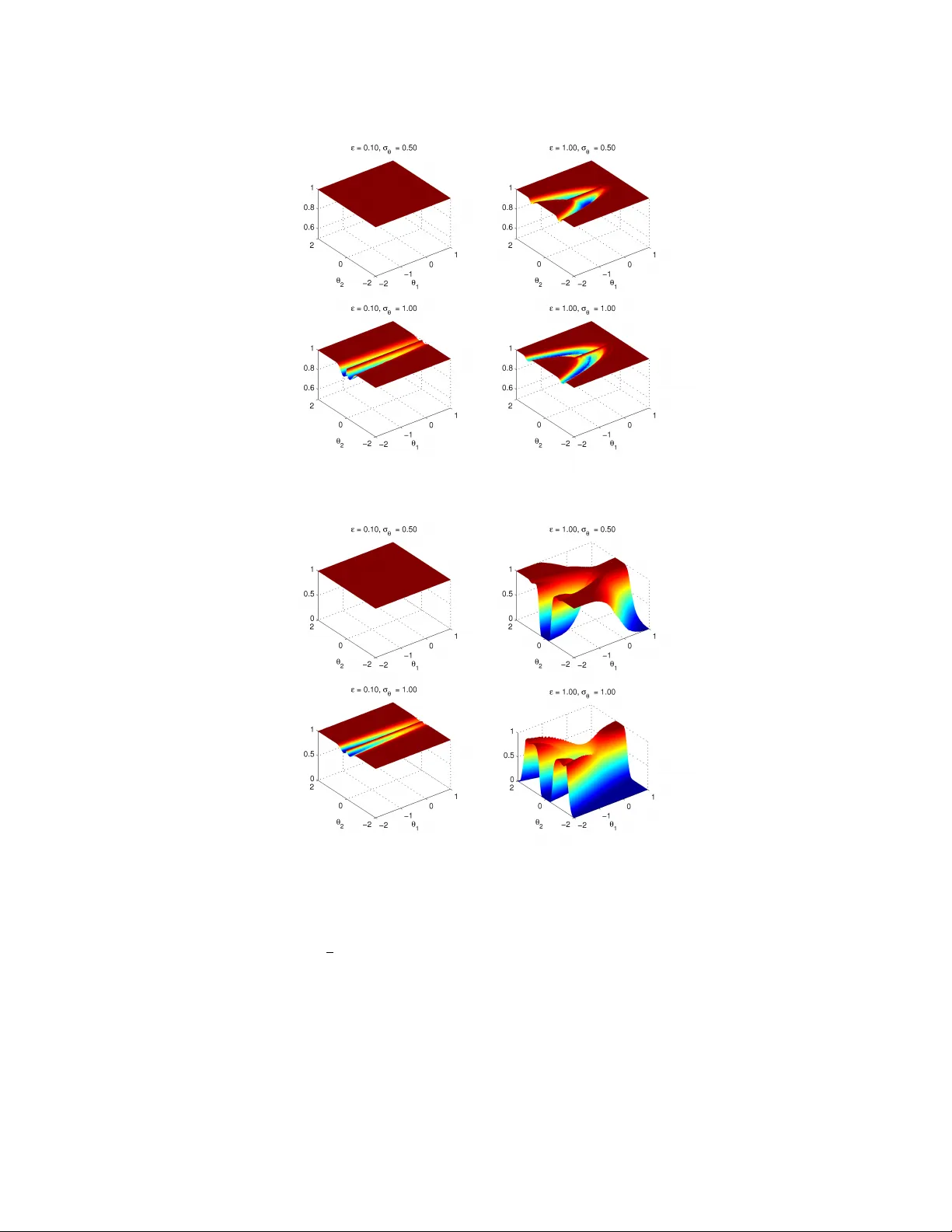

Luke Bornn∗, Julien Cornebise†, Gareth W. Peters‡

October 25, 2018

∗ University of British Columbia, Department of Statistics, l.bornn@stat.ubc.ca
† University of British Columbia, Department of Statistics and Department of Computer Science, cornebis@cs.ubc.ca
‡ University of New South Wales, School of Mathematics and Statistics, garethpeters@unsw.edu.au

Introduction

This technical report is the union of two contributions to the discussion of the Read Paper Riemann manifold Langevin and Hamiltonian Monte Carlo methods (Calderhead and Girolami, 2010), presented in front of the Royal Statistical Society on October 13th 2010 and to appear in the Journal of the Royal Statistical Society Series B.

The first comment establishes a parallel and possible interactions with Adaptive Monte Carlo methods. The second comment exposes a detailed study of Riemannian Manifold Hamiltonian Monte Carlo (RMHMC) for a weakly identifiable model presenting a strong ridge in its geometry.

1 On Adaptive Monte Carlo – J. Cornebise and G. Peters

The utility of RMMALA and RMHMC methodology is its ability to adapt Markov chain proposals to the current state. Many articles design Adaptive Monte Carlo (MC) algorithms to learn efficient MCMC proposals, such as the special case of controlled MCMC, Haario et al. (2001), which utilizes a historically estimated global covariance for a Random Walk Metropolis Hastings (RWMH) algorithm. Similarly, Atchadé (2006) devised global adaptation in MALA. Surveys are provided in Atchadé et al. (2009), Andrieu and Thoms (2008) and Roberts and Rosenthal (2009).

When the proposal remains essentially unchanged regardless of the current Markov chain state, performance may be poor if the shape of the target distribution varies widely over the parameter space.
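
To make the notion of global adaptation concrete, the following minimal Python sketch shows a random-walk Metropolis-Hastings sampler whose Gaussian proposal covariance is re-estimated from the chain history, in the spirit of Haario et al. (2001). It is an illustration only, not the published algorithm nor code from the discussants: the names `adaptive_rwmh` and `log_banana`, the 2.38²/d scaling, the regularization `eps`, the non-adaptive start-up length `adapt_start` and the banana parameter `b` are all assumptions.

```python
import numpy as np


def adaptive_rwmh(log_target, x0, n_iter=5000, adapt_start=500, eps=1e-6, seed=0):
    """Random-walk MH with a proposal covariance learned from the chain history."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    d = x.size
    scale = 2.38 ** 2 / d              # scaling heuristic (assumption)
    chain = np.empty((n_iter, d))
    cov = np.eye(d)                    # fixed covariance before adaptation starts
    logp = log_target(x)
    n_accept = 0
    for t in range(n_iter):
        if t >= adapt_start:
            # Global adaptation: empirical covariance of all past states,
            # regularized so that it stays positive definite.
            cov = np.cov(chain[:t].T).reshape(d, d) + eps * np.eye(d)
        proposal = rng.multivariate_normal(x, scale * cov)
        logp_prop = log_target(proposal)
        # Standard Metropolis accept/reject step (symmetric proposal).
        if np.log(rng.uniform()) < logp_prop - logp:
            x, logp = proposal, logp_prop
            n_accept += 1
        chain[t] = x
    return chain, n_accept / n_iter


def log_banana(x, b=0.1):
    """Hypothetical banana-shaped (warped Gaussian) log-density, up to a constant."""
    x1, x2 = x
    return -0.5 * (x1 ** 2 / 100.0 + (x2 + b * x1 ** 2 - 100.0 * b) ** 2)


chain, acc_rate = adaptive_rwmh(log_banana, x0=[0.0, 0.0], n_iter=2000)
```

The single covariance learned here is shared by every state of the chain, so the proposal has the same shape in the bend of the banana as in its tails.
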
A typical illustration involves the “banana-shaped” warped Gaussian (see comments by Cornebise and Bornn), ironically originally utilized to illustrate the strength of early Adaptive MC (Haario et al., 1999, Figure 1). This algorithm learned local geometry by estimating the covariance matrix based on a sliding window of past states. However, Haario et al. (2001, Appendix A) showed it could exhibit strong bias, perhaps connected to requirements of “diminishing adaptation” as studied in Andrieu and Moulines (2006). Recent locally adaptive algorithms satisfy this condition: for example, State-dependent proposal scalings (Rosenthal, 2010, Section 3.4) fit a parametric family to the covariance as a function of the state, as does the parameterized parameter space approach of Regional Adaptive Metropolis Algorithms (Roberts and Rosenthal, 2009, Section 5).

Riemannian approaches provide strong rationale for parameterizing the proposal covariance as a function of the state, with no learning required when the Fisher information matrix (FIM), or the observed FIM, can be computed or estimated (see comment by Doucet and Jacob). With unknown FIM, or to learn the optimal step size, it would be interesting to combine Riemannian Monte Carlo with adaption. A first step could involve a simplistic Riemann-inspired algorithm such as a centered RWMH via the (observed) FIM as the proposal covariance (as used in Marin and Robert, 2007, Section 4.3.1), which is equivalent to one step of RMMALA without drift. An additional use of Riemannian MC could be within the MCMC step of Particle MCMC (Andrieu et al., 2010), where adaption was highly advantageous in the AdPMCMC algorithms of Peters et al. (2010).

Another interesting extension involves considering the stochastic approximation alternative approach, based on a curvature updating criterion of Okabayashi and Geyer (2010, Equation 10), for an adaptive line search.
This was proposed as an alternative to MCMC-MLE of Geyer (1991) for complex dependence structures in exponential family models. In particular, comparing properties of this curvature-based condition, based on local gradient information, with adaptive RMMALA and RMHMC versions of the MCMC-MLE algorithms would be instructive.

Additionally, one may consider how to extend Riemannian MC to trans-dimensional MC such as reversible jump (Richardson and Green, 1997), for which adaptive extensions are rare (Green and Hastie, 2009, Section 4.2). We wonder how a geometric approach may be extended to efficiently explore disjoint unions of model subspaces as in Nevat et al. (2009). Finally, an open question to the community: could such geometric tools be utilized in Approximate Bayesian Computation (Beaumont et al., 2009), e.g. to design the distance metric between summary statistics?

2 RMHMC for unidentifiable models – J. Cornebise and L. Bornn

In this comment we show how the proposed RMHMC method can be particularly useful in the case of strong geometric features such as ridges commonly occurring in nonidentifiable models. While it has been suggested to use tempering or adaptive methods to handle these ridges (e.g. Neal, 2001; Haario et al., 2001), they remain a celebrated challenge for new Monte Carlo methods (Cornuet et al., 2009). We suspect that RMHMC, by exploiting the geometry of the surface to help make intelligent moves along the ridge, is a brilliant advance for nonidentifiability sampling issues.

Consider observations $y_1, \dots, y_n \sim \mathcal{N}(\theta_1 + \theta_2^2, \sigma_y^2)$. The parameters $\theta_1$ and $\theta_2$ are non-identifiable without any additional information beyond the observations: any values such that $\theta_1 + \theta_2^2 = c$ for some constant $c$ explain the data equally well.
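
This non-identifiability can be checked numerically. The short Python sketch below, which is an illustration and not part of the original comment, evaluates the Gaussian log-likelihood at several points on the curve θ1 + θ2² = c and compares it with a point off that curve. The simulated data, the seed, the value c = 1, the grid of θ2 values and the helper name `log_likelihood` are assumptions.

```python
import numpy as np


def log_likelihood(theta, y, sigma_y=1.0):
    """Log-likelihood of y_i ~ N(theta_1 + theta_2^2, sigma_y^2), i.i.d."""
    mean = theta[0] + theta[1] ** 2
    n = y.size
    return (-0.5 * n * np.log(2.0 * np.pi * sigma_y ** 2)
            - 0.5 * np.sum((y - mean) ** 2) / sigma_y ** 2)


rng = np.random.default_rng(0)
# Simulated observations whose mean is c = 1 (assumed setting).
y = rng.normal(loc=1.0, scale=1.0, size=100)

c = 1.0
theta2_grid = np.linspace(-2.0, 2.0, 9)
# Points on the curve theta_1 + theta_2^2 = c.
ridge = [(c - t2 ** 2, t2) for t2 in theta2_grid]
values = np.array([log_likelihood(theta, y) for theta in ridge])

# A point off the curve, where theta_1 + theta_2^2 = 3.
off_ridge = log_likelihood((3.0, 0.0), y)
```

The entries of `values` agree up to floating-point rounding, since the likelihood depends on the parameters only through θ1 + θ2², while `off_ridge` should be markedly lower for data centred near 1.
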
By imposing a prior distribution, $\theta_1, \theta_2 \sim \mathcal{N}(0, \sigma_\theta^2)$, we create weak identifiability, namely decreased posterior probability for $c$ far from zero. Figure 1 shows the prior, likelihood, and ridge-like posterior for the model. For this problem, we have

$$G(\theta) = \begin{pmatrix} \dfrac{n}{\sigma_y^2} + \dfrac{1}{\sigma_\theta^2} & \dfrac{2 n \theta_2}{\sigma_y^2} \\[2ex] \dfrac{2 n \theta_2}{\sigma_y^2} & \dfrac{4 n \theta_2^2}{\sigma_y^2} + \dfrac{1}{\sigma_\theta^2} \end{pmatrix}.$$

Figure 2 compares typical trajectories of both HMC and RMHMC, demonstrating the ability of RMHMC to follow the full length of the ridge.

HMC and RMHMC also differ in sensitivity to the step size. As described by Neal (2010), HMC suffers from the presence of a critical step size above which the error explodes, accumulating at each leapfrog step. In contrast, RMHMC occasionally exhibits a sudden jump in the Hamiltonian at one specific leapfrog step, followed by well-behaving steps (as seen in Figure 2(a)). This is due to the possible divergence of the fixed point iterations (FPI) in the generalized leapfrog equations

$$p\left(\tau + \frac{\varepsilon}{2}\right) = p(\tau) - \frac{\varepsilon}{2} \nabla_\theta H\left\{\theta(\tau),\, p\left(\tau + \frac{\varepsilon}{2}\right)\right\} \qquad (16)$$

for given momentum $p(\tau)$, parameter $\theta(\tau)$ and step size $\varepsilon$. Figure 3 shows the probability of (16) having a solution $p(\varepsilon/2)$ as a function of $\theta(0)$, and of the derivative at the fixed point being “small enough” for the FPI to converge; the well-known sufficient theoretical threshold on the derivative (see e.g. Fletcher, 1987) is 1, but we conservatively chose 1.2 based on typical successful runs. When the finite number of FPI diverges, the Hamiltonian explodes; however, subsequent steps may still admit a fixed point, and hence behave normally. Unsurprisingly, this behavior is much more likely to occur for larger step sizes.

Figure 1: Prior, likelihood, and posterior for the warped bivariate Gaussian with $n = 100$ values generated from the likelihood with parameter settings $\sigma_\theta = \sigma_y = 1$.
As the sample size increases and the prior becomes more diffuse, the posterior becomes less identifiable and the ridge in the posterior becomes stronger.

Figure 2: (a) RMHMC, (b) HMC. Three typical consecutive trajectories of 20 leapfrog steps each, with step size of 0.1, for the RMHMC and HMC algorithm, chosen to highlight two acceptances (black) and one rejection (red), representative of the approximately 65% acceptance ratio for both HMC and RMHMC. We see that RMHMC is able to track the contours of the density and reach to the furthest tails of the ridge, adapting to the local geometry, whereas the spherical moves of HMC oscillate back and forth across the ridge.

Figure 3: (a) Probability of existence of fixed point, (b) Probability of existence of fixed point and of a derivative lower than 1.2. Probability of a single iteration of the generalized leapfrog (a) admitting a fixed point $p(\varepsilon/2)$, (b) admitting a fixed point and having a small enough derivative at the fixed point for the FPI to converge. Both plotted as a function of starting point $\theta(0)$. Shown for two step sizes $\varepsilon \in \{0.1, 1.0\}$, and two prior distribution standard deviations $\sigma_\theta \in \{0.5, 1.0\}$, i.e. varying levels of identifiability. The region of stability for FPI becomes much smaller as the step size increases and as identifiability decreases, even creating regions with null probability of convergence.

While the regions of low probability can strongly decrease the mixing of the algorithm, they do not affect the theoretical convergence ensured by the rejection step. Far from being a downside, understanding this behavior can bring much practical insight when choosing the step size, possibly adapting it on the fly, when RMHMC already provides a clever way to adaptively devise the direction of the moves.

References

C. Andrieu and É. Moulines. On the ergodicity properties of some adaptive MCMC algorithms.
The Annals of Applied Probability, 16(3):1462–1505, 2006.

C. Andrieu and J. Thoms. A tutorial on adaptive MCMC. Statistics and Computing, 18(4):343–373, 2008.

C. Andrieu, A. Doucet, and R. Holenstein. Particle Markov chain Monte Carlo methods. J. R. Statist. Soc. B, 72(3):269–342, 2010.

Y. Atchadé, G. Fort, E. Moulines, and P. Priouret. Adaptive Markov Chain Monte Carlo: Theory and Methods. Technical report, 2009.

Y.F. Atchadé. An adaptive version for the Metropolis adjusted Langevin algorithm with a truncated drift. Methodology and Computing in Applied Probability, 8(2):235–254, 2006.

M.A. Beaumont, J.M. Cornuet, J.M. Marin, and C.P. Robert. Adaptive approximate Bayesian computation. Biometrika, 96(4):983–990, 2009.

B. Calderhead and M. Girolami. Riemann manifold Langevin and Hamiltonian Monte Carlo methods (with discussion). Journal of the Royal Statistical Society: Series B, to appear, 2010.

J.M. Cornuet, J.M. Marin, A. Mira, and C. Robert. Adaptive multiple importance sampling. Technical report, arXiv, 2009.

R. Fletcher. Practical Methods of Optimization, second edition. 1987.

C.J. Geyer. Markov chain Monte Carlo maximum likelihood. In Computing Science and Statistics: Proc. 23rd Symp. Interface, pages 156–163, 1991.

P.J. Green and D.I. Hastie. Reversible jump MCMC. Technical report, June 2009.

H. Haario, E. Saksman, and J. Tamminen. Adaptive proposal distribution for random walk Metropolis algorithm. Computational Statistics, 14:375–395, 1999.

H. Haario, E. Saksman, and J. Tamminen. An adaptive Metropolis algorithm. Bernoulli, 7(2):223–242, 2001.

J.M. Marin and C.P. Robert. Bayesian core: a practical approach to computational Bayesian statistics. Springer Verlag, 2007.

R.M. Neal. Annealed importance sampling. Statistics and Computing, 11(2):125–139, 2001.

R.M. Neal. MCMC using Hamiltonian dynamics. In S. Brooks, A. Gelman, G. Jones, and X.L.
Meng, editors, Handbook of Markov Chain Monte Carlo. Chapman and Hall/CRC Press, 2010.

I. Nevat, G.W. Peters, and J. Yuan. Channel Estimation in OFDM Systems with Unknown Power Delay Profile using Trans-Dimensional MCMC via Stochastic Approximation. In Vehicular Technology Conference, 2009. VTC Spring 2009. IEEE 69th, pages 1–6. IEEE, 2009.

S. Okabayashi and C. J. Geyer. Long range search for maximum likelihood in exponential families. Technical report, 2010.

G.W. Peters, G.R. Hosack, and K.R. Hayes. Ecological non-linear state space model selection via adaptive particle Markov chain Monte Carlo (AdPMCMC). Technical report, 2010.

S. Richardson and P.J. Green. On Bayesian analysis of mixtures with an unknown number of components (with discussion). Journal of the Royal Statistical Society: Series B, 59(4):731–792, 1997.

G.O. Roberts and J.S. Rosenthal. Examples of adaptive MCMC. Journal of Computational and Graphical Statistics, 18(2):349–367, 2009.

J.S. Rosenthal. Optimal proposal distributions and adaptive MCMC. In S. Brooks, A. Gelman, G. Jones, and X.L. Meng, editors, Handbook of Markov Chain Monte Carlo. Chapman and Hall/CRC Press, 2010.
