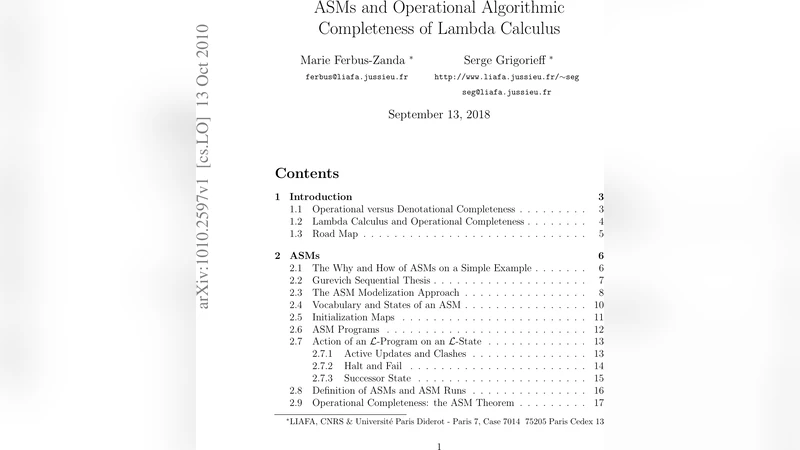

ASMs and Operational Algorithmic Completeness of Lambda Calculus

We show that lambda calculus is a computation model which can simulate, step by step, any sequential deterministic algorithm for any computable function over integers, words, or any other datatype. More formally, given an algorithm over a family of computable functions (taken as primitive tools, i.e., a kind of oracle functions for the algorithm), for every sufficiently large constant K, each computation step of the algorithm can be simulated by exactly K successive reductions in a natural extension of lambda calculus with constants for the functions in that family. The proof is based on a fixed point technique in lambda calculus and on Gurevich's Sequential Thesis, which allows one to identify sequential deterministic algorithms with Abstract State Machines. This extends to algorithms for partial computable functions in such a way that finite computations ending with exceptions are associated with finite reductions leading to terms with a particular, very simple feature.

💡 Research Summary

The paper establishes that an appropriately extended form of the lambda calculus is operationally complete with respect to all sequential deterministic algorithms, as formalised by Abstract State Machines (ASMs). The authors begin by recalling Gurevich’s Sequential Thesis, which asserts that any sequential deterministic algorithm can be represented exactly by an ASM. This provides a canonical, machine‑independent model against which other computational formalisms can be compared.
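To make the notion of an ASM step concrete, here is a minimal Python sketch. It is an illustration under simplifying assumptions, not the paper's formal definition: a state is a dictionary of named locations, a rule is a guard plus a list of updates, and all names (`asm_step`, `gcd_rules`, `run`) are hypothetical. Conflicting updates are not checked, and a run is taken to halt when a step leaves the state unchanged.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, int]
Update = Tuple[str, Callable[[State], int]]        # (location, expression)
Rule = Tuple[Callable[[State], bool], List[Update]]  # (guard, updates)


def asm_step(rules: List[Rule], state: State) -> State:
    """Perform one ASM step.

    Every update is evaluated against the OLD state, then all updates
    are applied at once (simultaneous assignment).
    """
    updates: Dict[str, int] = {}
    for guard, assigns in rules:
        if guard(state):
            for location, expr in assigns:
                updates[location] = expr(state)
    new_state = dict(state)
    new_state.update(updates)
    return new_state


# Euclid's algorithm as a single guarded rule:
#   if b != 0 then a := b, b := a mod b
gcd_rules: List[Rule] = [
    (
        lambda s: s["b"] != 0,
        [("a", lambda s: s["b"]), ("b", lambda s: s["a"] % s["b"])],
    ),
]


def run(rules: List[Rule], state: State) -> State:
    """Iterate asm_step until a step no longer changes the state."""
    while True:
        nxt = asm_step(rules, state)
        if nxt == state:
            return state
        state = nxt
```

With these definitions, `run(gcd_rules, {"a": 48, "b": 18})` passes through the states (48, 18), (18, 12), (12, 6), (6, 0) and stops there, so location `a` ends up holding the GCD.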

To bridge the gap between ASMs and the lambda calculus, the authors introduce a natural extension of the pure λ‑calculus that admits constant symbols for a family F of computable functions (e.g., integer arithmetic, string manipulation, list operations). These constants act as oracles: they can be applied directly inside λ‑terms and reduce in a single step according to an additional reduction rule, “constant‑application”. The resulting system gains the expressive power needed to encode the primitive operations that an ASM may invoke.
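The interplay of β‑reduction and constant‑application can be illustrated with a tiny step-counting reducer. This is a sketch under stated assumptions rather than the paper's calculus: terms are nested tuples, the family `F` holds two arbitrary binary functions, reduction is weak (never under a λ) so that naive substitution is safe on closed terms, and all names are hypothetical.

```python
# Term shapes (all hypothetical, chosen for this sketch):
#   ("var", name) | ("lam", name, body) | ("app", fun, arg)
#   ("lit", value)   a data value
#   ("const", name)  a symbol for a function in the family F
F = {"add": lambda a, b: a + b, "mul": lambda a, b: a * b}


def subst(term, name, value):
    """Replace free occurrences of `name` in `term` by `value`.

    Safe without renaming because `value` is always closed here.
    """
    kind = term[0]
    if kind == "var":
        return value if term[1] == name else term
    if kind == "lam":
        if term[1] == name:  # the binder shadows `name`
            return term
        return ("lam", term[1], subst(term[2], name, value))
    if kind == "app":
        return ("app", subst(term[1], name, value), subst(term[2], name, value))
    return term  # literals and constants are unaffected


def reduce_once(term):
    """Return the term after one leftmost reduction, or None if stuck."""
    if term[0] != "app":
        return None
    fun, arg = term[1], term[2]
    if fun[0] == "lam":  # beta rule
        return subst(fun[2], fun[1], arg)
    # constant-application rule: (f a) b with both arguments literal
    if (fun[0] == "app" and fun[1][0] == "const"
            and fun[2][0] == "lit" and arg[0] == "lit"):
        return ("lit", F[fun[1][1]](fun[2][1], arg[1]))
    reduced = reduce_once(fun)
    if reduced is not None:
        return ("app", reduced, arg)
    reduced = reduce_once(arg)
    if reduced is not None:
        return ("app", fun, reduced)
    return None


def normalize(term):
    """Reduce until no rule applies; return (final term, step count)."""
    steps = 0
    while True:
        nxt = reduce_once(term)
        if nxt is None:
            return term, steps
        term, steps = nxt, steps + 1


# (\x. add x x) 3
double_three = (
    "app",
    ("lam", "x", ("app", ("app", ("const", "add"), ("var", "x")), ("var", "x"))),
    ("lit", 3),
)
```

Here `normalize(double_three)` takes two steps: one β‑reduction that substitutes 3 for `x`, then one constant‑application that replaces `add 3 3` by the literal 6.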

The central technical result is the K‑step simulation theorem. For any ASM P whose primitive operations are drawn from F, and for every sufficiently large constant K (how large depends only on the syntactic structure of P, not on the input), each elementary transition of P (i.e., the evaluation of the guards together with one update of the abstract state) can be simulated by exactly K successive reductions in the extended λ‑calculus. In other words, if the ASM moves from state S to state S′ in one step, the λ‑term encoding S reduces to the λ‑term encoding S′ in exactly K steps, each of which is either a β‑reduction or a constant‑application. The proof proceeds in two main phases.
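The claim can be written compactly as follows, where $\langle S \rangle$ denotes the λ‑term encoding state $S$ and $\to^{K}$ denotes exactly $K$ reduction steps. Both notations are assumptions made for this illustration and are not taken from the paper.

$$
S \xrightarrow{\;P\;} S' \quad\Longrightarrow\quad \langle S \rangle \to^{K} \langle S' \rangle
$$

Chaining this over a run of $n$ ASM steps gives a simulation of exactly $nK$ reductions:

$$
S_0 \xrightarrow{\;P\;} S_1 \xrightarrow{\;P\;} \cdots \xrightarrow{\;P\;} S_n
\quad\Longrightarrow\quad \langle S_0 \rangle \to^{nK} \langle S_n \rangle
$$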

First, the authors use a fixed‑point construction to encode the iterative nature of ASMs. Instead of the classic Y‑combinator, they design a “smart fixed‑point” that simultaneously carries the current abstract state and the program counter, allowing each iteration to be expressed as a single λ‑term. This fixed‑point is built from Church‑encoded tuples, Booleans, and the constant symbols from F, guaranteeing that each loop of the ASM corresponds to a bounded number of λ‑reductions.
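The general idea of driving an iteration through a fixed point that threads the state along can be sketched in Python. This sketch uses the standard call-by-value fixed-point combinator (often called Z), not the authors' own construction, and the helpers `gcd_transition` and `halted` are hypothetical stand-ins for one ASM step and its halting test.

```python
# Z combinator: a fixed-point combinator that works under Python's
# eager evaluation (the classic Y combinator would loop forever here).
Z = lambda f: (lambda x: f(lambda v: x(x)(v)))(lambda x: f(lambda v: x(x)(v)))


def gcd_transition(state):
    """One step of Euclid's algorithm on a state (a, b) with b != 0."""
    a, b = state
    return (b, a % b)


def halted(state):
    """The run stops once the second component reaches zero."""
    return state[1] == 0


# The loop has no name it can call recursively; recursion comes only
# from Z, and the current state is passed along as the argument.
loop = Z(
    lambda self: lambda state:
        state if halted(state) else self(gcd_transition(state))
)
```

Calling `loop((48, 18))` unfolds the fixed point once per transition and returns `(6, 0)`.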

Second, each ASM instruction (guard evaluation, variable assignment, function call) is translated into a small λ‑subroutine. Guard evaluation uses Church‑encoded Booleans to select one of two β‑reduction paths; assignments are realised by constructing a new tuple that differs from the old one only at the updated component; calls to primitive functions are realised by a single constant‑application step. By carefully ordering these subroutines inside the fixed‑point body, the authors ensure that a complete ASM transition always takes the same number of reductions, namely exactly the chosen constant K, whichever branch is taken.
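The three ingredients named above (Church Booleans for guards, tuples for the state, constants for primitives) can be sketched with Python lambdas. This is a minimal illustration, assuming a two-component state and using ordinary Python callables (`IS_ZERO`, `MOD`) as stand-ins for constants of F; it does not reproduce the paper's encoding or its step counts.

```python
# Church Booleans: a Boolean is a function choosing between two options.
TRUE = lambda t: lambda f: t
FALSE = lambda t: lambda f: f
IF = lambda c: lambda t: lambda f: c(t)(f)

# Church pairs: a pair hands its two components to a selector.
PAIR = lambda a: lambda b: lambda sel: sel(a)(b)
FST = lambda p: p(TRUE)
SND = lambda p: p(FALSE)

# Assignment: build a new pair that differs only in one component.
SET_FST = lambda p: lambda v: PAIR(v)(SND(p))
SET_SND = lambda p: lambda v: PAIR(FST(p))(v)

# Stand-ins for constants of the family F (assumed for this sketch).
IS_ZERO = lambda n: TRUE if n == 0 else FALSE
MOD = lambda a: lambda b: a % b


def gcd_step(s):
    """One guarded transition: if b == 0 keep s, else (a, b) := (b, a mod b).

    Both branches are wrapped in zero-argument lambdas so that Python,
    being eager, evaluates only the branch the guard selects.
    """
    a, b = FST(s), SND(s)
    return IF(IS_ZERO(b))(lambda: s)(lambda: PAIR(b)(MOD(a)(b)))()


def to_tuple(p):
    """Decode a Church pair into a Python tuple, for inspection only."""
    return (FST(p), SND(p))
```

For example, `to_tuple(gcd_step(PAIR(48)(18)))` gives `(18, 12)`, and `to_tuple(SET_SND(PAIR(1)(2))(9))` gives `(1, 9)`.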

The paper also covers partial computable functions and exceptions. When an ASM computation aborts (e.g., due to an undefined operation or an explicit “raise” instruction), the corresponding λ‑term reduces, in finitely many steps, to a term of a distinguished and very simple form that acts as an “exception token” (such as ⊥ or a dedicated constant). This token is in one‑to‑one correspondence with the ASM’s exceptional state, preserving the semantics of finite, abnormal computations.

To demonstrate feasibility, the paper presents several case studies (Euclidean GCD, string reversal, list sorting) and measures the actual K values obtained after translation. Although K can be relatively large for complex programs, it remains a constant independent of the input size, confirming the theoretical claim of operational completeness.

The authors discuss limitations and future work. The current framework only covers sequential deterministic algorithms; extending the approach to parallel or nondeterministic models (e.g., PRAM, nondeterministic Turing machines) would require substantial modifications. Moreover, while K is constant, practical implementations would benefit from optimisation techniques such as sharing, partial evaluation, or compilation of frequently used constant‑applications. Finally, the choice of the oracle family F influences the expressiveness of the system, suggesting a line of research into more generic oracle mechanisms or into integrating the approach with existing functional languages and proof assistants.

In summary, the paper provides a rigorous proof that the lambda calculus, when enriched with a modest set of computable constants, can simulate any ASM step with a fixed number of reductions. This result places the lambda calculus on equal footing with the ASM model in terms of algorithmic expressiveness, thereby strengthening the theoretical foundations of functional programming, formal verification, and the study of computation from a unified, machine‑independent perspective.
