Remaining problems with the "New Crown Indicator" (MNCS) of the CWTS

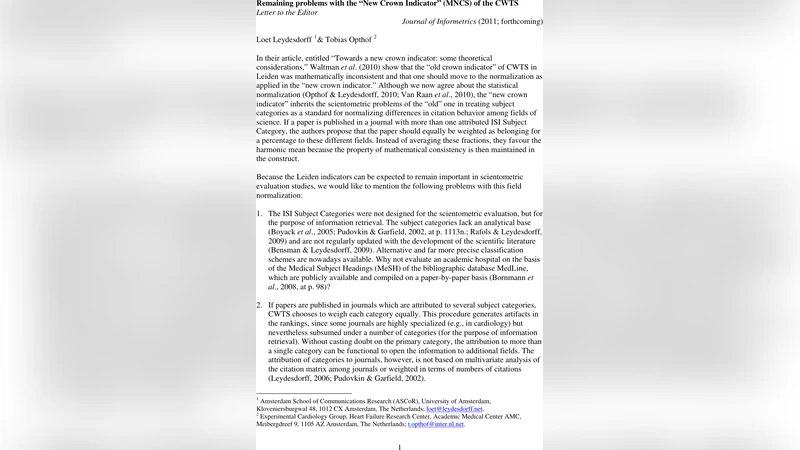

In their article, entitled “Towards a new crown indicator: some theoretical considerations,” Waltman et al. (2010; at arXiv:1003.2167) show that the “old crown indicator” of CWTS in Leiden was mathematically inconsistent and that one should move to the normalization as applied in the “new crown indicator.” Although we now agree about the statistical normalization, the “new crown indicator” inherits the scientometric problems of the “old” one in treating subject categories of journals as a standard for normalizing differences in citation behavior among fields of science. We further note that the “mean” is not a proper statistic for measuring differences among skewed distributions. Without changing the acronym of “MNCS,” one could define the “Median Normalized Citation Score.” This would relate the new crown indicator directly to the percentile approach that is, for example, used in the Science and Engineering Indicators of the US National Science Board (2010). The median is by definition equal to the 50th percentile. The indicator can thus easily be extended to the top 1% most highly cited papers, that is, those at or above the 99th percentile (Bornmann et al., in press). The seeming disadvantage of having to use non-parametric statistics is more than compensated by possible gains in precision.

💡 Research Summary

In this Letter to the Editor, Leydesdorff and Opthof critically examine the “new crown indicator” (MNCS) introduced by the Centre for Science and Technology Studies (CWTS) as a field‑normalized citation impact metric. They acknowledge that Waltman et al. (2010) successfully corrected the mathematical inconsistency of the “old crown indicator,” but argue that the new version inherits the same substantive problems because it still relies on ISI Subject Categories (SC) for field normalization.

The authors first point out that SCs were created for information‑retrieval purposes, not for scientometric evaluation. Consequently, they lack a solid analytical basis, are updated only irregularly, and often misrepresent the actual structure of scientific fields. When a journal is assigned to multiple SCs, CWTS gives each category an equal weight (or uses a harmonic mean to preserve mathematical consistency). This equal weighting produces artifacts: highly specialized journals that happen to be listed under several categories receive a diluted field‑normalization, while the true citation behavior of the papers is ignored. The authors illustrate this with examples such as the Journal of Vascular Research (assigned to “peripheral vascular disease” and “physiology”) and Circulation (assigned to three categories). Because the assignment is not based on multivariate analysis of citation patterns, “indexer effects” inevitably bias the MNCS scores.

Second, Leydesdorff and Opthof argue that the use of the arithmetic mean to aggregate normalized citation scores is statistically inappropriate for the highly skewed citation distributions typical of scientific literature. The mean is heavily influenced by a small number of extremely highly cited papers, so it can over‑ or under‑estimate a unit’s typical performance. They propose replacing the mean with a non‑parametric statistic such as the median (the 50th percentile) or other percentile‑based measures. The median is robust to outliers, and extending the indicator to the top 1% (papers at or above the 99th percentile) would separately capture the contribution of the most highly cited papers.
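The difference between the two aggregation choices can be illustrated with a minimal sketch. It assumes that each paper's normalized score is its citation count divided by an expected citation count for its field; the citation numbers, the expected value of 4.0, and the function names `normalized_scores` and `share_at_or_above` are invented for illustration and do not come from the Letter or from CWTS.

```python
from statistics import mean, median

def normalized_scores(citations, expected):
    """Divide each paper's citation count by the expected count for its field."""
    return [c / e for c, e in zip(citations, expected)]

def share_at_or_above(scores, threshold):
    """Fraction of papers whose score reaches a given threshold."""
    return sum(s >= threshold for s in scores) / len(scores)

# Hypothetical unit with seven papers, one of them very highly cited.
citations = [0, 1, 1, 2, 3, 4, 120]
# Hypothetical field expectation: 4 citations per paper for every paper.
expected = [4.0] * len(citations)

scores = normalized_scores(citations, expected)
mean_ncs = mean(scores)      # pulled upward by the single outlier
median_ncs = median(scores)  # the 50th percentile of the unit's scores

print(f"mean normalized score:   {mean_ncs:.2f}")
print(f"median normalized score: {median_ncs:.2f}")
print(f"share at or above field expectation: {share_at_or_above(scores, 1.0):.2f}")
```

In this toy data set the mean is above 4 while the median is 0.5, so the two statistics tell opposite stories about whether the unit performs above or below the field expectation of 1. A percentile-based extension would replace the threshold of 1.0 with a percentile of a reference distribution, which this sketch does not model.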

Third, the authors advocate for a field‑normalization method that does not depend on any pre‑existing classification system. They suggest fractional counting of citations, where each citation is weighted by the inverse of the number of references in the citing paper (e.g., 1/6 for a mathematics paper with six references, 1/40 for a biomedical paper with forty references). This approach directly reflects differences in citation behavior across fields, is independent of journal‑based classifications, and eliminates indexer bias. Fractional counting can be applied at the article level, allowing for fine‑grained normalization even within the same journal where different topics may have very different citation potentials.
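The weighting described above can be sketched in a few lines. This is an illustrative sketch only, not the authors' implementation: the function name `fractional_citation_score` and the example citation counts are assumptions, and only the 1/6 and 1/40 weights are taken from the text.

```python
from fractions import Fraction

def fractional_citation_score(reference_counts):
    """Sum 1/n over all citing papers, where n is the number of
    references in each citing paper. Citing papers with no recorded
    references are skipped (an assumption made for this sketch)."""
    return sum(
        (Fraction(1, n) for n in reference_counts if n > 0),
        Fraction(0),
    )

# Hypothetical mathematics paper: cited 3 times, each citing paper has 6 references.
math_paper = [6, 6, 6]
# Hypothetical biomedical paper: cited 20 times, each citing paper has 40 references.
biomedical_paper = [40] * 20

math_score = fractional_citation_score(math_paper)
biomedical_score = fractional_citation_score(biomedical_paper)

print(len(math_paper), "whole-count citations ->", math_score)
print(len(biomedical_paper), "whole-count citations ->", biomedical_score)
```

With whole counting the two papers differ by 3 versus 20 citations; with fractional counting both score 1/2, because each citation from a long reference list carries proportionally less weight. No journal or subject category enters the calculation.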

Finally, while CWTS introduced the harmonic mean to preserve “mathematical consistency,” Leydesdorff and Opthof contend that this does not solve the underlying problem: an inconsistent field‑normalization scheme will always produce unreliable results, regardless of the averaging method. They therefore propose retaining the MNCS acronym but redefining it as the “Median Normalized Citation Score.” This redefinition aligns the indicator with the percentile approach already used in policy documents such as the US National Science Board’s Science and Engineering Indicators. By adopting a non‑parametric statistic, the new MNCS would gain precision without sacrificing comparability.

In summary, the paper identifies three core shortcomings of the current MNCS: (1) reliance on ISI Subject Categories that are ill‑suited for field normalization; (2) use of the arithmetic mean for skewed citation data; and (3) continued dependence on journal‑based classifications rather than citation‑based fractional counting. The authors recommend a shift to fractional citation weighting and a median‑ or percentile‑based aggregation, thereby producing a more robust, unbiased, and statistically sound indicator for research evaluation.
