Distinguishing Fact from Fiction: Pattern Recognition in Texts Using Complex Networks

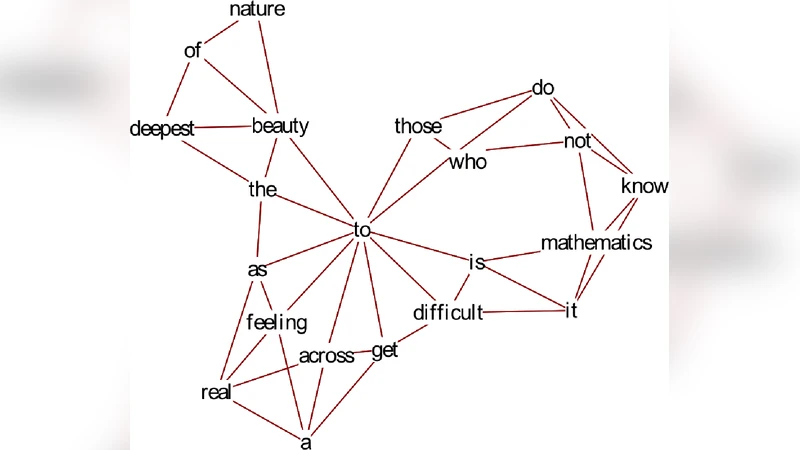

We establish concrete mathematical criteria to distinguish between different kinds of written storytelling, fictional and non-fictional. Specifically, we constructed a semantic network from both novels and news stories, with $N$ independent words as vertices or nodes, and edges or links allotted to words occurring within $m$ places of a given vertex; we call $m$ the word distance. We then used measures from complex network theory to distinguish between news and fiction, studying the minimal text length needed as well as the optimized word distance $m$. The literature samples were found to be most effectively represented by their corresponding power laws over degree distribution $P(k)$ and clustering coefficient $C(k)$; we also studied the mean geodesic distance, and found all our texts were small-world networks. We observed a natural break-point at $k=\sqrt{N}$ where the power law in the degree distribution changed, leading to separate power law fit for the bulk and the tail of $P(k)$. Our linear discriminant analysis yielded a $73.8 \pm 5.15%$ accuracy for the correct classification of novels and $69.1 \pm 1.22%$ for news stories. We found an optimal word distance of $m=4$ and a minimum text length of 100 to 200 words $N$.

💡 Research Summary

The paper “Distinguishing Fact from Fiction: Pattern Recognition in Texts Using Complex Networks” investigates whether the structural properties of word‑based networks can be used to separate fictional narratives (novels) from non‑fictional prose (news articles). The authors first construct a semantic network for each text: each distinct word becomes a node, and an undirected edge is placed between two nodes whenever the corresponding words appear within a fixed “word distance” $m$ in the linear sequence of the text. By varying $m$ from 1 to 10, they explore how the choice of proximity influences the emergent topology.

A corpus consisting of 30 novels and 30 news articles is pre‑processed (lower‑casing, punctuation removal, stop‑word filtering) and truncated to lengths ranging from 50 to several thousand words. For each network they compute three classic complex‑network metrics: (i) the degree distribution $P(k)$, (ii) the clustering coefficient as a function of degree $C(k)$, and (iii) the average shortest‑path length $\langle l\rangle$. The degree distribution consistently exhibits a two‑regime power‑law: for degrees $k<\sqrt{N}$ the distribution follows $P(k)\sim k^{-\gamma_1}$, while for $k>\sqrt{N}$ a steeper exponent $\gamma_2$ governs the tail. This breakpoint at $k\approx\sqrt{N}$ reflects the coexistence of a large set of low‑frequency “background” words and a smaller set of high‑frequency “core” words that act as hubs. The clustering coefficient decays roughly as $C(k)\sim k^{-\beta}$, indicating that high‑degree nodes tend to connect to many low‑degree neighbors rather than forming tightly knit cliques. All texts display small‑world characteristics: $\langle l\rangle$ grows logarithmically with $N$ and remains between 3 and 5 even for the longest samples.

To assess discriminative power, the authors select five network features (the two power‑law exponents, the clustering decay exponent, the average clustering, and the mean shortest‑path length) and feed them into a linear discriminant analysis (LDA) classifier. Cross‑validation reveals that the optimal word distance is $m=4$ and that a minimum text length of roughly 100–200 words yields the highest classification performance. Under these conditions the LDA correctly identifies novels with an accuracy of $73.8%\pm5.15$ and news articles with $69.1%\pm1.22$. The authors note that shorter texts lack sufficient network structure, while very long texts dilute genre‑specific patterns, explaining the observed performance window.

The discussion interprets the results in linguistic terms. Fiction tends to reuse a limited set of narrative‑driving words (characters, locations, emotions), producing hubs and a pronounced heavy‑tailed degree distribution. News writing, aiming for factual coverage, distributes lexical weight more evenly, leading to flatter degree spectra and lower clustering. The small‑world nature of both corpora suggests that human language, regardless of genre, organizes information efficiently for rapid retrieval.

Limitations include the binary classification framework (only novels vs. news), the exclusion of weighted edges (all co‑occurrences are treated equally), and the reliance on a single proximity parameter $m$. The authors propose several extensions: (1) incorporating edge weights based on co‑occurrence frequency, (2) exploring multi‑scale $m$ combinations to capture hierarchical linguistic structures, (3) testing the approach on additional genres such as scientific articles, blogs, and social‑media posts, and (4) comparing the handcrafted network features with graph‑embedding techniques derived from modern deep learning models.

In summary, the study demonstrates that relatively simple complex‑network descriptors capture enough stylistic and structural information to differentiate between fiction and non‑fiction with moderate accuracy. It provides a concrete methodological pipeline—text preprocessing, network construction with an empirically optimal word distance, extraction of degree‑distribution and clustering exponents, and linear discriminant classification—that can serve as a baseline for future work in computational stylometry and genre detection.

Comments & Academic Discussion

Loading comments...

Leave a Comment