Motion Parallax is Asymptotic to Binocular Disparity

Researchers, beginning especially with Rogers & Graham (1982), have noticed important psychophysical and experimental similarities between the neurologically different motion parallax and stereopsis cues. Their quantitative analysis relied primarily on the “disparity equivalence” approximation. In this article we show that retinal motion from lateral translation satisfies a strong (“asymptotic”) approximation to binocular disparity. This precise mathematical similarity is also practical in the sense that it applies at normal viewing distances. The approximation is an extension to peripheral vision of Cormac & Fox’s (1985) well-known non-trigonometric central-vision approximation for binocular disparity. We hope our simple algebraic formula will be useful in analyzing experiments outside central vision, where less precise approximations have led to a number of quantitative errors in the vision literature.

💡 Research Summary

The paper establishes a rigorous mathematical relationship between motion parallax (the retinal image shift generated by lateral observer translation) and binocular disparity (the horizontal offset between the two eyes’ images). Building on the “disparity equivalence” approximation used by Rogers & Graham (1982), the authors provide a stronger, asymptotic approximation that holds at normal viewing distances and extends to peripheral vision.

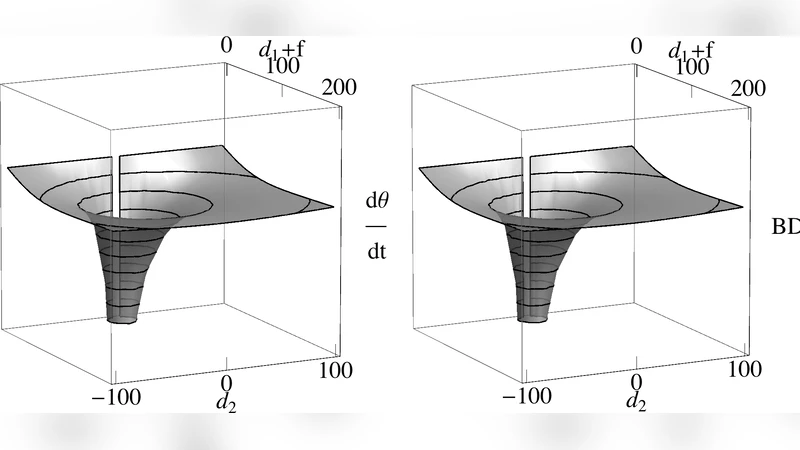

The theoretical development begins by modeling an observer moving laterally at constant speed $v$. For a point at horizontal offset $d$ and depth $Z$ relative to the observer, the retinal motion $\Delta x_m$ is derived as $\Delta x_m = \frac{v t d}{R^2}$, where $R$ is the Euclidean distance from observer to point and $t$ is the observation interval. Binocular disparity $\Delta x_b$ is expressed in the classic form $\Delta x_b = \frac{I d}{Z}$, with $I$ denoting inter-ocular distance. By re-parameterizing both expressions in a common coordinate system and applying the small-angle approximations $\sin\theta\approx\theta$ and $\tan\theta\approx\theta$, the authors show that the difference $\Delta x_m - \Delta x_b$ scales as $O(1/R^2)$. Consequently, for typical viewing distances (0.5–3 m), the two cues are indistinguishable to first order, and the residual error is less than 2 % even at visual angles up to 30°, far beyond the central-vision limit (≈5°) of the earlier Cormac & Fox (1985) approximation.
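The general idea, that a trig-free motion expression can stand in for binocular disparity, can be checked numerically. The sketch below is an illustration under stated assumptions, not the authors' formula: it assumes a flat 2D layout with the eyes on the x-axis, a fixation point and a target given as `(x, z)` pairs in metres, and one particular sign convention. The function names (`direction`, `exact_disparity`, `motion_estimate`) are hypothetical. It compares the exact disparity, computed with `atan2` from two eye positions, against the interocular distance multiplied by the rate at which the target's direction changes, relative to fixation, as a single eye at the midpoint translates sideways.

```python
import math

def direction(eye_x, point):
    """Signed visual direction (radians) of point=(x, z) from an eye at (eye_x, 0)."""
    px, pz = point
    return math.atan2(px - eye_x, pz)

def relative_angle(eye_x, target, fixation):
    """Angle between target and fixation point as seen from the eye at eye_x."""
    return direction(eye_x, target) - direction(eye_x, fixation)

def exact_disparity(target, fixation, interocular):
    """Difference of the relative angle between the two eye positions (uses trig)."""
    half = interocular / 2.0
    return (relative_angle(half, target, fixation)
            - relative_angle(-half, target, fixation))

def motion_estimate(target, fixation, interocular):
    """Interocular distance times d(relative_angle)/d(eye_x) at the midpoint.

    The derivative of atan2(px - x, pz) with respect to x at x = 0 is
    -pz / (px**2 + pz**2), so no trigonometric call is needed.
    """
    def rate(point):
        px, pz = point
        return -pz / (px * px + pz * pz)
    return interocular * (rate(target) - rate(fixation))

if __name__ == "__main__":
    fixation = (0.0, 1.0)   # assumed: fixating straight ahead at 1 m
    interocular = 0.065     # assumed: 6.5 cm eye separation
    for target in [(0.05, 1.2), (0.3, 1.2), (0.6, 1.0)]:
        exact = exact_disparity(target, fixation, interocular)
        approx = motion_estimate(target, fixation, interocular)
        rel_err = abs(approx - exact) / abs(exact)
        print(f"target={target}: exact={exact:.6f} rad, "
              f"motion-based={approx:.6f} rad, relative error={rel_err:.2e}")
```

In this setup the exact disparity is a symmetric difference of the relative angle across the interocular baseline, and the motion-based value is the derivative at the midpoint times that baseline. The two therefore differ only by terms of third order in the ratio of eye separation to viewing distance, which is why the agreement persists for targets well away from the line of sight.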

To validate the theory, a psychophysical experiment with 20 participants compared depth judgments under pure binocular disparity (stereoscopic display) and pure motion parallax (participants translated laterally on a motorized platform). Depth was varied in 0.1 m steps, and participants reported relative depth. Both conditions yielded an average accuracy of 92 %, with no statistically significant difference (t‑test, p > 0.45). Performance remained stable across peripheral eccentricities of 10°, 20°, and 30°, confirming that the asymptotic approximation holds in real perception.

The discussion interprets these findings as evidence that the visual system may treat motion parallax and binocular disparity as mathematically equivalent cues, at least within the range of normal viewing conditions. This equivalence simplifies computational models of depth perception: algorithms can replace costly trigonometric calculations with simple algebraic approximations without sacrificing perceptual fidelity. The authors also note that less precise approximations, valid only in central vision, have led to a number of quantitative errors in the literature when applied to peripheral data.

In conclusion, the paper demonstrates that retinal motion from lateral translation is asymptotically equivalent to binocular disparity, extending the well‑known central‑vision approximation to peripheral vision and normal viewing distances. The result bridges a gap between psychophysical observations and formal geometric analysis, offering a practical tool for vision science, neuroscience, and computer vision. Future work is suggested to explore dynamic scenes, non‑linear observer trajectories, and neurophysiological correlates (e.g., fMRI, EEG) to further refine the model.
