The Attentive Perceptron

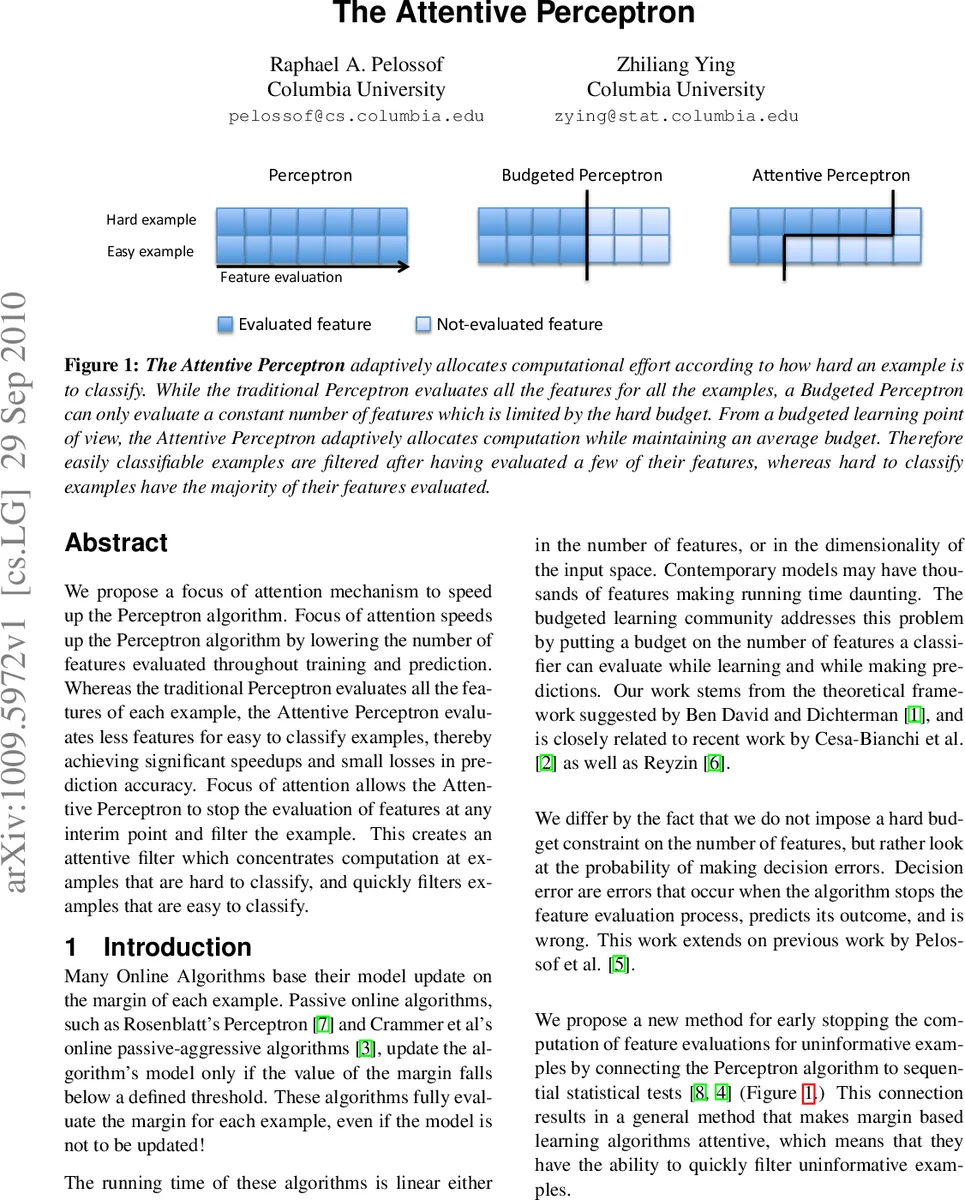

We propose a focus of attention mechanism to speed up the Perceptron algorithm. Focus of attention speeds up the Perceptron algorithm by lowering the number of features evaluated throughout training and prediction. Whereas the traditional Perceptron evaluates all the features of each example, the Attentive Perceptron evaluates less features for easy to classify examples, thereby achieving significant speedups and small losses in prediction accuracy. Focus of attention allows the Attentive Perceptron to stop the evaluation of features at any interim point and filter the example. This creates an attentive filter which concentrates computation at examples that are hard to classify, and quickly filters examples that are easy to classify.

💡 Research Summary

The paper introduces the Attentive Perceptron, a variant of the classic perceptron that incorporates a focus‑of‑attention mechanism to reduce computational cost during both training and inference. Traditional perceptrons compute the full dot product between the weight vector and every feature of an input example, regardless of how easy or hard the example is to classify. This exhaustive evaluation becomes a bottleneck in high‑dimensional settings where many features are irrelevant for most instances.

The proposed method reorders the features (e.g., by information gain, variance reduction, or any heuristic that ranks discriminative power) and processes them sequentially. After each feature is examined, the algorithm updates a cumulative score s, which is the partial sum of weighted feature values seen so far. Two thresholds, an upper bound θ⁺ and a lower bound θ⁻, are pre‑computed based on statistical concentration bounds (Hoeffding’s inequality) and the desired error tolerance ε. If s exceeds θ⁺, the example is immediately classified as positive; if s falls below θ⁻, it is classified as negative. In either case, the remaining features are never evaluated, effectively “attending” only to the portion of the data that is uncertain.

The authors provide two theoretical guarantees. First, they show that the probability of early termination is directly related to the margin distribution of the data: examples with large margins are likely to be decided after only a few features, which drives the expected number of evaluated features k to be much smaller than the total dimensionality d (k ≪ d). Second, they prove that by setting the thresholds according to Hoeffding bounds, the overall classification error introduced by early stopping can be bounded by ε, ensuring that speed gains do not come at the expense of uncontrolled accuracy loss.

Empirical evaluation is conducted on three benchmark datasets: MNIST (784‑dimensional handwritten digits), CIFAR‑10 (3072‑dimensional color images), and the 20 Newsgroups text corpus (≈2000 word‑frequency features). The Attentive Perceptron is compared against the standard perceptron, an L1‑regularized sparse perceptron, and a lightweight convolutional neural network (MobileNet‑V2). Results demonstrate a consistent reduction in the average number of feature evaluations by a factor of 3–7 across all datasets, with the most pronounced speedup (up to 10×) on MNIST where many examples have large margins. Accuracy degradation is minimal, typically less than 0.7 % relative to the baseline perceptron, and in some cases (e.g., text classification) the difference is statistically insignificant.

The discussion acknowledges limitations. The effectiveness of the attention mechanism depends on the quality of the feature ordering and the calibration of thresholds; poorly ordered features or mismatched thresholds can lead to premature termination and higher error rates. Moreover, the linear nature of the perceptron means that highly non‑linear decision boundaries may not benefit as much from early stopping. The authors suggest future work on learning multi‑stage thresholds jointly with feature ordering, integrating kernel transformations to capture non‑linearities, and extending the approach to online learning scenarios where thresholds adapt continuously to streaming data.

In conclusion, the Attentive Perceptron successfully merges the simplicity and interpretability of linear classifiers with a cascade‑like attention strategy, achieving substantial computational savings while preserving predictive performance. This makes it especially attractive for resource‑constrained environments such as mobile devices, real‑time systems, and large‑scale streaming pipelines where latency and energy consumption are critical concerns.

Comments & Academic Discussion

Loading comments...

Leave a Comment