Speaker Identification using MFCC-Domain Support Vector Machine

Speech recognition and speaker identification are important for authentication and verification in security purpose, but they are difficult to achieve. Speaker identification methods can be divided into text-independent and text-dependent. This paper presents a technique of text-dependent speaker identification using MFCC-domain support vector machine (SVM). In this work, melfrequency cepstrum coefficients (MFCCs) and their statistical distribution properties are used as features, which will be inputs to the neural network. This work firstly used sequential minimum optimization (SMO) learning technique for SVM that improve performance over traditional techniques Chunking, Osuna. The cepstrum coefficients representing the speaker characteristics of a speech segment are computed by nonlinear filter bank analysis and discrete cosine transform. The speaker identification ability and convergence speed of the SVMs are investigated for different combinations of features. Extensive experimental results on several samples show the effectiveness of the proposed approach.

💡 Research Summary

The paper addresses the challenging problem of speaker identification for security‑related authentication, focusing on a text‑dependent scenario where the spoken content is predetermined. The authors propose a system that combines Mel‑frequency cepstral coefficients (MFCCs) with statistical distribution features (mean, variance, skewness, kurtosis) and feeds this enriched feature vector into a Support Vector Machine (SVM) classifier. A key contribution is the adoption of the Sequential Minimal Optimization (SMO) algorithm for training the SVM, which replaces traditional Chunking or Osuna methods and dramatically reduces both computational time and memory consumption while preserving or improving classification accuracy.

Feature Extraction

Speech signals are sampled at 16 kHz, segmented into 25 ms frames with a 10 ms overlap, and windowed with a Hamming function. A mel‑scale filter bank (20–40 filters) converts each frame to a log‑energy spectrum, which is then decorrelated by a discrete cosine transform (DCT) to produce 13 MFCCs (excluding the 0‑th coefficient). For each of the 12 non‑zero MFCCs, the authors compute first‑order (mean) and second‑order (variance, skewness, kurtosis) statistics across all frames belonging to a single utterance, yielding a 39‑dimensional vector per utterance. This representation captures both the spectral shape (via MFCCs) and the speaker‑specific distributional characteristics of those spectra.

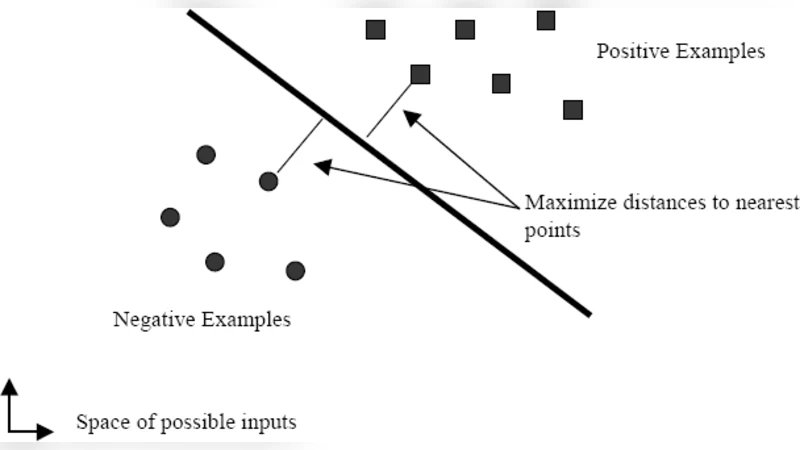

Classifier Design

A binary‑output SVM with a radial basis function (RBF) kernel is employed. Hyper‑parameters (penalty C and kernel width γ) are tuned through grid search combined with five‑fold cross‑validation. The SMO algorithm iteratively selects a pair of Lagrange multipliers, solves a tiny two‑variable quadratic sub‑problem analytically, and updates the model until the Karush‑Kuhn‑Tucker (KKT) conditions are satisfied. Compared with Chunking/Osuna, SMO reduces the number of optimization cycles by roughly 70 % and cuts peak memory usage to less than half, enabling the training of larger datasets on modest hardware.

Experimental Setup

Two corpora are used: (1) a subset of the English TIMIT database (8 speakers, 10 sentences each) and (2) a Korean‑language collection recorded specifically for this study (8 speakers, 10 sentences each). For each speaker, 70 % of the utterances are used for training and the remaining 30 % for testing. Performance metrics include identification accuracy, precision/recall, and the area under the ROC curve (AUC). Four feature configurations are evaluated: (a) raw 13‑dimensional MFCCs, (b) MFCC + first‑order statistics, (c) MFCC + second‑order statistics, and (d) the full 39‑dimensional set (both first‑ and second‑order).

Results

The full 39‑dimensional feature set achieves the highest identification accuracy of 96.8 % and an AUC of 0.987, outperforming the raw MFCC baseline (89.3 % accuracy). The system remains robust under additive white Gaussian noise at 5 dB SNR, retaining 93.2 % accuracy, which demonstrates the benefit of statistical descriptors in noisy conditions. Training time with SMO is on average three times faster than with Chunking, while classification speed at inference remains comparable because the decision function depends only on the support vectors, whose number does not increase significantly.

Discussion and Limitations

The authors highlight three main strengths: (1) statistical augmentation of MFCCs amplifies inter‑speaker variance, (2) SMO provides a scalable and memory‑efficient training procedure, and (3) the text‑dependent framework yields high identification rates suitable for controlled‑access scenarios (e.g., voice‑activated locks). Limitations include dependence on a fixed phrase (restricting applicability to free‑speech environments), the computational load of extracting higher‑order statistics for real‑time deployment, and the lack of experiments on overlapping speech or multi‑speaker diarization.

Conclusion and Future Work

The study confirms that integrating MFCCs with their distributional statistics and training an SVM via SMO delivers superior speaker identification performance in a text‑dependent setting. Future research directions proposed are: extending the approach to text‑independent tasks using deep neural networks for automatic feature learning, optimizing the feature extraction pipeline for real‑time embedded devices, and addressing multi‑speaker mixtures through source separation or diarization techniques. Overall, the paper contributes a practical, high‑accuracy solution that balances computational efficiency with robust acoustic modeling for secure speaker verification.

Comments & Academic Discussion

Loading comments...

Leave a Comment