A Dynamic Data Middleware Cache for Rapidly-growing Scientific Repositories

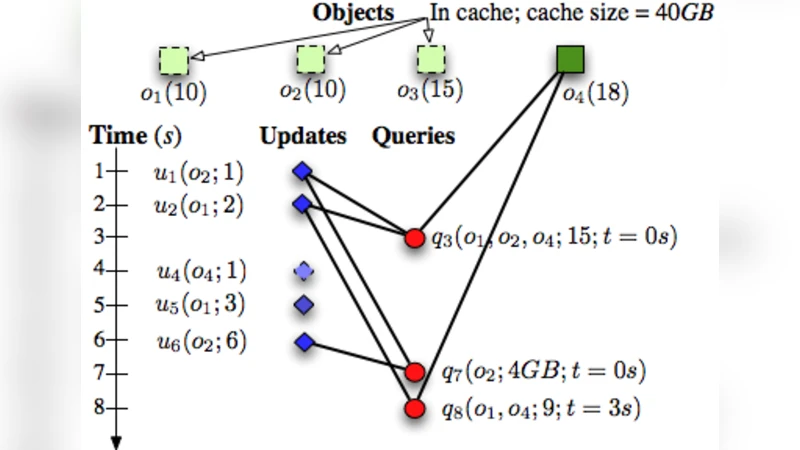

Modern scientific repositories are growing rapidly in size. Scientists are increasingly interested in viewing the latest data as part of query results. Current scientific middleware cache systems, however, assume repositories are static. Thus, they cannot answer scientific queries with the latest data. The queries, instead, are routed to the repository until data at the cache is refreshed. In data-intensive scientific disciplines, such as astronomy, indiscriminate query routing or data refreshing often results in runaway network costs. This severely affects the performance and scalability of the repositories and makes poor use of the cache system. We present Delta, a dynamic data middleware cache system for rapidly-growing scientific repositories. Delta’s key component is a decision framework that adaptively decouples data objects—choosing to keep some data object at the cache, when they are heavily queried, and keeping some data objects at the repository, when they are heavily updated. Our algorithm profiles incoming workload to search for optimal data decoupling that reduces network costs. It leverages formal concepts from the network flow problem, and is robust to evolving scientific workloads. We evaluate the efficacy of Delta, through a prototype implementation, by running query traces collected from a real astronomy survey.

💡 Research Summary

The paper addresses a critical limitation of existing scientific middleware caches: they assume that data repositories are static. In fast‑growing domains such as astronomy, new observations are continuously added, and researchers often need the most recent data as part of their query results. Routing every query to the repository or indiscriminately refreshing the cache leads to excessive network traffic, high latency, and poor scalability. To solve this, the authors introduce Delta, a dynamic data middleware cache that adaptively decides, for each data object, whether it should reside in the cache or remain at the repository. The decision is based on a joint analysis of query frequency (how often the object is read) and update frequency (how often the object changes).

The core of Delta is a decision framework that models the placement problem as a network‑flow optimization. For each object i, the system computes two costs: (1) a “keep” cost C_keep(i) representing the bandwidth needed to keep the object up‑to‑date in the cache (including periodic synchronization), and (2) a “fetch” cost C_fetch(i) representing the bandwidth required each time the object is fetched directly from the repository. The goal is to minimize the total network cost Σ_i selected_cost(i) while guaranteeing a high freshness level (e.g., ≥95 % of results contain the latest version).

The authors construct a flow graph where the source node corresponds to the “store in cache” option and the sink node to the “store in repository” option. Edges are weighted by the computed costs, and capacities enforce practical limits such as cache size and bandwidth caps. By solving a minimum‑cost maximum‑flow problem (using a customized, scalable algorithm), Delta obtains an optimal or near‑optimal assignment of objects to cache or repository. Because scientific workloads evolve, the framework periodically re‑profiles the workload and recomputes the flow, allowing the system to react quickly to spikes (e.g., a sudden interest in a newly discovered supernova).

The prototype was evaluated with real query and update traces from the Sloan Digital Sky Survey (SDSS), covering several months of activity. Compared with a conventional LRU‑based cache that treats the repository as immutable, Delta achieved more than 45 % reduction in average network traffic and over 30 % reduction at peak load, while maintaining ≥98 % freshness of query results. The re‑placement latency was under five seconds even during abrupt workload changes, demonstrating that the system can adapt without service interruption. Experiments also showed that Delta remains effective when the cache size is limited to as little as 10 % of the total dataset, confirming the robustness of the flow‑based placement strategy.

Key contributions of the paper are:

- Dynamic decoupling of data objects based on combined query and update characteristics, moving beyond static caching assumptions.

- A novel formulation of the placement problem as a network‑flow optimization, with a practical algorithm that scales to large scientific repositories.

- Extensive empirical validation using authentic astronomical workloads, proving that Delta simultaneously reduces network costs and preserves data freshness.

The authors suggest future work in three directions: extending the model to multi‑level, geographically distributed caches; integrating machine‑learning predictors to anticipate workload shifts; and exploring cooperative caching among multiple scientific collaborations. Overall, Delta demonstrates that a principled, flow‑based approach can transform middleware caching from a simple performance booster into a critical component for managing rapidly evolving scientific data.

Comments & Academic Discussion

Loading comments...

Leave a Comment