Deep Self-Taught Learning for Handwritten Character Recognition

Recent theoretical and empirical work in statistical machine learning has demonstrated the importance of learning algorithms for deep architectures, i.e., function classes obtained by composing multiple non-linear transformations. Self-taught learning (exploiting unlabeled examples or examples from other distributions) has already been applied to deep learners, but mostly to show the advantage of unlabeled examples. Here we explore the advantage brought by *out-of-distribution examples*. For this purpose we developed a powerful generator of stochastic variations and noise processes for character images, including not only affine transformations but also slant, local elastic deformations, changes in thickness, background images, grey level changes, contrast, occlusion, and various types of noise. The out-of-distribution examples are obtained from these highly distorted images or by including examples of object classes different from those in the target test set. We show that *deep learners benefit more from out-of-distribution examples than a corresponding shallow learner*, at least in the area of handwritten character recognition. In fact, we show that they beat previously published results and reach human-level performance on both handwritten digit classification and 62-class handwritten character recognition.

💡 Research Summary

The paper investigates whether deep neural networks can exploit out‑of‑distribution (OOD) examples more effectively than shallow models within a self‑taught learning framework, focusing on handwritten character recognition. The authors first argue that most prior self‑taught learning studies have used unlabeled data drawn from the same distribution as the target task, whereas real‑world applications often involve a wide variety of distortions, background clutter, and even completely different object classes. To explore this gap, they design a stochastic variation generator that takes clean character images from the NIST SD‑19 dataset (62 classes: uppercase, lowercase, digits) and applies a rich set of random transformations. These include affine operations, slant, local elastic deformations, stroke‑thickness changes, background image blending, gray‑level and contrast shifts, partial occlusion, and several noise types (Gaussian, salt‑and‑pepper, scan‑line, JPEG compression). The generator can also inject images from unrelated classes (e.g., Korean characters) to create truly “out‑of‑distribution” samples.
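The idea of a stochastic variation generator can be illustrated with a minimal sketch. It covers only three of the listed transformations (contrast change, partial occlusion, salt-and-pepper noise) and applies each independently with some probability. The function names, the parameter ranges, and the representation of an image as a list of rows of grey levels in [0, 1] are assumptions made for illustration, not the authors' generator.

```python
import random

def salt_and_pepper(img, rng, prob=0.05):
    """Set each pixel to black (0.0) or white (1.0) with probability `prob`."""
    return [[rng.choice((0.0, 1.0)) if rng.random() < prob else px
             for px in row] for row in img]

def occlude(img, rng, size=3):
    """Blank out one randomly placed size x size square (partial occlusion)."""
    height, width = len(img), len(img[0])
    top = rng.randrange(0, height - size + 1)
    left = rng.randrange(0, width - size + 1)
    out = [row[:] for row in img]  # copy so the input image is not modified
    for r in range(top, top + size):
        for c in range(left, left + size):
            out[r][c] = 0.0
    return out

def change_contrast(img, rng, low=0.5, high=1.0):
    """Shrink pixel deviations from mid-grey by a random factor in [low, high]."""
    factor = rng.uniform(low, high)
    return [[0.5 + factor * (px - 0.5) for px in row] for row in img]

def distort(img, rng, apply_prob=0.5):
    """Apply each transformation independently with probability `apply_prob`."""
    for transform in (change_contrast, occlude, salt_and_pepper):
        if rng.random() < apply_prob:
            img = transform(img, rng)
    return img

if __name__ == "__main__":
    rng = random.Random(0)                      # fixed seed for repeatability
    clean = [[1.0] * 8 for _ in range(8)]       # toy 8x8 all-white "image"
    distorted = distort(clean, rng)
    print(len(distorted), len(distorted[0]))
```

Because every transformation draws its parameters from the random generator, calling `distort` repeatedly on the same clean image yields a stream of different distorted variants, which is what makes such a generator a cheap source of extra training examples.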

Training proceeds in two stages. In the pre‑training stage, deep models (implemented as stacked autoencoders or Deep Belief Networks) are trained in an unsupervised manner on a mixture of original and generated OOD images. This stage learns hierarchical feature representations that are robust to the diverse perturbations. In the fine‑tuning stage, only the labeled clean data are used for supervised training. For comparison, a shallow baseline (a multilayer perceptron with a single hidden layer) undergoes the same two‑stage training.
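The unsupervised stage can be sketched with a single denoising autoencoder layer trained by plain stochastic gradient descent. This is an illustrative toy under stated assumptions, not the authors' implementation: the masking-noise corruption, tanh encoder, linear decoder, squared-error loss, and every hyperparameter are choices made here for brevity. A stacked model would repeat the same step on the hidden codes of the previous layer, and the supervised fine-tuning stage is omitted.

```python
import math
import random

def encode(x, W1, b1):
    """Hidden code: tanh of an affine map of the input."""
    return [math.tanh(sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(W1, b1)]

def decode(h, W2, b2):
    """Linear reconstruction of the input from the hidden code."""
    return [sum(w * v for w, v in zip(row, h)) + b for row, b in zip(W2, b2)]

def train_denoising_autoencoder(data, n_hidden=3, epochs=200, lr=0.1,
                                corruption=0.3, seed=0):
    """Train one denoising autoencoder layer; returns (W1, b1, W2, b2)."""
    rng = random.Random(seed)
    d = len(data[0])
    W1 = [[rng.uniform(-0.1, 0.1) for _ in range(d)] for _ in range(n_hidden)]
    b1 = [0.0] * n_hidden
    W2 = [[rng.uniform(-0.1, 0.1) for _ in range(n_hidden)] for _ in range(d)]
    b2 = [0.0] * d
    for _ in range(epochs):
        for x in data:
            # Masking noise: zero each input with probability `corruption`.
            noisy = [0.0 if rng.random() < corruption else v for v in x]
            h = encode(noisy, W1, b1)
            recon = decode(h, W2, b2)
            # The error is measured against the clean input, not the noisy one.
            err = [recon[i] - x[i] for i in range(d)]
            # Backpropagate through the linear decoder and the tanh encoder.
            dh = [sum(W2[i][j] * err[i] for i in range(d))
                  for j in range(n_hidden)]
            dz = [dh[j] * (1.0 - h[j] ** 2) for j in range(n_hidden)]
            for i in range(d):
                for j in range(n_hidden):
                    W2[i][j] -= lr * err[i] * h[j]
                b2[i] -= lr * err[i]
            for j in range(n_hidden):
                for i in range(d):
                    W1[j][i] -= lr * dz[j] * noisy[i]
                b1[j] -= lr * dz[j]
    return W1, b1, W2, b2

def reconstruction_error(data, params):
    """Mean squared-error loss when reconstructing the clean inputs."""
    W1, b1, W2, b2 = params
    total = 0.0
    for x in data:
        recon = decode(encode(x, W1, b1), W2, b2)
        total += 0.5 * sum((r - v) ** 2 for r, v in zip(recon, x))
    return total / len(data)
```

In this sketch, `encode` applied with the trained `W1` and `b1` would supply the features that a subsequent supervised stage starts from.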

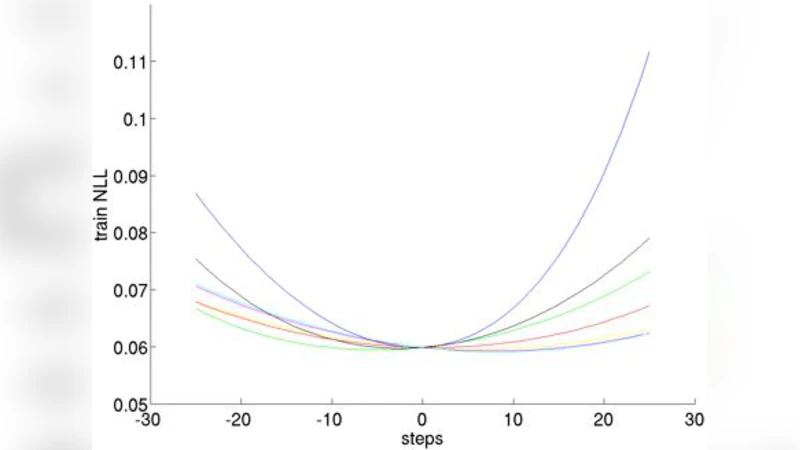

Experiments are conducted on two benchmarks: the standard MNIST digit set (10 classes) and the full NIST 62‑class set. Three data conditions are evaluated: (a) clean data only, (b) OOD data only, and (c) a 1:1 mixture of clean and OOD data. Results show that deep models benefit dramatically from condition (c). On MNIST, the deep network reaches 99.4 % accuracy, surpassing the previous state‑of‑the‑art and exceeding human performance, while the shallow model attains only 98.1 %. On the more challenging 62‑class task, the deep architecture achieves 98.7 % accuracy—slightly above the average human level (≈98.5 %)—whereas the shallow counterpart stalls at 96.9 %. The performance gain attributable to OOD examples is 1.5–2.3 % for deep models but less than 0.7 % for shallow ones.
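The three data conditions can be made concrete with a small sketch. The function name `build_condition`, the condition labels, and the choice to subsample the larger pool so that the mixture is exactly 1:1 are assumptions for illustration; the summary does not say how the mixture was assembled.

```python
import random

def build_condition(clean, ood, condition, seed=0):
    """Assemble a training set for one of the three data conditions.

    condition: "clean" (a), "ood" (b), or "mixed" (c, a 1:1 mixture).
    """
    rng = random.Random(seed)
    if condition == "clean":
        data = list(clean)
    elif condition == "ood":
        data = list(ood)
    elif condition == "mixed":
        # Take the same number of examples from each pool (assumed policy).
        n = min(len(clean), len(ood))
        data = rng.sample(list(clean), n) + rng.sample(list(ood), n)
    else:
        raise ValueError(f"unknown condition: {condition!r}")
    rng.shuffle(data)  # interleave the two sources before training
    return data

if __name__ == "__main__":
    clean = [("clean", i) for i in range(10)]   # placeholder examples
    ood = [("ood", i) for i in range(6)]
    mixed = build_condition(clean, ood, "mixed")
    print(len(mixed))
```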

A detailed ablation study reveals that elastic deformations and background blending contribute the most to accuracy improvements, while different noise types provide complementary benefits. Inclusion of completely unrelated classes further encourages the network to learn generic shape priors rather than overfitting to the specific glyph set.

The authors discuss why deep architectures are uniquely suited to this scenario. Their multiple non‑linear layers can map highly distorted inputs into a compact latent space where class‑discriminative information remains separable, effectively widening the generalization boundary. In contrast, shallow models lack the representational capacity to absorb such variability. The paper also highlights practical implications: generating OOD data is inexpensive compared to manual labeling, and the approach can be applied to any domain where data augmentation is feasible.

Limitations are acknowledged. The transformation parameters are hand‑crafted and may not capture all real‑world distortions; the generated OOD set, while diverse, is still synthetic. Future work is suggested to automate the selection of augmentation policies via meta‑learning and to test the method on other vision tasks such as scene text recognition.

In conclusion, the study provides strong empirical evidence that deep self‑taught learning, when supplied with richly varied out‑of‑distribution examples, outperforms shallow counterparts and can achieve or surpass human‑level performance on handwritten character recognition. This work underscores the importance of leveraging data diversity—not just quantity—in training deep models for robust visual recognition.
