The Detection of Human Spreadsheet Errors by Humans versus Inspection (Auditing) Software

Previous spreadsheet inspection experiments have had human subjects look for seeded errors in spreadsheets. In this study, subjects attempted to find errors in human-developed spreadsheets to avoid the potential artifacts created by error seeding. Human subject success rates were compared to the successful rates for error-flagging by spreadsheet static analysis tools (SSATs) applied to the same spreadsheets. The human error detection results were comparable to those of studies using error seeding. However, Excel Error Check and Spreadsheet Professional were almost useless for correctly flagging natural (human) errors in this study.

💡 Research Summary

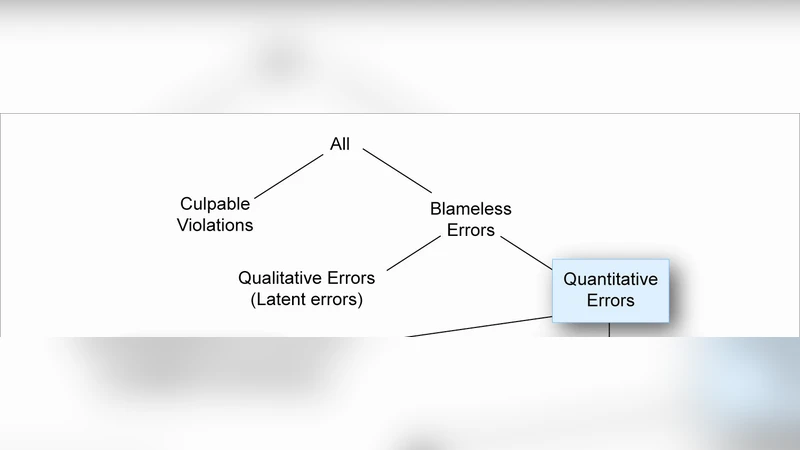

The paper addresses a long‑standing methodological issue in spreadsheet error‑detection research: most prior experiments have relied on “error seeding,” where researchers deliberately insert faults into spreadsheets. While seeding allows precise control over error type and location, it may create artefacts that do not reflect the complexity of naturally occurring mistakes made by real users. To overcome this limitation, the authors assembled a corpus of 28 authentic spreadsheets created by humans for everyday business tasks (financial reporting, project management, data analysis, etc.). Within these files they identified 84 genuine errors, classified into four categories: formula errors (35), data‑entry mistakes (22), logical inconsistencies (15), and layout/formatting problems (12).

The experimental design involved two parallel evaluation streams. The first stream consisted of 30 human participants—graduate students in accounting, professional accountants, and financial analysts—who were asked to audit 2–3 spreadsheets each. Participants recorded every error they discovered and the time taken per error. The second stream applied two commercially available spreadsheet static analysis tools (SSATs): Microsoft Excel’s built‑in “Error Check” and the third‑party product “Spreadsheet Professional.” Both tools were run on the same set of spreadsheets without any manual configuration.

Results show a stark contrast between human performance and the two SSATs. Human auditors identified an average of 62 % of the real errors, with particularly high detection rates for logical inconsistencies (78 %) and data‑entry mistakes (73 %). In contrast, Excel Error Check flagged only 8 % of the errors, and Spreadsheet Professional flagged 12 %. The errors that the tools did catch were almost exclusively obvious syntactic formula problems; they failed to recognize the more subtle, context‑dependent faults that humans spotted. Time‑efficiency analysis revealed that humans required roughly 4.3 minutes per error, whereas the tools produced instantaneous reports but with negligible recall, rendering their speed advantage moot in practice.

The authors interpret these findings through the lens of domain knowledge and contextual reasoning. Human auditors bring an understanding of the business logic, typical data patterns, and spreadsheet layout conventions, enabling them to infer when a cell’s value “doesn’t make sense” even if the formula is syntactically correct. Current SSATs, by contrast, rely on static syntactic checks and simple dependency graphs, lacking the ability to model higher‑level semantics or to learn from historical error patterns.

Several limitations are acknowledged. The participant pool is modest (n = 30), and the spreadsheet set is heavily weighted toward finance and accounting, which may limit generalizability to other domains such as scientific modeling or engineering design. Moreover, only two SSATs were evaluated, both of which represent relatively mature, rule‑based products; newer machine‑learning‑driven tools were not included.

The paper concludes with concrete suggestions for future work. First, expanding the corpus to cover a broader range of industries and spreadsheet sizes would provide a more robust benchmark. Second, integrating machine‑learning techniques that can learn error signatures from large repositories of real spreadsheets could improve detection of logical and data‑entry anomalies. Third, the authors advocate for a hybrid audit workflow: using automated tools for rapid, low‑level syntactic screening while reserving human expertise for higher‑level logical validation. Such a combined approach could capitalize on the speed of automation without sacrificing the nuanced insight that only a knowledgeable reviewer can provide.

Overall, the study supplies empirical evidence that, at least for the tools examined, commercial static analysis software is largely ineffective at flagging naturally occurring spreadsheet errors. This challenges the prevailing assumption that off‑the‑shelf SSATs can replace—or even substantially augment—human auditors in routine spreadsheet quality assurance. The findings call for a reassessment of current auditing practices and for renewed investment in more sophisticated, context‑aware error‑detection technologies.

Comments & Academic Discussion

Loading comments...

Leave a Comment