Directed Transmission Method, A Fully Asynchronous approach to Solve Sparse Linear Systems in Parallel

In this paper, we propose a new distributed algorithm called the Directed Transmission Method (DTM). DTM is a fully asynchronous, continuous-time iterative algorithm for solving symmetric positive-definite (SPD) sparse linear systems. As an architecture-aware algorithm, DTM can run freely on all kinds of heterogeneous parallel computers. We prove that DTM converges by making use of the final-value theorem of the Laplace transform. Numerical experiments show that DTM is stable and efficient.

💡 Research Summary

The paper introduces the Directed Transmission Method (DTM), a novel fully asynchronous, continuous‑time iterative algorithm for solving large symmetric positive‑definite (SPD) sparse linear systems of the form Ax = b. The authors begin by highlighting the limitations of existing approaches: domain‑decomposition methods typically rely on global synchronization, while existing asynchronous methods tend to converge poorly compared to their synchronous counterparts.

DTM’s core innovation is a virtual circuit element called a Directed Transmission Line (DTL). A DTL models a one‑way communication link with a propagation delay τ and a positive characteristic impedance Z. Its behavior is described by the continuous‑time “Directed Transmission Delay Equation” U_out(t) = U_in(t − τ) − Z · I_out(t). By pairing two DTLs with possibly different forward and backward delays, the authors define a Directed Transmission Line Pair (DTLP), which mirrors the asymmetric communication latencies that occur in heterogeneous parallel machines.
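The delay equation quoted above can be explored with a small sketch. It assumes the input potential is held piecewise constant between samples and is zero before the first sample; the class name `DirectedLine`, its methods, and the impedance value 1.0 are hypothetical and do not come from the paper. The two delays are the ones reported in the paper's experiments.

```python
import bisect

class DirectedLine:
    """One-way line: U_out(t) = U_in(t - tau) - Z * I_out(t), as quoted in the summary."""

    def __init__(self, tau, Z, initial=0.0):
        self.tau = tau                   # propagation delay
        self.Z = Z                       # characteristic impedance (positive)
        self.times = [float("-inf")]     # sample times, kept in increasing order
        self.values = [initial]          # assumed value before any sample is sent

    def send(self, t, u_in):
        """Record the input potential applied at time t (t must increase)."""
        self.times.append(t)
        self.values.append(u_in)

    def u_in_delayed(self, t):
        """Piecewise-constant lookup of U_in(t - tau) (a modelling assumption)."""
        k = bisect.bisect_right(self.times, t - self.tau) - 1
        return self.values[k]

    def u_out(self, t, i_out):
        """Evaluate the delay equation at time t for a given output current."""
        return self.u_in_delayed(t) - self.Z * i_out

# A pair of lines with different delays models one asymmetric link (a DTLP).
forward = DirectedLine(tau=6.7, Z=1.0)
backward = DirectedLine(tau=2.9, Z=1.0)
forward.send(0.0, 10.0)
early = forward.u_out(5.0, 0.0)   # the sample has not arrived yet
late = forward.u_out(7.0, 0.0)    # the sample arrived at t = 6.7
```

Keeping the history inside each line means a receiver only ever sees values that have had time to arrive, which is the property the delay term expresses.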

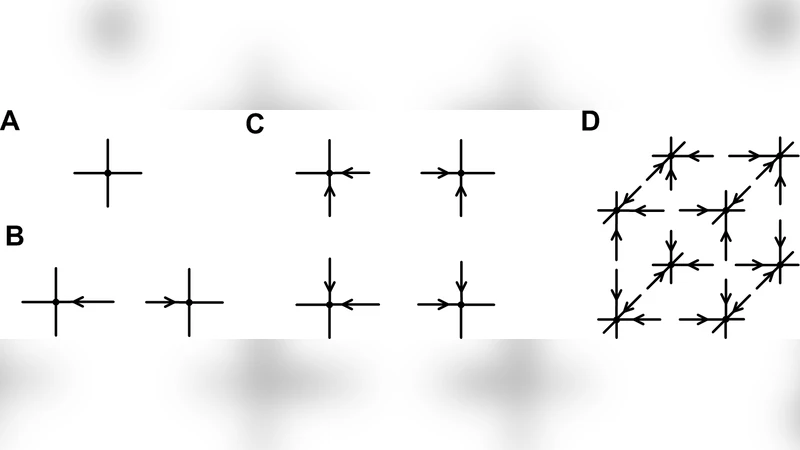

The linear system is first represented as an “electric graph” where vertices correspond to variables and sources, and edges carry the matrix coefficients as conductances. To enable distributed computation, the graph is partitioned using Electric Vertex Splitting (EVS). Each boundary vertex is duplicated into a pair of “twin vertices” that become ports of the sub‑graphs. An inflow current ω is associated with each port to capture the influence of neighboring sub‑graphs.
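As a rough illustration of the electric-graph view, the sketch below uses the standard nodal-analysis convention, which the summary does not spell out and which may differ from the paper's exact construction: each nonzero off-diagonal entry a_ij becomes an edge with conductance −a_ij, each vertex gets a conductance to ground equal to its row sum, and b_i acts as a current source at vertex i. The function name `electric_graph` and the small matrix are hypothetical.

```python
def electric_graph(A, b):
    """Build a graph view of A x = b for a symmetric A given as a list of rows."""
    n = len(A)
    edges = {}    # (i, j) with i < j -> conductance between vertices i and j
    ground = []   # conductance from vertex i to ground (the row sum)
    for i in range(n):
        for j in range(i + 1, n):
            if A[i][j] != 0:
                edges[(i, j)] = -A[i][j]
        ground.append(sum(A[i]))
    sources = list(b)   # current injected at each vertex
    return edges, ground, sources

A = [[ 3, -1,  0],
     [-1,  4, -2],
     [ 0, -2,  5]]
b = [1, 0, 2]
edges, ground, sources = electric_graph(A, b)
```

Under this convention the diagonal entry of each row equals the sum of all conductances attached to that vertex, so the original matrix can be reassembled from the graph.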

DTM inserts a DTLP between every pair of twin vertices belonging to adjacent sub‑graphs. The resulting local system for each sub‑graph can be written in the compact form

(D_j − Z_j⁻¹) u_j = f_j + Z_j⁻¹ τ_j⁻¹ ω_j,

where D_j is the local SPD matrix (constant throughout the computation), Z_j is a diagonal matrix of characteristic impedances, τ_j contains the propagation delays of the incident DTLs, u_j holds the port potentials, and ω_j contains the most recent port currents received from neighboring sub‑graphs. Because neither D_j nor Z_j changes during the computation, the coefficient matrix on the left‑hand side is constant and can be factorized once at the start (by Cholesky or any other suitable factorization). Each subsequent iteration then only updates the right‑hand side and performs one forward and one backward substitution, which costs far less than a fresh factorization.
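The factor-once, solve-many pattern described above can be sketched in plain Python. The matrix `M` is a hypothetical 2×2 stand-in for the constant local coefficient matrix, and the right-hand sides stand in for changing boundary data; neither is taken from the paper.

```python
import math

def cholesky(M):
    """Return lower-triangular L with L L^T = M (M must be SPD)."""
    n = len(M)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(M[i][i] - s)
            else:
                L[i][j] = (M[i][j] - s) / L[j][j]
    return L

def solve_with_factor(L, rhs):
    """Solve L L^T u = rhs by forward, then backward substitution."""
    n = len(L)
    y = [0.0] * n
    for i in range(n):                       # forward: L y = rhs
        y[i] = (rhs[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    u = [0.0] * n
    for i in reversed(range(n)):             # backward: L^T u = y
        u[i] = (y[i] - sum(L[k][i] * u[k] for k in range(i + 1, n))) / L[i][i]
    return u

M = [[4.0, 1.0],
     [1.0, 3.0]]
L = cholesky(M)                              # done once, O(n^3)

# Each new right-hand side reuses the same factor, O(n^2) per solve.
solutions = [solve_with_factor(L, rhs)
             for rhs in ([1.0, 2.0], [0.5, -1.0], [3.0, 3.0])]
```

For dense matrices the factorization costs on the order of n³ operations while each substitution pair costs on the order of n², which is why reusing the factor pays off when only the right-hand side changes.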

The continuous‑time iteration is driven by the latest remote boundary conditions; no global iteration counter or barrier is needed. The authors prove convergence by applying the Laplace transform to the global system and invoking the final‑value theorem. Under the assumptions that all delays τ are positive, all impedances Z are positive, and each D_j is SPD, the solution trajectories converge to the unique solution of Ax = b.
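For reference, the final‑value theorem in its standard textbook form is given below. How the authors apply it to the global DTM system is not reproduced in this summary, so the scalar example is only a generic illustration with a hypothetical decay rate a > 0.

```latex
% Final-value theorem: valid when every pole of sX(s) lies in the open left half-plane.
\lim_{t \to \infty} x(t) \;=\; \lim_{s \to 0} s\,X(s),
\qquad X(s) = \int_{0}^{\infty} x(t)\,e^{-st}\,dt .

% Illustration: x(t) = 1 - e^{-at} with a > 0.
X(s) = \frac{1}{s} - \frac{1}{s+a} = \frac{a}{s(s+a)},
\qquad
\lim_{s \to 0} s\,X(s) = \lim_{s \to 0} \frac{a}{s+a} = 1 = \lim_{t \to \infty} x(t).
```

The theorem lets the long-time limit of a trajectory be read off from its transform at s = 0 without solving the time-domain equations explicitly.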

Numerical experiments are presented on small test problems (e.g., a 4‑node and an 8‑node SPD system) distributed over two processors with asymmetric communication delays (6.7 µs one way, 2.9 µs the other). The experiments demonstrate that DTM remains stable and converges without any synchronization. They also show that the choice of characteristic impedances significantly affects convergence speed: proper tuning can accelerate convergence by an order of magnitude, as illustrated in the RMS‑error plot (Figure 9).
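To give a feel for iterating without barriers under asymmetric delays, here is a minimal event-driven simulation. It is not DTM: it swaps in plain asynchronous Jacobi, with no transmission lines or impedances. The 2×2 system and the local update periods are hypothetical; only the two delays (6.7 and 2.9) are taken from the experiments described above.

```python
import heapq

A = [[4.0, 1.0],
     [1.0, 3.0]]                      # hypothetical SPD, diagonally dominant
b = [1.0, 2.0]
delay = {(0, 1): 6.7, (1, 0): 2.9}    # sender -> receiver latency (microseconds)
period = [1.0, 1.5]                   # hypothetical time per local update

def simulate(t_end):
    """Each processor owns one unknown and updates it from the latest value received."""
    x = [0.0, 0.0]        # x[p]: current value held by processor p
    seen = [0.0, 0.0]     # seen[p]: last neighbor value that reached processor p
    events, seq = [], 0   # heap of (time, tie-breaker, kind, processor, value)
    for p in (0, 1):
        heapq.heappush(events, (0.0, seq, "update", p, 0.0))
        seq += 1
    while events:
        t, _, kind, p, value = heapq.heappop(events)
        if t > t_end:
            break
        if kind == "arrive":
            seen[p] = value                       # a delayed message lands
        else:
            q = 1 - p
            x[p] = (b[p] - A[p][q] * seen[p]) / A[p][p]
            heapq.heappush(events, (t + delay[(p, q)], seq, "arrive", q, x[p]))
            seq += 1
            heapq.heappush(events, (t + period[p], seq, "update", p, 0.0))
            seq += 1
    return x

x = simulate(300.0)
```

Neither processor waits for the other: each keeps updating at its own pace using whatever boundary value has arrived. For this diagonally dominant system the stale values still contract toward the exact solution (1/11, 7/11).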

The paper concludes that DTM offers a new “algorithm‑architecture delay mapping” paradigm, allowing the algorithm to be tightly coupled to the underlying hardware’s communication characteristics. It is architecture‑aware, suitable for heterogeneous clusters, GPU‑CPU hybrids, and other non‑uniform platforms. Limitations include the restriction to SPD matrices, the need for manual selection of τ and Z parameters, and the preprocessing overhead of constructing the electric graph and performing EVS. Future work is suggested on extending the theory to indefinite or non‑symmetric systems, automating parameter selection, exploring multilevel EVS, and scaling experiments to large‑scale real‑world problems.

Overall, DTM represents a significant conceptual shift in asynchronous parallel linear solvers by embedding communication delays directly into the iterative scheme, eliminating global synchronization, and enabling efficient reuse of factorized local matrices.
