A Taxonomy and Survey of Energy-Efficient Data Centers and Cloud Computing Systems

Traditionally, the development of computing systems has focused on performance improvements driven by the demands of applications from the consumer, scientific and business domains. However, the ever-increasing energy consumption of computing systems has started to limit further performance growth due to overwhelming electricity bills and carbon dioxide footprints. Therefore, the goal of computer system design has shifted to power and energy efficiency. To identify open challenges in the area and facilitate future advancements, it is essential to synthesize and classify the research on power- and energy-efficient design conducted to date. In this work we discuss the causes and problems of high power/energy consumption, and present a taxonomy of energy-efficient design of computing systems covering the hardware, operating system, virtualization and data center levels. We survey key works in the area and map them to our taxonomy to guide future design and development efforts. This chapter concludes with a discussion of the advancements identified in energy-efficient computing and our vision of future research directions.

💡 Research Summary

The paper addresses the escalating power and energy consumption of modern data‑center and cloud‑computing infrastructures, arguing that the traditional performance‑centric design paradigm is no longer sustainable due to rising electricity costs, grid constraints, and carbon‑footprint regulations. It begins by diagnosing the root causes of high energy use: over‑provisioned servers, inefficient power‑delivery hardware, cooling‑system dominance, workload imbalance, and sub‑optimal resource orchestration.
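Two of the causes listed above, over-provisioned (lightly loaded) servers and cooling overhead, can be made concrete with a small calculation. The sketch below is a minimal illustration, assuming the widely used linear server power model (power rises linearly with CPU utilization from a fixed idle floor) and Power Usage Effectiveness (PUE, total facility power divided by IT power) as the facility overhead metric. The class and function names and the wattage and PUE figures are hypothetical illustration values, not numbers taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Server:
    p_idle_w: float  # draw at 0% CPU utilization (watts); hypothetical value
    p_max_w: float   # draw at 100% CPU utilization (watts); hypothetical value

    def power(self, utilization: float) -> float:
        """Linear power model: P(u) = P_idle + (P_max - P_idle) * u."""
        if not 0.0 <= utilization <= 1.0:
            raise ValueError("utilization must be in [0, 1]")
        return self.p_idle_w + (self.p_max_w - self.p_idle_w) * utilization


def facility_power(it_power_w: float, pue: float) -> float:
    """Total facility power = IT power * PUE; PUE >= 1 covers cooling and power delivery."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_power_w * pue


def idle_share(server: Server, utilization: float) -> float:
    """Fraction of a server's draw spent merely keeping it powered on."""
    return server.p_idle_w / server.power(utilization)


if __name__ == "__main__":
    srv = Server(p_idle_w=100.0, p_max_w=250.0)  # hypothetical figures
    fleet = [0.1] * 10                           # ten lightly loaded servers
    it = sum(srv.power(u) for u in fleet)
    print(f"IT power: {it:.0f} W, facility power at PUE 1.8: {facility_power(it, 1.8):.0f} W")
    print(f"Idle share at 10% load: {idle_share(srv, 0.1):.0%}")
```

With these assumed numbers, most of each server's draw at 10% load is its idle floor, and every watt of IT load is multiplied again by the facility overhead, which is why consolidation and cooling efficiency recur throughout the taxonomy.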

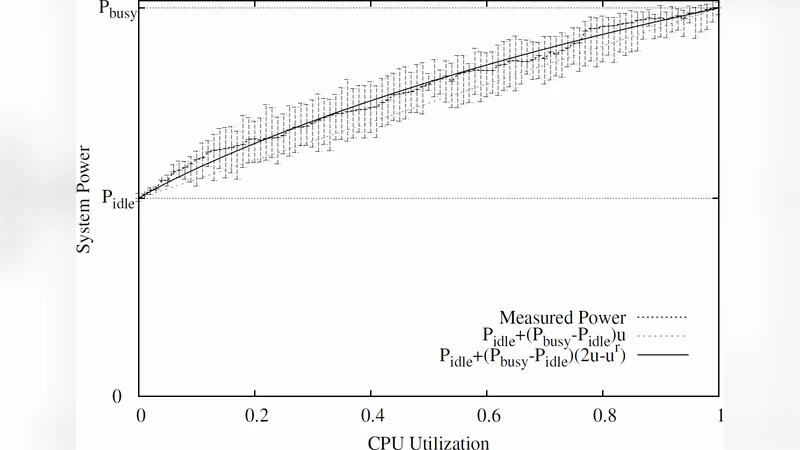

To bring order to a fragmented research landscape, the authors propose a four‑layer taxonomy that spans hardware, operating system, virtualization, and data‑center levels. At the hardware tier, they review low‑voltage design, dynamic voltage and frequency scaling (DVFS), power‑gating, multi‑core power management, and energy‑recovery techniques such as thermoelectric conversion. The OS tier covers power‑aware scheduling, memory and I/O power budgeting, P‑state/C‑state management, and profiling tools that expose fine‑grained consumption. The virtualization tier examines VM/container consolidation, power‑prediction‑driven live migration, shared‑resource throttling, and hypervisor‑level power‑aware dispatching, with examples from OpenStack, Kubernetes, and major hypervisors. Finally, the data‑center tier surveys server density improvements, workload‑placement optimization, advanced cooling (free‑cooling, liquid), power‑distribution management (UPS, PDUs), integration of renewable sources (solar, wind), and on‑site energy storage (batteries, hydrogen).
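VM consolidation at the virtualization tier is commonly framed as bin packing: place VMs on as few hosts as possible and switch the emptied hosts off. The sketch below uses Best Fit Decreasing, a common heuristic in the consolidation literature; it is not necessarily the algorithm used by any of the surveyed works. It assumes a single CPU dimension in integer units, the same hypothetical linear power model and wattages as a plain illustration, and that emptied hosts draw 0 W. Migration cost, memory and SLA constraints are ignored; `consolidate_bfd` and `cluster_power` are hypothetical names.

```python
from typing import Dict, List, Optional


def consolidate_bfd(vm_demands: Dict[str, int], host_capacity: int) -> List[List[str]]:
    """Pack VMs onto as few hosts as possible with Best Fit Decreasing (CPU only).

    vm_demands: CPU demand per VM, in the same integer units as host_capacity.
    Returns one list of VM names per active host.
    """
    hosts: List[List[str]] = []
    free: List[int] = []  # remaining capacity of each active host
    # Largest VMs first: they are the hardest to fit.
    for vm, demand in sorted(vm_demands.items(), key=lambda kv: kv[1], reverse=True):
        if demand > host_capacity:
            raise ValueError(f"VM {vm} exceeds host capacity")
        # Best fit: the active host with the least spare capacity that still fits the VM.
        best: Optional[int] = None
        for i, spare in enumerate(free):
            if spare >= demand and (best is None or spare < free[best]):
                best = i
        if best is None:
            # No active host fits, so power on a new one.
            hosts.append([vm])
            free.append(host_capacity - demand)
        else:
            hosts[best].append(vm)
            free[best] -= demand
    return hosts


def cluster_power(hosts: List[List[str]], vm_demands: Dict[str, int], host_capacity: int,
                  p_idle_w: float = 100.0, p_max_w: float = 250.0) -> float:
    """Linear power model summed over active hosts; empty hosts are assumed switched off (0 W)."""
    total = 0.0
    for vms in hosts:
        u = sum(vm_demands[v] for v in vms) / host_capacity
        total += p_idle_w + (p_max_w - p_idle_w) * u
    return total
```

For example, with five VMs demanding 50, 40, 30, 20 and 10 percent of one host, `consolidate_bfd` packs them onto two hosts (`[["a", "b", "e"], ["c", "d"]]` when the VMs are named `a` to `e` in that order), and `cluster_power` reports 425 W against 725 W for one VM per host under the assumed model. The saving comes entirely from eliminating idle floors, which is the effect the consolidation work in this tier targets.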

The survey maps a large body of representative works onto this taxonomy, revealing that hardware‑level and OS‑level techniques are relatively mature, whereas cross‑layer coordination—especially involving virtualization and whole‑facility renewable integration—remains under‑explored. The authors illustrate these gaps with a matrix that highlights where research effort is concentrated and where opportunities exist.

Looking forward, the paper outlines four strategic research directions: (1) cross‑layer cooperative power‑management frameworks that enable real‑time information exchange among hardware, OS, hypervisor, and data‑center controllers; (2) AI‑driven workload forecasting and power optimization, leveraging machine‑learning models to anticipate demand spikes and proactively adjust resource allocations; (3) dynamic coupling of renewable generation and energy‑storage systems, allowing data centers to shift workloads in response to fluctuating green‑energy availability; and (4) lightweight virtualization and micro‑service architectures that preserve security and reliability while minimizing overhead.
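Direction (3), shifting workloads toward periods of renewable availability, can be illustrated with a simple greedy scheduler. This is a minimal sketch under strong assumptions: an hourly renewable forecast is already available (for instance from the kind of predictive models named in direction (2)), every deferrable job runs for exactly one hour at constant power, and there is no energy storage or capacity limit. The function names and job format are hypothetical, and a greedy heuristic like this is not guaranteed to be optimal.

```python
from typing import Dict, List, Tuple

Job = Tuple[str, float, int]  # (name, power_kw, deadline_hour); hypothetical job format


def shift_deferrable(green_kw: List[float], base_kw: List[float],
                     jobs: List[Job]) -> Tuple[Dict[str, int], List[float]]:
    """Greedily place one-hour deferrable jobs into hours with the most spare renewable power.

    green_kw[h]: forecast renewable generation in hour h (kW), assumed given
    base_kw[h]:  non-deferrable IT load in hour h (kW)
    Each job must run in some hour h with 0 <= h <= deadline_hour.
    Returns the chosen hour per job and the resulting hourly load.
    """
    load = list(base_kw)
    placement: Dict[str, int] = {}
    # Place the largest jobs first so they get the biggest green surpluses.
    for name, power, deadline in sorted(jobs, key=lambda j: j[1], reverse=True):
        if deadline < 0:
            raise ValueError(f"job {name} has a negative deadline")
        candidates = range(min(deadline, len(load) - 1) + 1)
        # Choose the hour whose remaining surplus (generation minus load) is largest;
        # ties go to the earliest hour.
        best = max(candidates, key=lambda h: green_kw[h] - load[h])
        load[best] += power
        placement[name] = best
    return placement, load


def grid_energy_kwh(green_kw: List[float], load_kw: List[float]) -> float:
    """Energy drawn from the grid: the part of each one-hour load not covered by renewables."""
    return sum(max(0.0, load - green) for green, load in zip(green_kw, load_kw))
```

With a forecast of `[0, 20, 50, 30]` kW, a base load of 10 kW per hour, and three jobs of 30, 20 and 10 kW with deadlines at hours 3, 2 and 0, the scheduler places them at hours 2, 1 and 0, and grid energy falls to 30 kWh compared with 70 kWh if all three ran at hour 0. A production system would also have to account for forecast error, storage dispatch and job durations longer than one slot.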

By synthesizing the state of the art and presenting a clear, hierarchical classification, the paper provides both researchers and practitioners with a roadmap for designing next‑generation, energy‑efficient data centers and cloud platforms. It emphasizes that achieving substantial energy savings will require coordinated advances across all four layers, informed by rigorous measurement, predictive analytics, and an openness to integrating sustainable power sources into the core of computing infrastructure.
