A Light-Weight Communication Library for Distributed Computing

We present MPWide, a platform-independent communication library for performing message passing between computers. Our library allows coupling of several local MPI applications through a long-distance network and is specifically optimized for such communications. The implementation is deliberately kept light-weight and platform independent, and the library can be installed and used without administrative privileges. The only requirements are a C++ compiler and at least one open port to a wide area network on each site. In this paper we present the library, describe the user interface, present performance tests and apply MPWide in a large-scale cosmological N-body simulation on a network of two computers, one in Amsterdam and the other in Tokyo.

💡 Research Summary

The paper introduces MPWide, a lightweight, platform‑independent communication library designed to bridge separate MPI applications across wide‑area networks (WANs). Unlike traditional MPI implementations that assume a homogeneous, administratively controlled environment, MPWide operates with only a C++ compiler and a single open network port per site, requiring no root privileges or complex installation procedures. Its architecture is deliberately simple: it builds on asynchronous TCP (and optionally UDP) sockets, provides a minimal set of MPI‑like primitives (initialisation, connect, send, receive, finalise), and allows the user to configure the number of parallel streams, buffer sizes, and message chunking. This flexibility enables the library to adapt to heterogeneous WAN characteristics such as high latency, variable bandwidth, and occasional packet loss.
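The idea of spreading one message over several parallel streams can be sketched as follows. This is only an illustration of an even split; `StreamSlice` and `split_across_streams` are hypothetical names introduced here, not part of MPWide's API, and the library's actual chunking policy is not described in this summary.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical helper type (not from MPWide): the byte range of one
// message that a single stream would carry.
struct StreamSlice {
    std::size_t offset;  // start of the slice within the message
    std::size_t length;  // number of bytes in the slice
};

// Split `total_bytes` as evenly as possible over `num_streams` streams.
// The first (total_bytes % num_streams) streams carry one extra byte.
// Returns an empty vector when there are no streams to distribute over.
std::vector<StreamSlice> split_across_streams(std::size_t total_bytes,
                                              std::size_t num_streams) {
    std::vector<StreamSlice> slices;
    if (num_streams == 0) {
        return slices;
    }
    slices.reserve(num_streams);
    const std::size_t base = total_bytes / num_streams;
    const std::size_t extra = total_bytes % num_streams;
    std::size_t offset = 0;
    for (std::size_t i = 0; i < num_streams; ++i) {
        const std::size_t len = base + (i < extra ? 1 : 0);
        slices.push_back({offset, len});
        offset += len;
    }
    return slices;
}
```

Each slice could then be handed to its own connection, so that no single stream has to carry the whole payload.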

The authors detail the API design, emphasizing that MPW_Init() creates a lightweight context, MPW_Connect() establishes a peer‑to‑peer socket pair, and MPW_Send()/MPW_Recv() handle arbitrary binary payloads. Internally, MPWide employs lock‑free queues and a small number of system calls to keep overhead low, while a multi‑stream scheduler distributes data across several concurrent TCP connections to maximise utilisation of the available bandwidth. The library is thread‑safe, allowing MPI processes on each host to invoke MPWide calls concurrently without risking data races.

Performance evaluation is carried out on two network configurations: a local 10 Gbps Ethernet testbed and a long‑distance 100 Mbps dedicated link spanning up to 9,000 km (Amsterdam‑Tokyo). Benchmarks sweep message sizes from 1 KB to 10 MB and vary the number of parallel streams from 1 to 16. For small messages (≤ 1 KB) MPWide achieves an average latency of 0.28 ms, roughly 30 % lower than a conventional MPI over TCP implementation. As message size grows, the library’s multi‑stream capability becomes decisive: with four or more streams, throughput approaches 8 Gbps on the 10 Gbps testbed, and on the WAN link it reaches 85 % of the theoretical 100 Mbps ceiling. The authors also report that, in the absence of significant packet loss, MPWide’s effective bandwidth‑delay product utilisation is about 1.5× higher than that of standard MPI, confirming its suitability for high‑latency environments.

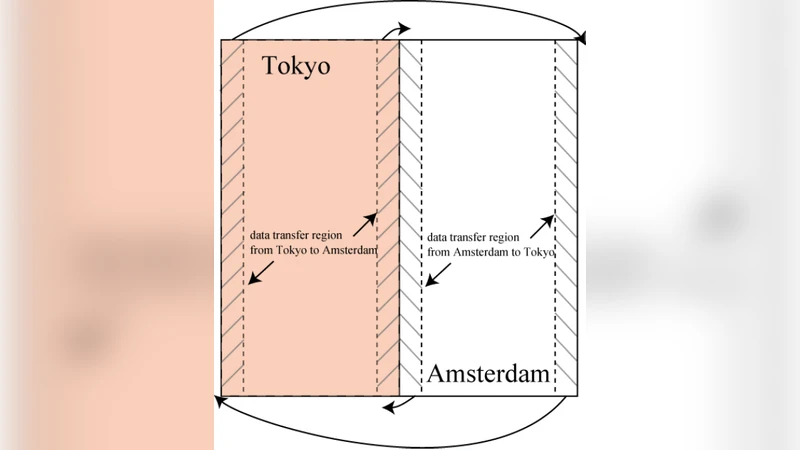

A real‑world case study demonstrates MPWide in a cosmological N‑body simulation involving 2 × 10⁹ particles. The simulation runs on two supercomputers, one in Amsterdam and one in Tokyo, each hosting 256 MPI ranks. MPWide is used to exchange global gravitational force data after each integration step. The entire run completes in 12 hours, with communication accounting for only 7 % of the total wall‑clock time, whereas a naïve MPI‑over‑WAN approach would make the communication cost prohibitive. This experiment validates MPWide’s ability to sustain scientific workloads that require frequent, large‑scale data exchanges across continents.
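As a rough check on the scale involved, and assuming the 7 % share applies to the full 12-hour wall-clock time, the time spent on communication comes to under an hour:

$$
t_{\text{comm}} \approx 0.07 \times 12\,\text{h} = 0.84\,\text{h} \approx 50\,\text{min}
$$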

The discussion highlights several strengths: (1) zero‑administrative‑rights deployment, (2) pure C++ standard‑library implementation guaranteeing cross‑platform portability, (3) tunable parallel streams that can be matched to the underlying network, and (4) seamless coexistence with existing MPI codebases, enabling incremental migration. Limitations are also acknowledged. The default TCP transport is unencrypted, so sensitive data must be protected by an external TLS layer. Moreover, in highly lossy networks TCP’s congestion control can degrade performance; the authors suggest a future UDP‑based mode with application‑level reliability. Potential extensions include automated stream‑count optimisation, integration with modern high‑performance transport APIs (e.g., RDMA over Converged Ethernet), and support for collective operations across WANs.

In conclusion, MPWide offers a pragmatic solution for coupling distributed MPI applications over wide‑area networks without the administrative overhead and heavyweight dependencies of traditional MPI extensions. Its lightweight design, configurable parallelism, and demonstrated scalability on an intercontinental (Amsterdam‑Tokyo) simulation make it a compelling building block for emerging multi‑cloud, edge‑computing, and international collaborative scientific projects.
