Control Variates for Reversible MCMC Samplers

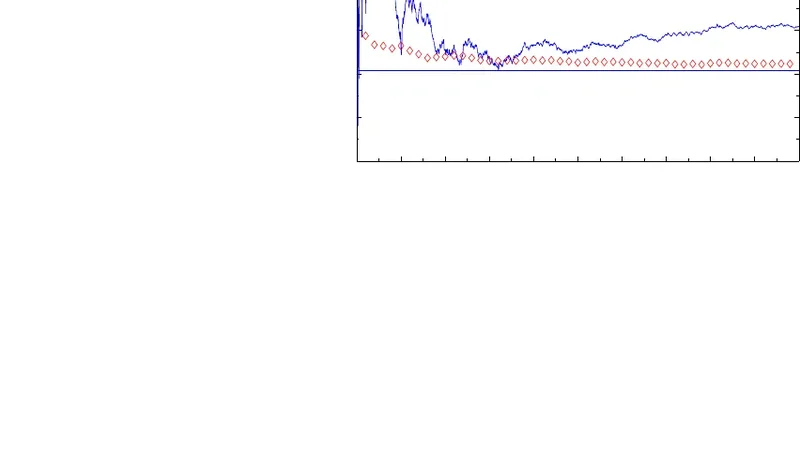

A general methodology is introduced for the construction and effective application of control variates to estimation problems involving data from reversible MCMC samplers. We propose the use of a specific class of functions as control variates, and we introduce a new, consistent estimator for the values of the coefficients of the optimal linear combination of these functions. The form and proposed construction of the control variates are derived from our solution of the Poisson equation associated with a specific MCMC scenario. The new estimator, which can be applied to the same MCMC sample, is derived from a novel, finite-dimensional, explicit representation for the optimal coefficients. The resulting variance-reduction methodology is primarily applicable when the simulated data are generated by a conjugate random-scan Gibbs sampler. MCMC examples of Bayesian inference problems demonstrate that the corresponding reduction in the estimation variance is significant, and that in some cases it can be quite dramatic. Extensions of this methodology in several directions are given, including certain families of Metropolis-Hastings samplers and hybrid Metropolis-within-Gibbs algorithms. Corresponding simulation examples are presented illustrating the utility of the proposed methods. All methodological and asymptotic arguments are rigorously justified under easily verifiable and essentially minimal conditions.

💡 Research Summary

This paper develops a systematic framework for constructing and applying control variates to reduce variance in estimators derived from reversible Markov chain Monte Carlo (MCMC) samplers. The authors focus primarily on random‑scan Gibbs samplers whose full conditional distributions belong to conjugate families, because in this setting the Poisson equation associated with the Markov kernel admits an explicit, finite‑dimensional solution that can be exploited to build useful control variates.

The central theoretical device is the Poisson equation \((I-P)g = f - \pi(f)\), where \(P\) is the transition operator of the reversible chain, \(\pi\) is the target distribution, and \(f\) is the function whose expectation is sought. The solution \(g\) yields a zero‑mean control variate \(h = g - Pg\). Solving for \(g\) directly is usually impossible, so the authors propose approximating it by a linear combination of a pre‑specified basis \(\{\psi_j\}_{j=1}^m\). Each candidate control variate takes the form \(h_j = \psi_j - P\psi_j\), guaranteeing \(\mathbb{E}_\pi[h_j] = 0\).
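As a rough illustration of control variates of the form ψ_j − Pψ_j, the sketch below runs a random‑scan Gibbs sampler on a toy bivariate Gaussian target with unit variances and correlation `rho`. Everything here is an assumption made for illustration: the target, the basis ψ_1(x) = x_1, ψ_2(x) = x_2, the function names, and above all the coefficient fit, which uses ordinary least squares as a stand‑in and is not the estimator proposed in the paper. Because the conditional means are known in closed form, Pψ_j can be written down exactly.

```python
import numpy as np

def random_scan_gibbs(n, mu, rho, rng):
    """Random-scan Gibbs sampler for a bivariate Gaussian with unit variances."""
    x = np.array(mu, dtype=float)        # start at the mean (toy choice)
    sd = np.sqrt(1.0 - rho**2)           # conditional standard deviation
    out = np.empty((n, 2))
    for t in range(n):
        i = rng.integers(2)              # coordinate to update, chosen uniformly
        j = 1 - i
        x[i] = mu[i] + rho * (x[j] - mu[j]) + sd * rng.standard_normal()
        out[t] = x
    return out

def control_variates(x, mu, rho):
    """h_j = psi_j - P psi_j for psi_j(x) = x_j.

    Under random scan, P psi_1(x) = 0.5 * x_1 + 0.5 * E[X_1 | x_2],
    and symmetrically for psi_2, so each h_j has mean zero under the target.
    """
    h1 = 0.5 * (x[:, 0] - mu[0] - rho * (x[:, 1] - mu[1]))
    h2 = 0.5 * (x[:, 1] - mu[1] - rho * (x[:, 0] - mu[0]))
    return np.column_stack([h1, h2])

def cv_estimate(f_vals, h):
    """Adjust the sample mean of f using least-squares coefficients.

    Assumption: plain least squares, not the paper's coefficient estimator.
    """
    hc = h - h.mean(axis=0)
    theta, *_ = np.linalg.lstsq(hc, f_vals - f_vals.mean(), rcond=None)
    return f_vals.mean() - h.mean(axis=0) @ theta, theta

rng = np.random.default_rng(0)
mu, rho = (1.0, -2.0), 0.9
x = random_scan_gibbs(5000, mu, rho, rng)
f_vals = x[:, 0]                          # f(x) = x_1, so pi(f) = mu[0]
plain = f_vals.mean()
adjusted, theta = cv_estimate(f_vals, control_variates(x, mu, rho))
print(plain, adjusted, theta)
```

This is a best case rather than a typical one: for the linear function f(x) = x_1, the function g(x) = a·x_1 + a·ρ·x_2 with a = 2/(1 − ρ²) solves the Poisson equation exactly, so the adjusted estimate recovers `mu[0]` up to floating‑point error while the plain sample mean still carries Monte Carlo error. For a nonlinear f, the same basis would only be expected to give a partial reduction.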

📜 Original Paper Content
