Bayesian Model Selection for Beta Autoregressive Processes

We study Bayesian inference for Beta autoregressive processes, restricting attention to the class of conditionally linear processes. These processes are particularly suitable for forecasting, but they are difficult to estimate because of the constraints on the parameter space. We provide a fully Bayesian approach to estimation and build the parameter restrictions into the inference problem through a suitable specification of the prior distributions. Moreover, in a Bayesian framework, parameter estimation and model choice can be addressed simultaneously. In particular, we propose a Markov chain Monte Carlo (MCMC) procedure based on a Metropolis-Hastings within Gibbs algorithm and solve the model selection problem with a reversible jump MCMC approach.

💡 Research Summary

The paper addresses Bayesian inference for Beta autoregressive (BAR) processes, focusing on the subclass of conditionally linear models that are particularly useful for forecasting proportion‑type time series bounded in the interval (0, 1). Traditional estimation methods for BAR models are hampered by the inherent constraints on the parameters: the conditional mean must stay within (0, 1) and the precision (or dispersion) parameter must be positive. Moreover, the linear predictor that drives the conditional mean imposes additional restrictions on the regression coefficients to guarantee admissibility of the mean at every time point. The authors propose a fully Bayesian framework that incorporates these constraints directly into the prior distributions, thereby eliminating the need for ad‑hoc post‑processing or constrained optimization.

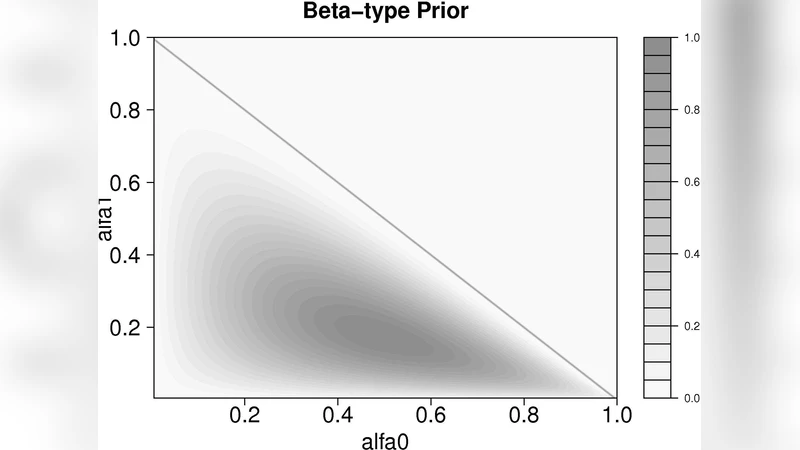

The model specification follows the standard BAR formulation: given past observations, the current observation y_t follows a Beta distribution with shape parameters μ_t · φ and (1 − μ_t) · φ, so that μ_t is the conditional mean and φ is the precision. The conditional mean is obtained by applying an inverse link function (typically the logistic) to a linear combination of past transformed observations. The parameter vector consists of an intercept α, autoregressive coefficients β = (β_1,…,β_p), and the precision φ. To respect the constraints, the authors assign normal priors N(0,σ²) to α and each β_i, which leaves the coefficients unrestricted on the real line, while φ receives a Gamma(a,b) prior that guarantees positivity. The normal priors are deliberately diffuse, and the authors discuss how the hyper-parameters can be calibrated using expert knowledge or empirical Bayes methods. Because the inverse link maps the whole real line into (0, 1), μ_t lies in (0, 1) for every posterior draw of the coefficients, so the admissibility condition is built into the Bayesian hierarchy rather than enforced afterwards.
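The specification above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: it assumes a logistic inverse link, logit-transformed lags, and start-up values fixed at 0.5, and the function names (`conditional_mean`, `beta_logpdf`, `simulate_bar`, `log_likelihood`) are hypothetical.

```python
import math
import random


def logit(y):
    return math.log(y / (1.0 - y))


def conditional_mean(alpha, beta, past):
    """mu_t = logistic(alpha + sum_i beta_i * logit(y_{t-i})); past[0] is y_{t-1}."""
    eta = alpha + sum(b * logit(y) for b, y in zip(beta, past))
    eta = max(-30.0, min(30.0, eta))  # guard against overflow in exp
    return 1.0 / (1.0 + math.exp(-eta))


def beta_logpdf(y, mu, phi):
    """Log density of Beta(mu * phi, (1 - mu) * phi) evaluated at y."""
    a, b = mu * phi, (1.0 - mu) * phi
    return (math.lgamma(phi) - math.lgamma(a) - math.lgamma(b)
            + (a - 1.0) * math.log(y) + (b - 1.0) * math.log(1.0 - y))


def simulate_bar(alpha, beta, phi, n, rng, y0=0.5):
    """Simulate n observations after p start-up values fixed at y0 (an assumption)."""
    p = len(beta)
    ys = [y0] * p
    for _ in range(n):
        past = ys[::-1][:p]  # most recent observation first
        mu = conditional_mean(alpha, beta, past)
        y = rng.betavariate(mu * phi, (1.0 - mu) * phi)
        ys.append(min(max(y, 1e-10), 1.0 - 1e-10))  # keep logit(y) finite
    return ys


def log_likelihood(ys, alpha, beta, phi):
    """Conditional log-likelihood, conditioning on the first p observations."""
    p = len(beta)
    total = 0.0
    for t in range(p, len(ys)):
        past = [ys[t - i] for i in range(1, p + 1)]
        total += beta_logpdf(ys[t], conditional_mean(alpha, beta, past), phi)
    return total


rng = random.Random(0)
ys = simulate_bar(alpha=0.1, beta=[0.5, -0.2], phi=20.0, n=200, rng=rng)
print(log_likelihood(ys, 0.1, [0.5, -0.2], 20.0))
```

Whatever real values the coefficients take, `conditional_mean` returns a value strictly inside (0, 1), which is the admissibility property described above.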

Because the posterior distribution has no closed-form expression, the authors develop a Metropolis-Hastings-within-Gibbs (MH-within-Gibbs) sampler. The scheme cycles through blocks: (α,β) are updated jointly using a multivariate normal random-walk proposal centered at the current values, while φ is updated on the log scale with a univariate normal proposal. The proposal covariance matrices are tuned adaptively during the burn-in phase to reach an acceptance rate of roughly 20–30%, which is reported to improve mixing without sacrificing stability. Because φ is proposed on the log scale, the acceptance ratio includes the Jacobian of the log transformation, so that the chain still targets the correct posterior.
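The two-block update can be illustrated with the following sketch. It is a simplified stand-in for the sampler described above rather than the authors' implementation: the joint proposal for (α,β) uses independent components with a fixed step size instead of an adaptively tuned covariance matrix, the Gamma prior is parameterized by shape and rate, and all names and default values are assumptions.

```python
import math
import random


def logit(y):
    return math.log(y / (1.0 - y))


def log_lik(ys, alpha, beta, phi):
    """Conditional Beta log-likelihood with logistic link and logit-transformed lags."""
    p, total = len(beta), 0.0
    for t in range(p, len(ys)):
        eta = alpha + sum(beta[i] * logit(ys[t - 1 - i]) for i in range(p))
        mu = 1.0 / (1.0 + math.exp(-max(-30.0, min(30.0, eta))))
        a, b = mu * phi, (1.0 - mu) * phi
        total += (math.lgamma(phi) - math.lgamma(a) - math.lgamma(b)
                  + (a - 1.0) * math.log(ys[t]) + (b - 1.0) * math.log(1.0 - ys[t]))
    return total


def log_prior_coefs(coefs, sigma):
    """Independent N(0, sigma^2) priors, up to an additive constant."""
    return sum(-0.5 * (c / sigma) ** 2 for c in coefs)


def log_prior_phi(phi, shape, rate):
    """Gamma(shape, rate) prior, up to an additive constant."""
    return (shape - 1.0) * math.log(phi) - rate * phi


def mh_within_gibbs(ys, p, n_iter, rng, sigma=10.0, shape=1.0, rate=0.1,
                    step_coef=0.05, step_phi=0.2):
    alpha, beta, phi = 0.0, [0.0] * p, 10.0  # arbitrary starting values
    ll = log_lik(ys, alpha, beta, phi)
    draws = []
    for _ in range(n_iter):
        # Block 1: joint random-walk proposal for (alpha, beta).
        alpha_new = alpha + rng.gauss(0.0, step_coef)
        beta_new = [c + rng.gauss(0.0, step_coef) for c in beta]
        ll_new = log_lik(ys, alpha_new, beta_new, phi)
        log_r = (ll_new + log_prior_coefs([alpha_new] + beta_new, sigma)
                 - ll - log_prior_coefs([alpha] + beta, sigma))
        if math.log(1.0 - rng.random()) < log_r:
            alpha, beta, ll = alpha_new, beta_new, ll_new
        # Block 2: random walk on log(phi); log(phi) terms are the Jacobian.
        phi_new = phi * math.exp(rng.gauss(0.0, step_phi))
        ll_new = log_lik(ys, alpha, beta, phi_new)
        log_r = (ll_new + log_prior_phi(phi_new, shape, rate) + math.log(phi_new)
                 - ll - log_prior_phi(phi, shape, rate) - math.log(phi))
        if math.log(1.0 - rng.random()) < log_r:
            phi, ll = phi_new, ll_new
        draws.append((alpha, list(beta), phi))
    return draws
```

In practice the step sizes would be tuned during burn-in toward the target acceptance rate; they are held fixed here to keep the sketch short.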

A major contribution of the paper is the simultaneous solution of the model-order selection problem. The authors adopt a reversible-jump Markov chain Monte Carlo (RJMCMC) approach to move between models of different autoregressive orders p. Two move types are defined: a birth move proposes adding a new coefficient β_{p+1} together with the corresponding lagged observation, while a death move removes an existing coefficient. The dimension-matching condition is satisfied by introducing an auxiliary scaling variable u drawn from a Uniform(0, 1) distribution, and the Jacobian of the transformation is derived explicitly. The acceptance probability for a jump combines the likelihood ratio between the two models, the ratio of prior densities, the proposal ratio, and the Jacobian term. This RJMCMC scheme lets the chain explore the joint space of (p,α,β,φ) and yields posterior probabilities for each candidate order, thereby providing a principled Bayesian model-selection criterion.
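A birth/death jump of this kind might look as follows. The summary does not give the authors' exact transformation, so this sketch makes its own assumptions: the new coefficient is set to β_{p+1} = s(2u − 1) with u ~ Uniform(0, 1), which gives Jacobian 2s; the prior on p is uniform on {0,…,p_max}; the likelihood conditions on the first p_max observations so that all orders are compared on the same data; and every name and default is hypothetical.

```python
import math
import random


def logit(y):
    return math.log(y / (1.0 - y))


def log_lik(ys, alpha, beta, phi, start):
    """Beta log-likelihood from index `start` on, so all orders use the same data."""
    total = 0.0
    for t in range(start, len(ys)):
        eta = alpha + sum(c * logit(ys[t - 1 - i]) for i, c in enumerate(beta))
        mu = 1.0 / (1.0 + math.exp(-max(-30.0, min(30.0, eta))))
        a, b = mu * phi, (1.0 - mu) * phi
        total += (math.lgamma(phi) - math.lgamma(a) - math.lgamma(b)
                  + (a - 1.0) * math.log(ys[t]) + (b - 1.0) * math.log(1.0 - ys[t]))
    return total


def log_normal_pdf(x, sigma):
    return -0.5 * math.log(2.0 * math.pi * sigma ** 2) - 0.5 * (x / sigma) ** 2


def birth_prob(p, p_max):
    """Probability of attempting a birth from order p (assumes p_max >= 1)."""
    if p == p_max:
        return 0.0
    return 1.0 if p == 0 else 0.5


def log_birth_ratio(ys, alpha, beta, phi, new_coef, p_max, sigma, s):
    """Log acceptance ratio for p -> p + 1; the death ratio is its negative."""
    p = len(beta)
    log_lik_ratio = (log_lik(ys, alpha, beta + [new_coef], phi, p_max)
                     - log_lik(ys, alpha, beta, phi, p_max))
    log_prior_ratio = log_normal_pdf(new_coef, sigma)  # uniform prior on p cancels
    log_move_ratio = (math.log(1.0 - birth_prob(p + 1, p_max))
                      - math.log(birth_prob(p, p_max)))
    log_jacobian = math.log(2.0 * s)  # d(new_coef)/du; the density of u is 1
    return log_lik_ratio + log_prior_ratio + log_move_ratio + log_jacobian


def rj_step(ys, alpha, beta, phi, rng, p_max=3, sigma=10.0, s=1.0):
    """One between-model move; returns the (possibly resized) coefficient list."""
    p = len(beta)
    if rng.random() < birth_prob(p, p_max):
        new_coef = s * (2.0 * rng.random() - 1.0)
        log_r = log_birth_ratio(ys, alpha, beta, phi, new_coef, p_max, sigma, s)
        accept = math.log(1.0 - rng.random()) < log_r
        return beta + [new_coef] if accept else beta
    last = beta[-1]
    if abs(last) >= s:  # the reverse birth could not have produced this value
        return beta
    log_r = -log_birth_ratio(ys, alpha, beta[:-1], phi, last, p_max, sigma, s)
    accept = math.log(1.0 - rng.random()) < log_r
    return beta[:-1] if accept else beta
```

A full sampler would alternate `rj_step` with within-model updates of (α,β,φ); only the dimension-changing move is shown here.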

The methodology is evaluated through extensive simulation studies. Synthetic data are generated from BAR processes with known orders (p = 1, 2, 3) and varying precision levels. The RJMCMC algorithm consistently identifies the correct order with high posterior probability, even when the signal‑to‑noise ratio is low. Parameter estimates exhibit low bias and credible intervals achieve nominal coverage. The authors also conduct a prior‑sensitivity analysis, showing that overly informative priors can distort order selection, while weakly informative priors yield robust results.
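The posterior probability of each order is usually estimated as the fraction of retained iterations the chain spends at that order. The helper below is a minimal sketch of that bookkeeping; the function name and the toy trace are illustrative and not taken from the paper.

```python
from collections import Counter


def posterior_order_probs(order_draws, burn_in=0):
    """Estimate P(p | data) as the visit frequency of each order after burn-in."""
    kept = order_draws[burn_in:]
    counts = Counter(kept)
    return {p: counts[p] / len(kept) for p in sorted(counts)}


# Toy trace of visited orders (made-up values, for illustration only).
trace = [1, 2, 2, 2, 3, 2, 1, 2]
probs = posterior_order_probs(trace)
print(probs)                      # {1: 0.25, 2: 0.625, 3: 0.125}
print(max(probs, key=probs.get))  # 2
```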

Two real‑world applications illustrate the practical relevance. The first involves daily click‑through rates (CTR) for an online advertising campaign, a classic bounded proportion series exhibiting trend and occasional spikes. The second uses monthly precipitation ratios (rainfall divided by potential evapotranspiration) in a climatological study. In both cases, the Bayesian BAR model with RJMCMC order selection outperforms competing approaches such as standard ARIMA, frequentist BAR estimated by conditional maximum likelihood, and a simple Bayesian linear regression on the logit‑transformed series. Forecast accuracy, measured by mean absolute error and predictive log‑score, improves noticeably, and the posterior predictive intervals capture the observed values at the expected rates, demonstrating calibrated uncertainty quantification.
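The two accuracy measures can be computed along the following lines. This is a sketch under stated assumptions: the log-score is taken as the log of the posterior predictive density at the observed value, approximated by averaging Beta densities over posterior draws of (μ, φ), with higher values being better. Conventions differ across the literature, and the function names are hypothetical.

```python
import math


def beta_logpdf(y, mu, phi):
    """Log density of Beta(mu * phi, (1 - mu) * phi) evaluated at y."""
    a, b = mu * phi, (1.0 - mu) * phi
    return (math.lgamma(phi) - math.lgamma(a) - math.lgamma(b)
            + (a - 1.0) * math.log(y) + (b - 1.0) * math.log(1.0 - y))


def mean_absolute_error(actual, forecast):
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def predictive_log_score(y, draws):
    """Log of the Monte Carlo average of Beta densities over draws of (mu, phi)."""
    logs = [beta_logpdf(y, mu, phi) for mu, phi in draws]
    m = max(logs)  # log-sum-exp trick for numerical stability
    return m + math.log(sum(math.exp(v - m) for v in logs) / len(logs))
```

Averaging `predictive_log_score` over a hold-out period gives a single number per model that can be compared across the competing approaches.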

In conclusion, the paper delivers a comprehensive Bayesian solution for estimation and model selection in conditionally linear Beta autoregressive processes. By embedding parameter constraints in the prior, employing an efficiently tuned MH‑within‑Gibbs sampler, and integrating reversible‑jump moves for order selection, the authors provide a coherent framework that simultaneously addresses inference, forecasting, and model uncertainty. The work opens avenues for extensions to multivariate Beta processes, alternative link functions, and online sequential updating, thereby broadening the applicability of Bayesian methods to a wide range of bounded time‑series problems.
