A state of a dynamic computational structure distributed in an environment: a model and its corollaries

Currently there is great interest in computational models consisting of an underlying regular computational environment and distributed computational structures built on it. Examples of such models are cellular automata, spatial computation, and space-time crystallography. For any computational model it is natural to define a functional equivalence between different but related computational structures. In finite automata theory, examples of such equivalence are automata homomorphism and, in particular, automata isomorphism. If we continue to follow finite automata theory, a fundamental question arises: what is a state of a distributed computational structure? This work is devoted to a particular solution of this question.

💡 Research Summary

The paper tackles a foundational question that has been largely overlooked in the literature on distributed computational structures: “What exactly is a state when the computation takes place in a dynamic, regular environment?” To answer this, the authors first formalize the notion of a computational environment. Rather than treating the environment as a passive backdrop, they model it as a space—either a lattice, a continuous manifold, or a more abstract graph—whose cells or points possess time‑varying attributes (e.g., temperature, voltage, bandwidth). This view generalizes the classic cellular‑automaton setting, where each cell’s color is the only environmental variable, by allowing arbitrary physical or logical parameters to evolve independently of the computational agents.
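As a minimal sketch of such an environment, assume a one-dimensional ring lattice whose cells each carry a single integer attribute. The environment then gets its own update rule that never consults the agents. The encoding, the names `Env` and `env_step`, and the particular rule (neighbour averaging plus a periodic shift) are illustrative assumptions, not the paper's construction.

```python
from typing import Tuple

# E(t): one attribute value per cell of a ring lattice (hypothetical encoding,
# e.g. a temperature quantized to `levels` discrete values).
Env = Tuple[int, ...]


def env_step(env: Env, levels: int = 4) -> Env:
    """One step of an assumed environment rule that ignores the agents entirely.

    Each cell takes the floored mean of itself and its two ring neighbours
    (a crude diffusion), then every value is shifted up by one level modulo
    `levels` (a periodic modulation in time).
    """
    n = len(env)
    return tuple(
        ((env[(i - 1) % n] + env[i] + env[(i + 1) % n]) // 3 + 1) % levels
        for i in range(n)
    )


# A uniform field is carried around a cycle whose period equals `levels`.
field = (0, 0, 0)
history = [field]
for _ in range(4):
    field = env_step(field)
    history.append(field)
print(history)  # [(0, 0, 0), (1, 1, 1), (2, 2, 2), (3, 3, 3), (0, 0, 0)]
```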

On top of this environment they place a distributed computational structure, which is essentially a graph of processing nodes connected by edges. Each node carries an internal state and a local transition function. The transition function receives three kinds of inputs: (1) the node’s current internal state, (2) signals arriving from neighboring nodes, and (3) the current values of the environmental variables at the node’s location. In this sense the local transition is a direct extension of the transition function of a finite automaton, but its input alphabet is enriched by spatial and temporal context.
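The enriched input of a local transition can be sketched as follows. The rule `parity_rule` and the helper `enriched_alphabet` are hypothetical. The point is that, read as a finite automaton, a node's input letter is the pair (neighbour signals, local environment value), so the alphabet grows multiplicatively with the number of environment levels.

```python
from itertools import product
from typing import Tuple


def parity_rule(q: int, signals: Tuple[int, ...], env_value: int) -> int:
    """Hypothetical local transition delta(q, signals, env_value) -> q'.

    Inputs: (1) the node's own state q, (2) signals from neighbouring nodes,
    (3) the environment value at the node's location.
    """
    return (q + sum(signals) + env_value) % 2


def enriched_alphabet(num_neighbours: int, signal_values: int, env_levels: int):
    """List every input letter (signals, env_value) a node can receive.

    Read as a finite automaton with states {0, 1}, this list is the node's
    input alphabet: the product of neighbour signals and environment values.
    """
    return [
        (signals, env_value)
        for signals in product(range(signal_values), repeat=num_neighbours)
        for env_value in range(env_levels)
    ]


alphabet = enriched_alphabet(num_neighbours=2, signal_values=2, env_levels=4)
print(len(alphabet))  # 2**2 signal combinations * 4 environment levels = 16
# The same node state and signals lead to different next states
# depending only on the environment value:
print(parity_rule(0, (1, 0), 0), parity_rule(0, (1, 0), 1))  # 1 0
```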

The central contribution of the work is a combined environment‑structure state definition. At any discrete time step t the global state is denoted S(t) = (E(t), C(t)), where E(t) captures the complete configuration of the environment (all cell values, physical fields, etc.) and C(t) captures the full configuration of the computational structure (the internal state of every node and the topology of the connections). This definition has two important consequences. First, the state space is no longer limited to the product of node states; it is expanded to include the environment, which means that any change in the environment directly reshapes the reachable state set. Second, it enables a rigorous notion of state equivalence across different environments.
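A possible data layout for S(t) = (E(t), C(t)) makes both consequences concrete. It assumes integer-valued environment cells and node states, with the topology stored as an edge set. Two global states that agree on C(t) but differ on E(t) are distinct, and the state count multiplies the number of environment configurations into the product of node states. The class and function names are illustrative only.

```python
from typing import FrozenSet, NamedTuple, Tuple


class Structure(NamedTuple):
    """C(t): internal state of every node plus the current connection topology."""
    node_states: Tuple[int, ...]
    edges: FrozenSet[Tuple[int, int]]


class GlobalState(NamedTuple):
    """S(t) = (E(t), C(t))."""
    env: Tuple[int, ...]   # E(t): one attribute value per cell
    struct: Structure      # C(t)


def state_space_size(cells: int, env_levels: int, nodes: int,
                     node_states: int, topologies: int = 1) -> int:
    """|S| = |environment configurations| * |node-state vectors| * |topologies|."""
    return env_levels ** cells * node_states ** nodes * topologies


ring = frozenset({(0, 1), (1, 2), (2, 0)})
s1 = GlobalState(env=(0, 1, 2), struct=Structure((0, 1, 0), ring))
s2 = GlobalState(env=(0, 1, 3), struct=Structure((0, 1, 0), ring))
print(s1 == s2)  # False: same C(t), different E(t)
print(state_space_size(3, 4, 3, 2), 2 ** 3)  # 512 8
```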

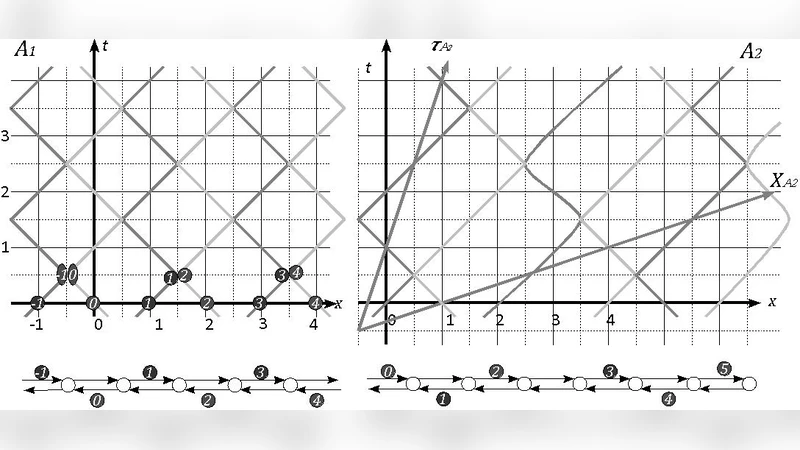

To formalize equivalence the authors borrow the concepts of automata homomorphism and isomorphism. Given two systems with global states (E₁, C₁) and (E₂, C₂), a mapping φ is a homomorphism if φ(E₁)=E₂ and φ(C₁)=C₂ and, as in automata theory, φ is compatible with the transition relations: mapping a state and then taking one step gives the same result as taking the step and then mapping. If φ is bijective, the two systems are isomorphic, i.e., they are indistinguishable in both structure and behavior. This provides a mathematically precise criterion for saying that two distributed systems, possibly deployed in different media, are “the same” with respect to the tasks they perform.
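The equivalence check can be sketched for small finite systems. Here a homomorphism is assumed to mean the usual automata-theoretic condition: φ commutes with one transition step and preserves the observable output. The two toy systems, each with one environment bit and one node bit, and all names are hypothetical. System 2 is system 1 under a relabeling of environment and node values, so φ is an isomorphism and the two produce identical output sequences, in line with the semantic-preservation corollary.

```python
from itertools import product

INPUTS = (0, 1)

# System 1: global states (e, q), e = environment bit, q = node bit.
STATES1 = list(product((0, 1), (0, 1)))


def delta1(s, a):
    e, q = s
    return (1 - e, q ^ a ^ e)  # environment toggles; node mixes input and env


def out1(s):
    return s[1]  # the node state is the observable output


# System 2: the same system with different encodings of E and C.
ENV2, NODE2 = {0: "A", 1: "B"}, {0: "off", 1: "on"}
ENV2_INV = {v: k for k, v in ENV2.items()}
NODE2_INV = {v: k for k, v in NODE2.items()}


def delta2(s, a):
    e, q = ENV2_INV[s[0]], NODE2_INV[s[1]]
    return (ENV2[1 - e], NODE2[q ^ a ^ e])


def out2(s):
    return NODE2_INV[s[1]]


def phi(s):
    """Candidate map: environment part to environment part, structure to structure."""
    return (ENV2[s[0]], NODE2[s[1]])


def is_homomorphism(phi, states, inputs, d1, d2, o1, o2):
    """True if phi commutes with one transition step and preserves outputs."""
    steps_ok = all(phi(d1(s, a)) == d2(phi(s), a) for s in states for a in inputs)
    outputs_ok = all(o1(s) == o2(phi(s)) for s in states)
    return steps_ok and outputs_ok


def run(delta, out, s0, word):
    """Output sequence produced from state s0 on the input sequence word."""
    outputs, s = [], s0
    for a in word:
        s = delta(s, a)
        outputs.append(out(s))
    return outputs


word = [1, 0, 1, 1]
print(is_homomorphism(phi, STATES1, INPUTS, delta1, delta2, out1, out2))  # True
print(len(set(map(phi, STATES1))) == len(STATES1))  # True: bijective onto 4 states
print(run(delta1, out1, (0, 0), word), run(delta2, out2, phi((0, 0)), word))
```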

The paper then defines a state transition relation S(t) → S(t+1) that simultaneously applies every node’s local transition function and the environment’s own evolution rule. In effect, the classic transition graph of a finite automaton is lifted to a high‑dimensional graph whose vertices are (environment, structure) pairs and whose edges encode both computational updates and environmental dynamics. Within this framework two corollaries are proved. The first corollary states that homomorphic (or isomorphic) systems produce identical output sequences for any given input sequence, guaranteeing semantic preservation under the equivalence mapping. The second corollary shows that when the environment possesses periodicity or symmetry, the state space of the combined system can be factored by the corresponding symmetry group, leading to a substantial reduction in the number of distinct states that must be analyzed.
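A synchronous global step and an exploration of the lifted transition graph might look as follows. The sketch assumes a ring of three nodes with a fixed topology, one node located on each environment cell, and two hypothetical rules: `env_rule` (the field rotates one cell per step) and `local_rule`. Each node reads E(t) and its neighbours' states from C(t), and the environment advances in the same step. Because both rules are deterministic, each vertex of the graph has exactly one successor.

```python
from collections import deque
from typing import Dict, Tuple

State = Tuple[Tuple[int, ...], Tuple[int, ...]]  # (E(t), node states); ring topology fixed


def env_rule(env):
    """Hypothetical environment dynamics: the field rotates one cell per step."""
    return env[-1:] + env[:-1]


def local_rule(q, signals, env_value):
    """Hypothetical local transition of each node."""
    return (q + sum(signals) + env_value) % 2


def global_step(state: State) -> State:
    """S(t) -> S(t+1): all nodes update synchronously from E(t) and C(t),
    while the environment applies its own rule in the same step."""
    env, nodes = state
    n = len(nodes)
    new_nodes = tuple(
        local_rule(nodes[i], (nodes[(i - 1) % n], nodes[(i + 1) % n]), env[i])
        for i in range(n)
    )
    return env_rule(env), new_nodes


def transition_graph(s0: State) -> Dict[State, State]:
    """Explore the (environment, structure) states reachable from s0.

    Each entry s -> t is one edge of the lifted transition graph."""
    edges: Dict[State, State] = {}
    frontier = deque([s0])
    while frontier:
        s = frontier.popleft()
        if s in edges:
            continue
        edges[s] = global_step(s)
        frontier.append(edges[s])
    return edges


quiet = ((0, 0, 0), (0, 0, 0))
pulse = ((1, 0, 0), (0, 0, 0))  # same C(t), one "hot" environment cell
print(global_step(quiet))  # ((0, 0, 0), (0, 0, 0))
print(global_step(pulse))  # ((0, 1, 0), (1, 0, 0))
print(len(transition_graph(pulse)))
```

The two starting states share C(t) and differ only in E(t), yet their successors differ. This is the sense in which the environment reshapes the reachable state set.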

The authors illustrate the applicability of their model by revisiting three well‑known domains. In cellular automata the environment and the structure coincide, so the combined state collapses to the traditional cell‑state vector. In space‑time crystallography, the lattice’s spatial symmetry and temporal modulation are treated as environmental variables, while the “defect” dynamics constitute the computational structure. In distributed robotics, the robots’ positions and sensed fields (e.g., obstacles, light intensity) form E(t), whereas the robots’ control software, communication links, and internal variables form C(t). Simulations of a routing protocol under time‑varying network latency demonstrate that two isomorphic deployments—one on a static wired network, the other on a wireless mesh with fluctuating delay—produce identical packet‑delivery patterns, confirming the semantic preservation corollary. Moreover, exploiting environmental symmetry (e.g., a circular arena) reduces the state‑space size by a factor equal to the order of the symmetry group, validating the second corollary.
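The symmetry reduction for a circular arena can be sketched by counting orbits of joint (environment, node) configurations under the cyclic rotation group C_n. The sketch assumes a ring of n cells with one node per cell, and all names are hypothetical. One caveat applies: the reduction factor |S| / (number of orbits) is at most the group order n, and it reaches n only when no configuration is fixed by a nontrivial rotation. By Burnside's lemma, n = 3 gives 64 states in 24 orbits (a factor of about 2.7), while n = 5 gives 1024 states in 208 orbits (about 4.9).

```python
from itertools import product
from math import gcd


def rotate(config, k):
    """Rotate a ring configuration by k cells."""
    return config[k:] + config[:k]


def canonical(env, nodes):
    """Orbit representative under the cyclic group C_n acting on the arena:
    a rotation moves environment cells and nodes together."""
    n = len(nodes)
    return min((rotate(env, k), rotate(nodes, k)) for k in range(n))


def count_states(n, env_levels, node_states):
    """Return (|S|, number of orbits of S under rotation)."""
    states = [
        (env, nodes)
        for env in product(range(env_levels), repeat=n)
        for nodes in product(range(node_states), repeat=n)
    ]
    return len(states), len({canonical(env, nodes) for env, nodes in states})


def burnside(n, alphabet):
    """Burnside's lemma for C_n: rotation by k fixes alphabet**gcd(k, n) configurations."""
    return sum(alphabet ** gcd(k, n) for k in range(n)) // n


total, orbits = count_states(3, env_levels=2, node_states=2)
print(total, orbits, burnside(3, 2 * 2))  # 64 24 24
```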

In conclusion, the paper proposes a unified, environment‑aware definition of state for distributed computational structures and shows how this definition naturally extends classical automata theory. By integrating homomorphism/isomorphism concepts, high‑dimensional transition graphs, and symmetry‑based state reduction, the authors provide a robust theoretical foundation for analyzing, comparing, and optimizing distributed systems that operate in physically or logically dynamic settings. The framework promises to impact a broad spectrum of research areas, including complex‑system modeling, distributed algorithm design, and verification of cyber‑physical systems, by offering a systematic way to reason about the interplay between computation and its surrounding environment.
