Development and Validation of a Teaching Practice Scale (TISS) for Instructors of Introductory Statistics at the College Level

This study examined the teaching practices of 227 college instructors of introductory statistics (from the health and behavioral sciences). Using primarily multidimensional scaling (MDS) techniques, a two-dimensional, 10-item teaching practice scale, TISS (Teaching of Introductory Statistics Scale), was developed and validated. The two dimensions (subscales) were characterized as constructivist and behaviorist, and they are orthogonal to each other. Criterion validity of this scale was established in relation to instructors’ attitude toward teaching, and acceptable levels of reliability were obtained. A significantly higher level of behaviorist practice (less reform-oriented) was reported by instructors from the USA and by instructors with academic degrees in mathematics and engineering. This new scale (TISS) will allow us to empirically assess and describe the pedagogical approach (teaching practice) of instructors of introductory statistics. Further research is required before firm conclusions can be drawn about the structural and psychometric properties of this scale.

💡 Research Summary

The paper reports the development and validation of the Teaching of Introductory Statistics Scale (TISS), a quantitative instrument designed to capture college‑level instructors’ teaching practices in introductory statistics courses, primarily within health and behavioral sciences. The authors recruited 227 instructors from several English‑speaking countries (the United States, Canada, Australia, etc.) and began by generating a large pool of candidate items through literature review and expert consultation. After a pilot test and item‑reduction procedures, the final instrument comprised ten statements describing concrete classroom behaviors (e.g., “I provide students with real data sets,” “I give students the correct answer in advance”).

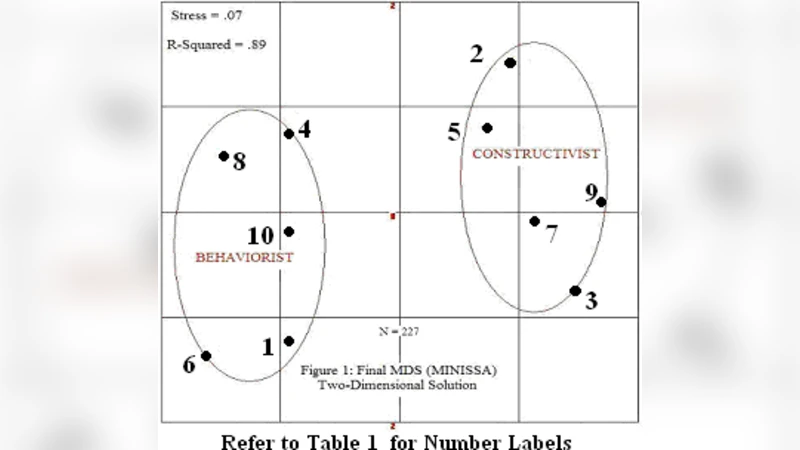

Multidimensional scaling (MDS) and non‑hierarchical cluster analysis were applied to the response data. The analyses revealed a stable two‑dimensional structure whose dimensions are orthogonal, that is, uncorrelated with each other. The authors labeled the dimensions “constructivist” and “behaviorist.” The constructivist dimension clusters items that reflect student‑centered, inquiry‑based, and data‑driven practices, whereas the behaviorist dimension groups items indicative of lecture‑centric, procedural, and answer‑providing approaches.
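To make the idea of placing items in a two‑dimensional space concrete, here is a minimal sketch of classical (Torgerson) MDS in NumPy. The summary does not say which MDS variant or dissimilarity measure the authors used, so both choices here are assumptions. The response matrix is random synthetic data, not the study's data, and names such as `classical_mds` and `responses` are hypothetical.

```python
import numpy as np


def classical_mds(dist, n_dims=2):
    """Embed objects in n_dims dimensions from a symmetric distance matrix."""
    dist = np.asarray(dist, dtype=float)
    n = dist.shape[0]
    # Double-centre the squared distances: B = -1/2 * J * D^2 * J
    centering = np.eye(n) - np.ones((n, n)) / n
    gram = -0.5 * centering @ (dist ** 2) @ centering
    # eigh returns eigenvalues in ascending order; keep the largest n_dims
    eigvals, eigvecs = np.linalg.eigh(gram)
    order = np.argsort(eigvals)[::-1][:n_dims]
    scale = np.sqrt(np.clip(eigvals[order], 0.0, None))
    return eigvecs[:, order] * scale


# Synthetic stand-in: 50 respondents x 10 items on a 1-5 scale (assumption)
rng = np.random.default_rng(0)
responses = rng.integers(1, 6, size=(50, 10)).astype(float)

# Standardise each item, then use Euclidean distance between item profiles
z = (responses - responses.mean(axis=0)) / responses.std(axis=0, ddof=1)
items = z.T  # one row per item
item_dist = np.linalg.norm(items[:, None, :] - items[None, :, :], axis=-1)

coords = classical_mds(item_dist, n_dims=2)  # one (x, y) point per item
```

With real data, items that instructors tend to endorse together would sit close to each other in `coords`, which is what allows the two groups of items to be read off and labeled.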

Criterion validity was examined by correlating TISS scores with an established attitude‑toward‑teaching scale. Constructivist scores showed a moderate positive correlation (r ≈ .42, p < .001) with favorable teaching attitudes, while behaviorist scores displayed a moderate negative correlation (r ≈ –.35, p < .001). Internal consistency reliability, assessed with Cronbach’s α, was .78 for the constructivist subscale and .81 for the behaviorist subscale, both exceeding the conventional .70 threshold for acceptable reliability.
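The two statistics reported above can be sketched as follows. Cronbach's α uses the standard formula α = k/(k−1) · (1 − Σ item variances / variance of the total score), and the criterion correlation is a plain Pearson r. The data are synthetic, and the five‑item subscale size, the `attitude` variable, and the function name `cronbach_alpha` are assumptions made for illustration; the summary does not state how the ten items are split between the subscales.

```python
import numpy as np


def cronbach_alpha(scores):
    """Cronbach's alpha for a respondents x items score matrix."""
    scores = np.asarray(scores, dtype=float)
    k = scores.shape[1]
    item_var = scores.var(axis=0, ddof=1)        # variance of each item
    total_var = scores.sum(axis=1).var(ddof=1)   # variance of the summed score
    return k / (k - 1) * (1.0 - item_var.sum() / total_var)


# Synthetic subscale: a shared latent trait plus item-specific noise
rng = np.random.default_rng(1)
latent = rng.normal(size=(200, 1))
subscale = latent + rng.normal(scale=1.0, size=(200, 5))  # 5 items (assumption)

alpha = cronbach_alpha(subscale)

# Hypothetical attitude score related to the same latent trait
attitude = latent[:, 0] + rng.normal(scale=1.5, size=200)
subscale_score = subscale.mean(axis=1)
r = np.corrcoef(subscale_score, attitude)[0, 1]  # Pearson correlation

print(f"alpha = {alpha:.2f}, r = {r:.2f}")
```

Values of α rise as items share more variance, which is why identical items give α = 1 and unrelated items give α near 0.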

The authors also explored demographic differences. Instructors based in the United States reported significantly higher behaviorist scores than their counterparts from other countries, suggesting a more traditional teaching orientation. Similarly, instructors whose highest degree was in mathematics or engineering exhibited higher behaviorist scores compared with those from the social or health sciences. No substantial differences emerged based on years of teaching experience or doctoral versus master’s level education.
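The summary does not say which test produced these group differences. As one plausible illustration only, the sketch below computes Welch's t statistic and its approximate degrees of freedom for two groups of subscale scores. The function name `welch_t` and the example scores are hypothetical and are not taken from the study.

```python
import numpy as np


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    # Squared standard error of each group mean
    se2_a = a.var(ddof=1) / a.size
    se2_b = b.var(ddof=1) / b.size
    t = (a.mean() - b.mean()) / np.sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (
        se2_a ** 2 / (a.size - 1) + se2_b ** 2 / (b.size - 1)
    )
    return t, df


# Made-up behaviorist subscale scores for two groups of instructors
group_a = [3.8, 4.1, 3.5, 4.4, 3.9, 4.0]
group_b = [3.1, 3.6, 2.9, 3.4, 3.3, 3.0]

t_stat, dof = welch_t(group_a, group_b)
print(f"t = {t_stat:.2f}, df = {dof:.1f}")
```

Welch's version is used here because it does not assume equal variances or equal group sizes, which is a reasonable default when comparing groups of instructors of unknown size.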

The study acknowledges several limitations. The sample is confined to health‑ and behavioral‑science instructors, limiting the generalizability of the findings to other disciplines. The reliance on self‑report questionnaires raises the possibility of social desirability bias, and the research does not link TISS scores to student learning outcomes or observational data. Consequently, the authors recommend future work that (1) applies TISS across a broader range of academic fields, (2) incorporates classroom observations or video analysis to triangulate self‑report data, and (3) examines predictive validity by relating TISS scores to student performance metrics.

In conclusion, the research provides a psychometrically sound, ten‑item scale that distinguishes between constructivist and behaviorist teaching practices among introductory statistics instructors. By offering a reliable metric, TISS enables researchers, curriculum developers, and policy makers to empirically assess the prevalence of reform‑oriented (constructivist) versus traditional (behaviorist) pedagogies, monitor changes over time, and evaluate the impact of professional development initiatives aimed at promoting active, data‑centric learning in statistics education.
