MalStone: Towards A Benchmark for Analytics on Large Data Clouds

Developing data mining algorithms that are suitable for cloud computing platforms is currently an active area of research, as is developing cloud computing platforms appropriate for data mining. The most common benchmarks for cloud computing today are Terasort and related benchmarks. Although the Terasort Benchmark is quite useful, it was not designed for data mining per se. In this paper, we introduce a benchmark called MalStone that is specifically designed to measure the performance of cloud computing middleware that supports the type of data-intensive computing common when building data mining models. We also introduce MalGen, a utility for generating data on clouds that can be used with MalStone.

💡 Research Summary

The paper introduces MalStone, a benchmark specifically designed to evaluate the performance of cloud‑based middleware that supports data‑intensive analytics typical of large‑scale data‑mining applications. The authors begin by observing that the most widely used cloud benchmark, Terasort, focuses on sorting and I/O throughput and does not reflect the more complex operations central to data‑mining pipelines, such as filtering, aggregation, joins, and time‑window calculations. To fill this gap, they propose a new workload that mimics a realistic “site‑event‑mark” data model: each site (e.g., a server or device) generates a massive stream of timestamped events, and a subset of those events carries a “mark” indicating a condition of interest (e.g., a malware infection or a fraudulent transaction).
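To make the data model concrete, the following Python sketch defines one event record and a parser for it. The tab-separated layout and the field names (`event_id`, `timestamp`, `site_id`, `marked`) are assumptions for illustration; the summary does not specify MalGen's actual record format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One log record in the site-event-mark model (field layout is hypothetical)."""
    event_id: int
    timestamp: int  # Unix time in seconds
    site_id: str
    marked: bool    # True if the event carries a mark, e.g. an infection flag


def parse_event(line: str) -> Event:
    """Parse a tab-separated line: event_id, timestamp, site_id, mark flag (0 or 1)."""
    event_id, timestamp, site_id, mark = line.rstrip("\n").split("\t")
    return Event(int(event_id), int(timestamp), site_id, mark == "1")


if __name__ == "__main__":
    print(parse_event("42\t1262304000\tsite-7\t1\n"))
```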

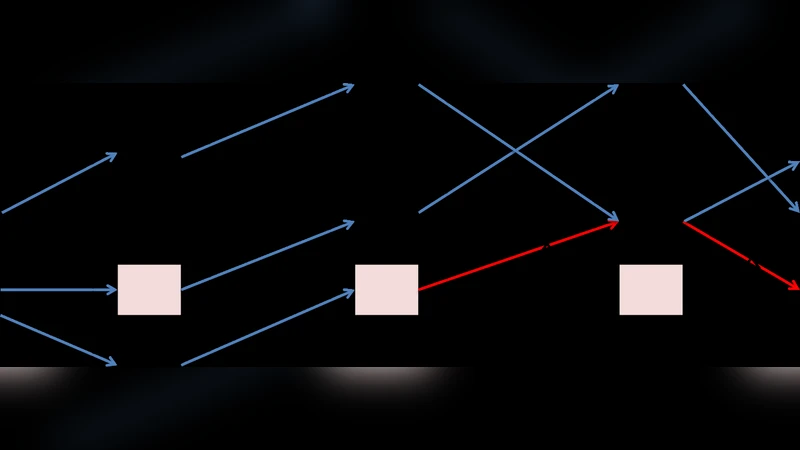

Two benchmark queries are defined. MalStone A computes, for every site, the proportion of marked events across the entire dataset and returns the sites whose proportion exceeds a predefined threshold. MalStone B performs the same calculation within a sliding time window (for example, the most recent seven days), adding a temporal dimension that stresses window management, data shuffling, and cache utilization. Both queries can be expressed as MapReduce jobs: the map phase keys each event by its site, the shuffle groups events that share a site, and the reduce phase aggregates the counts and computes the ratio. MalStone B requires an additional MapReduce pass to handle the moving window, which makes it more demanding in terms of memory and network traffic.
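Both queries can be sketched in a single Python process that simulates the map, shuffle, and reduce phases in memory. This follows the query definitions as summarized here; the function names, the strict `>` threshold comparison, and the two-pass structure of MalStone B (first counting events per site and day, then sliding a window of `window_days` days over those daily counts) are illustrative assumptions, not the paper's reference implementation.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple

# (timestamp in seconds, site_id, marked); hypothetical in-memory record shape
Event = Tuple[int, str, bool]
DAY = 86_400  # seconds per day


def map_a(event: Event) -> Iterator[Tuple[str, Tuple[int, int]]]:
    """Map: emit (site_id, (marked_count, event_count)) for a single event."""
    _, site_id, marked = event
    yield site_id, (int(marked), 1)


def shuffle(pairs: Iterable[Tuple[str, Tuple[int, int]]]) -> Dict[str, List[Tuple[int, int]]]:
    """Group mapper output by key, as the framework's shuffle phase would."""
    groups: Dict[str, List[Tuple[int, int]]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def malstone_a(events: Iterable[Event], threshold: float) -> Dict[str, float]:
    """Return {site_id: marked ratio} for sites whose ratio exceeds threshold."""
    groups = shuffle(pair for event in events for pair in map_a(event))
    flagged = {}
    for site_id, values in groups.items():  # reduce phase, one call per site
        marked = sum(m for m, _ in values)
        total = sum(n for _, n in values)
        if marked / total > threshold:
            flagged[site_id] = marked / total
    return flagged


def malstone_b(events: Iterable[Event], threshold: float,
               window_days: int = 7) -> Dict[str, List[Tuple[int, float]]]:
    """Return {site_id: [(window_end_day, ratio), ...]} for windows over threshold."""
    # Pass 1: aggregate (marked, total) per (site, day), like a job keyed by (site, day).
    daily: Dict[Tuple[str, int], List[int]] = defaultdict(lambda: [0, 0])
    for timestamp, site_id, marked in events:
        counts = daily[(site_id, timestamp // DAY)]
        counts[0] += int(marked)
        counts[1] += 1
    # Pass 2: regroup by site, then slide the window over the daily counts.
    per_site: Dict[str, Dict[int, List[int]]] = defaultdict(dict)
    for (site_id, day), counts in daily.items():
        per_site[site_id][day] = counts
    flagged: Dict[str, List[Tuple[int, float]]] = {}
    for site_id, days in per_site.items():
        for end in sorted(days):  # evaluate a window ending on each active day
            marked = total = 0
            for day in range(end - window_days + 1, end + 1):
                day_marked, day_total = days.get(day, (0, 0))
                marked += day_marked
                total += day_total
            if marked / total > threshold:
                flagged.setdefault(site_id, []).append((end, marked / total))
    return flagged
```

Pre-aggregating by day in the first pass keeps the second pass small: each window is computed from at most `window_days` daily counts per site rather than by rescanning raw events.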

To generate the massive synthetic logs required for the benchmark, the authors provide MalGen, a parallel data‑generation utility that runs on Hadoop clusters. MalGen uses a parameterized probabilistic model: each site is assigned an event‑arrival rate (λ) and a mark‑occurrence probability (p). Events are generated according to a Poisson process, and each event is marked by a Bernoulli trial with success probability p. The tool also supports skewed distributions, such as hot‑spot sites with high event rates, to emulate real‑world log characteristics. By adjusting its input parameters, MalGen can produce datasets ranging from a few hundred gigabytes to multiple terabytes within hours, writing directly to HDFS so that benchmark runs can start immediately.
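A minimal single-machine sketch of the generation model described above: exponential inter-arrival times with rate λ yield a Poisson process per site, and each event is marked with probability p. The hot-spot parameters (`hot_fraction`, `hot_multiplier`) are hypothetical knobs for illustrating skew, not MalGen's actual options, and MalGen itself runs in parallel and writes to HDFS rather than building a list in memory.

```python
import random
from typing import Iterator, List, Tuple

Event = Tuple[float, str, bool]  # (timestamp, site_id, marked)


def site_events(site_id: str, rate: float, mark_prob: float,
                horizon: float, rng: random.Random) -> Iterator[Event]:
    """Yield one site's events on [0, horizon).

    Exponential inter-arrival times with mean 1/rate make the arrivals a
    homogeneous Poisson process; each event is marked by an independent
    Bernoulli(mark_prob) trial.
    """
    t = rng.expovariate(rate)
    while t < horizon:
        yield t, site_id, rng.random() < mark_prob
        t += rng.expovariate(rate)


def generate(num_sites: int, base_rate: float, mark_prob: float, horizon: float,
             hot_fraction: float = 0.05, hot_multiplier: float = 20.0,
             seed: int = 0) -> List[Event]:
    """Generate events for all sites, with a hypothetical hot-spot skew."""
    rng = random.Random(seed)
    events: List[Event] = []
    for i in range(num_sites):
        # With probability hot_fraction, a site becomes a hot spot with a scaled-up rate.
        is_hot = rng.random() < hot_fraction
        rate = base_rate * (hot_multiplier if is_hot else 1.0)
        events.extend(site_events(f"site-{i}", rate, mark_prob, horizon, rng))
    events.sort()  # order by timestamp, as in a merged log
    return events


if __name__ == "__main__":
    log = generate(num_sites=100, base_rate=0.5, mark_prob=0.02, horizon=1_000.0)
    print(len(log), sum(marked for _, _, marked in log))
```

Seeding a `random.Random` instance makes runs reproducible; a parallel variant could give each worker its own seed and a disjoint range of site IDs, though that partitioning scheme is an assumption rather than a description of MalGen.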

The experimental evaluation is carried out on an Amazon EC2 Hadoop cluster with configurations of 10, 20, and 40 m1.large instances (2 vCPU, 7.5 GB RAM each). Three data sizes are tested: 100 GB, 500 GB, and 1 TB. Both Java MapReduce and Hadoop Streaming (Python) implementations of the two queries are benchmarked. The authors measure total execution time, CPU utilization, disk I/O, network traffic, and job success rate. Results show near‑linear scalability: doubling the number of nodes reduces execution time by roughly 45–55 %. MalStone B consistently takes about 1.8 × longer than MalStone A because of the additional window processing, and it exhibits higher memory pressure during the reduce phase. Compared with Terasort on the same hardware, MalStone consumes 70 % or more CPU and generates 2–3 × the disk I/O, confirming that it stresses a broader set of system resources. Moreover, a site‑ID‑based partitioning strategy mitigates data skew and improves runtime by about 12 %.
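The scaling claim can be checked with simple arithmetic. If doubling the node count cuts runtime by a fraction r, the speedup is 1/(1 − r) and the parallel efficiency is that speedup divided by 2. The helper below is an illustrative calculation, not part of the benchmark.

```python
from typing import Tuple


def scaling_from_reduction(reduction: float) -> Tuple[float, float]:
    """Return (speedup, efficiency) when doubling nodes cuts runtime by `reduction`.

    `reduction` is a fraction in [0, 1): 0.5 means the runtime halves.
    """
    speedup = 1.0 / (1.0 - reduction)
    efficiency = speedup / 2.0  # 1.0 means perfectly linear scaling
    return speedup, efficiency


for r in (0.45, 0.50, 0.55):
    s, e = scaling_from_reduction(r)
    print(f"{r:.0%} shorter runtime -> speedup {s:.2f}x, efficiency {e:.0%}")
```

A 45–55 % reduction therefore corresponds to roughly 91–111 % efficiency; the upper end implies a slightly superlinear speedup.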

In the discussion, the authors argue that MalStone’s well‑defined workload makes it suitable for comparing not only Hadoop MapReduce but also newer in‑memory and streaming platforms such as Apache Spark and Apache Flink. They suggest extending the benchmark with real‑world log traces from domains like web traffic or financial transactions, and adding a real‑time streaming variant to assess low‑latency processing. Finally, they propose normalizing benchmark results into a standardized score that cloud providers could use in service‑level agreements (SLAs).
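The summary does not say how such a score would be computed. One common convention, used for example by the SPEC CPU benchmarks, is the geometric mean of per-workload ratios of a reference runtime to the measured runtime. The sketch below assumes that convention; the workload keys and runtimes are hypothetical.

```python
import math
from typing import Mapping


def normalized_score(measured: Mapping[str, float], reference: Mapping[str, float]) -> float:
    """Return the geometric mean of reference/measured runtime ratios.

    A score above 1.0 means the system under test is faster than the
    reference system on average; below 1.0 means it is slower.
    """
    if not measured or set(measured) != set(reference):
        raise ValueError("measured and reference must cover the same, non-empty set of workloads")
    log_ratios = [math.log(reference[k] / measured[k]) for k in measured]
    return math.exp(sum(log_ratios) / len(log_ratios))


# Hypothetical runtimes in seconds for the two queries on one dataset size.
reference_runtimes = {"malstone_a": 3600.0, "malstone_b": 6500.0}
measured_runtimes = {"malstone_a": 1800.0, "malstone_b": 5200.0}
print(f"score = {normalized_score(measured_runtimes, reference_runtimes):.2f}")
```

With a geometric mean, the ratio between two systems' scores does not depend on which system is chosen as the reference, which is one reason the convention is popular for cross-vendor comparisons.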

In conclusion, MalStone, together with the MalGen data generator, offers a reproducible, scalable, and data‑mining‑oriented benchmark that fills a critical gap left by traditional sorting‑centric benchmarks. By focusing on aggregate‑and‑window analytics over massive, skewed event streams, it provides a more realistic measure of how well cloud middleware supports the end‑to‑end workloads encountered in modern big‑data analytics.
