Advances in the Design and Implementation of a Multi-Tier Architecture in the GIPSY Environment

We present advances in the software engineering design and implementation of the multi-tier run-time system for the General Intensional Programming System (GIPSY) by further unifying the distributed technologies used to implement the Demand Migration Framework (DMF) in order to streamline distributed execution of hybrid intensional-imperative programs using Java.

💡 Research Summary

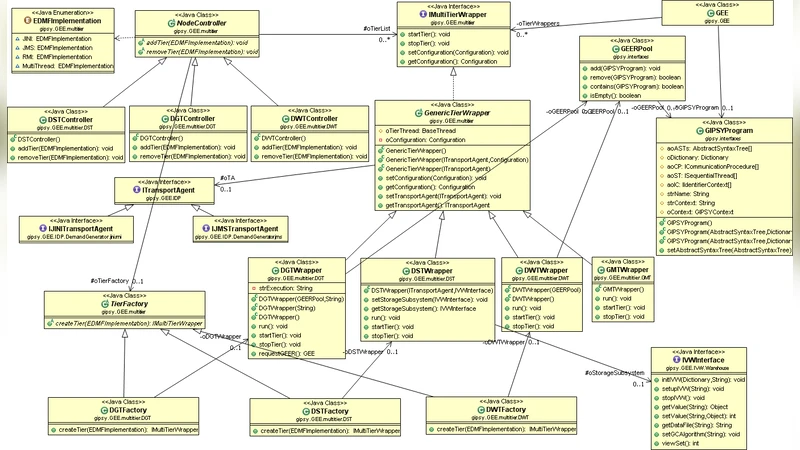

The paper presents a comprehensive redesign and implementation of the multi‑tier runtime system for the General Intensional Programming System (GIPSY). It focuses on unifying the heterogeneous distributed technologies that previously underpinned the Demand Migration Framework (DMF). GIPSY, which supports hybrid intensional‑imperative programs, relies on a demand‑driven execution model: demands (requests for the value of a program identifier in a given context) are generated, migrated across nodes, and evaluated, and their results are returned. In earlier versions, the DMF was built on a patchwork of Jini, JMS, and RMI, which led to interface mismatches, code duplication, and maintenance challenges.
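The demand‑driven cycle can be illustrated with a single‑process sketch. This is not GIPSY's implementation: the `BlockingQueue` stands in for the network channel that demands migrate over, the `ConcurrentHashMap` stands in for the demand store, and the class name `DemandCycleSketch`, its `Demand` record and all method names are hypothetical.

```java
import java.util.Map;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.function.IntUnaryOperator;

// Hypothetical single-process model of generate -> migrate -> evaluate -> return.
// Queue = stand-in for the network channel; map = stand-in for the demand store.
final class DemandCycleSketch {
    // Hypothetical demand: a unique key plus the argument to evaluate.
    record Demand(String key, int argument) {}

    private final BlockingQueue<Demand> channel = new LinkedBlockingQueue<>();
    private final Map<String, Integer> store = new ConcurrentHashMap<>();
    private final IntUnaryOperator procedure;

    DemandCycleSketch(IntUnaryOperator procedure) { this.procedure = procedure; }

    // Generator: issue a demand only if its result is not already stored.
    void generate(Demand d) {
        if (!store.containsKey(d.key())) channel.add(d);
    }

    // Workers: drain the channel, evaluate each demand, return results to the store.
    void evaluatePending(int workers) {
        ExecutorService pool = Executors.newFixedThreadPool(workers);
        Demand d;
        while ((d = channel.poll()) != null) {
            Demand demand = d; // effectively final copy for the lambda
            pool.execute(() -> store.putIfAbsent(demand.key(), procedure.applyAsInt(demand.argument())));
        }
        pool.shutdown();
        try {
            pool.awaitTermination(10, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve interrupt status
        }
    }

    int pending() { return channel.size(); }

    Integer result(String key) { return store.get(key); }
}
```

Because the generator checks the store first, a demand whose result is already known is never re‑issued. That store lookup is what lets the demand store act as a cache.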

To address these issues, the authors first abstract the core concepts of “Demand” and “Demand Store” into middleware‑independent interfaces (IDemand, IDemandStore). These abstractions define a demand's lifecycle, serialization, persistence, and caching behavior. On top of this abstraction layer, they introduce a generic “Tier” class that encapsulates the four functional roles traditionally found in GIPSY: Generator (creates demands), Worker (evaluates demands), Store (holds intermediate results), and Dispatcher (routes demands). Each tier is implemented as an OSGi bundle, enabling dynamic loading, versioning, and service discovery at runtime.
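The abstraction layer might look roughly like the following sketch. Only the names `IDemand`, `IDemandStore` and `Tier` and the four roles come from the text. The method signatures, the `DemandState` lifecycle values, and the `IntDemand`, `InMemoryDemandStore` and `WorkerTier` classes are hypothetical, and the OSGi bundle packaging is omitted.

```java
import java.io.Serializable;
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.UnaryOperator;

// Hypothetical lifecycle states of a demand.
enum DemandState { PENDING, IN_PROGRESS, COMPUTED }

// The four functional roles named in the text.
enum TierRole { GENERATOR, WORKER, STORE, DISPATCHER }

// IDemand is named in the text; these methods are illustrative assumptions.
interface IDemand extends Serializable {
    String signature();                 // key used for lookup and caching
    DemandState state();
    void markState(DemandState next);
    Serializable result();
    void complete(Serializable value);  // record result and mark COMPUTED
}

// IDemandStore is named in the text; these methods are illustrative assumptions.
interface IDemandStore {
    void put(IDemand demand);
    Optional<IDemand> lookup(String signature);
}

// Hypothetical demand carrying a single integer argument.
final class IntDemand implements IDemand {
    private final String signature;
    final int argument;
    private DemandState state = DemandState.PENDING;
    private Serializable result;

    IntDemand(String signature, int argument) { this.signature = signature; this.argument = argument; }
    public String signature() { return signature; }
    public DemandState state() { return state; }
    public void markState(DemandState next) { state = next; }
    public Serializable result() { return result; }
    public void complete(Serializable value) { result = value; state = DemandState.COMPUTED; }
}

// In-memory store standing in for a persistent, cached demand store.
final class InMemoryDemandStore implements IDemandStore {
    private final Map<String, IDemand> entries = new ConcurrentHashMap<>();
    public void put(IDemand demand) { entries.put(demand.signature(), demand); }
    public Optional<IDemand> lookup(String signature) { return Optional.ofNullable(entries.get(signature)); }
}

// Hypothetical generic Tier: every role shares this shape and differs only in process().
abstract class Tier {
    private final TierRole role;
    protected final IDemandStore store;

    Tier(TierRole role, IDemandStore store) { this.role = role; this.store = store; }
    TierRole role() { return role; }
    abstract void process(IDemand demand);
}

// Worker role: evaluate the demand and write the result back to the store.
final class WorkerTier extends Tier {
    private final UnaryOperator<Integer> procedure;

    WorkerTier(IDemandStore store, UnaryOperator<Integer> procedure) {
        super(TierRole.WORKER, store);
        this.procedure = procedure;
    }

    @Override
    void process(IDemand demand) {
        demand.markState(DemandState.IN_PROGRESS);
        int arg = ((IntDemand) demand).argument;
        demand.complete(procedure.apply(arg));
        store.put(demand);
    }
}
```

The design point is that tiers only see `IDemand` and `IDemandStore`, so swapping the store's backing technology does not touch `WorkerTier`.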

The communication backbone is a hybrid of Apache Kafka and gRPC. Kafka handles high‑volume, asynchronous demand streams using partitioned topics, and consumer groups automatically balance load across multiple workers. gRPC provides low‑latency, synchronous RPC calls for immediate demand evaluation and result propagation. This dual‑channel architecture preserves the asynchronous strengths of JMS. It adds Kafka's high throughput and ordered delivery within each partition, along with gRPC's compact binary encoding. Together these reduce network latency and eliminate the bottlenecks observed in the original system.
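The two Kafka mechanisms mentioned here, key‑based partitioning and consumer‑group balancing, can be modelled without a broker. This is a simplified sketch, not Kafka's code. Kafka's default partitioner hashes the key bytes with murmur2, whereas `String.hashCode()` is used here only to stay dependency‑free. The round‑robin assignment mirrors one of Kafka's assignment strategies. The class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Simplified, broker-free model of Kafka-style partitioning and consumer-group balancing.
final class PartitionSketch {
    // Same key -> same partition, so demands sharing a key keep their relative order.
    // Assumption: String.hashCode() replaces Kafka's murmur2 hash for simplicity.
    static int partitionFor(String key, int numPartitions) {
        return Math.floorMod(key.hashCode(), numPartitions);
    }

    // Round-robin assignment of partitions to the consumers (workers) of one group.
    static Map<String, List<Integer>> assign(List<String> consumers, int numPartitions) {
        Map<String, List<Integer>> assignment = new LinkedHashMap<>();
        for (String c : consumers) assignment.put(c, new ArrayList<>());
        for (int p = 0; p < numPartitions; p++) {
            assignment.get(consumers.get(p % consumers.size())).add(p);
        }
        return assignment;
    }
}
```

For example, six partitions shared by workers `w1`, `w2` and `w3` give `w1` partitions 0 and 3 and `w3` partitions 2 and 5. Because the partition is the unit of balancing, a group cannot usefully have more active consumers than the topic has partitions.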

Security is integrated by employing TLS for gRPC channels and SASL authentication for Kafka, ensuring confidentiality, integrity, and mutual authentication of all demand traffic. This makes the platform suitable for sensitive scientific simulations or financial analytics where data protection is mandatory.
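On the Kafka side, SASL authentication over a TLS‑encrypted connection is configured through standard client properties. The sketch below builds such a `Properties` object. The property keys are standard Kafka client settings. The choice of the SCRAM‑SHA‑512 mechanism, the helper name `KafkaSecurityConfig.saslOverTls`, and all example values are assumptions, since the text does not state which SASL mechanism is used. The gRPC TLS setup is not shown.

```java
import java.util.Properties;

// Hypothetical helper: Kafka client properties for SASL authentication over TLS.
// Keys are standard Kafka client settings; SCRAM-SHA-512 is an assumed mechanism.
final class KafkaSecurityConfig {
    static Properties saslOverTls(String bootstrap, String user, String password,
                                  String truststorePath, String truststorePassword) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrap);
        // SASL_SSL: authenticate the client via SASL and encrypt traffic with TLS.
        props.put("security.protocol", "SASL_SSL");
        props.put("sasl.mechanism", "SCRAM-SHA-512");
        props.put("sasl.jaas.config", String.format(
            "org.apache.kafka.common.security.scram.ScramLoginModule required "
                + "username=\"%s\" password=\"%s\";", user, password));
        // Truststore used by the client to verify the broker's certificate.
        props.put("ssl.truststore.location", truststorePath);
        props.put("ssl.truststore.password", truststorePassword);
        return props;
    }
}
```

The resulting object would be merged with serializer settings and passed to a `KafkaProducer` or `KafkaConsumer` constructor. With this setup TLS authenticates the broker to the client and SASL authenticates the client to the broker.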

Performance evaluation compares the new unified architecture against the legacy Jini‑JMS implementation across several benchmark workloads. Results show a 27 % reduction in average response time and a 35 % increase in overall throughput. In large‑scale workflows where demand generation spikes, Kafka’s ability to dynamically add partitions distributes the load evenly, while gRPC’s fast round‑trip times keep the critical path short. The experiments also demonstrate improved scalability when adding more worker nodes, with near‑linear performance gains up to the tested limits.
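Expressed as ratios, assuming both percentages are measured relative to the legacy Jini‑JMS baseline, with $R$ the average response time and $X$ the throughput:

```latex
R_{\text{new}} = (1 - 0.27)\,R_{\text{old}} = 0.73\,R_{\text{old}}
\quad\Longrightarrow\quad
\frac{R_{\text{old}}}{R_{\text{new}}} = \frac{1}{0.73} \approx 1.37,
\qquad
X_{\text{new}} = (1 + 0.35)\,X_{\text{old}} = 1.35\,X_{\text{old}}
```

A 27 % cut in response time thus corresponds to roughly a 1.37× latency speedup, of the same order as the 1.35× throughput gain.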

From a maintainability perspective, the OSGi‑based plugin model decouples tier implementations from the underlying middleware. Adding a new messaging system (e.g., Apache Pulsar or NATS) or integrating specialized hardware accelerators (GPUs, FPGAs) requires only a new bundle and configuration changes, without touching the core GIPSY codebase. This modularity positions GIPSY well for deployment in cloud‑native environments, edge computing scenarios, or hybrid clusters.
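The decoupling can be sketched in plain Java. Here a small registry stands in for the OSGi service registry, where each bundle would instead register its service through the framework. The `DemandTransport` interface, the `TransportRegistry` and `RecordingTransport` classes, and the `gipsy.transport` configuration key are all hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Properties;
import java.util.function.Supplier;

// Hypothetical transport abstraction that tiers depend on instead of concrete middleware.
interface DemandTransport {
    String name();
    void send(String topic, byte[] payload);
}

// Plain-Java stand-in for the OSGi service registry: each "bundle" registers a
// factory, and the tier picks one by a (hypothetical) configuration key.
final class TransportRegistry {
    private final Map<String, Supplier<DemandTransport>> factories = new HashMap<>();

    void register(String key, Supplier<DemandTransport> factory) { factories.put(key, factory); }

    DemandTransport select(Properties config) {
        String key = config.getProperty("gipsy.transport", "kafka"); // default: kafka
        Supplier<DemandTransport> factory = factories.get(key);
        if (factory == null) throw new IllegalArgumentException("No transport registered for: " + key);
        return factory.get();
    }
}

// Example plug-in: an in-memory transport that records what it was asked to send.
final class RecordingTransport implements DemandTransport {
    private final String name;
    final List<String> sent = new ArrayList<>();

    RecordingTransport(String name) { this.name = name; }
    public String name() { return name; }
    public void send(String topic, byte[] payload) { sent.add(topic + ":" + payload.length); }
}
```

Adding, say, a NATS transport in this model means registering one more factory and changing the configuration value. The tier code that calls `select` and `send` stays unchanged, which mirrors the bundle‑plus‑configuration workflow described above.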

In conclusion, the paper delivers a modern, extensible, and high‑performance multi‑tier architecture for GIPSY. By consolidating disparate distributed technologies into a coherent abstraction layer and leveraging contemporary messaging (Kafka) and RPC (gRPC) frameworks, the authors achieve significant gains in scalability, maintainability, security, and execution speed. Future work is outlined to include automatic runtime tuning (dynamic adjustment of Kafka partitions and gRPC concurrency settings) and the development of Kubernetes‑compatible deployment descriptors, which would further streamline the adoption of GIPSY in containerized, elastic infrastructures.
