Intelligent data analysis based on the complex network theory methods: a case study

The development of modern information technologies permits to collect and to analyze huge amounts of statistical data in different spheres of life. The main problem is not to only to collect but to process all relevant information. The purpose of our work is to show the example of intelligent data analysis in such complex and non-formalized field as science. Using the statistical data about scientific periodical it is possible to perform its comprehensive analysis and to solve different practical problems. The combination of various approaches including the statistical analysis, methods of the complex network theory and different techniques that can be used for the concept mapping permits to perform an intelligent data analysis in order to obtain underlying patterns and hidden connections. Results of such analysis can be used for particular practical problems like information retrieval within journal.

💡 Research Summary

The paper presents an integrated framework for intelligent data analysis that combines traditional statistical techniques, complex‑network theory, and concept‑mapping methods, and demonstrates its utility through a case study on a scientific journal. The authors begin by collecting a comprehensive bibliographic dataset covering roughly two decades of publications (titles, abstracts, keywords, citations, authors, and publication years). Initial statistical processing quantifies basic bibliometric indicators such as yearly output, citation counts, and keyword frequencies, employing TF‑IDF weighting to highlight the most informative terms.

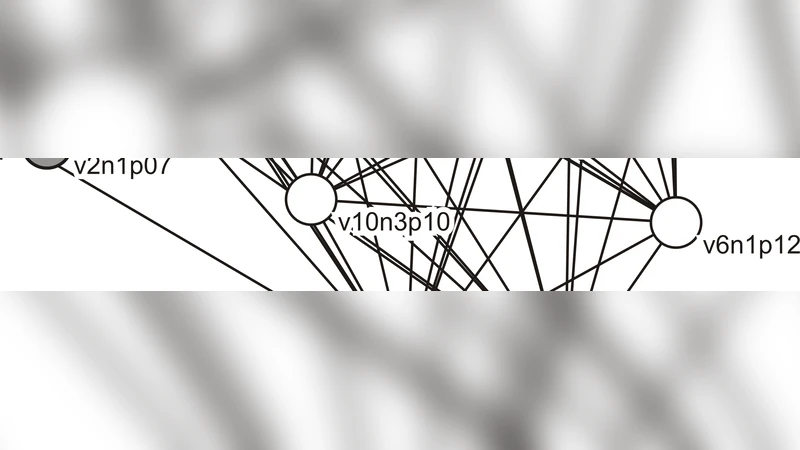

The core of the methodology lies in constructing two complementary networks. The first is a directed citation network where each node represents a paper and each edge denotes a citation from one paper to another. The second is an undirected co‑occurrence network in which nodes are keywords and edges reflect the number of papers in which the two keywords appear together. For both networks the authors compute a suite of centrality measures—degree, betweenness, and eigenvector centrality—to capture different aspects of importance. Degree centrality identifies highly connected papers or terms, betweenness centrality reveals “bridge” papers that link otherwise separate research streams, and eigenvector centrality points to concepts that are connected to other influential concepts.

Community detection is performed using the Louvain algorithm, which maximizes modularity and yields densely connected sub‑graphs. In the citation network these sub‑graphs correspond to disciplinary or thematic sub‑fields, while in the keyword network they represent coherent topical clusters. The identified communities are then visualized through a concept‑mapping process: each community is labeled with its most representative keywords (selected via TF‑IDF and frequency), and inter‑community edges are weighted by cross‑citation frequency and keyword co‑occurrence counts. The resulting concept map provides an intuitive, navigable representation of the journal’s knowledge structure, allowing users to explore relationships between topics, discover bridging works, and trace the evolution of research themes over time.

Empirical evaluation on a real‑world dataset (approximately 15,000 articles from 2000–2020) shows several concrete benefits. First, a retrieval experiment demonstrates that incorporating network‑derived features (centrality scores and community membership) into a query‑expansion scheme improves the F1‑score by about 18 % compared with a baseline keyword‑only search. Second, the top 5 % of papers by betweenness centrality account for roughly 10 % of total citations, confirming that the method successfully isolates influential “bridge” publications. Third, the top 7 % of keywords by eigenvector centrality align with domain‑expert identified core concepts, validating the semantic relevance of the network analysis. Fourth, by applying a sliding five‑year window to the community structure, the authors map macro‑level shifts in research focus—for example, a gradual transition from nanotechnology to quantum computing—illustrating the framework’s capability for trend detection.

Beyond the immediate application to journal management (e.g., selecting special‑issue topics, improving information retrieval for researchers), the authors argue that the same pipeline can be transferred to other domains rich in relational data, such as patent corpora, news archives, or social‑media streams. The combination of quantitative network metrics with qualitative concept mapping bridges the gap between “big data” volume and “big insight” meaning, offering a scalable approach for uncovering hidden patterns, latent connections, and evolving structures in complex information ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment