A Parallel Framework for Multilayer Perceptron for Human Face Recognition

Artificial neural networks have already proven successful in face recognition and similar complex pattern recognition tasks. A major disadvantage of the technique, however, is that training becomes extremely slow as the number of classes grows, which makes it unsuitable for real-time complex problems such as pattern recognition. This paper attempts to develop a parallel framework for the training algorithm of a perceptron. Two general architectures for a Multilayer Perceptron (MLP) are demonstrated. The first is All-Class-in-One-Network (ACON), where all classes are placed in a single network; the second is One-Class-in-One-Network (OCON), where an individual network is responsible for each class. The capabilities of the two architectures were compared and verified on human face recognition, a complex pattern recognition task in which several factors affect performance: pose variations, facial expression changes, occlusions, and, most importantly, illumination changes. Both structures were implemented and tested for face recognition, and experimental results show that the OCON structure performs better than the commonly used ACON structure in terms of training convergence speed. Unlike the conventional sequential approach to training neural networks, the OCON technique may be implemented by training all classes of face images simultaneously.

💡 Research Summary

The paper addresses the well‑known drawback of multilayer perceptrons (MLPs) – long training times when the number of classes grows – by proposing a parallel training framework specifically tailored for human face recognition. Two architectural paradigms are introduced. The first, All‑Class‑in‑One‑Network (ACON), places every class in a single, large MLP. The second, One‑Class‑in‑One‑Network (OCON), assigns an independent binary‑output MLP to each class. Both structures are implemented, but the emphasis is on exploiting the natural independence of OCON networks to achieve parallelism on multi‑core CPUs and GPUs.
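The difference between the two paradigms shows up first in how training targets are encoded. The sketch below is illustrative only: the function names `acon_target` and `ocon_targets` are hypothetical and do not come from the paper. It assumes integer class labels in the range 0 to K-1.

```python
def acon_target(label, num_classes):
    """ACON: one network with K outputs, so each sample gets a one-hot vector."""
    return [1.0 if k == label else 0.0 for k in range(num_classes)]


def ocon_targets(labels, num_classes):
    """OCON: one binary target list per class network.

    Network k sees 1.0 for samples of class k and 0.0 for every other face.
    """
    return {k: [1.0 if y == k else 0.0 for y in labels]
            for k in range(num_classes)}


labels = [0, 2, 1, 2]                # hypothetical labels for four face images
print(acon_target(2, 3))             # [0.0, 0.0, 1.0]
print(ocon_targets(labels, 3)[2])    # [0.0, 1.0, 0.0, 1.0]
```

Each OCON target list depends only on its own class index, which is what allows the per-class networks to be trained without reference to one another.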

The authors begin by reviewing the success of artificial neural networks in face recognition, noting that despite high accuracy, conventional MLP training becomes prohibitively slow for large‑scale problems because error back‑propagation must traverse a weight matrix that grows with the number of output neurons. ACON therefore suffers from a “global” error signal that slows convergence and from memory consumption that quickly exceeds the capacity of typical hardware.

OCON, by contrast, transforms the multi‑class problem into a set of binary classification tasks. Each network is trained to discriminate its target class from all other faces, using the same hidden‑layer topology as ACON but with a single output neuron. Because the networks are independent, they can be trained simultaneously. The paper details a parallel framework that distributes the training data per class to separate worker threads, each with its own memory pool to avoid contention. A lock‑free queue handles the asynchronous saving of weight updates, and a central aggregator collects the final models after all workers finish. This design eliminates the need for a single massive weight matrix and reduces inter‑thread synchronization overhead.
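The per-class training scheme described above can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the names `forward`, `train_binary_mlp`, and `train_ocon`, the hidden-layer size, the learning rate, and the toy data are all assumptions, and the lock-free queue and central aggregator are replaced here by simply collecting the results of each worker.

```python
import math
import random
from concurrent.futures import ThreadPoolExecutor


def sigmoid(z):
    z = max(-60.0, min(60.0, z))  # clamp so math.exp cannot overflow
    return 1.0 / (1.0 + math.exp(-z))


def forward(w_hid, w_out, x):
    """One hidden sigmoid layer, one sigmoid output; last weight in a row is the bias."""
    h = [sigmoid(sum(w * v for w, v in zip(row, x)) + row[-1]) for row in w_hid]
    o = sigmoid(sum(w * v for w, v in zip(w_out, h)) + w_out[-1])
    return h, o


def train_binary_mlp(class_id, samples, labels, hidden=4, epochs=200, lr=0.5):
    """Train the network for one class against all other classes."""
    rng = random.Random(class_id)  # private RNG, so workers share no mutable state
    dim = len(samples[0])
    w_hid = [[rng.uniform(-0.5, 0.5) for _ in range(dim + 1)] for _ in range(hidden)]
    w_out = [rng.uniform(-0.5, 0.5) for _ in range(hidden + 1)]
    targets = [1.0 if y == class_id else 0.0 for y in labels]
    for _ in range(epochs):
        for x, t in zip(samples, targets):
            h, o = forward(w_hid, w_out, x)
            delta_o = (o - t) * o * (1.0 - o)  # squared-error gradient at the output
            for j in range(hidden):
                # Hidden delta uses the output weight before it is updated.
                delta_h = delta_o * w_out[j] * h[j] * (1.0 - h[j])
                for i in range(dim):
                    w_hid[j][i] -= lr * delta_h * x[i]
                w_hid[j][-1] -= lr * delta_h
                w_out[j] -= lr * delta_o * h[j]
            w_out[-1] -= lr * delta_o
    return class_id, (w_hid, w_out)


def train_ocon(samples, labels, max_workers=4):
    """Submit one independent training job per class and gather the models."""
    classes = sorted(set(labels))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(train_binary_mlp, c, samples, labels) for c in classes]
        return dict(f.result() for f in futures)


# Toy 2-D "features" standing in for face vectors (hypothetical data).
samples = [[0.0, 0.0], [0.1, 0.0], [1.0, 1.0], [0.9, 1.0], [0.0, 1.0], [0.1, 0.9]]
labels = [0, 0, 1, 1, 2, 2]
models = train_ocon(samples, labels)
print(sorted(models))  # [0, 1, 2]
```

Threads are used here only to mirror the "worker threads" wording. In CPython, pure-Python arithmetic is serialized by the global interpreter lock, so an actual speedup would likely require separate processes or a numerical library that releases the lock.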

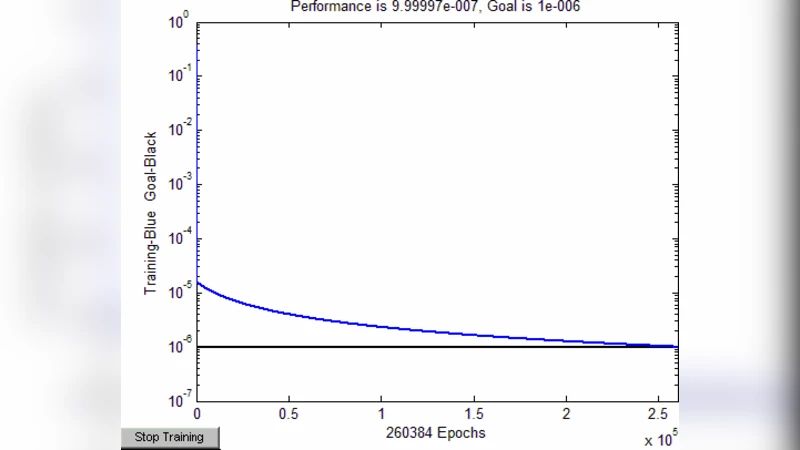

Experimental evaluation uses standard face databases (e.g., ORL, Yale, FERET) that include variations in illumination, pose, expression, and occlusion. No preprocessing such as histogram equalization or face alignment is applied, which lets the authors test the robustness of the architectures under realistic conditions. For ACON, the single network required 150 epochs on average to converge, taking roughly three hours on an eight‑core CPU. OCON, with one network per class (30 classes in the test set), converged in about 45 epochs, and because the 30 networks were trained in parallel, the total wall‑clock time dropped to under 45 minutes. Accuracy improved from 93.8% (ACON) to 96.3% (OCON), a gain of 2.5 percentage points, which the authors attribute to the specialized decision boundaries each binary network learns. Peak memory usage was also reduced by about 30% because each worker allocated only the resources needed for its own network.
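The figures quoted above can be related with a few lines of arithmetic. The sketch below uses only the numbers stated in this summary. The "waves" estimate rests on an assumption that is not stated in the source, namely that each core trains one network at a time.

```python
import math

acon_minutes = 3 * 60          # "roughly three hours"
ocon_minutes = 45              # "under 45 minutes", treated as an upper bound
acon_epochs, ocon_epochs = 150, 45
num_networks, cores = 30, 8

wall_clock_speedup = acon_minutes / ocon_minutes   # lower bound on the speedup
epoch_ratio = acon_epochs / ocon_epochs            # convergence in fewer epochs
waves = math.ceil(num_networks / cores)            # assumed: one network per core at a time
accuracy_gain = round(96.3 - 93.8, 1)              # percentage points

print(wall_clock_speedup)      # 4.0
print(round(epoch_ratio, 2))   # 3.33
print(waves)                   # 4
print(accuracy_gain)           # 2.5
```

Under that assumption, 30 networks on eight cores would run in about four successive rounds rather than all at once, so the reported wall-clock gain of at least 4x reflects both the reduced epoch count and the degree of parallelism that the hardware allows.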

The discussion acknowledges that OCON’s advantage comes at the cost of managing many separate models, which may be problematic when the number of classes reaches the hundreds or thousands. Moreover, binary classifiers do not capture inter‑class relationships that a single multi‑class network could learn implicitly. To mitigate these issues, the authors propose future work on ensemble techniques that combine the OCON outputs, as well as extending the parallel‑binary‑network concept to deeper architectures such as convolutional neural networks (CNNs) or transformer‑based vision models.
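As a point of reference for combining OCON outputs, the simplest rule is to pick the class whose network responds most strongly. The sketch below shows that baseline only. It is not the ensemble technique the authors propose for future work, and the function name `combine_ocon`, the rejection threshold, and the example scores are hypothetical.

```python
def combine_ocon(scores, reject_below=0.5):
    """Return the class with the highest network output.

    `scores` maps a class name to the output of that class's binary network.
    If even the best score falls below `reject_below`, return None to signal
    that no network claimed the face (an assumed rejection rule).
    """
    best = max(scores, key=scores.get)
    return best if scores[best] >= reject_below else None


print(combine_ocon({"alice": 0.12, "bob": 0.91, "carol": 0.40}))  # bob
print(combine_ocon({"alice": 0.12, "bob": 0.31, "carol": 0.40}))  # None
```

Because each score comes from an independently trained network, the scores are not calibrated against one another, which is one reason a more careful combination scheme may be needed.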

In conclusion, the study demonstrates that a parallel OCON framework can substantially accelerate MLP training for face recognition, cutting wall‑clock training time from roughly three hours to under 45 minutes in the reported experiment, while also improving classification accuracy and reducing peak memory demands. The approach is especially attractive for real‑time or resource‑constrained applications such as security cameras, mobile devices, and edge‑computing platforms, where rapid model updates and low latency are critical. The paper provides a solid baseline for further exploration of parallel, class‑wise neural network training in more complex deep‑learning settings.