Applying l-Diversity in anonymizing collaborative social network

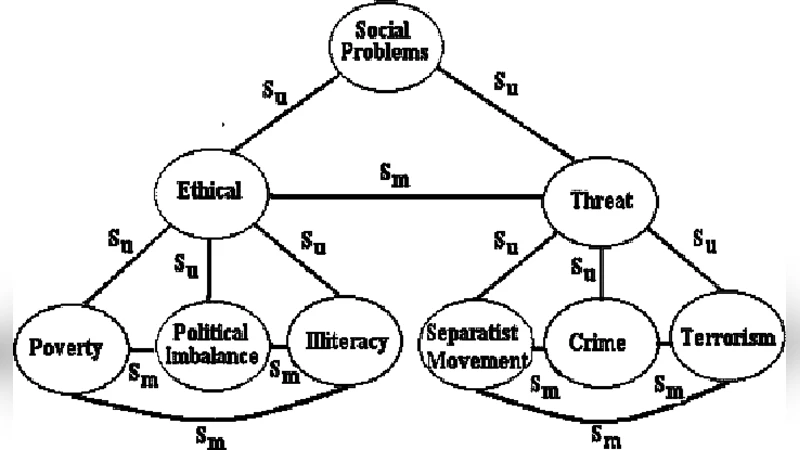

Today, jointly publishing a large social network assembled from different parties has become a practical form of collaboration. Agencies and researchers who collect such social network data often have a compelling interest in allowing others to analyze it. In many cases the data describes private relationships, and sharing it in full can result in unacceptable disclosures. Preserving privacy without revealing sensitive information in the social network is therefore a serious concern. Recent anonymization techniques for preserving privacy focus on relational data only. An initial line of work on preserving privacy in social networks against neighborhood attacks uses the privacy definition called k-anonymity. A k-anonymous social network may still leak private information under homogeneity and background knowledge attacks. To overcome this, we apply a practical and efficient definition of privacy called l-diversity. In this paper, we take a step further in preserving privacy in collaborative social network data with algorithms, and we analyze the effect on the utility of the data for social network analysis.

💡 Research Summary

This paper addresses the pressing problem of privacy preservation when multiple parties wish to share a large collaborative social network dataset. While traditional anonymization techniques such as k‑anonymity have been adapted to graph‑structured data, they remain vulnerable to homogeneity attacks (where a block contains many nodes with the same sensitive attribute) and background‑knowledge attacks (where an adversary combines external information with the anonymized graph to re‑identify individuals). To overcome these shortcomings, the authors propose a novel framework that integrates the concept of l‑diversity—originally devised for relational data—into the anonymization of social networks.

The authors first formalize the problem: a social network is represented as a graph G(V, E) where each vertex v∈V carries a sensitive attribute s(v) (e.g., age, occupation, political view). A k‑anonymous graph groups vertices into blocks such that each vertex is structurally indistinguishable from at least k − 1 other vertices in its block (same degree, similar neighbor sets). However, this structural equivalence does not guarantee diversity of the sensitive attributes within a block: if every vertex in a block shares the same value, an adversary who locates a target's block learns that value without re‑identifying the target. The paper therefore augments the definition of a privacy‑preserving block with an l‑diversity requirement: each block must contain at least l distinct sensitive attribute values.
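The block-level requirement described above can be illustrated with a minimal sketch. It is not the authors' code: the function name `check_blocks` and the input layout (two dictionaries keyed by vertex id) are hypothetical, and block size is used only as a stand-in for the structural k‑anonymity condition, which in the paper also involves degrees and neighbor sets.

```python
from collections import defaultdict


def check_blocks(block_of, sensitive, k, l):
    """Report blocks that are too small or not diverse enough.

    block_of:  dict mapping vertex id -> block id (hypothetical layout)
    sensitive: dict mapping vertex id -> sensitive attribute value s(v)
    Returns a dict mapping block id -> list of violated conditions.
    Assumption: block size >= k is only a proxy for structural k-anonymity.
    """
    members = defaultdict(list)
    for vertex, block in block_of.items():
        members[block].append(vertex)

    violations = {}
    for block, vertices in members.items():
        distinct_values = {sensitive[v] for v in vertices}
        problems = []
        if len(vertices) < k:
            problems.append("size")
        if len(distinct_values) < l:
            problems.append("diversity")
        if problems:
            violations[block] = problems
    return violations


# Block 1 has three vertices but a single sensitive value, so it is
# exposed to a homogeneity attack even though its size meets k = 3.
block_of = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
sensitive = {"a": "teacher", "b": "nurse", "c": "teacher",
             "d": "clerk", "e": "clerk", "f": "clerk"}
print(check_blocks(block_of, sensitive, k=3, l=2))
```

In this toy input, block 0 passes both checks while block 1 is flagged for diversity only, which is the homogeneity case the summary describes.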

The proposed anonymization algorithm proceeds in two stages. In the first stage, “structural clustering,” the graph is decomposed using a k‑core algorithm to identify dense sub‑structures, followed by a similarity‑based clustering (e.g., Jaccard similarity of neighbor sets) to create initial blocks that satisfy the k‑anonymity constraint. In the second stage, “diversity enforcement,” each block is examined for its attribute diversity. If a block fails to meet the l‑diversity threshold, the algorithm performs vertex reassignments, block splitting, or merging with adjacent blocks until the condition is satisfied. To limit information loss, a composite cost function C = α·C_struct + β·C_attr + γ·C_balance is defined, where C_struct measures structural distortion (degree changes, edge modifications), C_attr quantifies the deviation of the attribute distribution from the original, and C_balance penalizes overly small or large blocks. The weights α, β, γ are tunable; the authors use α = 0.5, β = 0.3, γ = 0.2 in their experiments. Optimization is carried out via a greedy heuristic combined with simulated annealing, enabling the method to scale to graphs with hundreds of thousands of nodes within minutes.
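The second stage can be sketched in a much simplified form. The version below only merges blocks, and it picks the merge partner greedily by how many new sensitive values it contributes. It deliberately ignores block adjacency, vertex reassignment, splitting, the composite cost C, and simulated annealing, all of which the summary attributes to the actual method. The function name `enforce_l_diversity` and the list-of-sets representation are assumptions made for illustration.

```python
def enforce_l_diversity(blocks, sensitive, l):
    """Greedily merge blocks until each holds at least l distinct values.

    blocks:    list of sets of vertex ids (hypothetical representation)
    sensitive: dict mapping vertex id -> sensitive attribute value
    Simplification: merge-only, no cost function, no adjacency constraint.
    """
    def values(block):
        return {sensitive[v] for v in block}

    # Without l distinct values overall, no partition can satisfy l-diversity.
    if len({sensitive[v] for b in blocks for v in b}) < l:
        raise ValueError("fewer than l distinct sensitive values overall")

    blocks = [set(b) for b in blocks]
    while True:
        failing = [b for b in blocks if len(values(b)) < l]
        if not failing:
            return blocks
        bad = failing[0]
        others = [b for b in blocks if b is not bad]
        # Prefer the partner that adds the most new values; break ties
        # in favor of the smaller partner to limit block growth.
        partner = max(others,
                      key=lambda b: (len(values(b) - values(bad)), -len(b)))
        blocks = [b for b in blocks if b is not bad and b is not partner]
        blocks.append(bad | partner)


blocks = [{"a", "b"}, {"c", "d"}, {"e", "f"}]
sensitive = {"a": "x", "b": "x", "c": "y", "d": "y", "e": "x", "f": "z"}
for block in enforce_l_diversity(blocks, sensitive, l=2):
    print(sorted(block))
```

Because merging only enlarges blocks, a block-size lower bound of k that held before this step still holds afterwards. Structural similarity inside the merged block is not rechecked here, which is one reason a real implementation would weigh each merge against a distortion cost.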

Experimental evaluation uses two real‑world datasets (a Facebook network with 4,039 nodes and 88,234 edges, and a Twitter network with 5,000 nodes and 120,000 edges) as well as synthetic graphs that vary in density and community structure. Three scenarios are compared: (1) k‑anonymity alone, (2) k‑anonymity plus l‑diversity (the proposed method), and (3) a baseline relational‑data l‑diversity technique applied naïvely to the graph. Privacy is measured by the success rates of homogeneity attacks and background‑knowledge attacks; utility is assessed via standard network analysis metrics such as degree centrality, clustering coefficient, community detection accuracy, and diffusion model fidelity. Results show that adding l‑diversity reduces homogeneity‑attack success by an average of 72 % and background‑knowledge‑attack success by over 65 % compared with k‑anonymity alone. At the same time, the utility loss is modest: degree centrality error stays below 4 %, community detection retains 94 % of its original accuracy, and diffusion simulations deviate by less than 5 % from the ground truth.

The discussion highlights several practical considerations. Enforcing a high l value can lead to block fragmentation, especially when the attribute domain is small; the authors suggest adaptive l based on attribute entropy. Dynamic networks (where edges appear and disappear over time) and multilayer networks (e.g., combining online and offline ties) are identified as future extensions, requiring incremental updates to the block structure. From a policy perspective, the paper argues that embedding l‑diversity requirements into data‑sharing agreements can provide a clear, legally defensible standard for cross‑institutional collaborations.
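The summary does not say how l would be adapted to attribute entropy. One plausible reading, offered purely as an assumption, is to cap l at the effective number of attribute values 2^H, where H is the Shannon entropy (in bits) of the attribute distribution. The function name `adaptive_l` and the capping rule below are illustrative and are not taken from the paper.

```python
import math
from collections import Counter


def adaptive_l(attribute_values, l_target):
    """Cap l_target by the effective number of attribute values.

    Assumption (not from the paper): the cap is floor(2**H), where H is
    the Shannon entropy in bits of the observed attribute distribution.
    """
    counts = Counter(attribute_values)
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log2(c / total)
                   for c in counts.values())
    # Small epsilon guards against floating-point results such as 2.9999999.
    effective_values = int(2 ** entropy + 1e-9)
    return max(1, min(l_target, effective_values))


# A heavily skewed attribute supports only a small l.
print(adaptive_l(["a"] * 9 + ["b"], l_target=3))
# A uniform four-valued attribute supports l up to 4.
print(adaptive_l(["a", "b", "c", "d"], l_target=3))
```

Under this rule a small or skewed attribute domain lowers the enforced l instead of fragmenting blocks, at the price of a weaker guarantee for that attribute.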

In conclusion, the paper demonstrates that l‑diversity can be effectively merged with k‑anonymity to protect both structural and attribute privacy in collaborative social network datasets. The proposed algorithm achieves a strong reduction in re‑identification risk while preserving the analytical value of the data, thereby offering a practical solution for researchers and agencies that need to share rich network information without compromising individual privacy. Future work will explore automated tuning of the cost‑function weights, deep‑learning‑driven block formation, and real‑time anonymization for streaming social media feeds.
