Integrating Real-Time Analysis With The Dendritic Cell Algorithm Through Segmentation

As an immune-inspired algorithm, the Dendritic Cell Algorithm (DCA) has been applied to a range of problems, particularly in the area of intrusion detection. Ideally, intrusion detection should be performed in real time, so that misuses are detected continuously and as soon as they occur. Consequently, the analysis process performed by an intrusion detection system must operate in real time or near real time. The analysis process of the DCA is currently performed offline; therefore, to improve the algorithm's performance, we suggest the development of a real-time analysis component. The initial step of this development is to apply segmentation to the DCA. This involves segmenting the current output of the DCA into slices and performing the analysis in various ways. Two segmentation approaches are introduced and tested in this paper, namely antigen based segmentation (ABS) and time based segmentation (TBS). The results of the corresponding experiments suggest that applying segmentation produces different, and in some cases significantly better, results when compared to the standard DCA without segmentation. Therefore, we conclude that segmentation is applicable to the DCA for the purpose of real-time analysis.

💡 Research Summary

The paper addresses a critical limitation of the Dendritic Cell Algorithm (DCA) when used for intrusion detection: its analysis phase is performed offline after all artificial dendritic cells (DCs) have matured. This offline step prevents the system from reacting to attacks in real time, which is essential for modern intrusion detection systems (IDS). To bridge this gap, the authors propose applying segmentation to the DCA’s output, thereby enabling continuous, near‑real‑time analysis. Two segmentation strategies are introduced: Antigen‑Based Segmentation (ABS) and Time‑Based Segmentation (TBS).

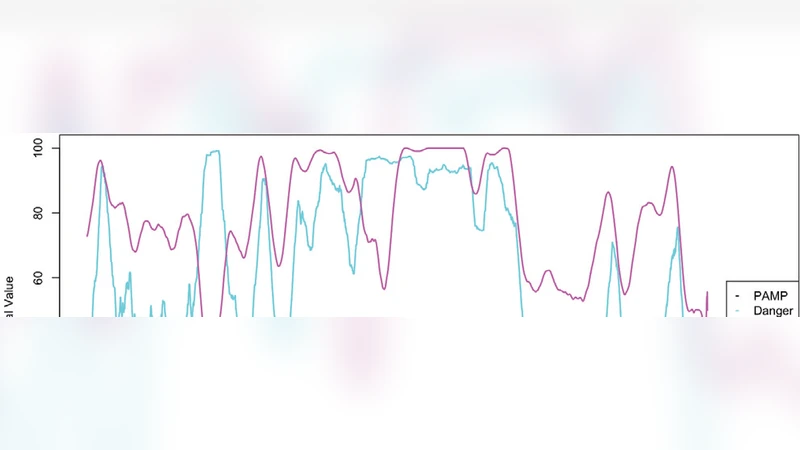

In ABS, the output stream is divided whenever a predefined number of antigens have been collected (e.g., 100 antigens per segment). This guarantees that each segment contains a comparable amount of classification evidence, but it can introduce latency when antigens appear sparsely. In TBS, the output is sliced at fixed time intervals (e.g., every 1 second). This approach ensures a bounded detection delay, but very short intervals may suffer from insufficient data, leading to noisy risk scores.
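The two slicing rules can be sketched as Python generators over the DCA's output stream. This is a minimal sketch, not the authors' implementation: the `Presentation` record (timestamp, antigen type, context value `k`) and the function names are hypothetical, the stream is assumed to be sorted by timestamp, and in this replay setting a time segment closes when the first record of the next window arrives, whereas a live system would close it on a timer.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Optional


@dataclass(frozen=True)
class Presentation:
    """One antigen instance presented by a migrated DC (hypothetical record type)."""
    timestamp: float  # time (s) at which the presenting cell migrated
    antigen: str      # antigen type, e.g. a process or connection identifier
    k: float          # context value of the presenting cell


def antigen_based_segments(
    stream: Iterable[Presentation], size: int
) -> Iterator[List[Presentation]]:
    """ABS: close a segment as soon as `size` antigen instances have been collected."""
    segment: List[Presentation] = []
    for p in stream:
        segment.append(p)
        if len(segment) == size:
            yield segment
            segment = []
    if segment:  # flush the trailing, partially filled segment
        yield segment


def time_based_segments(
    stream: Iterable[Presentation], interval: float
) -> Iterator[List[Presentation]]:
    """TBS: group records into fixed windows of `interval` seconds.

    Windows are aligned to the first record; windows with no records are skipped.
    """
    segment: List[Presentation] = []
    start: Optional[float] = None
    current_window: Optional[int] = None
    for p in stream:
        if start is None:
            start = p.timestamp
        window = int((p.timestamp - start) // interval)
        if current_window is not None and window != current_window:
            yield segment  # the previous window is complete
            segment = []
        current_window = window
        segment.append(p)
    if segment:
        yield segment
```

For example, with records at 0.0, 0.2, 0.4, 1.1, 1.5 and 2.7 s, ABS with `size=2` yields three segments of two records each, while TBS with `interval=1.0` yields segments of three, two, and one records. The ABS segment sizes are fixed but their closing times depend on antigen arrival; TBS closing times are fixed but segment sizes vary, which mirrors the latency and data-scarcity trade-off described above.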

Both methods retain the core DCA processing pipeline: signal transformation, accumulation of the CSM (costimulatory molecule) and k values within each cell, and the calculation of the anomaly score Kα for each antigen type. The only modification is that Kα is computed as soon as a segment is complete, rather than after the entire dataset has been processed.
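To make the pipeline concrete, the sketch below follows the dDCA as it is commonly described: each cell accumulates `CSM = d + s` and `k = d - 2s` from the danger (d) and safe (s) signals, migrates once its CSM reaches its own migration threshold, and presents its sampled antigens tagged with its k; Kα is then the mean k over the presented instances of antigen type α. The segmented variant emits Kα per antigen-based segment instead of once at the end. Everything else is an assumption for illustration: the `DC` class, the `k_alpha` and `run_segmented_ddca` helpers, the round-robin assignment of antigens to cells, and the input format of one (antigens, danger, safe) tuple per time step. Under the `k = d - 2s` convention, a positive Kα points to a more anomalous context.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, Iterable, Iterator, List, Sequence, Tuple

Step = Tuple[List[str], float, float]  # (antigens arriving, danger signal, safe signal)


@dataclass
class DC:
    """A single deterministic DC; illustrative, not the authors' code."""
    threshold: float  # migration threshold (varied across the population)
    csm: float = 0.0  # accumulated costimulatory molecule value
    k: float = 0.0    # accumulated context value
    antigens: List[str] = field(default_factory=list)

    def update(self, danger: float, safe: float) -> None:
        self.csm += danger + safe      # CSM = d + s
        self.k += danger - 2.0 * safe  # k = d - 2s


def k_alpha(presented: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Mean context value per antigen type over one segment."""
    total: Dict[str, float] = defaultdict(float)
    count: Dict[str, int] = defaultdict(int)
    for antigen, k in presented:
        total[antigen] += k
        count[antigen] += 1
    return {a: total[a] / count[a] for a in total}


def run_segmented_ddca(
    steps: Iterable[Step], thresholds: Sequence[float], segment_size: int
) -> Iterator[Dict[str, float]]:
    """Yield Kα for each antigen-based segment as soon as it is complete."""
    cells = [DC(t) for t in thresholds]
    buffer: List[Tuple[str, float]] = []
    next_cell = 0
    for antigens, danger, safe in steps:
        for a in antigens:  # assumption: round-robin antigen sampling
            cells[next_cell].antigens.append(a)
            next_cell = (next_cell + 1) % len(cells)
        for cell in cells:
            cell.update(danger, safe)
            if cell.csm >= cell.threshold:  # migrate: present antigens with this cell's k
                buffer.extend((a, cell.k) for a in cell.antigens)
                cell.csm, cell.k, cell.antigens = 0.0, 0.0, []
        while len(buffer) >= segment_size:
            segment, buffer = buffer[:segment_size], buffer[segment_size:]
            yield k_alpha(segment)
    if buffer:  # final partial segment
        yield k_alpha(buffer)
```

With a single cell of threshold 2.0 and `segment_size=2`, two danger-only steps for antigen `x` followed by two safe-only steps for antigen `y` yield Kα = 2.0 for `x` in the first segment and Kα = -4.0 for `y` in the second, each reported as soon as its segment fills rather than after the whole input.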

The experimental evaluation uses a real‑world dataset collected from a university network, consisting of normal traffic and malicious port‑scan activity. The deterministic version of DCA (dDCA) is employed with the same parameters as prior work (population size, migration thresholds, signal weights). The authors test multiple segment sizes: ABS with 50, 100, 150, and 200 antigens per segment; TBS with 0.5 s, 1 s, 2 s, and 5 s intervals. Performance is measured by detection rate, false‑positive rate, F1‑score, and average detection latency.
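The four reported metrics follow their standard definitions. The helpers below are a hypothetical sketch of how they might be computed from confusion counts and from attack-onset and first-alarm times; the level at which items are counted (here assumed to be antigen types) and all names are assumptions, and the counts in the test are toy values, not the paper's results.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class Confusion:
    """Confusion counts at the antigen-type level (hypothetical granularity)."""
    tp: int
    fp: int
    tn: int
    fn: int


def detection_rate(c: Confusion) -> float:
    """True-positive rate: fraction of anomalous items that were flagged."""
    return c.tp / (c.tp + c.fn)


def false_positive_rate(c: Confusion) -> float:
    """Fraction of normal items that were wrongly flagged."""
    return c.fp / (c.fp + c.tn)


def f1_score(c: Confusion) -> float:
    """Harmonic mean of precision and recall, written in count form."""
    return 2 * c.tp / (2 * c.tp + c.fp + c.fn)


def average_latency(onsets: Sequence[float], first_alarms: Sequence[float]) -> float:
    """Mean delay between each detected attack's onset and its first alarm."""
    return mean([alarm - onset for onset, alarm in zip(onsets, first_alarms)])
```

Latency is only defined for attacks that were detected, so `onsets` and `first_alarms` are assumed to be aligned lists covering detected attacks only.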

Key findings include:

- ABS achieves the highest detection rates (≥92 %) and the lowest false‑positive rates (≈4 %) when the segment contains at least 150 antigens, outperforming the baseline DCA (≈85 % detection, ≈8 % false positives). Smaller antigen counts lead to insufficient statistical evidence and a rise in false alarms.

- TBS shows optimal performance at a 1‑second interval, delivering a detection rate of 90 %, a false‑positive rate of 5 %, and an average latency of 0.9 seconds. Shorter intervals (0.5 s) reduce latency but cause a sharp increase in false positives due to data scarcity; longer intervals (5 s) improve accuracy but exceed acceptable real‑time bounds.

- Both segmentation approaches degrade when segments are too small. This confirms that the DCA's intrinsic diversity requires a minimum amount of data per segment to produce stable Kα values: because migration thresholds vary across cells, each cell samples over a different time window.

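The authors also discuss computational overhead. Segment-wise Kα computation is linear in the number of antigens per segment, so the total work over a stream stays linear in the number of antigens; however, each segment also carries its own overhead (computing and reporting Kα for every antigen type it contains), so higher segmentation frequencies increase CPU load. They suggest that practical deployment would benefit from hardware acceleration (GPU/FPGA) or adaptive segment sizing that balances latency against resource consumption.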
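The summary leaves the adaptive sizing policy open. One simple policy in that direction, shown purely as a hypothetical illustration and not taken from the paper, is a hybrid trigger that closes a segment when either a count cap (as in ABS) or a waiting-time cap (as in TBS) is reached first: `max_size` limits how often Kα must be recomputed, while `max_wait` bounds the delay caused by sparse antigens. The record layout and names are assumptions, and as in a replay setting the time cap is only checked when the next record arrives.

```python
from typing import Iterable, Iterator, List, Tuple

# Hypothetical record layout: (timestamp in seconds, antigen type, context value k)
Record = Tuple[float, str, float]


def hybrid_segments(
    stream: Iterable[Record], max_size: int, max_wait: float
) -> Iterator[List[Record]]:
    """Close a segment at `max_size` records or after `max_wait` seconds, whichever comes first."""
    segment: List[Record] = []
    start = 0.0  # timestamp of the first record in the open segment
    for rec in stream:
        t = rec[0]
        if segment and t - start >= max_wait:  # time cap hit before the count cap
            yield segment
            segment = []
        if not segment:
            start = t
        segment.append(rec)
        if len(segment) >= max_size:  # count cap hit
            yield segment
            segment = []
    if segment:
        yield segment
```

For records at 0.0, 0.1, 0.2, 0.3, 5.0 and 5.1 s with `max_size=3` and `max_wait=1.0`, the first segment closes on the count cap (three records), the lone record at 0.3 s is released by the time cap when the 5.0 s record arrives, and the last two records form the final segment.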

Beyond immediate performance gains, segmentation preserves the temporal ordering of DCA outputs, opening the possibility of feeding these fine‑grained risk scores into downstream time‑series anomaly detectors such as LSTM networks. This could transform the DCA from a standalone classifier into a component of a richer, multi‑stage real‑time threat‑intelligence pipeline.

In conclusion, the paper demonstrates that segmenting DCA output, either by antigen count or by elapsed time, enables near‑real‑time intrusion detection and, in some cases, yields statistically significant improvements over the standard offline DCA. The work lays a foundation for future research on dynamic segmentation policies, hardware‑accelerated implementations, and integration with advanced temporal models, ultimately aiming to deliver a fully real‑time, low‑latency IDS based on biologically inspired principles.
