A Framework for Constraint-Based Deployment and Autonomic Management of Distributed Applications (Extended Abstract)

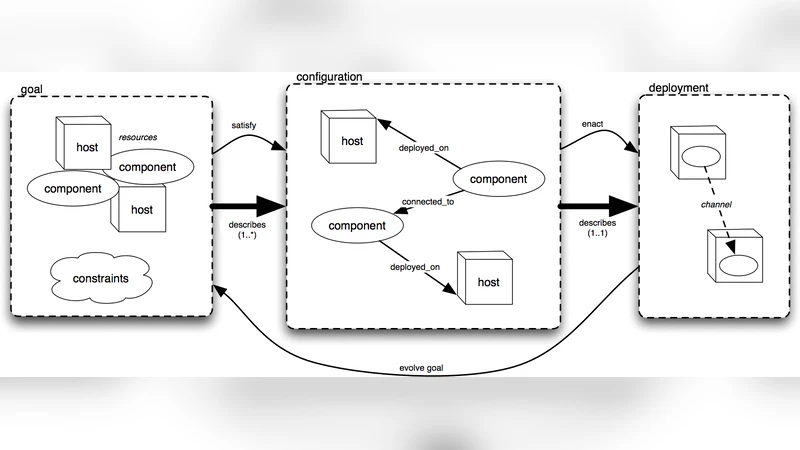

We propose a framework for the deployment and subsequent autonomic management of component-based distributed applications. An initial deployment goal is specified using a declarative constraint language, expressing constraints over aspects such as component-host mappings and component interconnection topology. A constraint solver is used to find a configuration that satisfies the goal, and the configuration is deployed automatically. The deployed application is instrumented to allow subsequent autonomic management. If, during execution, the manager detects that the original goal is no longer being met, the satisfy/deploy process can be repeated automatically in order to generate a revised deployment that does meet the goal.

💡 Research Summary

The paper presents a comprehensive framework that unifies the initial deployment and ongoing autonomic management of component‑based distributed applications through a constraint‑driven approach. The authors begin by defining a declarative constraint language that allows system architects to express high‑level deployment goals. These goals encompass component‑to‑host mappings, required inter‑component connections, replication factors, performance limits such as latency and bandwidth, and even security policies. By abstracting the desired state in this way, the framework separates “what” the system should achieve from “how” it should be realized.
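To make the "what" versus "how" separation concrete, here is a minimal sketch of a declarative goal written as plain data, with a checker that tests whether a candidate configuration meets it. The summary does not show the authors' constraint language, so the `Goal` fields, the `satisfies` function, and the component and host names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Goal:
    """Hypothetical declarative goal: states what must hold, not how to achieve it."""
    components: tuple        # names of the components to place
    hosts: tuple             # names of the available hosts
    connections: frozenset   # (source, target) links that must exist
    separate: frozenset      # pairs of components that must not share a host
    max_per_host: int        # upper bound on components per host


def satisfies(goal, mapping, wiring):
    """Return True if the component->host mapping and wiring meet the goal."""
    # Every component must be placed on a known host.
    if set(mapping) != set(goal.components):
        return False
    if any(host not in goal.hosts for host in mapping.values()):
        return False
    # Every required connection must be present in the wiring.
    wired = goal.connections <= wiring
    # Components declared separate must sit on different hosts.
    apart = all(mapping[a] != mapping[b] for a, b in goal.separate)
    # No host may exceed its component limit.
    placed = list(mapping.values())
    load_ok = all(placed.count(h) <= goal.max_per_host for h in goal.hosts)
    return wired and apart and load_ok


goal = Goal(
    components=("web", "app", "db"),
    hosts=("hostA", "hostB"),
    connections=frozenset({("web", "app"), ("app", "db")}),
    separate=frozenset({("web", "db")}),
    max_per_host=2,
)
wiring = frozenset({("web", "app"), ("app", "db")})
ok = satisfies(goal, {"web": "hostA", "app": "hostB", "db": "hostB"}, wiring)
bad = satisfies(goal, {"web": "hostA", "app": "hostA", "db": "hostA"}, wiring)
```

The goal object says nothing about how components get onto hosts; it only lets a checker, or a solver, decide whether a given configuration is acceptable.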

Once a goal is specified, the framework hands the constraint set to a generic constraint solver. The solver, implemented using an off‑the‑shelf SAT solver (MiniSat in the prototype), searches for a configuration that satisfies all constraints. The output is a concrete mapping of each component to a physical or virtual host, together with a wiring diagram that respects the declared topology. This configuration is then fed to an automated deployment engine that translates the mapping into executable actions (e.g., SSH commands, container launches, VM provisioning). The deployment engine is deliberately modular, allowing integration with different orchestration platforms such as Kubernetes, OpenStack, or traditional script‑based tools.
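The solving step can be illustrated with a small sketch. Exhaustive enumeration stands in here for the real solver; it is not the prototype's implementation, and the `solve` function, the constraints, and the host names are assumptions made for illustration only.

```python
from itertools import product


def solve(components, hosts, constraints):
    """Return the first component->host mapping that satisfies every constraint.

    Brute-force stand-in for a constraint solver: tries each assignment in turn.
    Returns None when no assignment satisfies the goal.
    """
    for assignment in product(hosts, repeat=len(components)):
        mapping = dict(zip(components, assignment))
        if all(check(mapping) for check in constraints):
            return mapping
    return None


components = ["web", "app", "db"]
hosts = ["hostA", "hostB"]
constraints = [
    lambda m: m["web"] != m["db"],  # hypothetical: front end and database on separate hosts
    lambda m: m["app"] == m["db"],  # hypothetical: application server co-located with database
]

mapping = solve(components, hosts, constraints)
# mapping is {"web": "hostA", "app": "hostB", "db": "hostB"}
```

Enumeration grows exponentially with the number of components, which is why a real deployment would delegate this step to a dedicated solver.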

Crucially, the deployed system is instrumented with lightweight monitoring agents that continuously collect runtime metrics (CPU load, network latency, service health, etc.). A central manager compares the observed state against the original constraints. If any constraint is violated, whether through host failure, network degradation, or unexpected load, the manager automatically triggers the same solve‑and‑deploy cycle. The solver computes a new feasible configuration, and the deployment engine repositions components accordingly, all without human intervention. This closed‑loop process embodies the autonomic management paradigm: self‑configuration, self‑healing, and self‑optimization.
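The closed loop can be sketched as a single management step: check the current mapping against the goal and the set of live hosts, and re-solve only when the goal is no longer met. This is an illustrative sketch, not the authors' manager; `goal_met`, `manage_step`, the brute-force `solve`, and the example constraint are hypothetical, and the actual redeployment is left out.

```python
from itertools import product


def solve(components, hosts, constraints):
    """Brute-force stand-in for the solver; returns a mapping or None."""
    for assignment in product(hosts, repeat=len(components)):
        mapping = dict(zip(components, assignment))
        if all(check(mapping) for check in constraints):
            return mapping
    return None


def goal_met(mapping, live_hosts, constraints):
    """The goal holds if every component sits on a live host and all constraints pass."""
    on_live_hosts = all(host in live_hosts for host in mapping.values())
    return on_live_hosts and all(check(mapping) for check in constraints)


def manage_step(mapping, live_hosts, components, constraints):
    """One pass of the manager: keep the mapping if valid, otherwise re-solve."""
    if goal_met(mapping, live_hosts, constraints):
        return mapping
    # Re-solve over the hosts that are still reachable.
    return solve(components, sorted(live_hosts), constraints)


components = ["web", "app", "db"]
constraints = [lambda m: m["app"] == m["db"]]  # hypothetical co-location constraint
current = {"web": "hostA", "app": "hostB", "db": "hostB"}

unchanged = manage_step(current, {"hostA", "hostB"}, components, constraints)
revised = manage_step(current, {"hostA"}, components, constraints)  # hostB has failed
```

With both hosts alive the mapping is returned untouched; after `hostB` fails, every component is reassigned to `hostA`. A result of `None` would signal that no configuration over the surviving hosts satisfies the goal.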

The authors validate the approach with a prototype implementation involving a three‑tier web application (web front‑end, application server, database) deployed across two physical hosts. After an intentional failure of one host, the monitoring subsystem detects the breach of the placement constraint, the solver generates a revised mapping that relocates the affected components to the surviving host, and the deployment engine carries out the migration within roughly thirty seconds. The experiment demonstrates rapid recovery and confirms that the constraint‑based method can adapt to dynamic changes in the underlying infrastructure.

The paper also discusses limitations and future work. As constraint models grow in size and complexity, solving time can become a bottleneck, especially for latency‑sensitive services that require near‑real‑time reconfiguration. The current prototype relies on a single centralized solver and deployment engine, which may not scale to multi‑cloud or highly distributed environments. Future directions include distributed solving techniques, predictive re‑placement based on workload forecasts, and richer policy languages that incorporate security and energy‑efficiency considerations.

In summary, this work contributes a novel, declarative, and automated pathway for both deploying and autonomically managing distributed applications. By using constraint solving as the engine that bridges high‑level goals and concrete system configurations, the framework promises reduced operational overhead, improved resilience, and a clearer separation between architectural intent and operational mechanics. These are key advantages for modern cloud‑native and grid‑computing ecosystems.
