The LCG GRID system is an indispensable infrastructure for the large-scale computing required for ILC experiments. It was used extensively for the ILD LOI studies, and its use will increase further in the coming DBD studies. Experiences during the LOI era and the plan towards the DBD study at KEK are presented.



arXiv:1006.3991v1 [physics.ins-det] 21 Jun 2010

KEK GRID for ILC Experiments

Akiya Miyamoto, Go Iwai, and Katsumasa Ikematsu

High Energy Accelerator Research Organization (KEK),

1-1 Oho, Tsukuba, Ibaraki, 305-0801 Japan


1 Introduction

The International Linear Collider (ILC) is a global project, and a good network connection among participating members through the Internet is a crucial infrastructure for its success. A GRID system is built on the Internet and provides not only CPU and storage for the large-scale computing required for ILC experiments, but also a means of sharing data among ILC experimentalists.

Software-based studies for the International Large Detector (ILD) concept [1] have utilized the LCG GRID [2]. It was used extensively during the LOI era for Monte Carlo production and for sharing the produced data among members. In particular, there was a strong need in Japan to access MC DST samples of about 5 TB placed in Europe. The LCG GRID was used successfully for these file transfers.

Experiences during this period are described in the following sections, after a description of the GRID system at KEK. A plan towards the Detector Baseline Design (DBD) is described subsequently.

2 Network feature

A wide-band backbone network has been constructed for the HEP community in Japan. It connects Japanese universities and laboratories participating in HEP projects such as Belle, J-PARC, ATLAS, and ILC. In addition, the network covers non-HEP users in fields such as material science, biochemistry, synchrotron light sources, and neutron sources. KEK plays a major role in supporting network services for these activities, including GRID deployment and operation.

The network is connected to the outside of Japan through SINET3 [3]. SINET3 provides a connection to Hong Kong and Singapore, and from there to European networks such as GEANT; however, the bandwidth to Asian countries is limited to less than about 1 Gbps. The trans-Pacific network from Japan to the US has an order of magnitude wider bandwidth. Thus network packets between Japan and Europe go through North America, even though the actual path length is longer than a route through the Eurasian continent.

The long distance between Japan and Europe is the limiting factor for the fast transfer of network packets. Typically, we observed a round trip time of about 100 msec for packets from KEK to IHEP and KISTI, which are institutes in China and Korea, respectively. On the other hand, the round trip time to FNAL in the US is about 200 msec, and that to DESY/IN2P3 in Germany/France is about 300 msec. The round trip time is a pedestal time, that is, a fixed overhead required for every network data transfer independent of the packet size; thus it is not efficient to send and receive small files.
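The cost of the round trip time for small files can be illustrated with a simple model. The sketch below assumes that each file pays a fixed number of round trips before its payload flows at a constant rate. The function name, the single round trip per file, and the 1 MB/s link rate are illustrative assumptions, not measurements from this study; only the 300 msec round trip time is taken from the text.

```python
def effective_rate(file_bytes, rtt_s, link_bytes_per_s, round_trips=1):
    """Return the effective throughput in bytes/s for one file.

    Assumed model: total time = fixed latency cost + payload time.
    """
    latency_cost = round_trips * rtt_s
    payload_time = file_bytes / link_bytes_per_s
    return file_bytes / (latency_cost + payload_time)


if __name__ == "__main__":
    RTT_EUROPE_S = 0.3      # KEK to DESY/IN2P3, from the text
    LINK_RATE = 1_000_000   # 1 MB/s, hypothetical value for illustration
    for size in (10_000, 1_000_000, 100_000_000):
        rate = effective_rate(size, RTT_EUROPE_S, LINK_RATE)
        print(f"{size:>11} bytes: {rate / LINK_RATE:6.1%} of the link rate")
```

In this model, a file whose payload time equals the latency cost reaches only half of the link rate, and the loss shrinks as the file grows.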

LCWS/ILC 2010

3 GRID system in KEK and experiences in LOI period

The KEK Computing Center supports two GRID systems, LCG/gLite and RENKEI/NAREGI. LCG has been used not only by the LHC groups but also by other HEP groups such as Belle, J-PARC, and ILC. RENKEI (REsources liNKage for E-science) [4] is a research project to link resources among e-science communities in Japan. It is developing the NAREGI GRID middleware.

For ILC activities, two VOs have been used, CALICE-VO and ILC-VO, both of which use the LCG GRID middleware. CALICE-VO has been used by the CALICE group [5] for their test beam data analysis and Monte Carlo simulation. ILC-VO provided the CPU resources required for the ILD LOI studies. In Japan, KEK, Kobe University, and Tohoku University have joined ILC-VO. During the LOI study period, the CPU resources at Japanese GRID sites were very small, roughly two orders of magnitude smaller than those available at European institutes, and the GRID in Japan was used mainly for transfers of files produced at European institutes or on KEK local batch servers.

For the ILD LOI studies, about 70 TB of data samples were produced, mainly at the DESY and IN2P3 sites. The samples consisted of simulated, reconstructed, and DST samples produced by processing the ILC LOI benchmark processes [6] and Standard Model processes at center-of-mass energies of 500 and 250 GeV. The data were placed on GRID SEs for international and inter-regional data access. In the period of the LOI studies, the time from the production of the MC samples to the completion of the data analysis was limited; thus, with some exceptions, only DST samples were transferred, over a period of about 3 months. The file transfers were mainly from Europe to Japan, but also partially from Japan to Europe.

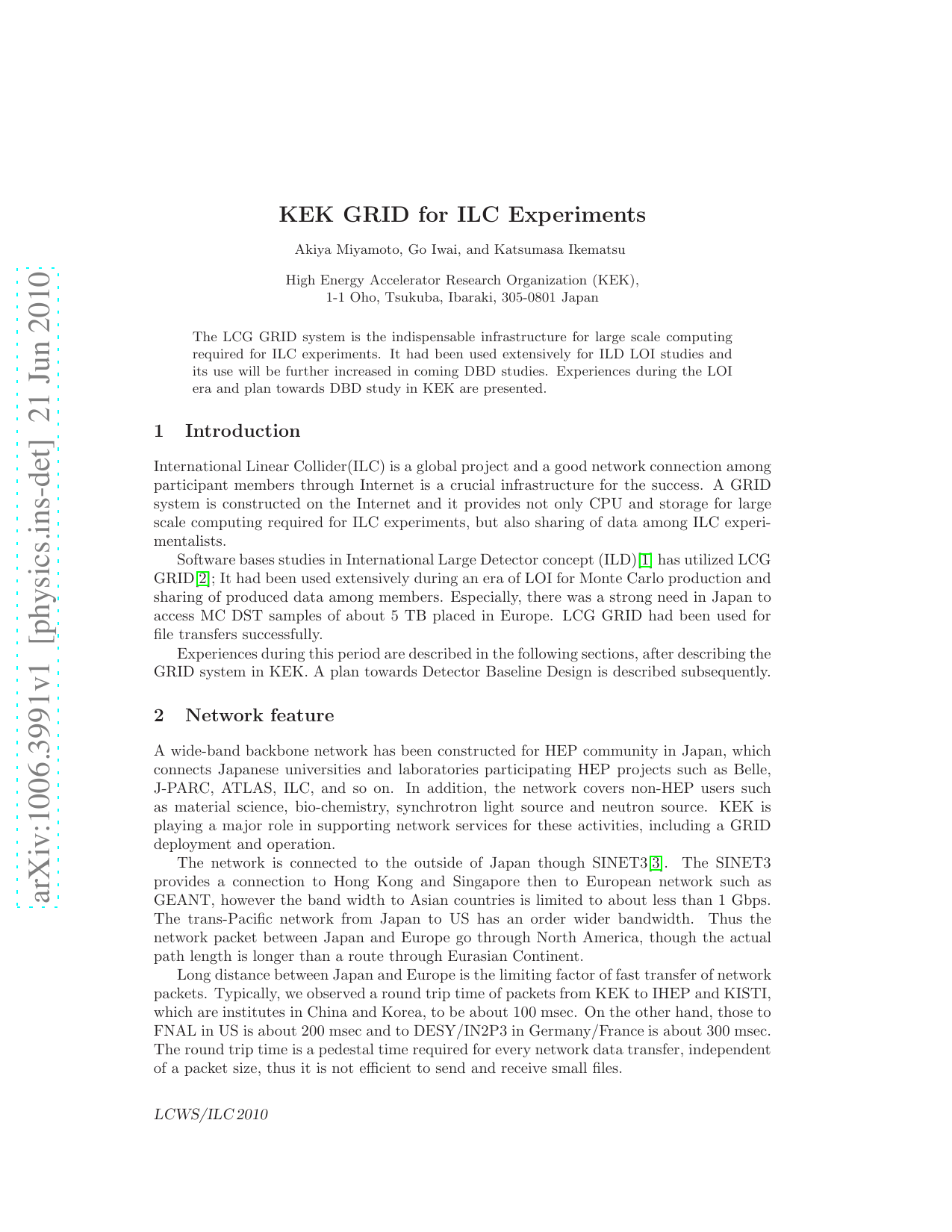

In total, about 5 TB of data were transferred, with a typical transfer rate of about 200 kB/sec/port. Because of the limited transfer speed, we experienced frequent transfer timeouts, which made it difficult to transfer files larger than 2 GB. The problem was solved by removing the timeout limit in the file copy.
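The figures quoted above imply long transfer times, which is consistent with a copy timeout being reached for large files. The following sketch estimates the time for a single 2 GB file and the number of parallel ports needed to move 5 TB in about 3 months at 200 kB/sec/port. It assumes decimal units (1 GB = 10^9 bytes), a 90-day period, continuous operation, and ideal scaling across ports; the function names are illustrative.

```python
import math


def transfer_seconds(size_bytes, rate_bytes_per_s):
    """Time to move size_bytes at a constant rate, ignoring latency."""
    return size_bytes / rate_bytes_per_s


def min_parallel_ports(total_bytes, rate_per_port, deadline_s):
    """Smallest number of equal-rate ports that meets the deadline."""
    single_port_time = transfer_seconds(total_bytes, rate_per_port)
    return math.ceil(single_port_time / deadline_s)


if __name__ == "__main__":
    RATE_PER_PORT = 200e3        # 200 kB/sec/port, from the text
    ONE_FILE = 2e9               # 2 GB, assuming decimal units
    TOTAL = 5e12                 # 5 TB, assuming decimal units
    DEADLINE = 90 * 24 * 3600    # about 3 months, taken here as 90 days

    hours = transfer_seconds(ONE_FILE, RATE_PER_PORT) / 3600
    ports = min_parallel_ports(TOTAL, RATE_PER_PORT, DEADLINE)
    print(f"One 2 GB file on one port: {hours:.1f} hours")
    print(f"Ports needed for 5 TB in 90 days: {ports}")
```

Under these assumptions, a 2 GB file occupies one port for 10^4 seconds, or about 2.8 hours, and at least 4 ports must run in parallel to finish 5 TB within 90 days.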

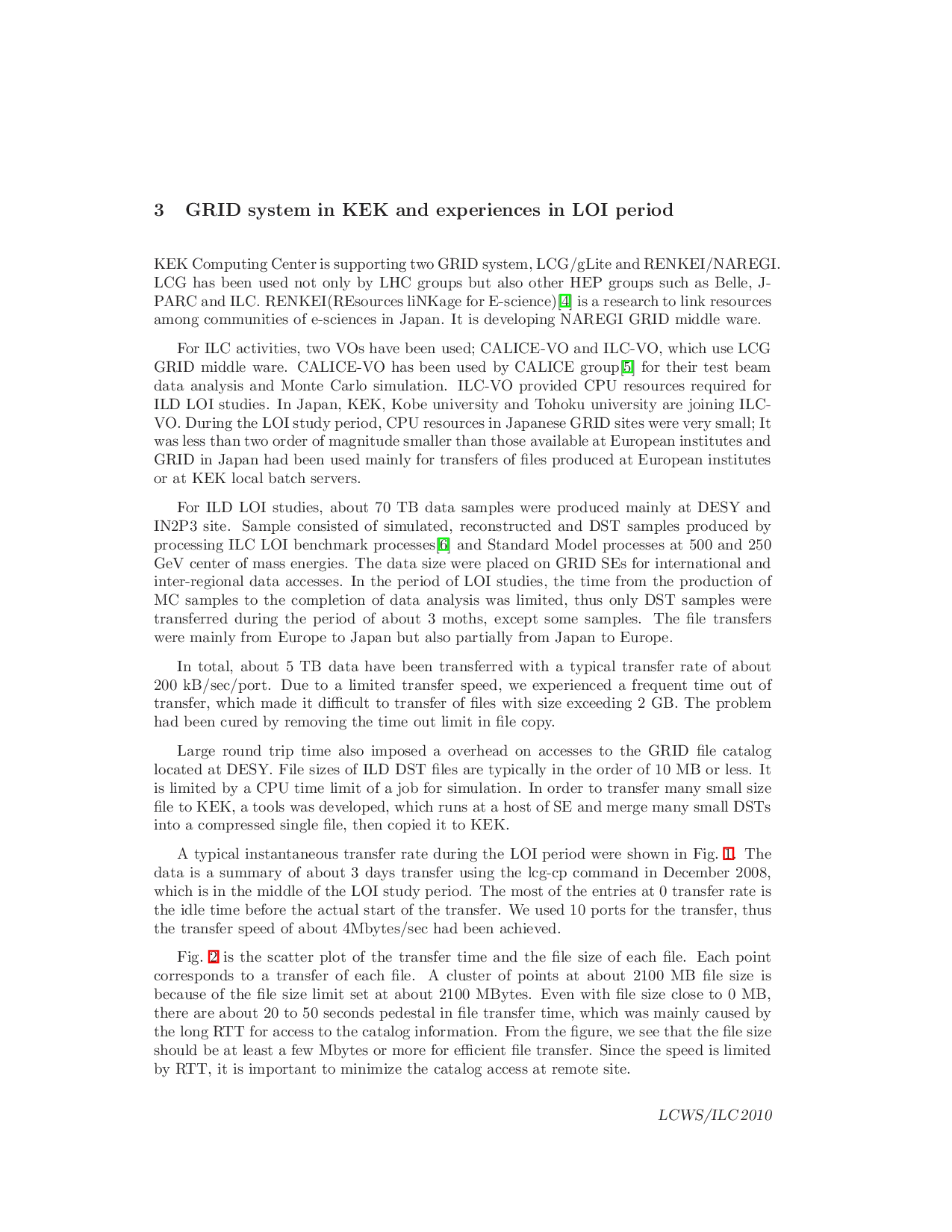

Large round trip tim

…(Full text truncated)…
