Least Squares Superposition Codes of Moderate Dictionary Size, Reliable at Rates up to Capacity

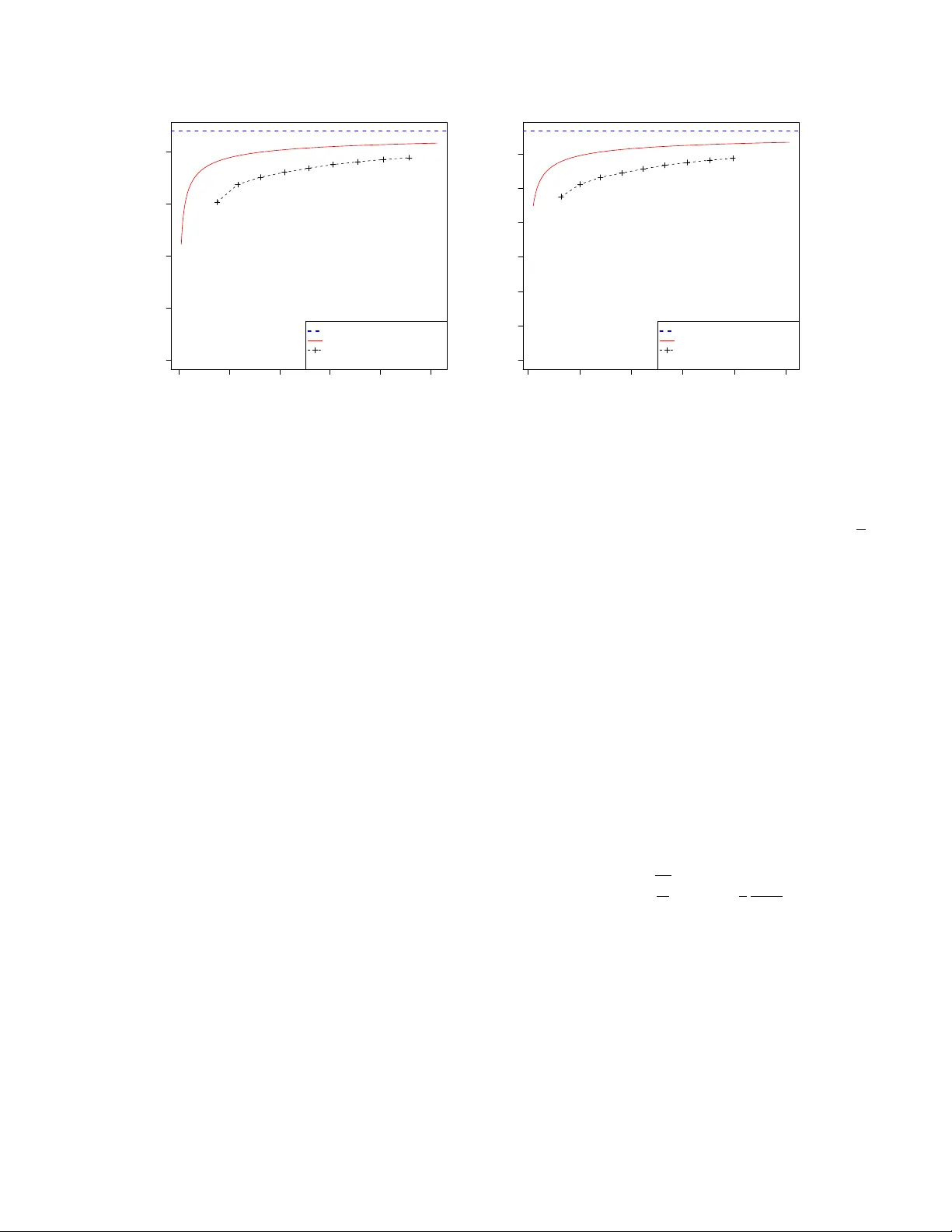

For the additive white Gaussian noise channel with average codeword power constraint, new coding methods are devised in which the codewords are sparse superpositions, that is, linear combinations of subsets of vectors from a given design, with the po…
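As a rough illustration of the idea of a codeword formed as a linear combination of a subset of design vectors, and of the least squares decoding named in the title, a minimal sketch follows. Everything specific in it is an assumption made for illustration, not taken from the paper: the i.i.d. standard normal design, the equal coefficients `sqrt(P / L)`, the exhaustive search over subsets, and the function names `make_dictionary`, `encode`, and `least_squares_decode`.

```python
import itertools
import math
import random


def make_dictionary(n, N, rng):
    """Return an n-by-N design (list of rows) with i.i.d. N(0, 1) entries (assumed design)."""
    return [[rng.gauss(0.0, 1.0) for _ in range(N)] for _ in range(n)]


def encode(X, subset, P):
    """Superimpose the chosen columns of X, each scaled by sqrt(P / L).

    With N(0, 1) entries and L selected columns, this equal-weight choice
    (an assumption) gives expected power P per codeword coordinate.
    """
    L = len(subset)
    c = math.sqrt(P / L)
    return [c * sum(row[j] for j in subset) for row in X]


def least_squares_decode(X, y, L, P):
    """Return the L-subset whose codeword minimizes the squared distance to y.

    Exhaustive search, feasible only for toy sizes.
    """
    N = len(X[0])

    def dist2(subset):
        codeword = encode(X, subset, P)
        return sum((yi - ci) ** 2 for yi, ci in zip(y, codeword))

    return min(itertools.combinations(range(N), L), key=dist2)
```

For a toy run, one might build `X = make_dictionary(16, 8, random.Random(0))`, encode the subset `(1, 5)` with `P = 1.0`, add Gaussian noise to each coordinate, and pass the result to `least_squares_decode(X, received, 2, 1.0)`. The exhaustive search grows combinatorially in the dictionary size, so this sketch is only meant to make the encoding and decoding rule concrete.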

Authors: Andrew R. Barron, A. Joseph