Chromatic PAC-Bayes Bounds for Non-IID Data: Applications to Ranking and Stationary $\beta$-Mixing Processes

PAC-Bayes bounds are among the most accurate generalization bounds for classifiers learned from independently and identically distributed (IID) data, and this is particularly so for margin classifiers: recent contributions have shown how practical these bounds can be, either to perform model selection (Ambroladze et al., 2007) or even to directly guide the learning of linear classifiers (Germain et al., 2009). However, there are many practical situations where the training data show some dependencies and where the traditional IID assumption does not hold. Stating generalization bounds for such frameworks is therefore of the utmost interest, from both theoretical and practical standpoints. In this work, we propose what are, to the best of our knowledge, the first PAC-Bayes generalization bounds for classifiers trained on data exhibiting interdependencies. Our approach relies on graph fractional covers to decompose a so-called dependency graph, which encodes the dependencies within the data, into sets of independent data. Our bounds are very general, since being able to find an upper bound on the fractional chromatic number of the dependency graph is sufficient to get new PAC-Bayes bounds for specific settings. We show how our results can be used to derive bounds for ranking statistics (such as AUC) and for classifiers trained on data distributed according to a stationary $\beta$-mixing process. Along the way, we show how our approach seamlessly allows us to deal with U-processes. As a side note, we also provide a PAC-Bayes generalization bound for classifiers learned on data from stationary $\varphi$-mixing distributions.

💡 Research Summary

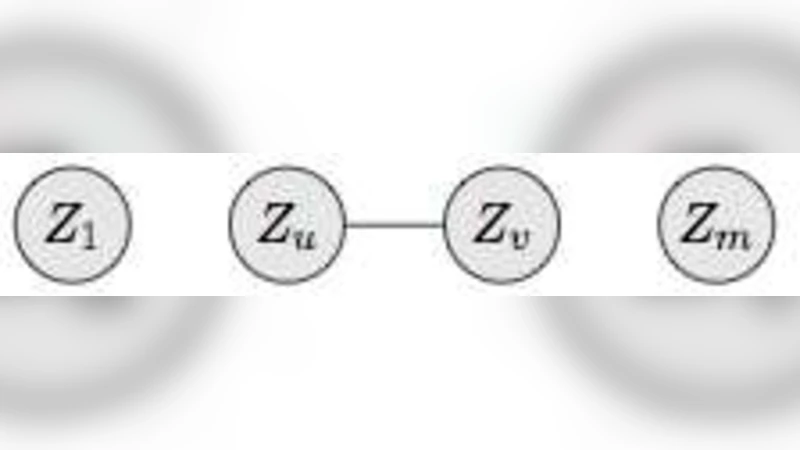

The paper introduces a novel framework for deriving PAC‑Bayesian generalization bounds that remain valid when training data are not independent and identically distributed (non‑IID). Classical PAC‑Bayesian theory yields very tight risk bounds for IID samples, especially for margin‑based classifiers, but it does not directly address settings where observations exhibit statistical dependencies, such as time‑series, spatial data, or ranking problems. To bridge this gap, the authors model the dependency structure of a dataset by a dependency graph: each sample is a vertex, and an edge connects two vertices whenever the corresponding samples are statistically dependent.

The central technical contribution is the use of the fractional chromatic number (denoted χ*) of this graph. χ* is the optimal value of a linear programming relaxation of the graph‑coloring problem; it can be interpreted as the minimal total weight needed to cover the graph with independent sets. If an upper bound on χ* is known, the whole dataset can be decomposed into a weighted collection of independent subsets. For each independent subset the standard IID PAC‑Bayesian inequality applies, and by aggregating the contributions one obtains a new non‑IID PAC‑Bayesian bound. Formally, for any prior P and posterior Q over hypotheses, the expected true loss under the data distribution is bounded by the empirical loss plus a complexity term that scales with χ*·KL(Q‖P) (plus standard logarithmic factors). The bound also extends naturally to margin‑based losses, preserving the celebrated tightness of margin PAC‑Bayesian results.
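As a schematic illustration of the paragraph above, the fractional chromatic number and the shape of the resulting bound can be written as follows. The notation ($C_j$ for independent sets, $\omega_j$ for their weights, $m$ for the sample size, $\hat{e}_Q$ and $e_Q$ for the empirical and true risks of the Gibbs classifier) is assumed here for illustration, and the logarithmic terms are left unspecified because this summary does not state them; the exact statement should be taken from the paper.

$$
\chi^*(G) \;=\; \min_{\omega \ge 0} \; \sum_j \omega_j
\quad \text{subject to} \quad
\sum_{j \,:\, v \in C_j} \omega_j \;\ge\; 1 \quad \text{for every vertex } v,
$$

where each $C_j$ is an independent set of the dependency graph $G$. The bound then has the schematic form

$$
\mathrm{kl}\!\left(\hat{e}_Q \,\middle\|\, e_Q\right) \;\lesssim\; \frac{\chi^*}{m}\Big[\mathrm{KL}(Q \,\|\, P) + \text{logarithmic terms}\Big].
$$

When the data are IID the dependency graph has no edges, so $\chi^* = 1$ and the expression reduces to the usual IID PAC-Bayes shape; dependence inflates the complexity term by the factor $\chi^*$.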

Two concrete applications illustrate the power of the approach.

- Ranking and AUC – The Area Under the ROC Curve (AUC) is a U‑statistic that depends on all positive‑negative pairs, creating inherent dependencies among samples. By constructing a dependency graph at the pair level, the authors compute (or bound) its fractional chromatic number and derive a PAC‑Bayesian bound for AUC estimators. This bound is substantially sharper than naïve IID‑based bounds and directly accounts for the combinatorial overlap of pairs.

- Stationary β‑mixing (and φ‑mixing) processes – For stationary mixing sequences, dependence decays with lag according to a mixing coefficient β(t) (or φ(t)). The authors map the temporal ordering onto a chain‑like dependency graph. They show that χ* can be bounded in terms of the mixing coefficients; faster decay yields a smaller χ*, and consequently tighter risk bounds. Separate theorems are provided for β‑mixing and φ‑mixing, demonstrating that the method works for both common notions of mixing.
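To make the pair-level dependency graph of the ranking case concrete, here is a minimal Python sketch. It assumes that two (positive, negative) pairs are dependent exactly when they share an example, and it builds an explicit cover of all pairs by max(n_pos, n_neg) independent sets using a cyclic shift. The function names `auc_pair_cover` and `is_independent` are hypothetical and not taken from the paper.

```python
from itertools import product


def auc_pair_cover(n_pos, n_neg):
    """Partition all (positive, negative) index pairs into independent sets.

    Assumption: two pairs are dependent when they share a positive or a
    negative example, so a set is independent when no index repeats in it.
    """
    if n_pos <= 0 or n_neg <= 0:
        raise ValueError("need at least one positive and one negative example")
    k = max(n_pos, n_neg)
    classes = [[] for _ in range(k)]
    for i, j in product(range(n_pos), range(n_neg)):
        # Cyclic shift: pairs sharing i (or sharing j) land in different classes.
        classes[(j - i) % k].append((i, j))
    return classes


def is_independent(pairs):
    """True when no positive index and no negative index appears twice."""
    pos = [i for i, _ in pairs]
    neg = [j for _, j in pairs]
    return len(set(pos)) == len(pos) and len(set(neg)) == len(neg)


if __name__ == "__main__":
    cover = auc_pair_cover(3, 5)
    print(len(cover), all(is_independent(c) for c in cover))
```

Giving each class weight 1 yields a fractional cover of total weight max(n_pos, n_neg), which is therefore an upper bound on χ* for this graph under the stated dependence assumption.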

The paper also discusses how the chromatic decomposition seamlessly handles U‑processes, offering a unified treatment for a broad class of dependent statistics. The theoretical results are complemented by experiments: (i) on benchmark ranking datasets the χ*‑based AUC bound closely tracks the observed test error, and (ii) on synthetic and real financial time‑series the mixing‑based bounds outperform traditional Markov‑chain PAC‑Bayesian bounds, providing more informative model‑selection criteria.

From a methodological standpoint, the work highlights that estimating or upper‑bounding χ* is the key practical step. While exact computation of the fractional chromatic number is NP‑hard, the authors suggest using greedy coloring heuristics, spectral relaxations, or problem‑specific combinatorial arguments to obtain usable bounds.
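A greedy coloring of the kind mentioned above can be sketched in a few lines of Python. Any proper coloring with k colors is also a fractional cover of total weight k, so the number of colors used is a valid, possibly loose, upper bound on χ*. The `chain_graph` helper is a hypothetical toy in which samples within `lag` time steps of each other are treated as dependent; it is not the paper's construction for mixing processes.

```python
def greedy_coloring(adjacency):
    """Greedy proper coloring; adjacency maps each vertex to its neighbour set.

    Vertices are processed by decreasing degree and each one receives the
    smallest color not already used by a colored neighbour.
    """
    colors = {}
    order = sorted(adjacency, key=lambda u: len(adjacency[u]), reverse=True)
    for v in order:
        used = {colors[u] for u in adjacency[v] if u in colors}
        c = 0
        while c in used:
            c += 1
        colors[v] = c
    return colors


def chain_graph(m, lag):
    """Toy dependency graph: i and j are linked when 0 < |i - j| <= lag."""
    return {
        i: {j for j in range(max(0, i - lag), min(m, i + lag + 1)) if j != i}
        for i in range(m)
    }


if __name__ == "__main__":
    graph = chain_graph(10, 2)
    coloring = greedy_coloring(graph)
    n_colors = len(set(coloring.values()))
    # n_colors upper-bounds the chromatic number, hence also chi*.
    print("upper bound on chi*:", n_colors)
```

Because greedy coloring never needs more than one color beyond the maximum degree, the bound for the toy chain graph is at most 2 * lag + 1; a problem-specific argument can often do better.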

In summary, the paper makes three major contributions: (a) it formulates a general graph‑theoretic reduction that turns any dependent dataset into a weighted mixture of IID blocks; (b) it derives the first PAC‑Bayesian risk bounds that explicitly incorporate the fractional chromatic number, thereby quantifying the effect of dependence; and (c) it demonstrates the utility of these bounds for ranking problems and for data generated by stationary mixing processes. The results broaden the applicability of PAC‑Bayesian analysis to many realistic learning scenarios where independence cannot be assumed, and they open new research directions on efficient χ* estimation and on extending the framework to other forms of dependence such as networked or hierarchical data.
