Modeling Social Annotation: a Bayesian Approach

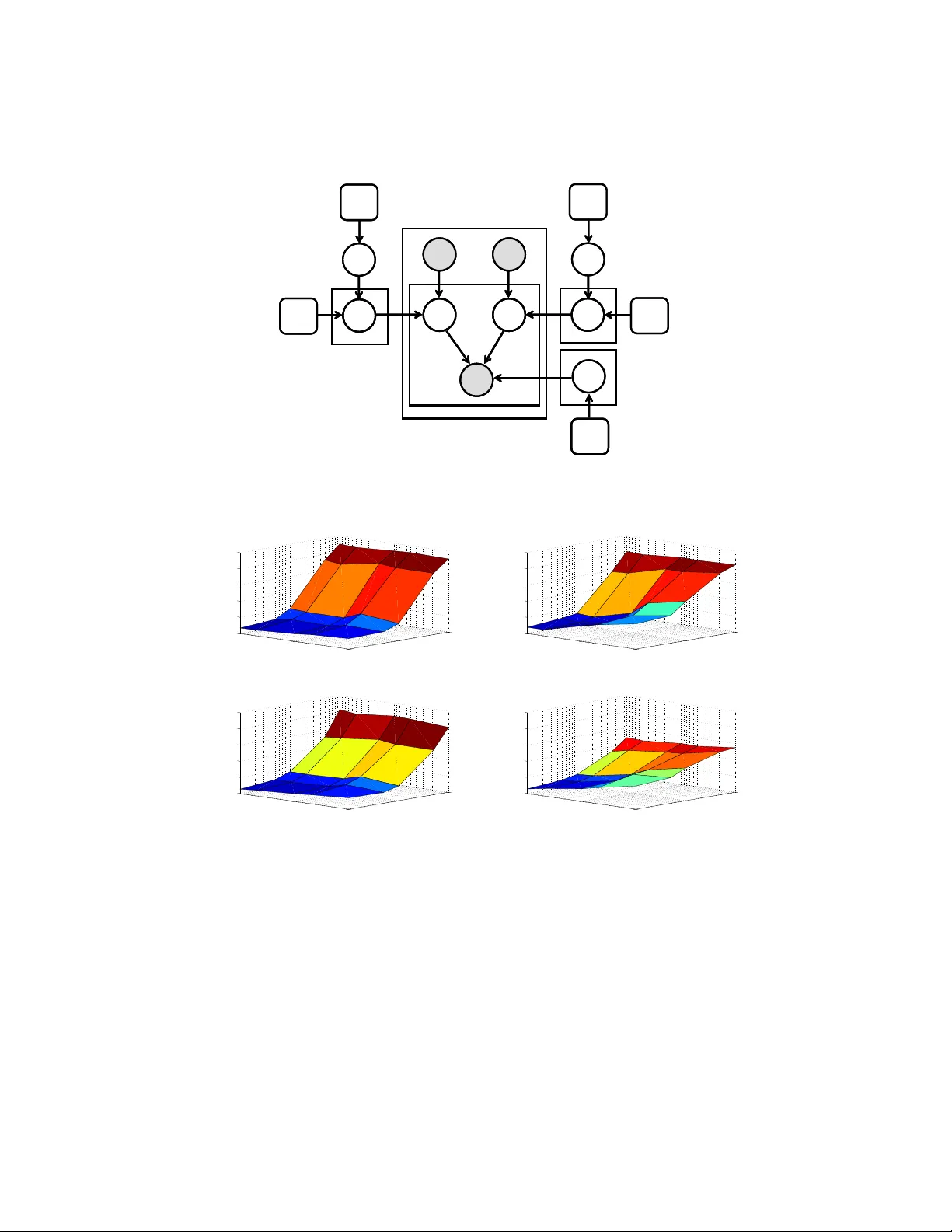

Collaborative tagging systems, such as Delicious, CiteULike, and others, allow users to annotate resources, e.g., Web pages or scientific papers, with descriptive labels called tags. The social annotations contributed by thousands of users can poten…

Authors: Anon Plangprasopchok, Kristina Lerman