Active Learning for Hidden Attributes in Networks

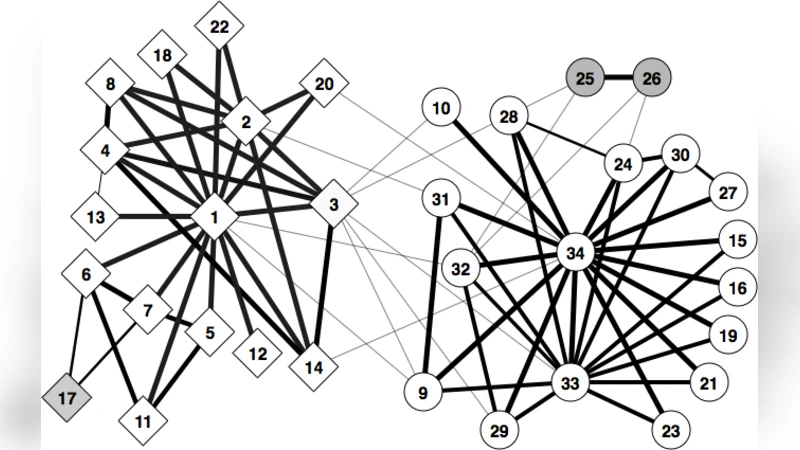

In many networks, vertices have hidden attributes, or types, that are correlated with the network's topology. If the topology is known but these attributes are not, and if learning the attributes is costly, we need a method for choosing which vertex to query in order to learn as much as possible about the attributes of the other vertices. We assume the network is generated by a stochastic block model, but we make no assumptions about its assortativity or disassortativity. We choose which vertex to query using two methods: 1) maximizing the mutual information between its attributes and those of the others (a well-known approach in active learning) and 2) maximizing the average agreement between two independent samples of the conditional Gibbs distribution. Experimental results show that both these methods do much better than simple heuristics. They also consistently identify certain vertices as important by querying them early on.

💡 Research Summary

The paper addresses the problem of efficiently learning hidden vertex attributes (or types) in a network when querying those attributes is expensive. Assuming the network is generated by a stochastic block model (SBM) – a flexible generative model that can capture both assortative and disassortative community structures – the authors formulate the task as an active‑learning problem: at each step they must decide which vertex to query so that the information gained about the unobserved vertices is maximized.
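As a rough illustration of the generative model assumed here, the sketch below draws vertex types and an undirected graph from an SBM. The names `sample_sbm`, `gamma`, and `p` are hypothetical choices for this example rather than notation taken from the paper, and the sketch assumes a simple graph with no self-loops.

```python
import random


def sample_sbm(n, gamma, p, seed=0):
    """Draw hidden types and an undirected graph from a stochastic block model.

    gamma[a] : prior probability that a vertex has type a (hypothetical name)
    p[a][b]  : probability of an edge between a type-a and a type-b vertex
    Returns (types, edges), where edges is a set of pairs (u, v) with u < v.
    """
    rng = random.Random(seed)
    k = len(gamma)
    # Each vertex independently draws its hidden type from the prior.
    types = rng.choices(range(k), weights=gamma, k=n)
    edges = set()
    # Each pair of vertices is linked independently, with a probability
    # that depends only on the two endpoint types.
    for u in range(n):
        for v in range(u + 1, n):
            if rng.random() < p[types[u]][types[v]]:
                edges.add((u, v))
    return types, edges
```

For instance, `sample_sbm(30, [0.5, 0.5], [[0.05, 0.6], [0.6, 0.05]])` would draw a disassortative two-type network, while swapping the diagonal and off-diagonal entries of `p` would give an assortative one. These parameter values are arbitrary and chosen only to show that the same model covers both cases.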

Two query‑selection criteria are proposed. The first is based on mutual information (MI) between the attribute of a candidate vertex and the attributes of all other vertices conditioned on the observed graph. Formally, MI(i) = H(t_{‑i}|G) – E_{t_i}
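A minimal sketch of a mutual-information query rule on a toy graph follows. It rests on two simplifying assumptions that are not from the paper: the SBM parameters (`gamma`, `p`) are treated as known, and the posterior over type assignments is computed by exact enumeration instead of by sampling, which is feasible only for a handful of vertices. The mutual information is evaluated through the standard identity I(t_i; t_{-i} | G) = H(t_i | G) + H(t_{-i} | G) - H(t | G). All function names are hypothetical.

```python
import itertools
import math
from collections import defaultdict


def posterior(n, edges, gamma, p, known=None):
    """Exact posterior P(t | G) over type assignments, by brute-force enumeration.

    Assumes known SBM parameters; `known` maps queried vertices to revealed types.
    """
    known = known or {}
    k = len(gamma)
    weights = {}
    for t in itertools.product(range(k), repeat=n):
        # Skip assignments that contradict an already-queried vertex.
        if any(t[v] != a for v, a in known.items()):
            continue
        w = 1.0
        for v in range(n):
            w *= gamma[t[v]]
        for u in range(n):
            for v in range(u + 1, n):
                q = p[t[u]][t[v]]
                w *= q if (u, v) in edges else 1.0 - q
        weights[t] = w
    z = sum(weights.values())
    return {t: w / z for t, w in weights.items()}


def entropy(dist):
    """Shannon entropy (in nats) of a dict mapping outcomes to probabilities."""
    return -sum(q * math.log(q) for q in dist.values() if q > 0)


def marginal(post, keep):
    """Marginal distribution of the types of the vertices listed in `keep`."""
    out = defaultdict(float)
    for t, q in post.items():
        out[tuple(t[v] for v in keep)] += q
    return out


def mutual_information(post, i, n):
    """I(t_i; t_{-i} | G) = H(t_i) + H(t_{-i}) - H(t) under the posterior."""
    others = [v for v in range(n) if v != i]
    return entropy(marginal(post, [i])) + entropy(marginal(post, others)) - entropy(post)


def best_query(post, n, known=()):
    """Unqueried vertex whose type shares the most information with the rest."""
    candidates = [v for v in range(n) if v not in known]
    return max(candidates, key=lambda v: mutual_information(post, v, n))
```

An active-learning loop built on this sketch would call `best_query`, reveal that vertex's type, add it to `known`, and recompute `posterior` before the next query. On networks of realistic size the enumeration would have to be replaced by samples from the Gibbs distribution, as the abstract describes.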
