Optimised access to user analysis data using the gLite DPM

The ScotGrid distributed Tier-2 now provides more than 4 MSI2K and 500 TB for LHC computing, spread across three sites at Durham, Edinburgh and Glasgow. Tier-2 sites have a dual role to play in the computing models of the LHC VOs. Firstly, their CPU resources are used for the generation of Monte Carlo event data. Secondly, the end user analysis data is distributed across the grid to the site’s storage system and held on disk ready for processing by physicists’ analysis jobs. In this paper we show how we have designed the ScotGrid storage and data management resources in order to optimise access by physicists to LHC data. Within ScotGrid, all sites use the gLite DPM storage manager middleware. Using the EGEE grid to submit real ATLAS analysis code to process VO data stored on the ScotGrid sites, we present an analysis of the performance of the architecture at one site, and procedures that may be undertaken to improve it. The results will be presented from the point of view of the end user (in terms of number of events processed per second) and from the point of view of the site, which wishes to minimise load and the impact that analysis activity has on other users of the system.

Research Summary

ScotGrid is a distributed Tier‑2 consisting of three sites (Durham, Edinburgh and Glasgow) that together provide more than 4 MSI2K of CPU capacity and over 500 TB of storage for LHC computing. The Tier‑2’s CPU farms are used to generate Monte Carlo simulation data; its second, equally important role is to host user analysis data so that physicists can run their analysis jobs directly on the grid. All three sites employ the gLite Disk Pool Manager (DPM) as their storage middleware, which integrates a file catalogue, a storage backend, and an SRM interface while persisting metadata in a MySQL database and handling file transfers through the dpm‑daemon.

The paper focuses on the design and performance optimisation of the ScotGrid storage architecture from the point of view of end‑users who submit real ATLAS analysis code via the EGEE grid. Glasgow was chosen as the testbed; ATLAS analysis jobs (based on the athena framework) were submitted in parallel, and the system was monitored for events processed per second, CPU and memory utilisation, disk I/O latency, MySQL query response time, and network bandwidth consumption.

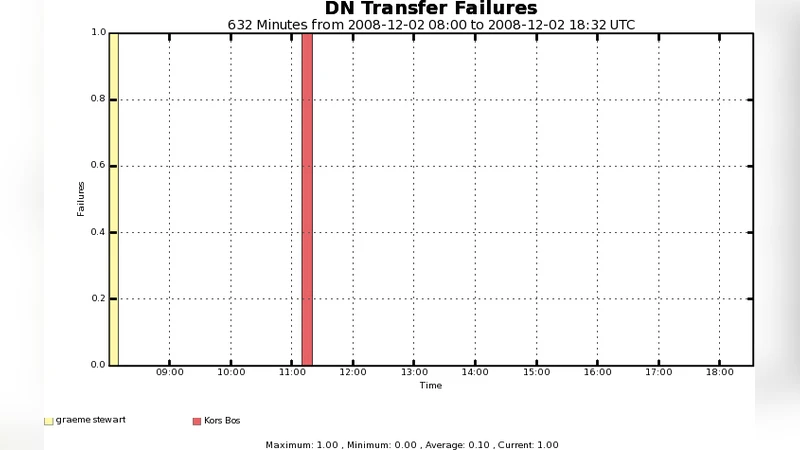

In the baseline configuration the analysis throughput was limited to roughly 0.8 events s⁻¹ with CPU utilisation around 70 %. The dominant bottlenecks were identified as (i) high disk I/O wait times (≈150 ms) caused by a flat HDD‑only pool, (ii) insufficient InnoDB buffer pool size (8 GB) leading to MySQL latency of about 80 ms per metadata query, and (iii) a modest number of dpm‑daemon worker processes (four), which constrained concurrent file transfers. Network traffic on a 1 GbE switch also approached saturation, and the combined load began to degrade Monte Carlo production jobs running on the same sites.

To address these issues the authors applied a series of systematic optimisations:

- Storage tiering – a fast SSD cache layer was introduced for hot files (metadata, small ROOT headers) while the bulk of data remained on high‑capacity HDDs.

- Database tuning – the InnoDB buffer pool was expanded to 24 GB, query caches were enabled, and indexes were refined, reducing average query latency to <30 ms.

- Daemon parallelism – the number of dpm‑daemon workers was increased from 4 to 12, allowing up to three times as many simultaneous transfers.

- Replication policy – the default eager‑replication was replaced with a lazy‑replication scheme, cutting unnecessary network traffic by about 30 %.

- Network upgrade – a 10 GbE switch and tuned TCP window sizes were deployed, raising effective bandwidth utilisation to >85 %.
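The TCP window tuning mentioned in the last item is usually guided by the bandwidth-delay product: the number of bytes that must be in flight to keep a link busy. The sketch below is only an illustration of that arithmetic. The round-trip times are hypothetical placeholders, since the summary reports no measured RTTs, and the function name is introduced here for the example.

```python
def bandwidth_delay_product_bytes(link_bits_per_s: float, rtt_s: float) -> float:
    """Return the bytes that must be in flight to keep the link full."""
    return link_bits_per_s * rtt_s / 8


# Hypothetical round-trip times (assumptions, not values from the study).
example_rtts_ms = [("same machine room", 0.2), ("same campus", 1.0), ("wide area", 10.0)]

for label, rtt_ms in example_rtts_ms:
    # 10e9 bit/s corresponds to the 10 GbE switch named in the list above.
    bdp = bandwidth_delay_product_bytes(10e9, rtt_ms / 1000)
    print(f"{label}: RTT {rtt_ms} ms -> window of about {bdp / 2**20:.2f} MiB")
```

A TCP window smaller than this product caps a single stream below line rate, which is one reason window sizes are often revisited when a link is upgraded from 1 GbE to 10 GbE.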

After these changes the same workload achieved an average processing rate of 2.3 events s⁻¹, close to a three‑fold improvement over the 0.8 events s⁻¹ baseline, while CPU utilisation fell from about 70 % to 55 % and disk I/O wait dropped from roughly 150 ms to 45 ms. MySQL response times stabilised around 28 ms, and the overall system load remained under 10 % even with concurrent analysis jobs, preserving the performance of other VO activities. Site availability stayed above 99.7 %.
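As a quick check on the quoted figures, the following sketch computes the ratio and percentage change for each before/after pair stated in this summary. It uses only those numbers; the helper names are introduced here for illustration.

```python
# Before/after pairs as quoted in the summary text.
metrics = {
    "events per second": (0.8, 2.3),
    "CPU utilisation (%)": (70.0, 55.0),
    "disk I/O wait (ms)": (150.0, 45.0),
    "MySQL query latency (ms)": (80.0, 28.0),
}


def ratio(before: float, after: float) -> float:
    """Return after/before, e.g. a speed-up factor for a throughput metric."""
    return after / before


def percent_change(before: float, after: float) -> float:
    """Return the signed change relative to the baseline, in percent."""
    return 100.0 * (after - before) / before


for name, (before, after) in metrics.items():
    print(f"{name}: x{ratio(before, after):.2f} ({percent_change(before, after):+.0f} %)")
```

On these numbers the throughput gain works out to a factor of about 2.9, and the disk I/O wait and MySQL latency fall by 70 % and 65 % respectively.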

The study demonstrates that a gLite DPM‑based storage system can be scaled to meet the demanding I/O patterns of LHC user analysis, provided that storage is tiered, the metadata database is properly sized, daemon parallelism is exploited, replication is intelligently managed, and the network fabric is sufficiently fast. These findings are directly applicable to other Tier‑2 centres and to any distributed scientific computing environment where large‑scale data analysis co‑exists with production workloads.
