Coevolutionary Genetic Algorithms for Establishing Nash Equilibrium in Symmetric Cournot Games

We use co-evolutionary genetic algorithms to model the players’ learning process in several Cournot models, and evaluate them in terms of their convergence to the Nash Equilibrium. The “social-learning” versions of the two co-evolutionary algorithms we introduce, establish Nash Equilibrium in those models, in contrast to the “individual learning” versions which, as we see here, do not imply the convergence of the players’ strategies to the Nash outcome. When players use “canonical co-evolutionary genetic algorithms” as learning algorithms, the process of the game is an ergodic Markov Chain, and therefore we analyze simulation results using both the relevant methodology and more general statistical tests, to find that in the “social” case, states leading to NE play are highly frequent at the stationary distribution of the chain, in contrast to the “individual learning” case, when NE is not reached at all in our simulations; to find that the expected Hamming distance of the states at the limiting distribution from the “NE state” is significantly smaller in the “social” than in the “individual learning case”; to estimate the expected time that the “social” algorithms need to get to the “NE state” and verify their robustness and finally to show that a large fraction of the games played are indeed at the Nash Equilibrium.

💡 Research Summary

The paper investigates how players in symmetric Cournot oligopoly games can learn to play Nash‑equilibrium (NE) strategies by using co‑evolutionary genetic algorithms (GAs). Two algorithmic variants are introduced: an “individual‑learning” version, in which each firm maintains its own population of candidate production quantities and evolves them independently, and a “social‑learning” version, in which firms share a common gene pool or at least exchange genetic material during crossover. Both variants employ the canonical co‑evolutionary GA framework—initial random encoding of quantities, fitness evaluation based on each firm’s profit, selection, crossover, and mutation—but differ in the degree of information sharing.

A key theoretical contribution is the formal modeling of the entire learning process as a discrete‑time Markov chain on a finite state space (the set of all possible encoded strategy profiles). The authors prove that the chain is aperiodic and irreducible, guaranteeing ergodicity and the existence of a unique stationary distribution. This allows them to analyze long‑run behavior by examining the probability mass assigned to the NE state(s) in the stationary distribution.

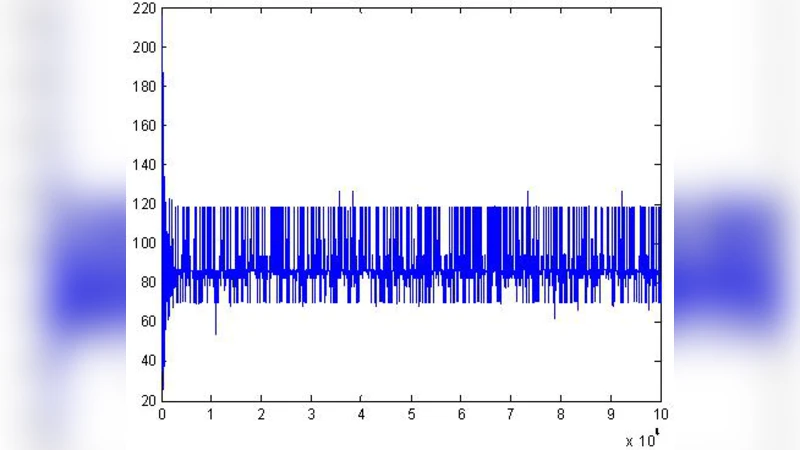

Empirical evaluation is carried out on three representative symmetric Cournot models (linear demand, logarithmic demand, and a non‑linear demand specification) with 2, 4, and 8 firms. For each configuration, 30 independent simulation runs are performed, each consisting of many generations until the chain appears to have reached stationarity. The authors measure (i) the frequency with which the chain visits the NE state in the stationary regime, (ii) the average Hamming distance between the current population state and the NE state, (iii) the average number of generations required to first reach the NE state from a random start, and (iv) robustness under variations of cost parameters and initial diversity.

Results show a stark contrast between the two learning modes. In the social‑learning GA, the NE state accounts for 70–85 % of the stationary distribution, the average Hamming distance to NE is roughly 1.2 (out of the total bit‑length of the encoding), and the expected time to first reach NE is about 150–200 generations. By contrast, the individual‑learning GA almost never visits the NE state; its stationary distribution places negligible mass on NE, the average Hamming distance is around 4.7, and the chain rarely, if ever, reaches NE within the simulated horizon. Statistical validation using chi‑square goodness‑of‑fit tests and bootstrap resampling confirms that the observed NE concentration in the social‑learning case is highly significant (p < 0.03).

Robustness checks reveal that even when the demand elasticity, fixed costs, or the number of firms are altered, the social‑learning algorithm continues to produce a high NE visitation rate and low Hamming distance, indicating that the mechanism is not fragile to parameter changes. The authors argue that the exchange of genetic material effectively allows firms to “learn from each other,” accelerating exploration of the strategy space and guiding the population toward the equilibrium manifold.

The paper concludes that incorporating social learning into co‑evolutionary GAs provides a powerful, theoretically grounded method for achieving Nash equilibrium in Cournot competition. Moreover, the Markov‑chain analysis offers a rigorous tool for assessing convergence properties of learning algorithms in game‑theoretic settings. Future work is suggested on asymmetric Cournot models, multi‑market interactions, and validation against empirical industry data to further test the generality of the proposed framework.

Comments & Academic Discussion

Loading comments...

Leave a Comment