Approximate dynamic programming has been used successfully in a large variety of domains, but it relies on a small set of provided approximation features to calculate solutions reliably. Large and rich sets of features can cause existing algorithms to overfit because of a limited number of samples. We address this shortcoming using $L_1$ regularization in approximate linear programming. Because the proposed method can automatically select the appropriate richness of features, its performance does not degrade with an increasing number of features. These results rely on new and stronger sampling bounds for regularized approximate linear programs. We also propose a computationally efficient homotopy method. The empirical evaluation of the approach shows that the proposed method performs well on simple MDPs and standard benchmark problems.

Deep Dive into Feature Selection Using Regularization in Approximate Linear Programs for Markov Decision Processes.

Solving large Markov Decision Processes (MDPs) is a useful but computationally challenging problem that is widely addressed in reinforcement learning. It is generally accepted that large MDPs can only be solved approximately. This approximation is commonly done by relying on linear value function approximation, in which the value function is chosen from a low-dimensional vector space of features. While this framework offers computational benefits and protection from overfitting the training data, selecting an effective, small set of features is difficult and requires a deep understanding of the domain. Feature selection, therefore, seeks to automate this process in a way that may preserve the computational simplicity of linear approximation (Parr et al., 2007; Mahadevan, 2008). We show in this paper that $L_1$-regularized approximate linear programs (RALP) can be used with very rich feature spaces. RALP relies, like other value function approximation methods, on samples of the state space. The value function error on states that are not sampled is known as the sampling error. This paper shows that regularization in RALP can guarantee small sampling error. The bounds on the sampling error require somewhat limiting assumptions on the structure of the MDPs, as any guarantees must, but this framework can be used to derive tighter bounds for specific problems in the future. The relatively simple bounds can be used to automatically determine the regularization coefficient that balances the expressivity of the features against the sampling error.

We derive the approach with the $L_1$ norm, but it could be used with other regularizations with small modifications. The $L_1$ norm is advantageous for two main reasons. First, the $L_1$ norm encourages sparse solutions, which can reduce the computational requirements. Second, the $L_1$ norm preserves the linearity of RALPs; the $L_2$ norm would require quadratic optimization.
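To see why the $L_1$ norm keeps the program linear, consider a generic bound $\|w\|_1 \le \psi$ on a weight vector $w$. The symbol $\psi$ and the auxiliary variables $w^+$, $w^-$ are notation assumed here for illustration only. A standard reformulation splits each weight into two nonnegative parts:

$$
\begin{aligned}
\|w\|_1 \le \psi
\quad\Longleftrightarrow\quad
\exists\, w^+, w^- \ge 0 :\quad
& w = w^+ - w^-, \\
& \sum_{i} \left( w^+_i + w^-_i \right) \le \psi .
\end{aligned}
$$

Every constraint on the right is linear in $(w^+, w^-)$, so adding the bound to a linear program yields another linear program. The corresponding $L_2$ bound, $\sum_i w_i^2 \le \psi^2$, is quadratic in $w$.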

Regularization using the $L_1$ norm has been widely used in regression problems by methods such as LASSO (Tibshirani, 1996) and the Dantzig selector (Candes & Tao, 2007). The value function approximation setting is, however, quite different, and the regression methods are not directly applicable. Regularization has been previously used in value function approximation (Taylor & Parr, 2009; Farahmand et al., 2008; Kolter & Ng, 2009). In comparison with LARS-TD (Kolter & Ng, 2009), an $L_1$-regularized value function approximation method, we explicitly show the influence of regularization on the sampling error, provide a well-founded method for selecting the regularization parameter, and solve the full control problem. In comparison with existing sampling bounds for ALP (de Farias & Van Roy, 2001), we do not assume that the optimal policy is available, make more general assumptions, and derive bounds that are independent of the number of features.

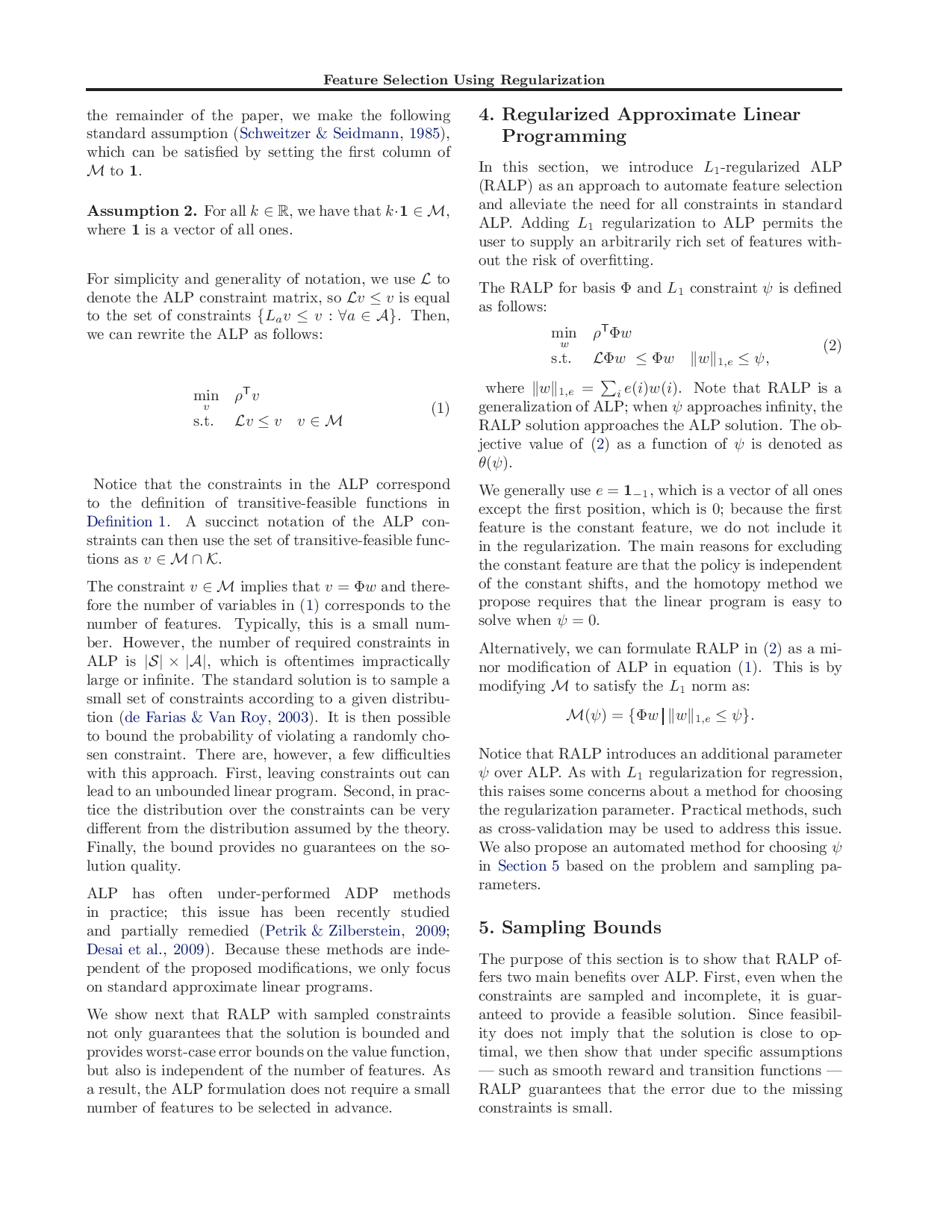

Our approach is based on approximate linear programming (ALP), which offers stronger theoretical guarantees than some other value function approximation algorithms. We describe ALP in Section 3 and RALP and its basic properties in Section 4. RALP, unlike ordinary ALPs, is guaranteed to compute bounded solutions. We also briefly describe a homotopy algorithm for solving RALP, which exhibits anytime behavior by gradually increasing the norm of feature weights. To develop methods that automatically select features with generalization guarantees, we propose general sampling bounds in Section 5. These sampling bounds, coupled with the homotopy method, can automatically choose the complexity of the features to minimize overfitting. Our experimental results in Section 6 show that the proposed approach with large feature sets is competitive with LSPI, even when LSPI is run with small feature spaces hand-selected for standard benchmark problems. Section 7 concludes with future work and a more detailed discussion of the relationship with other methods.

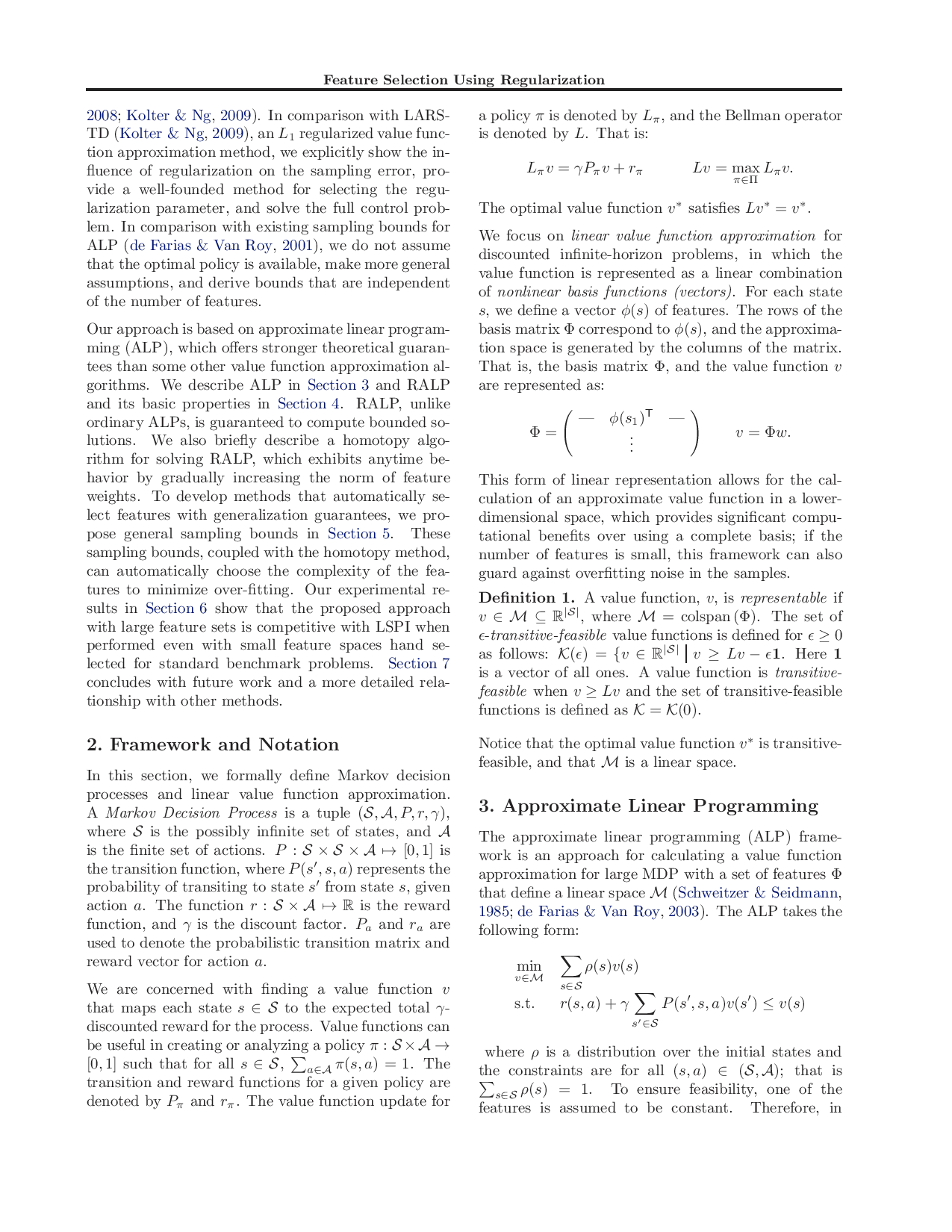

In this section, we formally define Markov decision processes and linear value function approximation. A Markov Decision Process is a tuple $(S, A, P, r, \gamma)$, where $S$ is the possibly infinite set of states, and $A$ is the finite set of actions. $P : S \times S \times A \to [0, 1]$ is the transition function, where $P(s', s, a)$ represents the probability of transitioning to state $s'$ from state $s$, given action $a$. The function $r : S \times A \to \mathbb{R}$ is the reward function, and $\gamma$ is the discount factor. $P_a$ and $r_a$ are used to denote the probabilistic transition matrix and reward vector for action $a$.
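As a concrete illustration of the tuple, the following minimal sketch stores one transition matrix $P_a$ and one reward vector $r_a$ per action for a finite state set. The class name `FiniteMDP`, its fields, the index order `P[a][s][s_next]`, the restriction to finitely many states, and the range $0 \le \gamma < 1$ are all assumptions made for this example rather than details taken from the text.

```python
from dataclasses import dataclass
from typing import Dict, List

Matrix = List[List[float]]
Vector = List[float]


@dataclass
class FiniteMDP:
    """Hypothetical finite-state container for the tuple (S, A, P, r, gamma)."""
    n_states: int
    P: Dict[str, Matrix]  # P[a][s][s_next]: probability of s -> s_next under action a
    r: Dict[str, Vector]  # r[a][s]: reward for taking action a in state s
    gamma: float          # discount factor, assumed here to lie in [0, 1)

    def validate(self, tol: float = 1e-9) -> None:
        """Raise ValueError if the components do not form a well-defined MDP."""
        if not 0.0 <= self.gamma < 1.0:
            raise ValueError("gamma must lie in [0, 1)")
        if set(self.P) != set(self.r):
            raise ValueError("P and r must be defined for the same actions")
        for a, P_a in self.P.items():
            if len(P_a) != self.n_states or len(self.r[a]) != self.n_states:
                raise ValueError(f"wrong number of states for action {a!r}")
            for s, row in enumerate(P_a):
                if len(row) != self.n_states or any(p < 0.0 for p in row):
                    raise ValueError(f"invalid row P[{a!r}][{s}]")
                if abs(sum(row) - 1.0) > tol:
                    raise ValueError(f"P[{a!r}][{s}] does not sum to 1")


# Two-state, two-action toy problem (numbers are made up for illustration).
mdp = FiniteMDP(
    n_states=2,
    P={"stay": [[1.0, 0.0], [0.0, 1.0]],
       "move": [[0.1, 0.9], [0.9, 0.1]]},
    r={"stay": [0.0, 1.0], "move": [-0.1, -0.1]},
    gamma=0.95,
)
mdp.validate()  # passes silently when every P_a is row-stochastic
```

Keeping one matrix per action mirrors the $P_a$, $r_a$ notation, and the row-sum check enforces that each $P_a$ is a probability distribution over next states.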

We are concerned with finding a value function $v$ that maps each state $s \in S$ to the expected total $\gamma$-discounted reward for the process. Value functions can be useful in creating or analyzing a policy $\pi : S \times A \to [0, 1]$ such that for all $s \in S$, $\sum_{a \in A} \pi(s, a) = 1$. The transition and reward functions for a given policy are denoted by $P_\pi$ and $r_\pi$. The value function update for a policy $\pi$ is denoted by $L_\pi$, and the Bellman operator is denoted by $L$. That is:
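The displayed definitions do not appear in this text. Assuming the standard forms, which agree with the notation for the policy's transition function, reward function, and discount factor used above, the two operators can be written as:

$$
L_\pi v = r_\pi + \gamma P_\pi v, \qquad L v = \max_{\pi} L_\pi v .
$$

Under this assumed form, the maximum is taken componentwise, that is, separately for each state. For a finite state set, the first operator expands to $(L_\pi v)(s) = \sum_{a \in A} \pi(s, a) \left[ r(s, a) + \gamma \sum_{s' \in S} P(s', s, a)\, v(s') \right]$.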

The optimal value function v * satisfi

…(Full text truncated)…

This content is AI-processed based on ArXiv data.