Decoding Generalized Concatenated Codes Using Interleaved Reed-Solomon Codes

Generalized Concatenated codes are a code construction consisting of a number of outer codes whose code symbols are protected by an inner code. As outer codes, we assume the most frequently used Reed-Solomon codes; as inner code, we assume some linea…

Authors: Christian Senger, Vladimir Sidorenko, Martin Bossert

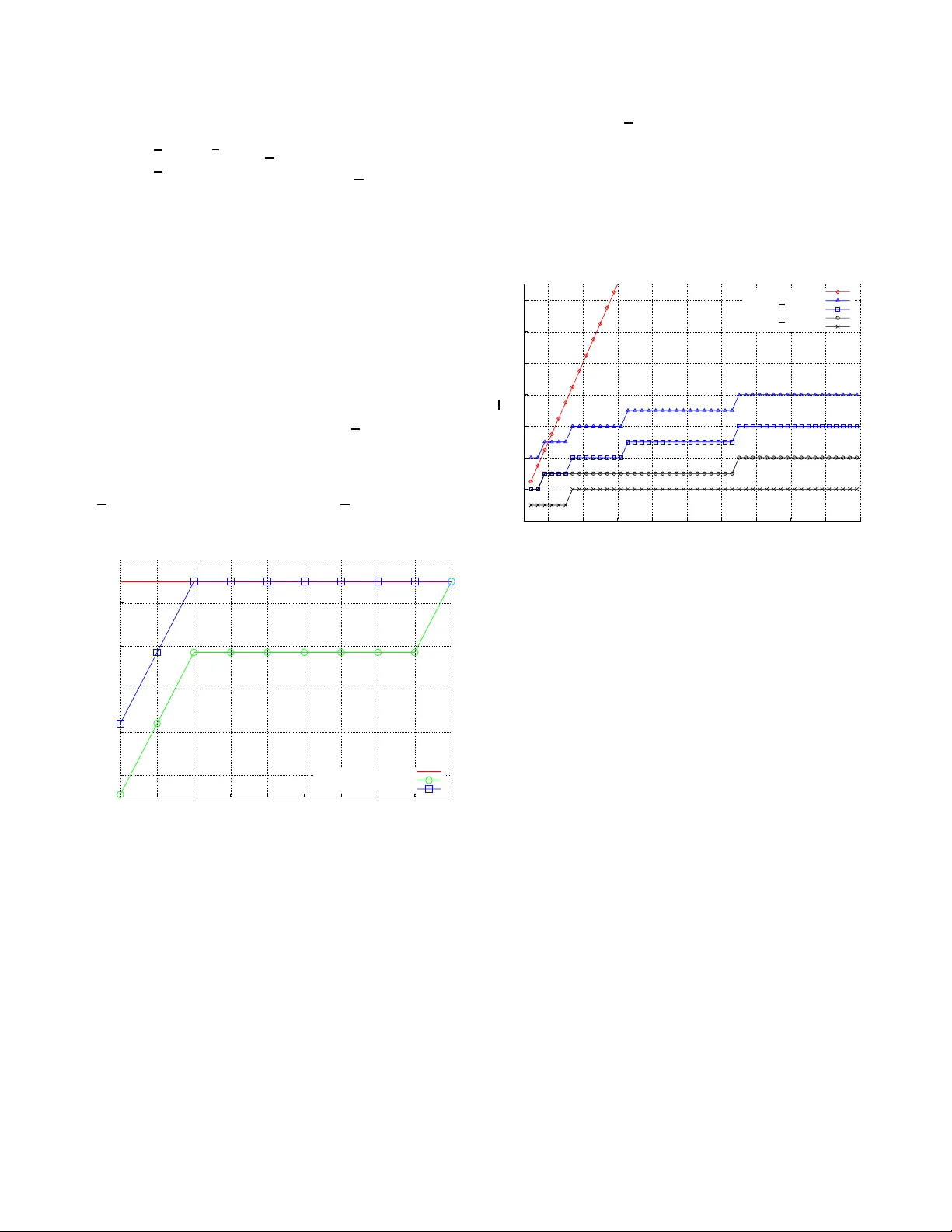

Christian Senger, Vladimir Sidorenko, Martin Bossert
Inst. of Telecommunications and Applied Information Theory, Ulm University, Ulm, Germany
{christian.senger | vladimir.sidorenko | martin.bossert}@uni-ulm.de

Victor Zyablov
Inst. for Information Transmission Problems, Russian Academy of Sciences, Moscow, Russia
zyablov@iitp.ru

Abstract: Generalized Concatenated codes are a code construction consisting of a number of outer codes whose code symbols are protected by an inner code. As outer codes, we assume the most frequently used Reed–Solomon codes; as inner code, we assume some linear block code which can be decoded up to half its minimum distance. Decoding up to half the minimum distance of Generalized Concatenated codes is classically achieved by the Blokh–Zyablov–Dumer algorithm, which iteratively decodes by first using the inner decoder to get an estimate of the outer code words and then using an outer error/erasure decoder with a varying number of erasures determined by a set of pre-calculated thresholds. In this paper, a modified version of the Blokh–Zyablov–Dumer algorithm is proposed, which exploits the fact that a number of outer Reed–Solomon codes with average minimum distance $\bar{d}$ can be grouped into one single Interleaved Reed–Solomon code which can be decoded beyond $\bar{d}/2$. On the one hand, this makes it possible to skip a number of decoding iterations; on the other hand, it significantly reduces the complexity of each decoding iteration while maintaining the decoding performance.

I. INTRODUCTION

In 1966, Forney introduced the concept of concatenated codes [1]. It was generalized in 1976 by Blokh and Zyablov to Generalized Concatenated (GC) codes [2]. The GC approach makes it possible to design powerful codes with large block lengths using short, and thus easily decodable, component codes.
The designed distance and the performance of GC codes can be easily estimated theoretically. This makes it possible to design GC codes for applications such as optical lines, where block error rates on the order of $10^{-15}$ are required, a region in which simulations are not feasible. GC codes can be decoded up to half their minimum distance using a sufficiently large number of decoding attempts for each outer code in the Blokh–Zyablov–Dumer algorithm (BZDA) [3], see also Dumer [4], [5]. We should also mention the papers by Sorger [6] and Kötter [7], who suggested interesting modifications of a bounded minimum distance (BMD) decoder such that multi-attempt decoding of the outer code can be made "in one step". Nielsen suggested in [8] to use the Guruswami–Sudan list decoding algorithm [9] for decoding the outer codes and showed that in this case, too, one decoding attempt is sufficient to allow decoding up to half the minimum distance of the GC code. In this paper, we employ another idea, which makes it possible to decrease the number of outer decodings and also to skip many decodings of the inner code. This idea is based on Interleaved Reed–Solomon (IRS) codes [10]. Other aspects of using IRS codes in concatenated codes were considered in [11], [12].

The rest of our paper is organized as follows: In Section II we explain GC codes as well as the required notations and assumptions. Section III explains IRS codes and where they appear within GC codes. Section IV gives an overview of the BZDA as introduced in [3]. A generalization of this is given in Section V, leading to a new algorithm which maintains the error-correcting performance of GC codes while reducing the number of outer decodings and skipping many inner decodings.

This work has been supported by DFG, Germany, under grants BO 867/15 and BO 867/17. Vladimir Sidorenko is on leave from IITP, Russian Academy of Sciences, Moscow, Russia.
In Section VI we illustrate our results by means of some examples.

II. GENERALIZED CONCATENATED CODES AND THEIR DECODING

Encoding of a GC code $\mathcal{C}(n, k, d)$ of order $\ell$ is as follows, where we restrict ourselves to outer RS codes $\mathcal{C}^{\mathrm{o}}_l(n^{\mathrm{o}}, k^{\mathrm{o}}_l, d^{\mathrm{o}}_l)$, $l = 0, \dots, \ell-1$, over the binary extension field $\mathbb{F}_{2^m}$ and an inner binary block code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$ with dimension $k^{\mathrm{i}} = \ell m$.

The first step is outer encoding. For this we take $\ell$ code words $c^{\mathrm{o}}_l$ of the outer codes $\mathcal{C}^{\mathrm{o}}_l$ and put them as rows into an $\ell \times n^{\mathrm{o}}$ matrix

$$A := \begin{pmatrix} c^{\mathrm{o}}_0 \\ \vdots \\ c^{\mathrm{o}}_{\ell-1} \end{pmatrix}, \quad c^{\mathrm{o}}_l \in \mathcal{C}^{\mathrm{o}}_l \text{ over } \mathbb{F}_{2^m}.$$

The second step is inner encoding, where the binary counterparts of the columns $j = 0, \dots, n^{\mathrm{o}}-1$ of $A$ are encoded by the inner code to obtain code word columns $c^{\mathrm{i},T}_j \in \mathcal{C}^{\mathrm{i}}$. The result of this procedure is a binary $n^{\mathrm{i}} \times n^{\mathrm{o}}$ matrix $C := (c^{\mathrm{i},T}_0, \dots, c^{\mathrm{i},T}_{n^{\mathrm{o}}-1})$, which in turn is a code word of the GC code $\mathcal{C}$.

The inner code $\mathcal{C}^{\mathrm{i}}$ has the following nested structure: The code words are obtained by encoding an arbitrary binary information vector $(u_0, \dots, u_{k^{\mathrm{i}}-1})$. If we fix $l \in \{0, \dots, \ell-1\}$ groups of $m$ information bits starting from $u_0$ and encode, we obtain a subcode $\mathcal{C}^{\mathrm{i}}_l(u_0, \dots, u_{(l-1)m-1}) \subseteq \mathcal{C}^{\mathrm{i}}$ with distance $d^{\mathrm{i}}_l$. Note that $d^{\mathrm{i}}_0 \le \dots \le d^{\mathrm{i}}_{\ell-1}$ due to the special choice of encoders for the subcodes $\mathcal{C}^{\mathrm{i}}_l$. Obviously, $\mathcal{C}^{\mathrm{i}}_0 = \mathcal{C}^{\mathrm{i}}$. The minimum distance of the GC code $\mathcal{C}$ is then lower bounded by its designed distance

$$d := \min\{d^{\mathrm{o}}_0 d^{\mathrm{i}}_0, \dots, d^{\mathrm{o}}_{\ell-1} d^{\mathrm{i}}_{\ell-1}\}. \tag{1}$$

The channel adds a binary error matrix $E$ of weight $e$ to the transmitted concatenated code matrix $C$, resulting in a received matrix $R := (r^{\mathrm{i},T}_0, \dots, r^{\mathrm{i},T}_{n^{\mathrm{o}}-1}) = C + E$ at the receiver. As output of GC decoding with the BZDA we want to obtain a matrix

$$\bar{A} := (\bar{a}_{i,j})_{i=0,\dots,\ell-1,\; j=0,\dots,n^{\mathrm{o}}-1} = \begin{pmatrix} \bar{c}^{\mathrm{o}}_0 \\ \vdots \\ \bar{c}^{\mathrm{o}}_{\ell-1} \end{pmatrix}, \quad \bar{c}^{\mathrm{o}}_l \in \mathcal{C}^{\mathrm{o}}_l \text{ over } \mathbb{F}_{2^m},$$

which is an estimate of the matrix $A$. Decoding consists of $\ell$ iterations, where iteration $l = 0, \dots, \ell-1$ is as follows:

(i) Decoding of all columns $r^{\mathrm{i},T}_j$, $j = 0, \dots, n^{\mathrm{o}}-1$, of the received matrix $R$ by BMD decoders for the nested subcodes $\mathcal{C}^{\mathrm{i}}_l(\bar{a}_{0,j}, \dots, \bar{a}_{l-1,j})$, correcting up to $\lfloor (d^{\mathrm{i}}_l - 1)/2 \rfloor$ errors and altogether yielding a new estimate $\tilde{c}^{\mathrm{o}}_l$ for the $l$-th row of $\bar{A}$.

(ii) Execution of $z_l$ attempts of decoding $\tilde{c}^{\mathrm{o}}_l$ with $\mathcal{C}^{\mathrm{o}}_l$ and a different number of erased symbols in each attempt, which yields a candidate list of at most size $z_l$. Finally, the "best" candidate $\bar{c}^{\mathrm{o}}_l$ from this list has to be selected using some criterion and inserted into $\bar{A}$.

Note that because of this recurrent structure it is sufficient to consider the BZDA only for ordinary concatenated codes, as decoding of GC codes simply means repeated application of this special case for the sequence $\mathcal{C}^{\mathrm{i}}_0, \dots, \mathcal{C}^{\mathrm{i}}_l(\bar{a}_{0,j}, \dots, \bar{a}_{l-1,j}), \dots, \mathcal{C}^{\mathrm{i}}_{\ell-1}(\bar{a}_{0,j}, \dots, \bar{a}_{\ell-2,j})$ of the nested inner subcodes, $j = 0, \dots, n^{\mathrm{o}}-1$, and the corresponding outer codes [4], [5]. The details of the BZDA for ordinary concatenated codes are described in Section IV. In [3] it is shown that if $\forall l \in \{0, \dots, \ell-1\}: z_l = d^{\mathrm{i}}_l/2$ for even $d^{\mathrm{i}}$, the BZDA can decode up to $\lfloor (d^{\mathrm{o}}_l d^{\mathrm{i}}_l - 1)/2 \rfloor$ errors in the $l$-th iteration and thus by (1) also up to $\lfloor (d-1)/2 \rfloor$ errors in a GC code word.

III. INTERLEAVED REED–SOLOMON CODES

Observe that the matrix $A$ is just an interleaved set of $\ell$ different RS codes, hence an IRS code. For IRS codes an efficient decoding algorithm was suggested in [12], which has only $\ell$ times the complexity of the Berlekamp–Massey algorithm for decoding one single RS code.
The algorithm can correct at most

$$r(\ell) := \left\lfloor (\bar{d}^{\mathrm{o}} - 1)\, \frac{\ell}{\ell+1} \right\rfloor$$

erroneous columns of $A$, where $\bar{d}^{\mathrm{o}} := \frac{1}{\ell} \sum_{l=0}^{\ell-1} d^{\mathrm{o}}_l$ is the average minimum distance of the interleaved set of RS codes and

$$d^{\mathrm{o}}_l > r(\ell), \quad l = 0, \dots, \ell-1. \tag{2}$$

The IRS decoding algorithm from [12] yields a decoding failure with some probability. However, this probability can be made small and is neglected here. If the complete matrix $A$ does not satisfy (2), we can split it into a number of submatrices with the same length as $A$, which all fulfill (2) and thus can be decoded by the IRS decoding algorithm from [12]. Assume that

$$A_v := \begin{pmatrix} c^{\mathrm{o}}_v \\ \vdots \\ c^{\mathrm{o}}_{v+\tilde{\ell}-1} \end{pmatrix}, \quad c^{\mathrm{o}}_l \in \mathcal{C}^{\mathrm{o}}_l,$$

is such a submatrix of $A$ and forms an IRS code with average minimum distance $\bar{d}^{\mathrm{o}}$ that satisfies both constraint (2) and $\bar{d}^{\mathrm{o}} d^{\mathrm{i}}_v \tilde{\ell}/(\tilde{\ell}+1) \ge d$.

The main idea of applying the IRS decoding algorithm from [12] to GC codes is as follows: We can replace the $\tilde{\ell}$ iterations $v, \dots, v+\tilde{\ell}-1$ of the BZDA by the following single iteration:

(i) Decoding of all columns $r^{\mathrm{i},T}_j$, $j = 0, \dots, n^{\mathrm{o}}-1$, of the received matrix $R$ by BMD decoders for the subcodes $\mathcal{C}^{\mathrm{i}}_v(\bar{a}_{0,j}, \dots, \bar{a}_{v-1,j})$, correcting up to $\lfloor (d^{\mathrm{i}}_v - 1)/2 \rfloor$ errors and yielding an estimate $\tilde{A}_v$ for the submatrix $A_v$.

(ii) Execution of $z_v$ attempts of IRS decoding $\tilde{A}_v$ with a different number of erased columns in each attempt, which yields a candidate list of at most size $z_v$. Finally, the "best" candidate $\bar{A}_v$ from this list has to be selected using some criterion and inserted into $\bar{A}$.

As a result of this method, we skip $n^{\mathrm{o}}(\ell-1)$ inner decodings, and we will show that the number of decoding attempts required for the outer code to guarantee decoding up to $\lfloor (d^{\mathrm{o}} d^{\mathrm{i}}_v - 1)/2 \rfloor$ channel errors is much smaller than in the original BZDA, which in practice means $z_v \in \{2, 3\}$.
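The decoding radius $r(\ell)$ and constraint (2) can be checked with a few lines of Python. This is only a sketch of the arithmetic: the function name `irs_radius` and the second list of distances are illustrative assumptions, not taken from the paper or from [12].

```python
def irs_radius(outer_distances):
    """Return (r, ok) for an interleaved set of RS codes.

    r  = floor((d_avg - 1) * l / (l + 1)), the IRS decoding radius,
    ok = True if every d_l > r, i.e. constraint (2) holds.
    """
    l = len(outer_distances)
    total = sum(outer_distances)
    # (d_avg - 1) * l / (l + 1) equals (total - l) / (l + 1),
    # so integer floor division gives the radius exactly.
    r = (total - l) // (l + 1)
    ok = all(d > r for d in outer_distances)
    return r, ok


# Two RS codes with d = 33 each: radius 21, versus 16 for one BMD decoder.
print(irs_radius([33, 33]))     # (21, True)
# Hypothetical unequal distances: the weakest code violates (2),
# so this set would have to be split into submatrices.
print(irs_radius([33, 17, 9]))  # (14, False)
```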
Ultimately, our modified algorithm corrects up to half the minimum distance of the GC code.

IV. BZDA WITH OUTER BMD DECODING

In this section, we consider decoding of a simple concatenated code $\mathcal{C}$, which consists of the outer RS code $\mathcal{C}^{\mathrm{o}}$ and the inner binary code $\mathcal{C}^{\mathrm{i}}$. This corresponds to the $l$-th iteration of the refined BZDA from [4] for a GC code, where w.l.o.g. $\mathcal{C}^{\mathrm{o}} = \mathcal{C}^{\mathrm{o}}_l$, $\mathcal{C}^{\mathrm{i}} = \mathcal{C}^{\mathrm{i}}_l(\bar{a}_{0,j}, \dots, \bar{a}_{l-1,j})$ and $\forall i \in \{0, \dots, l-1\},\ j_1, j_2 \in \{0, \dots, n^{\mathrm{o}}-1\}: \bar{a}_{i,j_1} = \bar{a}_{i,j_2}$.

First (step (i) of the BZDA), we decode the columns $r^{\mathrm{i},T}_j$ of the received matrix $R$ by a BMD decoder for $\mathcal{C}^{\mathrm{i}}$, correcting up to $\lfloor (d^{\mathrm{i}}-1)/2 \rfloor$ errors and yielding code word estimates $\tilde{c}^{\mathrm{i},T}_j$ or decoding failures.

Decoding of the outer code $\mathcal{C}^{\mathrm{o}}$ is performed with respect to the ordered set of thresholds $\{T^{(z)}_1, \dots, T^{(z)}_z\}$ with $0 \le T^{(z)}_1 < \dots < T^{(z)}_z \le (d^{\mathrm{i}}-1)/2$. For each decoding attempt $k \in \{1, \dots, z\}$ the decoding results of the inner decoder depend on the threshold $T_k$ in the following manner: The symbols $\tilde{r}^{\mathrm{o}}_j(k) \in \mathbb{F}_{2^m} \cup \{\otimes\}$ delivered to the outer decoder at position $j$ are

$$\tilde{r}^{\mathrm{o}}_j(k) := \begin{cases} \mathrm{enc}^{-1}_{\mathcal{C}^{\mathrm{i}}}(\tilde{c}^{\mathrm{i}}_j), & d_H(r^{\mathrm{i}}_j, \tilde{c}^{\mathrm{i}}_j) \le T_k, \\ \otimes, & d_H(r^{\mathrm{i}}_j, \tilde{c}^{\mathrm{i}}_j) > T_k, \\ \otimes, & \text{failure of the inner decoder}, \end{cases} \tag{3}$$

where $r^{\mathrm{i},T}_j$ is the received word in the $j$-th column, $\tilde{c}^{\mathrm{i},T}_j$ is the result of inner decoding, $\mathrm{enc}^{-1}_{\mathcal{C}^{\mathrm{i}}}(\cdot)$ maps code words of $\mathcal{C}^{\mathrm{i}}$ to the corresponding $q$-ary information symbols, and $\otimes$ is the symbol for an erasure. As the result of outer decoding of $\tilde{r}^{\mathrm{o}}(k) := (\tilde{r}^{\mathrm{o}}_0(k), \dots, \tilde{r}^{\mathrm{o}}_{n^{\mathrm{o}}-1}(k))$ we obtain the outer code word estimate $\tilde{c}^{\mathrm{o}}(k) = (\tilde{c}^{\mathrm{o}}_0(k), \dots, \tilde{c}^{\mathrm{o}}_{n^{\mathrm{o}}-1}(k))$.
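Rule (3) is a simple per-column decision, sketched below in Python. The representation is an assumption made for illustration: each inner decoding result is a pair of Hamming distance and information symbol, an inner decoding failure is encoded as `(None, None)`, and the erasure symbol is modelled by `None`.

```python
ERASURE = None  # stand-in for the erasure symbol (assumed representation)


def outer_input_symbol(distance, info_symbol, threshold):
    """Apply rule (3) to one column.

    distance    -- Hamming distance between the received column and the
                   inner decoder output, or None on inner decoding failure
    info_symbol -- information symbol of the inner code word estimate
    threshold   -- threshold T_k of the current decoding attempt
    """
    if distance is None or distance > threshold:
        return ERASURE
    return info_symbol


def outer_input_word(inner_results, threshold):
    """Build the word handed to the outer decoder for one attempt."""
    return [outer_input_symbol(d, s, threshold) for d, s in inner_results]


# Hypothetical inner decoding results for four columns.
results = [(0, 5), (3, 7), (None, None), (1, 2)]
print(outer_input_word(results, 2))    # [5, None, None, 2]
print(outer_input_word(results, 2.5))  # same word: equal integer part
print(outer_input_word(results, 3))    # [5, 7, None, 2]
```

Because the distances are integers, two thresholds with the same integer part produce the same input word, as the thresholds 2 and 2.5 do here.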
From (3) it follows that thresholds with equal integer parts yield equal decoding attempts, so the number $z^\star$ of actual attempts may be smaller than the number $z$ of thresholds, i.e. $z^\star \le z$. The numbers of decoding errors and erasures occurring when decoding $\tilde{r}^{\mathrm{o}}(k)$ are denoted by $\varepsilon(k)$ and $\tau(k)$, respectively. The outer RS code $\mathcal{C}^{\mathrm{o}}$ can successfully decode as long as $2\varepsilon(k) + \tau(k) < d^{\mathrm{o}}$, since we assume outer BMD decoding in this section.

For a fixed number $z$ of thresholds, the following theorem fixes the optimum values of the thresholds such that the decoding bound of the BZDA is maximized.

Theorem 1 (Blokh, Zyablov [3]): For a concatenated code with outer BMD-decoded RS code and inner BMD-decoded code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$, the set of thresholds $\{T^{(z)}_1, \dots, T^{(z)}_z\}$ which maximizes the decoding bound is determined by

$$T^{(z)}_k := k \cdot \frac{d^{\mathrm{i}} + 1}{2z + 1} - 1, \quad k \in \{1, \dots, z\}. \tag{4}$$

If the thresholds are chosen according to (4), the decoding bound is given by the following theorem in the sense that the transmitted code word is among the elements of the result list $\mathcal{L} := \{\tilde{c}^{\mathrm{o}}(1), \dots, \tilde{c}^{\mathrm{o}}(z^\star)\}$.

Theorem 2 (Blokh, Zyablov [3]): For a concatenated code with outer BMD-decoded RS code $\mathcal{C}^{\mathrm{o}}(n^{\mathrm{o}}, k^{\mathrm{o}}, d^{\mathrm{o}})$ and inner BMD-decoded code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$, the decoding bound is

$$e < d^{\mathrm{o}} \left( \lfloor T^{(z)}_z \rfloor + 1 \right) = d^{\mathrm{o}} \left\lfloor z \cdot \frac{d^{\mathrm{i}} + 1}{2z + 1} \right\rfloor. \tag{5}$$

In Figure 1, the decoding bound (5) is plotted with circles versus the number of thresholds $z$ for an example with $d^{\mathrm{o}} = 33$, $d^{\mathrm{i}} = 20$. It can clearly be seen that the bound reaches $d^{\mathrm{o}} d^{\mathrm{i}}/2$, i.e. half the minimum distance of the concatenated code, with increasing number of thresholds. The bound obviously only depends on the greatest threshold $T^{(z)}_z$. If we let the number of thresholds tend to infinity, we see that the greatest threshold satisfies

$$T^{(z)}_z \xrightarrow{\; z \to \infty \;} \frac{d^{\mathrm{i}} - 1}{2} =: T^{(\infty)}_\infty.$$
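The threshold rule (4) and the bound (5) are easy to evaluate numerically. The following Python sketch does so for the example values $d^{\mathrm{o}} = 33$, $d^{\mathrm{i}} = 20$ mentioned above; the function names are illustrative, and exact rational arithmetic is used so that the floor is not affected by rounding.

```python
from fractions import Fraction
from math import floor


def bmd_threshold(k, z, d_inner):
    """Threshold T_k^(z) from (4), as an exact fraction."""
    return Fraction(k * (d_inner + 1), 2 * z + 1) - 1


def bmd_bound(z, d_outer, d_inner):
    """Right-hand side of (5): decoding succeeds for e below this value."""
    return d_outer * (floor(bmd_threshold(z, z, d_inner)) + 1)


# Example values from the text: d_outer = 33, d_inner = 20.
bounds = [bmd_bound(z, 33, 20) for z in range(1, 11)]
print(bounds)
# [231, 264, 297, 297, 297, 297, 297, 297, 297, 330]
print(33 * 20 // 2)  # 330, i.e. d_outer * d_inner / 2
```

With these values the bound first reaches $d^{\mathrm{o}} d^{\mathrm{i}}/2 = 330$ at $z = 10$.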
Since $T^{(\infty)}_\infty = (d^{\mathrm{i}}-1)/2$, the greatest possible integer threshold $\lfloor T^{(\infty)}_\infty \rfloor$ is $d^{\mathrm{i}}/2 - 1$ if $d^{\mathrm{i}}$ is even, and $(d^{\mathrm{i}}-1)/2$ if $d^{\mathrm{i}}$ is odd. This leads to the following theorem, which confirms our observation from Figure 1.

Theorem 3: If the number of thresholds $z$ tends to infinity, the decoding bound of the BZDA for a concatenated code $\mathcal{C}$ with outer BMD-decoded RS code $\mathcal{C}^{\mathrm{o}}(n^{\mathrm{o}}, k^{\mathrm{o}}, d^{\mathrm{o}})$ and inner BMD-decoded code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$ is

$$e < \frac{d^{\mathrm{o}} d^{\mathrm{i}}}{2}. \tag{6}$$

Proof: The decoding bound (5) is non-decreasing in $z$, hence it assumes its maximum for $z \to \infty$. Consider two cases:

(i) $d^{\mathrm{i}}$ is even, thus the greatest possible integer threshold is $d^{\mathrm{i}}/2 - 1$ and $e < d^{\mathrm{o}}(d^{\mathrm{i}}/2 - 1 + 1) = d^{\mathrm{o}} d^{\mathrm{i}}/2$.

(ii) $d^{\mathrm{i}}$ is odd, hence the greatest possible integer threshold is $(d^{\mathrm{i}}-1)/2$ and $e < d^{\mathrm{o}}\left((d^{\mathrm{i}}-1)/2 + 1\right) = d^{\mathrm{o}} d^{\mathrm{i}}/2 + d^{\mathrm{o}}/2$, which is larger than $d^{\mathrm{o}} d^{\mathrm{i}}/2$, so (6) is guaranteed in both cases.

In the following, we restrict ourselves to binary error matrices $E$ meeting (6). To obtain decoding bound (6), the greatest possible integer threshold needs to be among the threshold set. For even $d^{\mathrm{i}}$ this greatest integer threshold is $T_{\text{even}} := \lfloor T^{(\infty)}_\infty \rfloor = d^{\mathrm{i}}/2 - 1$, which is strictly smaller than the limit $T^{(\infty)}_\infty$. By the following lemma it is already reached for a rather small value of $z$.

Lemma 1: For a concatenated code with inner BMD-decoded code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$ and outer BMD-decoded RS code $\mathcal{C}^{\mathrm{o}}$, the greatest possible integer threshold $T_{\text{even}}$ is reached if $z \ge \underline{z} := d^{\mathrm{i}}/2$.

Proof: Solve $T^{(z)}_z \ge T_{\text{even}}$ for $z$.

We can thus obtain (6) with only $d^{\mathrm{i}}/2$ thresholds according to (4) if $d^{\mathrm{i}}$ is even. If, however, $d^{\mathrm{i}}$ is odd, the greatest possible integer threshold is $T_{\text{odd}} := T^{(\infty)}_\infty = (d^{\mathrm{i}}-1)/2$, i.e. the limit $T^{(\infty)}_\infty$ itself. It can obviously only be reached for an infinite number of thresholds.
However, the number of non-negative integers below $T_{\text{odd}}$ is $(d^{\mathrm{i}}-1)/2$, hence the number of actual decoding attempts is upper bounded by $(d^{\mathrm{i}}-1)/2$. It follows that even though the number of required thresholds is infinite in the case of odd $d^{\mathrm{i}}$, only $z^\star = (d^{\mathrm{i}}-1)/2$ outer decoding attempts are sufficient to achieve decoding bound (6).

Up to now, we only know that the transmitted outer code word $c^{\mathrm{o}}$ is somewhere within the result list $\mathcal{L}$ of the BZDA if (6) is fulfilled. The following lemma provides a means of exactly determining its position among the elements of $\mathcal{L}$.

Lemma 2 (Blokh, Zyablov [3]): Let $t(k) := \sum_{j=0}^{n^{\mathrm{o}}-1} t_j(k)$ with

$$t_j(k) := \begin{cases} \Delta_j, & \text{if } \tilde{c}^{\mathrm{o}}_j(k) = \mathrm{enc}^{-1}_{\mathcal{C}^{\mathrm{i}}}(\tilde{c}^{\mathrm{i}}_j), \\ d^{\mathrm{i}} - \Delta_j, & \text{if } \tilde{c}^{\mathrm{o}}_j(k) \ne \mathrm{enc}^{-1}_{\mathcal{C}^{\mathrm{i}}}(\tilde{c}^{\mathrm{i}}_j), \\ d^{\mathrm{i}}/2, & \text{failure of the inner decoder}, \end{cases}$$

and $\Delta_j := d_H(r^{\mathrm{i}}_j, \tilde{c}^{\mathrm{i}}_j)$. Assume $e < d^{\mathrm{o}} d^{\mathrm{i}}/2$ and that $T_{k_0}$ is a threshold with $\tilde{c}^{\mathrm{o}}(k_0) = c^{\mathrm{o}}$. Then

$$t(k_0) < \frac{d^{\mathrm{o}} d^{\mathrm{i}}}{2}, \tag{7}$$

and $\forall k \in \{1, \dots, |\mathcal{L}|\},\ k \ne k_0: t(k) > d^{\mathrm{o}} d^{\mathrm{i}}/2$.

The lemma guarantees that only the transmitted outer code word $c^{\mathrm{o}} = \tilde{c}^{\mathrm{o}}(k_0)$ fulfills (7), i.e. no further decoding attempts have to be executed as soon as (7) is fulfilled for the smallest threshold index $k \in \{1, \dots, z\}$. Then, we set $k_0 := k$ and choose $\bar{c}^{\mathrm{o}} = \tilde{c}^{\mathrm{o}}(k_0)$.

V. BZDA WITH OUTER IRS CODES, I.E. OUTER BD DECODING

Now we consider the case where $\mathcal{C}^{\mathrm{o}}$ is an IRS code, i.e. a row-wise arrangement of $\ell \ge 2$ RS codes of equal length but potentially different dimensions, which are decoded collaboratively as described in Section III. This allows $\mathcal{C}^{\mathrm{o}}$ to correct a larger number of errors, leading to a decoding success as long as $\lambda \varepsilon(k) + \tau(k) \le d^{\mathrm{o}} - 1$, where $1 < \lambda := (\ell+1)/\ell < 2$. This means Bounded Distance (BD) decoding. Our aim now is to derive formulae corresponding to (4) and (5) for this specific case.
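The difference between the two success conditions can be made concrete with a short Python sketch. It only evaluates the inequalities $2\varepsilon + \tau < d^{\mathrm{o}}$ (BMD) and $\lambda\varepsilon + \tau \le d^{\mathrm{o}} - 1$ (BD) for given error and erasure counts; the function names and the sample counts are illustrative assumptions.

```python
from fractions import Fraction


def bmd_succeeds(errors, erasures, d_outer):
    """Outer BMD decoding condition: 2*eps + tau < d_outer."""
    return 2 * errors + erasures < d_outer


def bd_succeeds(errors, erasures, d_outer, num_codes):
    """Collaborative (BD) condition: lam*eps + tau <= d_outer - 1,
    with lam = (l + 1) / l for l interleaved RS codes."""
    lam = Fraction(num_codes + 1, num_codes)
    return lam * errors + erasures <= d_outer - 1


# d_outer = 33 with 20 erroneous and 2 erased columns (hypothetical counts):
print(bmd_succeeds(20, 2, 33))    # False: 2*20 + 2 = 42
print(bd_succeeds(20, 2, 33, 2))  # True:  1.5*20 + 2 = 32
print(bd_succeeds(22, 0, 33, 2))  # False: 1.5*22 = 33
```

For integer counts the BMD condition is the same inequality with $\lambda = 2$, so a smaller $\lambda$ directly enlarges the set of correctable error and erasure patterns.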
In doing so, we generalize the approach for outer BMD decoding from [3]. The procedure is as follows: Let $e_{\text{fail}}$ be the smallest number of channel errors for a given set of thresholds $\{T^{(z)}_1, \dots, T^{(z)}_z\}$ such that all decoding attempts $k \in \{1, \dots, z\}$ fail, i.e. such that

$$\forall k \in \{1, \dots, z\}: \lambda \varepsilon(k) + \tau(k) > d^{\mathrm{o}} - 1. \tag{8}$$

We determine

$$e_{\text{fail}} := \min_{(\varepsilon(1), \tau(1), \dots, \varepsilon(z), \tau(z))} \{e\}$$

under the condition that (8) is fulfilled. Then, we find the set $\{T^{(z)}_1, \dots, T^{(z)}_z\}$ of thresholds which maximizes this minimum, i.e. the set of thresholds which maximizes the decoding bound. This set is determined by the expression

$$\{T^{(z)}_1, \dots, T^{(z)}_z\} := \arg\max_{\{\bar{T}^{(z)}_1, \dots, \bar{T}^{(z)}_z\}} \{e_{\text{fail}}\}.$$

The detailed derivation is too involved to be presented here, so we confine ourselves to the results in the form of the following theorems.

Theorem 4: For a concatenated code $\mathcal{C}$ with outer collaboratively decoded IRS code $\mathcal{C}^{\mathrm{o}}$ consisting of $\ell$ RS codes and inner BMD-decoded code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$, the set of thresholds $\{T^{(z)}_1, \dots, T^{(z)}_z\}$ which maximizes the decoding bound is defined by

$$T^{(z)}_k := b - a(\lambda - 1)^k \tag{9}$$

with

$$b := \frac{d^{\mathrm{i}} - 1 + \lambda(\lambda-1)^z}{2 - \lambda(\lambda-1)^z}, \quad a := \frac{d^{\mathrm{i}} + 1}{2 - \lambda(\lambda-1)^z}, \quad k \in \{1, \dots, z\},$$

where $z$ is the number of thresholds and $1 < \lambda = (\ell+1)/\ell < 2$.

Theorem 5: For a concatenated code $\mathcal{C}$ with outer collaboratively decoded IRS code $\mathcal{C}^{\mathrm{o}}(n^{\mathrm{o}}, k^{\mathrm{o}}, d^{\mathrm{o}})$ consisting of $\ell$ RS codes and $z$ thresholds chosen as in (9), the decoding bound is given by

$$e < d^{\mathrm{o}} \left( \lfloor T^{(z)}_z \rfloor + 1 \right). \tag{10}$$

By Theorem 5 the decoding bound only depends on the threshold $T^{(z)}_z$, the greatest one among the ordered threshold set $\{T^{(z)}_1, \dots, T^{(z)}_z\}$. Hence to maximize the decoding bound (10) we have to maximize $T^{(z)}_z$.
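As a numerical check of (9) and (10), the Python sketch below evaluates the thresholds with exact fractions for $\ell = 2$ collaboratively decoded RS codes and the example distances $d^{\mathrm{o}} = 33$, $d^{\mathrm{i}} = 20$ used elsewhere in the paper. The function names are illustrative assumptions.

```python
from fractions import Fraction
from math import floor


def irs_threshold(k, z, d_inner, num_codes):
    """Threshold T_k^(z) from (9) for l = num_codes interleaved RS codes."""
    lam = Fraction(num_codes + 1, num_codes)
    x = lam * (lam - 1) ** z           # common term lam * (lam - 1)^z
    b = (d_inner - 1 + x) / (2 - x)
    a = (d_inner + 1) / (2 - x)
    return b - a * (lam - 1) ** k


def irs_bound(z, d_outer, d_inner, num_codes):
    """Right-hand side of (10): decoding succeeds for e below this value."""
    return d_outer * (floor(irs_threshold(z, z, d_inner, num_codes)) + 1)


# l = 2, d_outer = 33, d_inner = 20
print([irs_bound(z, 33, 20, 2) for z in range(1, 5)])  # [264, 297, 330, 330]
print(irs_threshold(3, 3, 20, 2))                      # 265/29, about 9.14
```

Under these assumptions the bound reaches $d^{\mathrm{o}} d^{\mathrm{i}}/2 = 330$ with three thresholds, since $\lfloor T^{(3)}_3 \rfloor = 9 = d^{\mathrm{i}}/2 - 1$.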
Since the threshold location function (9) is non-decreasing, the greatest threshold occurs for $z \to \infty$ and is $T^{(\infty)}_\infty := (d^{\mathrm{i}}-1)/2$. The following theorem states the decoding bound for this greatest possible threshold.

Theorem 6: Let $\mathcal{C}$ be a concatenated code with inner BMD-decoded code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$ and outer IRS code $\mathcal{C}^{\mathrm{o}}$ with $\ell > 2$. If the maximum possible integer threshold is among the threshold set, the decoding bound is given by

$$e < \frac{d^{\mathrm{o}} d^{\mathrm{i}}}{2}.$$

Proof: Inserting the integer parts $T_{\text{even}} := \lfloor T^{(\infty)}_\infty \rfloor = d^{\mathrm{i}}/2 - 1$ and $T_{\text{odd}} := T^{(\infty)}_\infty = (d^{\mathrm{i}}-1)/2$, respectively, of the greatest possible thresholds into bound (10) proves the statement.

For even $d^{\mathrm{i}}$, the greatest possible integer threshold $T_{\text{even}}$ is already reached with a finite number of thresholds, i.e. if

$$z \ge \underline{z} := \min\{z\} \ \text{s.t.} \ \lfloor T^{(z)}_z \rfloor = T_{\text{even}} = d^{\mathrm{i}}/2 - 1.$$

Thus for even $d^{\mathrm{i}}$ the finite threshold set

$$\mathcal{T}_{\text{even}} := \{T^{(\underline{z})}_1, \dots, T^{(\underline{z})}_{\underline{z}}\} \tag{11}$$

is sufficient to obtain the maximum of (10).

If, on the other hand, $d^{\mathrm{i}}$ is odd, the greatest possible integer threshold $T_{\text{odd}}$ is $T^{(\infty)}_\infty$ itself, hence the number of required thresholds is in fact infinite. But since we know that decoding attempts corresponding to thresholds with equal integer parts coincide, we can skip all thresholds within the interval $(T^{(\infty)}_\infty - 1, T^{(\infty)}_\infty)$ by the following lemma.

Lemma 3: For a concatenated code with inner BMD-decoded code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$ and outer IRS code $\mathcal{C}^{\mathrm{o}}$ with $\ell$ collaboratively decoded RS codes, $T^{(\infty)}_\infty - 1 = (d^{\mathrm{i}}-1)/2 - 1$ is reached if $k \ge \underline{k} := \log_\ell\left((d^{\mathrm{i}}+1)/2\right)$.

Proof: If $z \to \infty$, then the threshold location function (9) becomes $T^{(\infty)}_k := (d^{\mathrm{i}}-1)/2 - (d^{\mathrm{i}}+1)(\lambda-1)^k/2$. Since $\lambda - 1 = 1/\ell$, we have $T^{(\infty)}_k \ge (d^{\mathrm{i}}-1)/2 - 1 \Leftrightarrow \ell^k \ge (d^{\mathrm{i}}+1)/2 \Leftrightarrow k \ge \log_\ell\left((d^{\mathrm{i}}+1)/2\right)$.
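The condition used in the proof of Lemma 3 can be evaluated without logarithms by testing $T^{(\infty)}_k \ge (d^{\mathrm{i}}-1)/2 - 1$ directly. The Python sketch below does this with exact fractions; taking the smallest integer $k$ that satisfies the inequality is an assumption about how the bound is rounded, and the function names and sample distances are illustrative.

```python
from fractions import Fraction


def limit_threshold(k, d_inner, num_codes):
    """T_k^(inf) = (d_i - 1)/2 - (d_i + 1) * (1/l)^k / 2, using lam - 1 = 1/l."""
    return (Fraction(d_inner - 1, 2)
            - Fraction(d_inner + 1, 2) * Fraction(1, num_codes) ** k)


def smallest_k(d_inner, num_codes):
    """Smallest integer k >= 1 with T_k^(inf) >= T_inf - 1 (assumed rounding)."""
    target = Fraction(d_inner - 1, 2) - 1
    k = 1
    while limit_threshold(k, d_inner, num_codes) < target:
        k += 1
    return k


# Odd inner distance d_i = 21, so (d_i + 1)/2 = 11.
print(smallest_k(21, 2))  # 4, since 2**4 = 16 >= 11 > 2**3
print(smallest_k(21, 8))  # 2, since 8**2 = 64 >= 11 > 8
```

The loop terminates because $T^{(\infty)}_k$ increases towards $(d^{\mathrm{i}}-1)/2$ as $k$ grows.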
By Lemma 3 we know that all thresholds $T^{(\infty)}_k$ in the range $\underline{k} < k < \infty$ have equal integer parts and therefore can be omitted. Thus, instead of the infinite threshold set $\{T^{(\infty)}_1, \dots, T^{(\infty)}_\infty\}$ it is equivalent to consider the finite set

$$\mathcal{T}_{\text{odd}} := \{T^{(\infty)}_1, \dots, T^{(\infty)}_{\underline{k}}\} \cup \{T^{(\infty)}_\infty\} \tag{12}$$

with only $\underline{k} + 1 = \log_\ell\left((d^{\mathrm{i}}+1)/2\right) + 1$ elements.

We know that if we use the sets $\mathcal{T}_{\text{even}}$ and $\mathcal{T}_{\text{odd}}$ of thresholds according to (9) for even and odd inner minimum distance $d^{\mathrm{i}}$, respectively, we can decode up to half the minimum distance of the concatenated code $\mathcal{C}$. However, the integer parts of the thresholds in these sets are not necessarily pairwise different. Since decoding attempts with respect to thresholds with equal integer parts coincide, the number $z^\star$ of actual decoding attempts which need to be executed to decode up to half the minimum distance of $\mathcal{C}$ may be smaller than the number of thresholds. We can calculate it explicitly by

$$z^\star = \begin{cases} \left| \bigcup_{k=1}^{\underline{z}} \left\{ \lfloor T^{(\underline{z})}_k \rfloor \right\} \right| \le \underline{z}, & d^{\mathrm{i}} \text{ even}, \\[4pt] \left| \bigcup_{k=1}^{\underline{k}} \left\{ \lfloor T^{(\infty)}_k \rfloor \right\} \cup \left\{ \lfloor T^{(\infty)}_\infty \rfloor \right\} \right| \le \underline{k} + 1, & d^{\mathrm{i}} \text{ odd}. \end{cases} \tag{13}$$

VI. CONCLUDING EXAMPLES

Our results are summarized using the following examples. We assume a concatenated code $\mathcal{C}$ consisting of an inner code $\mathcal{C}^{\mathrm{i}}(n^{\mathrm{i}}, k^{\mathrm{i}}, d^{\mathrm{i}})$ and an outer code $\mathcal{C}^{\mathrm{o}}(n^{\mathrm{o}}, k^{\mathrm{o}}, d^{\mathrm{o}})$ consisting of $\ell$ rows containing code words of the RS code $\mathcal{RS}(2^8; 255, 223, 33)$.

For even inner minimum distance $d^{\mathrm{i}} = 20$ the decoding bounds (5) for independent outer decoding and (10) for collaborative outer decoding, respectively, are shown in Figure 1 as a function of the number $z$ of thresholds. According to Lemma 1, for independent outer decoding $\underline{z} = 10$ thresholds are sufficient to decode up to half the minimum distance of $\mathcal{C}$. If collaborative decoding of $\ell = 2$ outer RS codes is applied, we can calculate the number of required thresholds by $\underline{z} = \min\{z\}$ s.t. $\lfloor T^{(z)}_z \rfloor = 9$ and get $\underline{z} = 3$. Both values are confirmed by the bounds in Figure 1.

[Figure 1: decoding bound (vertical axis, 240 to 340) versus number of thresholds $z$ (horizontal axis, 1 to 10); curves for independent outer decoding and for $\ell = 2$, with the level $d^{\mathrm{o}} d^{\mathrm{i}}/2$ marked.]

Fig. 1. Number of thresholds versus decoding bounds (5) and (10). The outer code $\mathcal{C}^{\mathrm{o}}$ consists of $\ell = 2$ RS codes and $\mathcal{C}^{\mathrm{i}}$ has (even) minimum distance $d^{\mathrm{i}} = 20$.

If the RS codes are outer codes $\mathcal{C}^{\mathrm{o}}_v, \dots, \mathcal{C}^{\mathrm{o}}_{v+\ell-1}$ of a GC code as described in Section II, which fulfill (2), the saving in terms of operations is even greater. Besides the 7 saved outer decoding attempts, the number of inner decodings can then be cut down by $n^{\mathrm{o}}(\ell-1) = 255$.

Note that decoding one IRS code with $\ell$ interleaved RS codes with the algorithm from [12] has the same complexity as decoding the $\ell$ RS codes independently. Thus, our comparison of both constructions is fair in terms of complexity. After establishing the result list $\mathcal{L}$, Lemma 2 can be applied to select the transmitted code word among its $|\mathcal{L}| \le z^\star$ elements.

Figure 2 shows the number of actual decoding attempts $z^\star$ as well as the number $z$ of thresholds for some reasonable odd inner minimum distances $d^{\mathrm{i}}$. Collaborative decoding of $\ell = 2$ and $\ell = 8$ outer RS codes is considered. For independent outer decoding as described in Section IV, $z^\star$ grows linearly with $d^{\mathrm{i}}$. It diminishes to at most $z^\star = 6$ already for an outer IRS code with $\ell = 2$. For an outer IRS code with $\ell = 8$, $z^\star = 2$ decoding attempts are already sufficient to decode up to half the minimum distance of $\mathcal{C}$ over the full range of all considered odd inner minimum distances $d^{\mathrm{i}} \in [3, 100]$.

[Figure 2: $z$ and $z^\star$ (vertical axis, 0 to 14) versus minimum inner distance $d^{\mathrm{i}}$ (horizontal axis, 10 to 100); curves for independent outer decoding, for $z$ and $z^\star$ with $\ell = 2$, and for $z$ and $z^\star$ with $\ell = 8$.]

Fig. 2. Required numbers of thresholds and actual decoding attempts to allow decoding up to half the minimum distance of a concatenated code $\mathcal{C}$ with parameters as described above. For clarity, only odd $d^{\mathrm{i}}$ are plotted.

REFERENCES

[1] G. D. Forney, Jr., Concatenated Codes. Cambridge, MA, USA: M.I.T. Press, 1966.
[2] E. L. Blokh and V. V. Zyablov, Generalized Concatenated Codes. Svyaz', 1976. In Russian.
[3] E. L. Blokh and V. V. Zyablov, Linear Concatenated Codes. Nauka, 1982. In Russian.
[4] I. I. Dumer, "On decoding of generalized concatenated codes," in Proc. Fifth All-Union Workshop Comp. Networks, Pt. 4, (Vladivostok, Russia), pp. 61–65, 1980. In Russian.
[5] I. I. Dumer, "Concatenated codes and their multilevel generalizations," in Handbook of Coding Theory, vol. II, ch. 23, Amsterdam: North-Holland, 1998. ISBN 0-444-50087-1.
[6] U. K. Sorger, "A new Reed–Solomon code decoding algorithm based on Newton's interpolation," IEEE Trans. Inform. Theory, vol. IT-39, no. 2, pp. 358–365, 1993.
[7] R. Kötter, "Fast generalized minimum-distance decoding of Algebraic–Geometry and Reed–Solomon codes," IEEE Trans. Inform. Theory, vol. IT-42, no. 3, pp. 721–737, 1993.
[8] R. R. Nielsen, List decoding of linear block codes. PhD thesis, Dept. Math., Tech. Univ. Denmark, Denmark, September 2001. Available online at http://phd.dtv.dk/2001/mat/r_r_nilsen.ps.
[9] V. Guruswami and M. Sudan, "Improved decoding of Reed–Solomon and algebraic-geometric codes," IEEE Trans. Inform. Theory, vol. IT-45, pp. 1755–1764, September 1999.
[10] G. Schmidt, V. R. Sidorenko, and M. Bossert, "Interleaved Reed–Solomon codes in concatenated code designs," in Proc. IEEE ITSOC Inform. Theory Workshop, (Rotorua, New Zealand), pp. 187–191, August 2005.
[11] J. Justesen, C. Thommesen, and T. Høholdt, "Decoding of concatenated codes with interleaved outer codes," in Proc. IEEE Int. Symposium on Inform. Theory, (Chicago, IL, USA), p. 329, 2004.
[12] G. Schmidt, V. R. Sidorenko, and M. Bossert, "Collaborative decoding of interleaved Reed–Solomon codes and concatenated code designs." Preprint, available online at ArXiv, arXiv:cs.IT/0610074, 2006.
