A logical approach to object recognition on images is proposed. The main idea of the approach is to perform object recognition as logical inference on a set of rules describing the object's shape.

Deep Dive into Logical methods of object recognition on satellite images using spatial constraints.


Recognizing urban objects on satellite images is a rather complicated problem. Compared with aerial photographs, satellite images are typically noisy, blurred and of low resolution. A satellite image contains many objects to be recognized. These objects can overlap and can have complex textures (e.g. tile roofing). Textures of adjacent objects can coincide, so that parts of the contours between them are lost. Moreover, different parts of an object can look different because of illumination (e.g. roof slopes). As a result, an object can be represented by inhomogeneous regions, and regions of adjacent objects can merge.

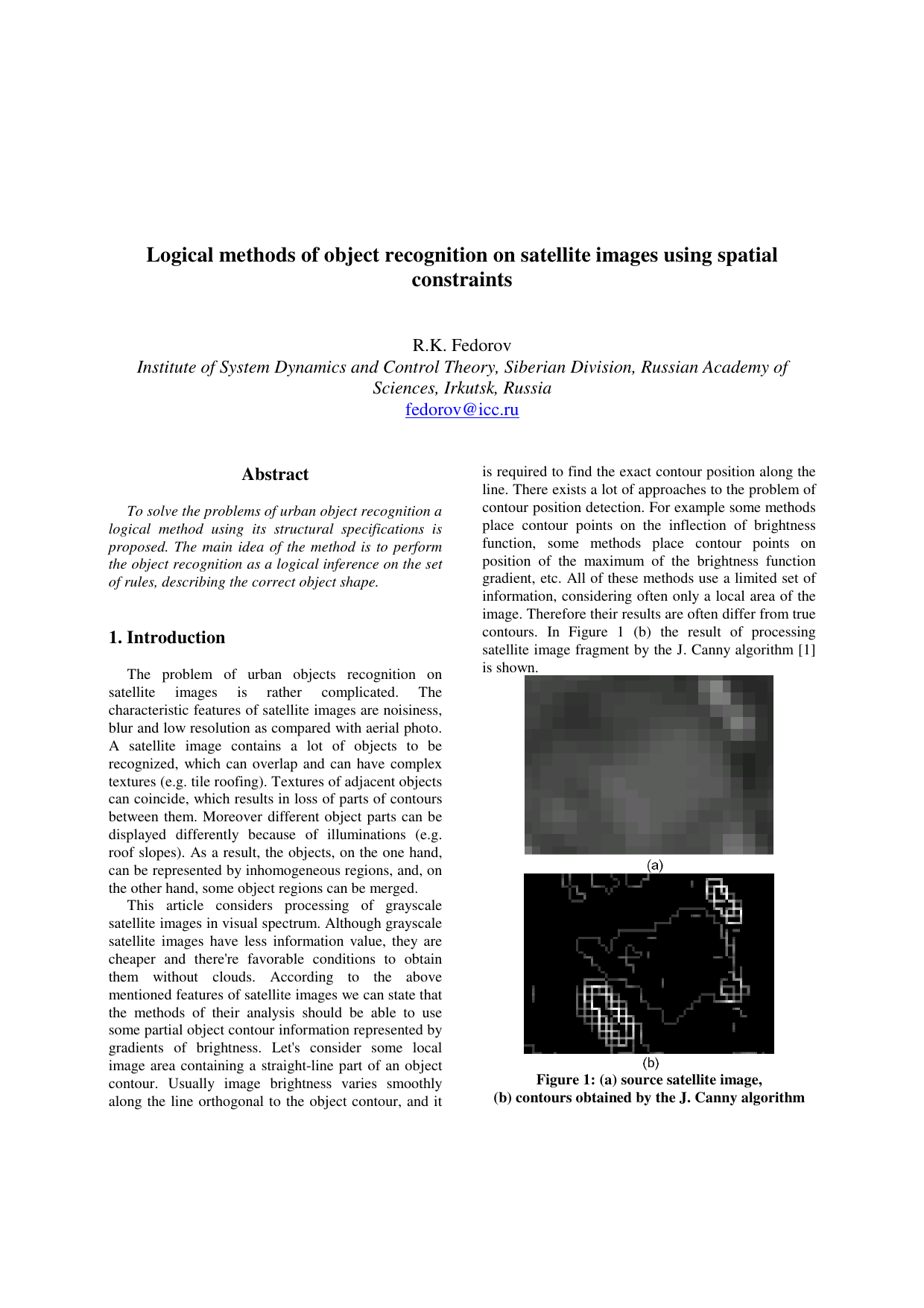

This article considers the processing of grayscale satellite images in the visual spectrum. Grayscale satellite images carry less information, but they are cheaper, and conditions for obtaining them without clouds are favorable. Given the features of satellite images described above, methods for their analysis should be able to use partial object contour information represented by brightness gradients.

Consider a local image area containing a straight-line part of an object contour. Image brightness usually varies smoothly along the line orthogonal to the contour, and the task is to find the exact contour position along that line. There are many approaches to this problem of contour position detection. For example, some methods place contour points at the inflection point of the brightness function, while others place them at the maximum of the brightness gradient. All of these methods use a limited set of information and often consider only a local area of the image, so their results often differ from the true contours.

Figure 1 (b) shows the result of processing a satellite image fragment with the J. Canny algorithm [1]. A building is located in the center of the image, and its contour is represented by rather smooth brightness changes. Image noise, inaccurate border position detection and similar effects cause local methods to produce many small contour parts of different directions instead of a single contour segment. During object recognition, this set of contour parts has to be matched against an expected straight-line segment of the object contour. Moreover, there can be brightness jumps that do not belong to the objects being recognized, e.g. ridges of tiles. All of these factors produce a large set of possible contours, most of which are wrong, and matching objects against this set is a complex task.
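The two local criteria mentioned above can be sketched in Python with NumPy. Both functions locate a border on a one-dimensional brightness profile sampled orthogonally to the contour. One uses the maximum of the brightness difference, and the other uses the inflection point, i.e. a sign change of the second difference. The function names, the synthetic sigmoid profile and the noise level are assumptions for illustration only. Neither function is the method proposed in the article.

```python
import numpy as np

def edge_by_gradient_max(profile):
    """Border position where the absolute brightness difference peaks.

    Returns a fractional index halfway between the two samples with the
    largest brightness difference.
    """
    p = np.asarray(profile, dtype=float)
    grad = np.diff(p)  # grad[i] = p[i + 1] - p[i]
    i = int(np.argmax(np.abs(grad)))
    return i + 0.5

def edge_by_inflection(profile):
    """Border position at a sign change of the second difference.

    Among all zero crossings, returns the one nearest to the gradient
    maximum (linearly interpolated), or None if there is no crossing.
    """
    p = np.asarray(profile, dtype=float)
    d2 = np.diff(p, n=2)  # d2[k] is centred on sample k + 1
    peak = edge_by_gradient_max(p)
    best = None
    for k in range(len(d2) - 1):
        a, b = d2[k], d2[k + 1]
        if (a > 0 > b) or (a < 0 < b):
            pos = k + 1 + a / (a - b)  # zero crossing between samples k+1 and k+2
            if best is None or abs(pos - peak) < abs(best - peak):
                best = pos
    return best

# Synthetic profile across a blurred border centred at x = 10.5.
x = np.arange(21)
clean = 1.0 / (1.0 + np.exp(-(x - 10.5)))
rng = np.random.default_rng(0)
noisy = clean + rng.normal(0.0, 0.05, size=x.size)

print("clean:", edge_by_gradient_max(clean), edge_by_inflection(clean))
print("noisy:", edge_by_gradient_max(noisy), edge_by_inflection(noisy))
```

On the clean profile, both criteria agree at 10.5. On the noisy copy the estimates can shift, and the inflection criterion can pick up noise-induced sign changes. This is the instability of purely local methods described above.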

To solve these problems, a logical method for recognizing urban objects from their structural specifications is proposed. The main idea of the method is to perform object recognition by matching the straight-line segments of the object contour to the brightness jumps in the image. Many segments can be detected in an image fragment containing a brightness jump. However, logical inference on a set of rules describing the correct object shape can limit the set of lines under consideration. Information about the relative positions of the object's straight-line segments makes it possible to find contours that are poorly or only partially defined.
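A small backtracking search can illustrate the idea of pruning candidate lines with shape rules. This is a swapped-in technique, a constraint search over a graph of candidate segments, and not the authors' inference procedure. It assumes that the candidates are segments already matched to brightness jumps and that they share exact endpoints. It uses two hypothetical rules for a rectangular building outline:

- every side must be a candidate segment;
- every corner must turn by 90° ± 10°.

A partial contour is abandoned as soon as a rule fails.

```python
import math

def turn_ok(a, b, c, tol_deg=10.0):
    """Hypothetical rule: the contour a -> b -> c turns by about 90 degrees at b."""
    v1 = (b[0] - a[0], b[1] - a[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    turn = math.degrees(math.atan2(abs(cross), dot))  # direction change in [0, 180]
    return abs(turn - 90.0) <= tol_deg

def find_quadrilaterals(edges, tol_deg=10.0):
    """Closed 4-sided contours whose sides are all candidate segments.

    edges: iterable of (p, q) point pairs with p != q (hypothetical segments
    already matched to brightness jumps). Returns a set of frozensets of
    vertices, one per contour found.
    """
    adj = {}
    for p, q in edges:
        adj.setdefault(p, set()).add(q)
        adj.setdefault(q, set()).add(p)
    found = set()

    def extend(path):
        if len(path) == 4:
            a, b, c, d = path
            # Closing rules: side d-a must exist; corners at d and a must be ~90 deg.
            if a in adj[d] and turn_ok(c, d, a, tol_deg) and turn_ok(d, a, b, tol_deg):
                found.add(frozenset(path))
            return
        for nxt in adj[path[-1]]:
            if nxt in path:
                continue
            # Prune as soon as the corner rule fails: no completion can succeed.
            if len(path) >= 2 and not turn_ok(path[-2], path[-1], nxt, tol_deg):
                continue
            extend(path + [nxt])

    for start in adj:
        extend([start])
    return found

candidates = [
    ((0, 0), (10, 0)), ((10, 0), (10, 6)), ((10, 6), (0, 6)), ((0, 6), (0, 0)),
    ((0, 0), (10, 6)),   # spurious diagonal jump (e.g. a roof ridge)
    ((10, 0), (15, 1)),  # dangling contour fragment
]
print(find_quadrilaterals(candidates))
```

Only the rectangle survives. The corner rule rejects the diagonal and the dangling fragment early, without enumerating every combination of segments. Real detections rarely share endpoints exactly, so a practical version would need a tolerance for nearly coincident points.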

Let’s introduce the following notation: … (RGB, CMYK etc.).

One way to substantially reduce the number of straight-line segments under consideration is to limit their lengths. To calculate the length of a straight-line segment, we’ll use the following auxiliary function, which computes the Euclidean distance between two points:
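The following minimal sketch shows a distance function and a length filter. It assumes that points are (x, y) tuples and that the admissible length range of a contour segment is known in advance. The names `dist`, `filter_by_length`, `min_len` and `max_len` are hypothetical. In practice, the bounds would come from the expected object size.

```python
import math

def dist(p, q):
    """Euclidean distance sqrt((x1 - x2)^2 + (y1 - y2)^2) between p and q."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def filter_by_length(segments, min_len, max_len):
    """Keep only segments (p, q) whose length lies in [min_len, max_len]."""
    return [(p, q) for p, q in segments if min_len <= dist(p, q) <= max_len]

segments = [((0, 0), (3, 4)), ((0, 0), (1, 1)), ((0, 0), (60, 80))]
print(filter_by_length(segments, min_len=3.0, max_len=50.0))  # -> [((0, 0), (3, 4))]
```

Every pair of candidate points defines a potential segment, so the number of candidates grows quadratically with the number of points. Even coarse length bounds can therefore discard many of them.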

Another way to discard segments is to impose a constraint on the relative position of object segments. This position is defined by the angle between two segments with a common endpoint:
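The following sketch computes the angle between two segments that share an endpoint. It assumes the angle is measured at the common point and reported in degrees in [0, 180]. The name `angle` and its argument order are hypothetical.

```python
import math

def angle(common, p, q):
    """Angle in degrees, in [0, 180], between segments common-p and common-q."""
    v1 = (p[0] - common[0], p[1] - common[1])
    v2 = (q[0] - common[0], q[1] - common[1])
    if v1 == (0, 0) or v2 == (0, 0):
        raise ValueError("zero-length segment")
    cross = v1[0] * v2[1] - v1[1] * v2[0]  # proportional to the sine of the angle
    dot = v1[0] * v2[0] + v1[1] * v2[1]    # proportional to the cosine of the angle
    return math.degrees(math.atan2(abs(cross), dot))

print(angle((0, 0), (10, 0), (0, 6)))   # perpendicular sides
print(angle((0, 0), (10, 0), (-5, 0)))  # collinear, opposite directions
```

The function computes the angle as atan2(|cross|, dot) rather than as acos of the normalized dot product. This avoids the precision loss of acos near 0° and 180°, where nearly collinear segments have to be told apart.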

Spatial relations between segments can then be defined by a set of constraints on the values of these functions.
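One hypothetical encoding treats such spatial relations as interval constraints on the values of the distance and angle functions. A rule is then a conjunction of constraints over named points. The following illustrative assumptions are not values from the article:

- the `Constraint` class;
- the corner rule;
- its bounds (side lengths of 5 to 50 pixels, a corner angle of 80° to 100°).

`dist` and `angle` are repeated so that the block runs on its own.

```python
import math
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

Point = Tuple[float, float]

def dist(p, q):
    return math.hypot(p[0] - q[0], p[1] - q[1])

def angle(common, p, q):
    v1 = (p[0] - common[0], p[1] - common[1])
    v2 = (q[0] - common[0], q[1] - common[1])
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    return math.degrees(math.atan2(abs(cross), dot))

@dataclass(frozen=True)
class Constraint:
    """Hypothetical interval constraint: lo <= f(*named points) <= hi."""
    f: Callable[..., float]
    args: Tuple[str, ...]
    lo: float
    hi: float

    def holds(self, pts: Dict[str, Point]) -> bool:
        value = self.f(*(pts[name] for name in self.args))
        return self.lo <= value <= self.hi

# Hypothetical rule for a building corner A with adjacent corners B and C.
CORNER_RULE = [
    Constraint(dist, ("A", "B"), 5.0, 50.0),
    Constraint(dist, ("A", "C"), 5.0, 50.0),
    Constraint(angle, ("A", "B", "C"), 80.0, 100.0),
]

def satisfies(rule, pts):
    """A rule holds only if every one of its constraints holds."""
    return all(c.holds(pts) for c in rule)

print(satisfies(CORNER_RULE, {"A": (0, 0), "B": (20, 0), "C": (0, 10)}))  # True
print(satisfies(CORNER_RULE, {"A": (0, 0), "B": (20, 0), "C": (20, 2)}))  # False
```

Keeping constraints as data rather than hard-coded checks makes it easy to add or relax relations per object class without changing the search code.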

Let’s introduce the function:

which estimates how strongly the straight-line segment between two points appears as a border in the image. The value 1 corresponds to a segment that is a sharp border between the two parts of its neighborhood. The value 0 means there is no difference between those parts. Unlike the detector [2], this function analyses a neighborhood of the straight-line segment between any two points of the image. Using the function line, we can select the segments that are compatible with the position of the whole object and with separate parts of its contour.
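A hypothetical stand-in for line, with the same range of values, can be sketched as follows. It samples a few pixels on each side of the segment along the segment's normal. It then scores the contrast between the two sides relative to their spread. The following are all assumptions, not the article's estimator:

- the name `line_strength`;
- the parameter `width`;
- nearest-pixel sampling;
- the scoring formula.

```python
import numpy as np

def line_strength(img, p, q, width=3):
    """Hypothetical border-strength score in [0, 1] for the segment p -> q.

    Points are (x, y); img is indexed img[y, x]. Pixels up to `width` away on
    each side of the segment (along its unit normal, nearest-pixel sampling,
    one step per unit of length) form two side samples. Score:
    |m1 - m2| / (|m1 - m2| + s1 + s2), with m, s the side means and std devs.
    """
    img = np.asarray(img, dtype=float)
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    length = float(np.hypot(*(q - p)))
    if length == 0.0:
        raise ValueError("zero-length segment")
    t_hat = (q - p) / length                  # unit direction of the segment
    n_hat = np.array([-t_hat[1], t_hat[0]])   # unit normal
    sides = ([], [])
    for t in np.arange(0.0, length + 1e-9, 1.0):
        base = p + t * t_hat
        for k in range(1, width + 1):
            for side, sign in zip(sides, (1, -1)):
                x, y = np.rint(base + sign * k * n_hat).astype(int)
                if 0 <= y < img.shape[0] and 0 <= x < img.shape[1]:
                    side.append(img[y, x])
    if not sides[0] or not sides[1]:
        return 0.0
    a, b = np.array(sides[0]), np.array(sides[1])
    contrast = abs(a.mean() - b.mean())
    if contrast == 0.0:
        return 0.0  # identical side means: no border
    return float(contrast / (contrast + a.std() + b.std()))

img = np.zeros((20, 20))
img[:, 10] = 50.0    # transition column under the segment
img[:, 11:] = 100.0  # bright half
print(line_strength(img, (10, 2), (10, 17)))                      # 1.0
print(line_strength(np.full((20, 20), 7.0), (10, 2), (10, 17)))  # 0.0
```

A segment inside a uniform region scores 0. Noise on either side lowers the score through the standard deviations, so a threshold on this value could be used to choose among candidate segments.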

One can distinguish two types of contours in an image: 1) lines separating two different areas; 2) lines separating two similar areas, with the brightness changing along the line itself (boundary lines).

Various estimates of straight-line segment strength are possible. We’ll use a heuristic method that extracts contours of the first type. The method is based on the analysis of two rectangles along the segment (Figure 2). The width of the rectangles is defined by the

…(Full text truncated)…

This content is AI-processed based on ArXiv data.