Preserving Privacy and Sharing the Data in Distributed Environment using Cryptographic Technique on Perturbed data

The main objective of data mining is to extract previously unknown patterns from large collection of data. With the rapid growth in hardware, software and networking technology there is outstanding growth in the amount data collection. Organizations collect huge volumes of data from heterogeneous databases which also contain sensitive and private information about and individual .The data mining extracts novel patterns from such data which can be used in various domains for decision making .The problem with data mining output is that it also reveals some information, which are considered to be private and personal. Easy access to such personal data poses a threat to individual privacy. There has been growing concern about the chance of misusing personal information behind the scene without the knowledge of actual data owner. Privacy is becoming an increasingly important issue in many data mining applications in distributed environment. Privacy preserving data mining technique gives new direction to solve this problem. PPDM gives valid data mining results without learning the underlying data values .The benefits of data mining can be enjoyed, without compromising the privacy of concerned individuals. The original data is modified or a process is used in such a way that private data and private knowledge remain private even after the mining process. In this paper we have proposed a framework that allows systemic transformation of original data using randomized data perturbation technique and the modified data is then submitted as result of client’s query through cryptographic approach. Using this approach we can achieve confidentiality at client as well as data owner sites. This model gives valid data mining results for analysis purpose but the actual or true data is not revealed.

💡 Research Summary

The paper addresses the growing tension between the need to extract valuable knowledge from large, distributed data collections and the imperative to protect the privacy of individuals whose sensitive information resides in those datasets. After outlining the problem—namely that traditional data‑mining processes can inadvertently reveal personal details—the authors review two major families of privacy‑preserving data mining (PPDM) techniques. The first family, perturbation‑based methods, adds random noise to original records so that statistical properties are retained while individual values become indistinguishable. The second family, cryptographic approaches, encrypts data or employs secure query‑answer protocols to prevent any direct exposure of raw records. Both families have proven useful, yet each suffers from drawbacks: perturbation alone can be vulnerable to statistical attacks, and pure cryptographic solutions often incur prohibitive computational overhead, especially in a distributed setting where multiple autonomous data owners must cooperate.

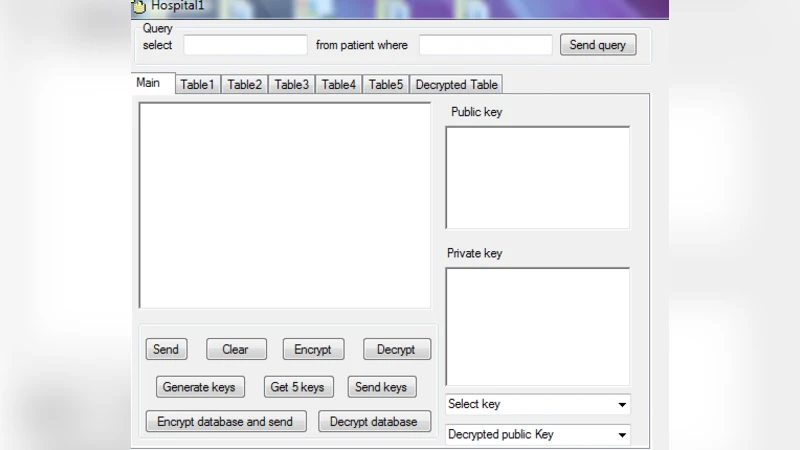

To overcome these limitations, the authors propose a hybrid framework that combines randomized data perturbation with a cryptographic transmission layer. The process begins at each data‑owner site, where the original database is transformed using a random perturbation algorithm. The algorithm injects noise drawn from a predefined probability distribution (e.g., Gaussian) according to a configurable perturbation ratio, ensuring that aggregate statistics such as means and variances remain largely unchanged. Once perturbed, the entire dataset is encrypted—either with a symmetric cipher such as AES or with an asymmetric scheme like RSA—using keys that are pre‑shared or exchanged securely via a trusted authority.

Clients submit analytical queries (e.g., association‑rule mining, clustering) to the framework. Queries are sent in encrypted form to the data owners, who execute them over the perturbed, encrypted data. The results are then re‑encrypted and returned to the client, which decrypts them and proceeds with analysis on the noisy data. Because the data have been both perturbed and encrypted, the original values are never exposed, and any eavesdropping on the communication channel yields only ciphertext that is computationally infeasible to interpret. The authors argue that the two‑layer protection is mutually reinforcing: perturbation mitigates statistical inference attacks, while encryption safeguards against interception and tampering.

A brief experimental evaluation is presented. Varying the perturbation ratio between 10 % and 30 % leads to a modest degradation in mining accuracy (approximately 5 %–15 % relative to results on the raw data). Encryption and decryption overhead is reported as roughly two seconds per gigabyte of data, which the authors acknowledge may be a bottleneck for real‑time analytics but is acceptable for many batch‑oriented mining tasks. The primary operational benefit highlighted is that data owners can participate in collaborative mining without ever releasing their true records, thereby preserving legal and ethical compliance.

The paper concludes by emphasizing the framework’s potential to balance privacy and utility in distributed environments. It calls for future work in several directions: systematic tuning of perturbation parameters to optimize the privacy‑utility trade‑off, dynamic key‑management schemes that scale to many participants, protocols for multi‑owner cooperation, and extensive testing on real‑world, large‑scale distributed systems. While the conceptual integration of perturbation and cryptography is compelling, the manuscript lacks detailed specifications of the noise model, key sizes, and security proofs, leaving open questions about the concrete level of protection offered. Nonetheless, the proposed architecture represents a noteworthy step toward practical, privacy‑preserving data mining across heterogeneous, distributed data sources.

Comments & Academic Discussion

Loading comments...

Leave a Comment