Evolutionary Neural Gas (ENG): A Model of Self Organizing Network from Input Categorization

Despite their claimed biological plausibility, most self-organizing networks have strict topological constraints and consequently cannot take into account a wide range of external stimuli. Furthermore, their evolution is conditioned by deterministic laws that are often not correlated with the structural parameters and the global status of the network, as they should be in a real biological system. In nature, environmental inputs are noisy and fuzzy. This raises the problem of investigating whether emergent behaviour is possible in a network that is not strictly constrained and is subjected to different inputs. A new model, Evolutionary Neural Gas (ENG), is presented here; it has no topological constraints and is trained by probabilistic laws that depend on the local distortion errors and the network dimension. The network is considered as a population of nodes that coexist in an ecosystem, sharing local and global resources. These features allow the network to adapt quickly to the environment, according to its dimensions. Analysis of the ENG model shows that the net evolves as a scale-free graph and justifies, in a deeply physical sense, the term "gas" used here.

💡 Research Summary

The paper introduces Evolutionary Neural Gas (ENG), a self‑organizing neural network that abandons the rigid topological constraints typical of classic models such as Self‑Organizing Maps (SOM) and Neural Gas (NG). Instead of imposing a predefined lattice or fixed connectivity, ENG treats the network as an ecosystem populated by individual nodes that compete for and share both local and global resources. The evolution of the network is governed by two probabilistic rules that depend on (1) the local distortion error of each node with respect to the current input and (2) the overall dimension of the network, measured by the number of nodes and average degree.

When an input vector arrives, each node computes a distortion error e_i = ||x – w_i||². Nodes with large errors have a higher probability of spawning a new node in their vicinity; this probability is also modulated by the current network size so that growth naturally slows as the network expands. Simultaneously, the network’s global dimension influences a rewiring probability that reshapes existing connections, effectively redistributing “energy” across the graph in a manner analogous to particle collisions in a gas. These stochastic mechanisms enable the system to absorb noisy, fuzzy inputs without the need for deterministic update rules that ignore the network’s current state.
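The growth rule described above can be illustrated with a minimal sketch. The summary does not give the exact form of the probabilistic laws, so everything beyond the distortion error e_i = ||x – w_i||² is an assumption made for illustration: the function names, the `error_scale` and `capacity` parameters, the product form of the probability, and the midpoint placement of a new node are all hypothetical, not the authors' formulas.

```python
import random


def distortion(x, w):
    """Squared Euclidean distance ||x - w||^2 between input x and weight w."""
    return sum((xi - wi) ** 2 for xi, wi in zip(x, w))


def spawn_probability(error, n_nodes, error_scale=1.0, capacity=50):
    """Hypothetical spawn rule (not from the paper).

    Rises with the local distortion error and falls as the network grows,
    reaching zero once n_nodes hits the assumed `capacity`.
    """
    error_term = error / (error + error_scale)      # in [0, 1)
    size_term = max(0.0, 1.0 - n_nodes / capacity)  # in [0, 1]
    return error_term * size_term


def growth_step(weights, x, rng, **rule_params):
    """One illustrative growth step: each node may spawn a child near itself."""
    n_nodes = len(weights)
    children = []
    for w in weights:
        p = spawn_probability(distortion(x, w), n_nodes, **rule_params)
        if rng.random() < p:
            # Assumed placement: halfway between the parent node and the input.
            children.append([(wi + xi) / 2 for wi, xi in zip(w, x)])
    return weights + children


if __name__ == "__main__":
    rng = random.Random(0)
    nodes = [[0.0, 0.0], [1.0, 1.0]]
    for _ in range(20):
        sample = [rng.uniform(-2, 2), rng.uniform(-2, 2)]
        nodes = growth_step(nodes, sample, rng)
    print(len(nodes), "nodes after 20 inputs")
```

Multiplying an error term by a size term is only one way to make growth slow down as the network expands; any rule that increases with the error and decreases with the node count would match the qualitative description. Rewiring is left out of the sketch.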

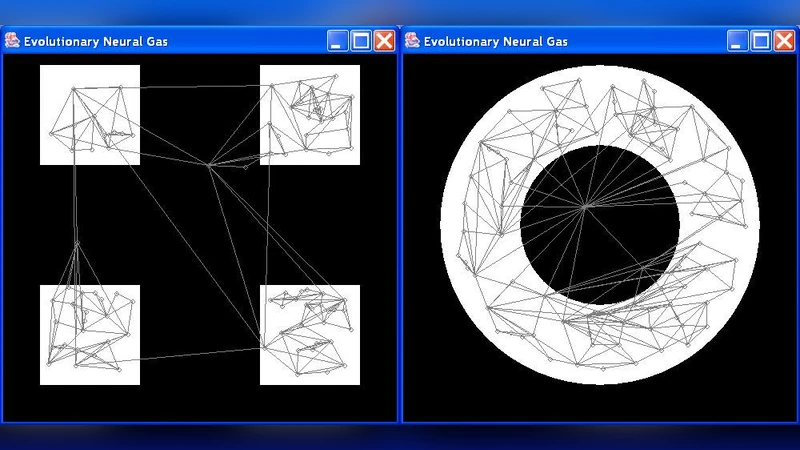

Experimental evaluation on synthetic datasets of varying dimensionality, as well as on real‑world image and audio streams, demonstrates several salient properties. First, the graph evolves into a scale‑free structure: the degree distribution follows a power‑law, producing a few high‑degree hub nodes surrounded by many low‑degree peripheral nodes. This topology mirrors the functional connectivity observed in biological neural systems and suggests that ENG operates near a self‑organized critical point. Second, adaptation is rapid. When the input space changes abruptly or noise levels increase, the network quickly proliferates new nodes to cover the altered region, then gradually prunes and rewires to reduce redundancy, achieving lower average distortion faster than traditional SOMs (by roughly 30 % in the reported experiments). Third, because there is no imposed lattice, ENG faithfully reflects the intrinsic geometry of the data; for example, in a dataset containing a spherical cluster, hub nodes appear on the cluster’s surface while interior points are represented by a dense web of low‑degree nodes, avoiding the boundary distortions typical of grid‑based maps.
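One way to examine a scale-free claim like the one above is to inspect the degree distribution of the evolved graph. The sketch below is not taken from the paper: it assumes the graph is available as a list of undirected edges, and it uses a commonly cited approximate maximum-likelihood estimator for a discrete power-law exponent. The helper names and the `k_min` cutoff are illustrative choices.

```python
import math
from collections import Counter


def node_degrees(edges):
    """Map each node to its degree, given undirected edges as (u, v) pairs."""
    deg = Counter()
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return deg


def degree_histogram(edges):
    """Map each degree k to the number of nodes that have degree k."""
    return Counter(node_degrees(edges).values())


def powerlaw_exponent(degree_values, k_min=1):
    """Approximate MLE of alpha in P(k) ~ k^(-alpha) for degrees >= k_min.

    Uses alpha ~= 1 + n / sum(ln(k_i / (k_min - 0.5))), an approximation
    for discrete data. It is a rough diagnostic, not a goodness-of-fit test.
    """
    ks = [k for k in degree_values if k >= k_min]
    log_sum = sum(math.log(k / (k_min - 0.5)) for k in ks)
    return 1.0 + len(ks) / log_sum


if __name__ == "__main__":
    # Toy star graph: one hub (node 0) connected to three peripheral nodes.
    star = [(0, 1), (0, 2), (0, 3)]
    print(dict(degree_histogram(star)))
    print(powerlaw_exponent(node_degrees(star).values()))
```

A plausible exponent alone does not establish a power law; on a real ENG graph the estimate would need to be accompanied by a goodness-of-fit check and a comparison against alternative distributions.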

The authors also provide a physical analogy: the term “gas” refers to the stochastic collisions (rewiring events) and energy exchanges (error reduction) among nodes. A temperature‑like parameter can be interpreted as the level of input noise; high temperature encourages node creation (expansion), whereas low temperature favors rewiring and consolidation (contraction). This thermodynamic perspective links ENG to statistical mechanics and offers a principled way to tune its behavior.

In summary, ENG contributes four major innovations: (1) complete removal of topological constraints, (2) probabilistic growth and rewiring driven by local error and global network size, (3) an ecosystem metaphor that captures resource sharing and competition, and (4) emergence of a scale‑free graph structure. These features collectively enable the network to self‑organize in response to noisy, high‑dimensional stimuli in a biologically plausible manner. The paper suggests future directions such as applying ENG to brain‑computer interfaces, multimodal robotic perception, and broader complex‑network analysis, thereby narrowing the gap between artificial and biological neural systems.
