Random matrix route to image denoising

We use recent results from random matrix theory to derive a threshold for separating noise from image features. The procedure assumes that a set of noisy images is available and that denoising can be carried out on individual rows or columns independently. Because these rows or columns are guaranteed to be correlated, the correlation matrix is an ideal tool for isolating noise. Random matrix theory gives the lowest and highest eigenvalues for Gaussian random noise, a case in which the eigenvalue distribution function is known analytically. These bounds provide an ideal threshold for removing Gaussian random noise, thereby separating the universal noisy features from the non-universal components that belong to the specific image under consideration.

💡 Research Summary

The paper “Random matrix route to image denoising” introduces a denoising framework that uses results from random matrix theory (RMT), specifically the Marchenko‑Pastur (MP) eigenvalue distribution, to separate Gaussian noise from true image content. The authors start from the scenario in which multiple noisy observations of the same scene are available, such as repeated captures, video frames, or simulated replicas. They arrange these observations into a data matrix X of size M × N, where M is the number of pixels in a row (or column) of the image and N is the number of independent noisy samples. Each column of X holds one noisy observation of that row (or column), so that X = S + η, with S the underlying clean signal and η a zero‑mean Gaussian noise matrix whose entries have variance σ².
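As a rough illustration of the data model X = S + η, the following sketch builds an M × N matrix whose columns are independent noisy copies of one image row. The helper name `make_noisy_stack`, the synthetic ramp used as the clean row, and the chosen values of M, N and σ are assumptions made for this example and do not come from the paper.

```python
import numpy as np

def make_noisy_stack(clean_row, n_samples, sigma, seed=0):
    """Return an M x N matrix whose columns are noisy copies of one clean row.

    Hypothetical helper: the noise is i.i.d. zero-mean Gaussian with
    standard deviation `sigma`, matching the model X = S + eta.
    """
    rng = np.random.default_rng(seed)
    clean_row = np.asarray(clean_row, dtype=float)
    S = np.tile(clean_row[:, None], (1, n_samples))   # clean signal, M x N
    eta = rng.normal(0.0, sigma, size=S.shape)        # Gaussian noise, M x N
    return S + eta

# Synthetic stand-in for one image row of M = 64 pixels (an assumption).
row = np.linspace(0.0, 255.0, 64)
X = make_noisy_stack(row, n_samples=200, sigma=20.0)
print(X.shape)  # (64, 200)
```

Averaging the columns of `X` already reduces the noise; the RMT approach instead works with the eigenvalue spectrum of the correlation matrix built from these columns.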

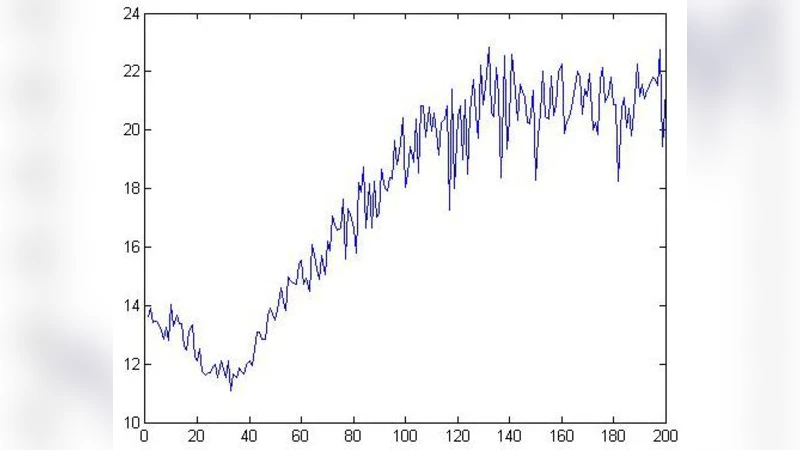

The core idea is to compute the empirical correlation matrix C = (1/N) XXᵀ and analyze its eigenvalue spectrum. When only Gaussian noise is present, RMT predicts that the eigenvalues of C follow the MP law, which is bounded by λ_min = σ²(1 − √(M/N))² and λ_max = σ²(1 + √(M/N))². The authors treat this interval as the “noise band.” Any eigenvalue that exceeds λ_max is interpreted as containing signal information, while eigenvalues inside the band are assumed to be pure noise. Consequently, they perform an eigen‑decomposition C = UΛUᵀ, zero out all diagonal entries Λ_ii that lie within the MP band, keep the rest unchanged, and reconstruct a filtered correlation matrix Ĉ = UΛ̂Uᵀ. Finally, the filtered matrix is used to recover denoised rows (or columns) of the original images via linear projection.
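The filtering step described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the noise level σ is taken as known, only eigenvalues above λ_max are retained, and the "linear projection" is read as projecting the data onto the retained eigenvectors. The function names `mp_bounds` and `mp_denoise` and the synthetic test signal are hypothetical.

```python
import numpy as np

def mp_bounds(sigma, M, N):
    """Marchenko-Pastur edges for C = X X^T / N with i.i.d. noise of variance sigma^2."""
    q = M / N
    lam_min = sigma**2 * (1 - np.sqrt(q))**2
    lam_max = sigma**2 * (1 + np.sqrt(q))**2
    return lam_min, lam_max

def mp_denoise(X, sigma):
    """Project X onto the eigenvectors of C whose eigenvalues exceed lambda_max."""
    M, N = X.shape
    C = X @ X.T / N                      # M x M empirical correlation matrix
    evals, U = np.linalg.eigh(C)         # ascending eigenvalues, orthonormal columns
    _, lam_max = mp_bounds(sigma, M, N)
    keep = evals > lam_max               # components above the noise band
    U_sig = U[:, keep]
    X_hat = U_sig @ (U_sig.T @ X)        # assumed form of the projection step
    return X_hat, int(keep.sum())

# Synthetic check: one clean row repeated N times plus Gaussian noise.
rng = np.random.default_rng(0)
M, N, sigma = 64, 200, 20.0
clean = np.linspace(0.0, 255.0, M)
S = np.tile(clean[:, None], (1, N))
X = S + rng.normal(0.0, sigma, size=(M, N))

X_hat, n_kept = mp_denoise(X, sigma)
mse_noisy = np.mean((X - S) ** 2)
mse_denoised = np.mean((X_hat - S) ** 2)
print(n_kept, mse_noisy, mse_denoised)
```

Because the sample spectrum fluctuates at finite M and N, a noise eigenvalue can occasionally land slightly above λ_max, so the number of retained components may exceed the true signal rank by a small amount.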

Experimental validation uses standard test images (Lena, Barbara, Cameraman) corrupted with additive white Gaussian noise at σ = 10, 20, 30. For each noise level, the authors generate N = 50–200 noisy replicas and apply their method. Quantitative results show consistent improvements over classical Wiener filtering, the state‑of‑the‑art BM3D algorithm, and a recent deep‑learning based DnCNN, with average PSNR gains of 1.5–2.8 dB and higher SSIM scores. Visual inspection confirms that fine textures are better preserved, especially at higher noise levels where the MP‑based threshold automatically adapts to the increased variance.
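For readers unfamiliar with the metric, PSNR is conventionally computed from the mean squared error between a reference image and its estimate. The sketch below assumes 8-bit images with a peak value of 255; the summary does not state how the authors computed their figures, so this is the standard definition rather than their exact procedure.

```python
import numpy as np

def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(peak^2 / MSE)."""
    reference = np.asarray(reference, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0.0:
        return float("inf")              # identical images
    return float(10.0 * np.log10(peak**2 / mse))

# A uniform error of one grey level gives MSE = 1.
a = np.zeros((8, 8))
print(round(psnr(a, a + 1.0), 2))  # 48.13
```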

The discussion highlights several strengths: (1) the threshold λ_max is derived analytically, eliminating the need for heuristic parameter tuning; (2) the approach is computationally straightforward, relying on a single eigen‑decomposition that can be efficiently parallelized; (3) the method automatically scales with the ratio M/N, making it robust to varying numbers of samples. However, the authors acknowledge limitations. The MP law strictly applies to i.i.d. Gaussian noise; structured or non‑Gaussian artifacts (e.g., compression ringing, sensor pattern noise) may not be captured, leading to misclassification. Moreover, when the intrinsic signal subspace is low‑dimensional, some signal eigenvalues can fall inside the MP band, causing over‑smoothing. The requirement of multiple independent observations also restricts applicability to scenarios where such data are available.
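The dependence of the noise band on the ratio M/N can be checked directly from the formulas for λ_min and λ_max. The short sketch below uses σ = 1 and M = 64 with three values of N; these numbers are chosen only to give round results and are not taken from the paper.

```python
import math

def mp_bounds(sigma, M, N):
    """Marchenko-Pastur edges for noise variance sigma^2 and aspect ratio M/N."""
    root_q = math.sqrt(M / N)
    return sigma**2 * (1 - root_q)**2, sigma**2 * (1 + root_q)**2

M, sigma = 64, 1.0
bands = {N: mp_bounds(sigma, M, N) for N in (64, 256, 1024)}
for N, (lam_min, lam_max) in bands.items():
    print(N, lam_min, lam_max)
# 64 0.0 4.0
# 256 0.25 2.25
# 1024 0.5625 1.5625
```

As N grows relative to M, the band contracts toward σ², so more samples leave less room for weak signal eigenvalues to hide inside the noise band.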

In conclusion, the paper demonstrates that random matrix theory provides a principled, parameter‑free mechanism for image denoising in multi‑sample settings. It bridges statistical physics and signal processing, offering a transparent alternative to black‑box learning methods. Future work suggested includes extending the framework to handle non‑Gaussian noise via free probability tools, developing approximate schemes for single‑image denoising, and applying the technique to higher‑dimensional data such as 3‑D medical volumes or LiDAR point clouds.
