Engineering a Scalable High Quality Graph Partitioner

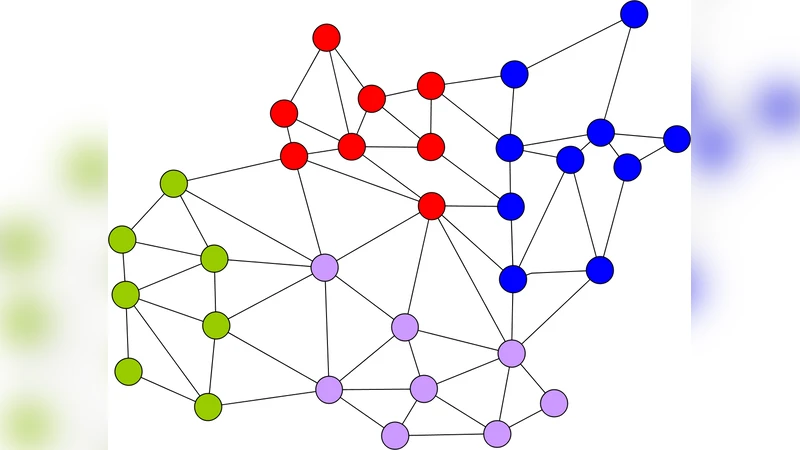

We describe an approach to parallel graph partitioning that scales to hundreds of processors and produces high solution quality. For example, for many instances from Walshaw’s benchmark collection we improve on the best known partitions. We use the well-known framework of multi-level graph partitioning. All components are implemented by scalable parallel algorithms. Quality improvements compared to previous systems are due to better prioritization of edges to be contracted, better approximation algorithms for identifying matchings, better local search heuristics, and, perhaps most notably, a parallelization of the FM local search algorithm that works more locally than previous approaches.

Research Summary

The paper presents a comprehensive engineering effort to build a parallel graph partitioner that scales to hundreds of processors while delivering state‑of‑the‑art partition quality. The authors adopt the well‑known multi‑level framework (coarsening, initial partitioning, and refinement) and redesign each phase to be fully parallel and to exploit more sophisticated heuristics than previous systems.
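The three phases fit together as a V-cycle. The Python sketch below shows only that control flow; the callback names (`coarsen`, `initial_partition`, `refine`), the dictionary-of-dictionaries graph layout, and the sequential execution are assumptions for illustration, not the paper's interfaces.

```python
from typing import Callable, Dict, List, Tuple

# Assumed layout: vertex -> {neighbor: edge weight}
Graph = Dict[int, Dict[int, int]]
Partition = Dict[int, int]


def multilevel(graph: Graph,
               coarsen: Callable[[Graph], Tuple[Graph, Dict[int, int]]],
               initial_partition: Callable[[Graph], Partition],
               refine: Callable[[Graph, Partition], Partition],
               min_vertices: int = 2) -> Partition:
    """Sketch of a multilevel V-cycle: coarsen, partition the coarsest graph,
    then project the partition back level by level and refine it."""
    hierarchy: List[Tuple[Graph, Dict[int, int]]] = []
    g = graph
    while len(g) > min_vertices:
        coarse, fine_to_coarse = coarsen(g)
        if len(coarse) == len(g):  # no contraction happened; stop coarsening
            break
        hierarchy.append((g, fine_to_coarse))
        g = coarse
    part = initial_partition(g)
    for finer, fine_to_coarse in reversed(hierarchy):
        # Projection: each fine vertex inherits the block of its coarse vertex.
        part = {v: part[fine_to_coarse[v]] for v in finer}
        part = refine(finer, part)
    return part
```

With a coarsener that merges vertices 2i and 2i+1, an 8-vertex path collapses to two vertices in two levels; if the initial partitioner puts each coarse vertex in its own block and refinement is the identity, vertices 0 to 3 end up in one block and 4 to 7 in the other.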

In the coarsening phase, the authors introduce an “edge rating” function that goes beyond simple edge weight. The rating combines the edge’s weight, the degrees of its incident vertices, the current level’s compression ratio, and a clustering coefficient‑like measure. This richer information guides the selection of edges to contract, preserving important structural features of the graph. Two parallel matching algorithms are provided: Parallel Heavy‑Edge Matching (PHEM) and Parallel Greedy Matching (PGM). Both use an ownership table to avoid conflicts when multiple processors attempt to match the same vertex or edge. The algorithms run in O(m/p + log p) time and produce high‑quality matchings; experiments show PHEM yields slightly better cuts.
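To make rating-driven matching concrete, here is a sequential Python sketch. The rating `w(u,v)^2 / (deg(u) * deg(v))` is a hypothetical stand-in that combines edge weight with vertex degrees (the summary does not give the paper's exact formula), and the matcher is plain sequential greedy matching, which is guaranteed at least half the maximum total rating. It is not PHEM or PGM, and it needs no ownership table because only one thread runs.

```python
from typing import Dict, List, Tuple

Graph = Dict[int, Dict[int, float]]  # vertex -> {neighbor: edge weight}


def edge_rating(g: Graph, u: int, v: int) -> float:
    """Hypothetical rating: favors heavy edges between low-degree vertices."""
    w = g[u][v]
    return w * w / (len(g[u]) * len(g[v]))


def greedy_matching(g: Graph) -> List[Tuple[int, int]]:
    """Scan edges by decreasing rating; match an edge if both ends are free."""
    edges = {(min(u, v), max(u, v)) for u in g for v in g[u]}
    # Ties are broken by vertex ids so the result is deterministic.
    ranked = sorted(edges, key=lambda e: (-edge_rating(g, *e), e))
    matched = set()
    matching = []
    for u, v in ranked:
        if u not in matched and v not in matched:
            matching.append((u, v))
            matched.update((u, v))
    return matching
```

On the path 0-1-2-3 with edge weights 5, 1, 4 the sketch matches {0, 1} and {2, 3}. The degree term in the denominator lowers the rating of edges incident to high-degree vertices, which is one way a rating can go "beyond simple edge weight".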

After matching, contraction is performed locally on each processor’s vertex subset. The authors employ an “edge ownership” scheme so that each edge is contracted by exactly one processor, eliminating redundant work and keeping communication to a minimum.
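The following Python sketch simulates contraction with one simple ownership rule on a single machine: each undirected edge {u, v} is handled only by the processor that owns the smaller endpoint, so no edge is processed twice. The rule, the `owner` function, and the sequential simulation are assumptions for illustration; a real MPI implementation would also have to ship coarse edges whose endpoints live on other processors.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Graph = Dict[int, Dict[int, int]]  # vertex -> {neighbor: edge weight}


def contract(g: Graph, matching: List[Tuple[int, int]],
             owner: Callable[[int], int]) -> Tuple[Graph, Dict[int, int], Dict[int, int]]:
    # Matched pairs collapse onto their smaller endpoint; then renumber 0..n'-1.
    rep = {v: v for v in g}
    for u, v in matching:
        rep[u] = rep[v] = min(u, v)
    coarse_id = {r: i for i, r in enumerate(sorted(set(rep.values())))}
    f2c = {v: coarse_id[rep[v]] for v in g}

    coarse: Graph = {c: {} for c in coarse_id.values()}
    work: Dict[int, int] = defaultdict(int)  # edges handled per simulated processor
    for u in g:
        for v, w in g[u].items():
            if u > v:
                continue  # {u, v} belongs to the owner of the smaller endpoint
            work[owner(u)] += 1
            cu, cv = f2c[u], f2c[v]
            if cu == cv:
                continue  # the matched edge vanishes inside the coarse vertex
            coarse[cu][cv] = coarse[cu].get(cv, 0) + w
            coarse[cv][cu] = coarse[cv].get(cu, 0) + w
    return coarse, f2c, dict(work)
```

Contracting the 4-cycle 0-1-2-3 with matching {0, 1}, {2, 3} and `owner(v) = v // 2` yields two coarse vertices joined by an edge of weight 2; processor 0 handles three of the four fine edges and processor 1 handles one, and no edge is handled twice.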

The initial partitioning step adapts the classic multilevel bisection (METIS‑style) to a distributed setting. Each processor independently partitions its local subgraph, after which a lightweight global balancing phase exchanges only partition weight information and moves a small number of vertices to satisfy balance constraints.
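The balancing step can be pictured with the sequential Python sketch below. It rests on assumptions the summary does not state: unit vertex weights by default, a block-weight limit of (1 + eps) times the rounded-up average block weight, and a rule that moves the vertex with the best cut gain out of an overloaded block into the currently lightest block.

```python
import math
from typing import Dict, Optional

Graph = Dict[int, Dict[int, int]]  # vertex -> {neighbor: edge weight}


def move_gain(g: Graph, part: Dict[int, int], v: int, src: int, dst: int) -> int:
    """Cut reduction if v moves from block src to block dst."""
    to_dst = sum(w for u, w in g[v].items() if part[u] == dst)
    to_src = sum(w for u, w in g[v].items() if part[u] == src)
    return to_dst - to_src


def rebalance(g: Graph, part: Dict[int, int], k: int, eps: float = 0.03,
              vwgt: Optional[Dict[int, int]] = None) -> Dict[int, int]:
    vwgt = vwgt or {v: 1 for v in g}  # assumption: unit weights unless given
    limit = (1 + eps) * math.ceil(sum(vwgt.values()) / k)
    load = [0] * k
    for v, b in part.items():
        load[b] += vwgt[v]
    part = dict(part)  # do not modify the caller's partition
    for src in range(k):
        while load[src] > limit:
            dst = min(range(k), key=lambda b: load[b])  # lightest block
            # Best-gain vertex of the overloaded block; ties go to the smaller id.
            v = max((u for u in part if part[u] == src),
                    key=lambda u: (move_gain(g, part, u, src, dst), -u))
            part[v] = dst
            load[src] -= vwgt[v]
            load[dst] += vwgt[v]
    return part
```

With unit weights the lightest block can always absorb one more vertex without exceeding the limit, so the loop ends balanced; with heavy vertices a single move could overload the target, and a real implementation would need extra care there.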

The most novel contribution is a parallel implementation of the FM (Fiduccia‑Mattheyses) local search that operates “locally”. Traditional parallel FM implementations require global moves and frequent synchronizations, which become bottlenecks at scale. In the proposed Local FM, each processor maintains a priority queue of gain values for vertices it owns and performs moves confined to its own subgraph. When a move threatens the global balance, a limited exchange of metadata (partition weights and candidate moves) with neighboring processors is performed to restore balance. Parameters such as a gain threshold and a move limit control the amount of work per refinement round, allowing the algorithm to converge quickly while keeping communication overhead low.
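A single Local FM pass on one processor can be sketched in Python for the two-block case. The parameter names `move_limit` (stop after this many consecutive moves that fail to improve the best cut) and `gain_threshold` (stop once the best remaining gain falls below it), the hard block-size cap `max_block`, and the lazy-deletion heap are assumptions; the metadata exchange with neighboring processors is omitted. Only vertices in `local` are ever moved, and the pass rolls back to the best cut it saw, as in classic FM.

```python
import heapq
from typing import Dict, List, Set, Tuple

Graph = Dict[int, Dict[int, int]]  # vertex -> {neighbor: edge weight}


def gain(g: Graph, part: Dict[int, int], v: int) -> int:
    """Cut reduction from moving v to the other block (2-way case)."""
    ext = sum(w for u, w in g[v].items() if part[u] != part[v])
    inner = sum(w for u, w in g[v].items() if part[u] == part[v])
    return ext - inner


def cut(g: Graph, part: Dict[int, int]) -> int:
    return sum(w for u in g for v, w in g[u].items() if u < v and part[u] != part[v])


def local_fm_pass(g: Graph, part: Dict[int, int], local: Set[int], max_block: int,
                  move_limit: int = 10,
                  gain_threshold: int = -10**9) -> Tuple[Dict[int, int], int]:
    part = dict(part)
    size = [0, 0]
    for b in part.values():
        size[b] += 1
    # Max-priority queue via negated gains; outdated entries are skipped lazily.
    heap = [(-gain(g, part, v), v) for v in local]
    heapq.heapify(heap)
    moved: Set[int] = set()
    current = best = cut(g, part)
    log: List[int] = []  # vertices in move order, for rollback
    best_len = 0         # number of moves in the best prefix
    since_best = 0
    while heap and since_best < move_limit:
        neg, v = heapq.heappop(heap)
        gv = -neg
        if v in moved or gv != gain(g, part, v):
            continue  # vertex already moved, or outdated gain entry
        if gv < gain_threshold:
            break     # every remaining valid entry has an equal or lower gain
        dst = 1 - part[v]
        if size[dst] + 1 > max_block:
            continue  # would break balance; v returns only if a neighbor's move re-queues it
        size[part[v]] -= 1
        size[dst] += 1
        part[v] = dst
        moved.add(v)
        log.append(v)
        current -= gv
        if current < best:
            best, best_len, since_best = current, len(log), 0
        else:
            since_best += 1
        for u in g[v]:  # re-queue local neighbors with their new gains
            if u in local and u not in moved:
                heapq.heappush(heap, (-gain(g, part, u), u))
    for v in log[best_len:]:  # undo moves made after the best cut
        part[v] = 1 - part[v]
    return part, best
```

On two triangles {0, 1, 2} and {3, 4, 5} joined by the edge 2-3, starting from a partition with cut 5, the pass moves vertices 2 and 3 first and then rolls back its later, worsening moves, ending at the natural cut of 1. Restricting `local` to a subset leaves all other vertices untouched, which is the property that lets each processor refine its own region.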

Implementation details include a distributed CSR representation of the graph, per‑processor heaps for priority queues, and aggressive memory reuse during coarsening to keep the memory footprint modest. The code is written in C++ with MPI for inter‑process communication.
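A per-processor CSR slice under a simple block distribution might look like the Python sketch below. The block distribution, the field names `xadj`/`adjncy`/`adjwgt` (borrowed from the METIS convention), and storing neighbors as global ids are assumptions; the summary only says the graph is kept in distributed CSR form.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class LocalCSR:
    first: int         # global id of the first vertex owned by this processor
    xadj: List[int]    # offsets into adjncy/adjwgt: one per local vertex, plus one
    adjncy: List[int]  # global ids of neighbors (may belong to other processors)
    adjwgt: List[int]  # edge weights, parallel to adjncy

    def neighbors(self, v: int) -> List[Tuple[int, int]]:
        i = v - self.first
        lo, hi = self.xadj[i], self.xadj[i + 1]
        return list(zip(self.adjncy[lo:hi], self.adjwgt[lo:hi]))


def vertex_range(n: int, p: int, rank: int) -> Tuple[int, int]:
    """Block distribution: processor `rank` owns global ids [first, last)."""
    return rank * n // p, (rank + 1) * n // p


def build_local_csr(n: int, edges: List[Tuple[int, int, int]],
                    p: int, rank: int) -> LocalCSR:
    first, last = vertex_range(n, p, rank)
    buckets: List[List[Tuple[int, int]]] = [[] for _ in range(last - first)]
    for u, v, w in edges:  # each undirected edge is stored at both endpoints
        if first <= u < last:
            buckets[u - first].append((v, w))
        if first <= v < last:
            buckets[v - first].append((u, w))
    xadj, adjncy, adjwgt = [0], [], []
    for bucket in buckets:
        for v, w in sorted(bucket):
            adjncy.append(v)
            adjwgt.append(w)
        xadj.append(len(adjncy))
    return LocalCSR(first, xadj, adjncy, adjwgt)
```

For the path 0-1-2-3 split over two processors, vertex 1 on processor 0 lists neighbor 2, which is owned by processor 1; entries like this are the cut edges that drive communication.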

Experimental evaluation is extensive. Scalability tests on synthetic and real‑world graphs (up to millions of edges) show near‑linear speedup up to 256 processors, with parallel efficiency remaining above 80 %. Quality tests use Walshaw’s benchmark suite for k‑way partitioning (k = 2, 4, 8, 16, 32, 64, 128). The new system improves upon the previously best known cuts on more than 30 % of the instances, especially for larger k where the local FM refinement shines. Parameter sensitivity studies demonstrate that incorporating vertex degree and clustering information into the edge rating improves matching quality by roughly 5 %, and relaxing the move limit by 10 % accelerates convergence by a factor of 1.3 without degrading cut quality.
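As a reading aid for the scalability numbers, the textbook definitions of speedup and parallel efficiency (standard definitions, not formulas quoted from the paper, with T_p the running time on p processors) turn the 80 % efficiency figure into a speedup bound:

```latex
\begin{aligned}
S(p) &= \frac{T_1}{T_p}, \qquad E(p) = \frac{S(p)}{p} = \frac{T_1}{p\,T_p},\\
E(256) &> 0.8 \;\Longrightarrow\; S(256) > 0.8 \cdot 256 = 204.8 .
\end{aligned}
```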

The authors conclude that the combination of a richer edge‑rating scheme, high‑quality parallel matching, and a truly local FM refinement yields a graph partitioner that simultaneously achieves high solution quality and excellent scalability. They suggest future work on incremental partitioning for dynamic graphs, GPU‑accelerated refinement, and extensions to hypergraph partitioning.
