Packing and Covering Properties of Subspace Codes for Error Control in Random Linear Network Coding

Codes in the projective space and codes in the Grassmannian over a finite field - referred to as subspace codes and constant-dimension codes (CDCs), respectively - have been proposed for error control in random linear network coding. For subspace cod…

Authors: Maximilien Gadouleau, Zhiyuan Yan

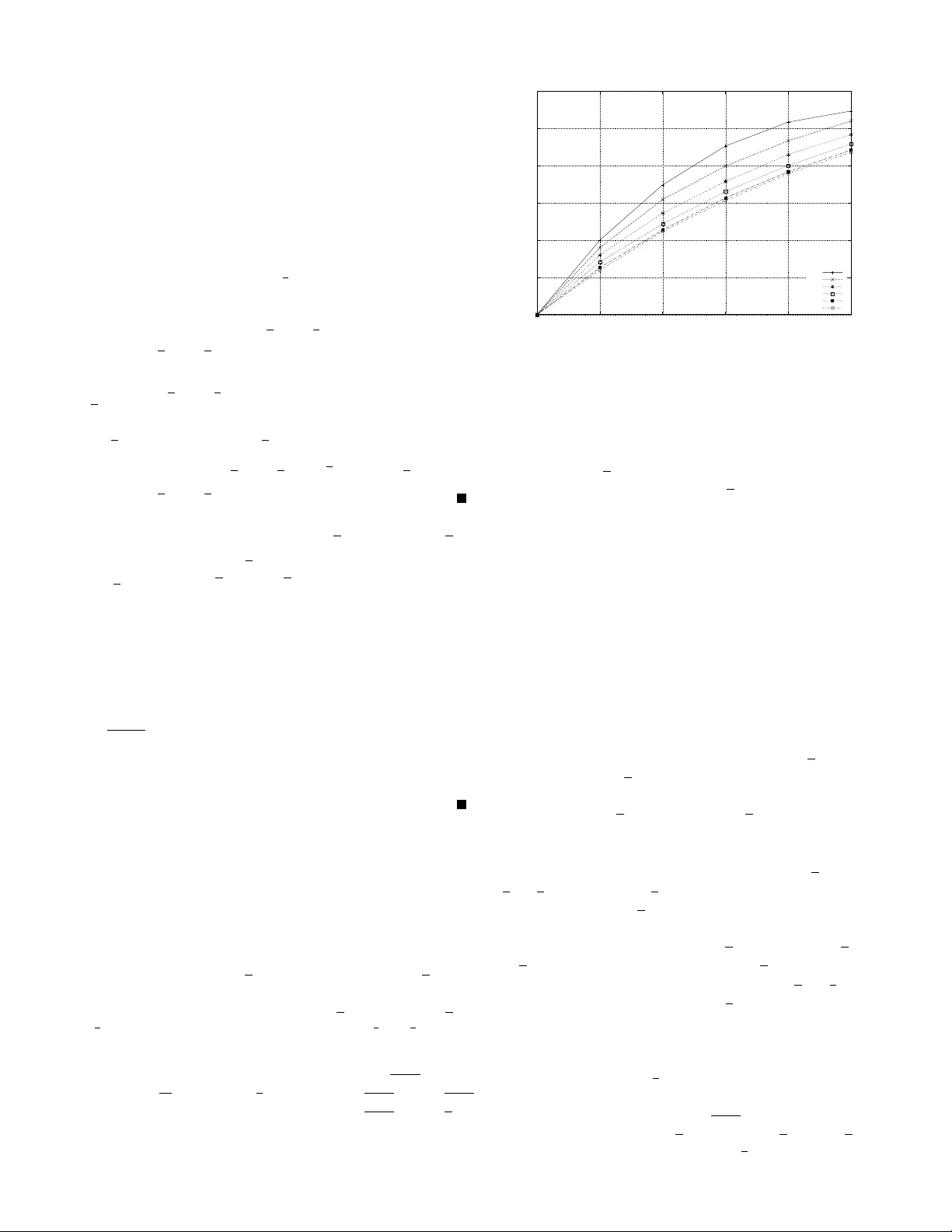

Maximilien Gadouleau, Member, IEEE, and Zhiyuan Yan, Senior Member, IEEE

Abstract: Codes in the projective space and codes in the Grassmannian over a finite field, referred to as subspace codes and constant-dimension codes (CDCs), respectively, have been proposed for error control in random linear network coding. For subspace codes and CDCs, a subspace metric was introduced to correct both errors and erasures, and an injection metric was proposed to correct adversarial errors. In this paper, we investigate the packing and covering properties of subspace codes with both metrics. We first determine some fundamental geometric properties of the projective space with both metrics. Using these properties, we then derive bounds on the cardinalities of packing and covering subspace codes, and determine the asymptotic rates of optimal packing and optimal covering subspace codes with both metrics. Our results not only provide guiding principles for the code design for error control in random linear network coding, but also illustrate the difference between the two metrics from a geometric perspective. In particular, our results show that optimal packing CDCs are optimal packing subspace codes up to a scalar for both metrics if and only if their dimension is half of their length (up to rounding). In this case, CDCs suffer from only limited rate loss as opposed to subspace codes with the same minimum distance. We also show that optimal covering CDCs can be used to construct asymptotically optimal covering subspace codes with the injection metric only.

Index Terms: Network coding, random linear network coding, error control codes, subspace codes, constant-dimension codes, packing, covering, subspace metric, injection metric.

This work was supported in part by Thales Communications Inc. and in part by a grant from the Commonwealth of Pennsylvania, Department of Community and Economic Development, through the Pennsylvania Infrastructure Technology Alliance (PITA). The work of Zhiyuan Yan was supported in part by a summer extension grant from Air Force Research Laboratory, Rome, New York, and the work of Maximilien Gadouleau was supported in part by the ANR project RISC. Part of the material in this paper was presented at the 2009 IEEE International Symposium on Information Theory (ISIT 2009) in Seoul, Korea. Maximilien Gadouleau was with the Department of Electrical and Computer Engineering, Lehigh University, Bethlehem, PA 18015 USA. He is now with CReSTIC, Université de Reims Champagne-Ardenne, Reims 51100 France. Zhiyuan Yan is with the Department of Electrical and Computer Engineering, Lehigh University, Bethlehem, PA 18015 USA (e-mail: maximilien.gadouleau@univ-reims.fr; yan@lehigh.edu).

I. INTRODUCTION

Due to its vector-space preserving property, random linear network coding [1], [2] can be viewed as transmitting subspaces over an operator channel [3]. As such, error control for random linear network coding can be modeled as a coding problem, where codewords are subspaces and the distance is measured by either the subspace distance [3] or the injection metric [4]. Codes in the projective space, referred to as subspace codes henceforth, and codes in the Grassmannian, referred to as constant-dimension codes (CDCs) henceforth, have both been investigated for error control in random linear network coding. Using CDCs is sometimes advantageous, since the fixed dimension of CDCs simplifies the network protocol somewhat [3]. The construction and properties of CDCs have thus attracted a lot of attention. Different constructions of CDCs have been proposed [3], [5]–[7].
Bounds on CDCs based on packing properties are investigated (see, for example, [3], [6], [8], [9]), and the covering properties of CDCs are investigated in [7]. The construction and properties of subspace codes have received less consideration, and previous works on subspace codes (see, for example, [10]–[12]) have focused on the packing properties. In [10], bounds on the maximum cardinality of a subspace code with the subspace metric, notably the counterpart of the Gilbert bound, are derived. Another bound relating the maximum cardinality of CDCs to that of subspace codes is given in [11]. Bounds and constructions of subspace codes are also investigated in [12]. Despite these previous works, two significant problems remain open. First, despite the aforementioned advantage of CDCs, what is the rate loss of CDCs as opposed to subspace codes with the same minimum distance, and hence the same error correction capability? Since random linear network coding achieves multicast capacity with probability exponentially approaching 1 with the length of the code [1], the asymptotic rates of subspace codes and the asymptotic rate loss of CDCs are both significant. The second problem involves the two metrics that have been introduced for subspace codes: what is the difference between the two metrics proposed for subspace codes and CDCs beyond those discussed in [4]? Note that the two questions are somewhat related, since the first question applies to both metrics. The answers to these questions are significant to the code design for error control in random linear network coding.

Aiming to answer these two questions, our work in this paper focuses on the packing and covering properties of subspace codes. Packing and covering properties are not only interesting in their own right as fundamental geometric properties, but also significant for various practical purposes. First, our work is motivated by their significance to the design and decoding of subspace codes.
Since a code can be viewed as a packing of its ambient space, the significance of packing properties is clear. In contrast, the importance of covering properties is more subtle and deserves more explanation. For example, a class of nearly optimal CDCs, referred to as liftings of rank metric codes, have covering radii no less than their minimum distance, so a new codeword can be added without decreasing the minimum distance, and thus they are not optimal CDCs [7]. This example shows how a covering property is relevant to the design of subspace codes. The covering radius also characterizes the decoding performance of a code, since it is the maximum weight of a decodable error by minimum distance decoding [13], and it also has applications to decoding with erasures [14]. Second, covering properties are also important for other reasons. For example, covering properties are important for the security of keystreams against cryptanalytic attacks [15].

Our main contributions in this paper are as follows. For both metrics, we first determine some fundamental geometric properties of the projective space, and then use these properties to derive bounds and to determine the asymptotic rates of subspace codes based on packing and covering. Our results provide some answers to both open problems above. First, our results show that for both metrics, optimal packing CDCs are optimal packing subspace codes up to a scalar if and only if their dimension is half of their length (up to rounding), which implies that in this case CDCs suffer from a limited rate loss as opposed to subspace codes with the same minimum distance. Furthermore, when the asymptotic rate of subspace codes is fixed, the relative subspace distance of optimal subspace codes is twice as much as the relative injection distance. Second, our results illustrate the difference between the two metrics from a geometric perspective. Above all, the projective space has different geometric properties under the two metrics.
The different geometric properties further result in different asymptotic rates of covering codes with the two metrics. With the injection metric, optimal covering CDCs can be used to construct asymptotically optimal covering subspace codes. However, with the subspace metric, this does not hold. To the best of our knowledge, our results on the geometric properties of the projective space are novel, and our investigation of covering properties of subspace codes is the first one in the literature. Note that our investigation of covering properties differs from the study in [7]: while how CDCs cover the Grassmannian was investigated in [7], we consider how subspace codes cover the whole projective space in this paper. Our investigation of packing properties leads to tighter bounds than the Gilbert bound in [10], and our relation between the optimal cardinalities of subspace codes and CDCs is also more precise than that in [11]. Our asymptotic rates based on packing properties also appear to be novel.

The rest of the paper is organized as follows. Section II reviews necessary background on subspace codes, CDCs, and related concepts. In Section III, we investigate the packing and covering properties of subspace codes with the subspace metric. In Section IV, we study the packing and covering properties of subspace codes with the injection metric. Finally, Section V summarizes our results and provides future work directions.

II. PRELIMINARIES

We refer to the set of all subspaces of $\mathrm{GF}(q)^n$ with dimension $r$ as the Grassmannian of dimension $r$ and denote it as $E_r(q,n)$; we refer to $E(q,n) = \bigcup_{r=0}^{n} E_r(q,n)$ as the projective space. We have $|E_r(q,n)| = {n \brack r}$, where
$${n \brack r} = \prod_{i=0}^{r-1} \frac{q^n - q^i}{q^r - q^i}$$
is the Gaussian binomial [16].
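As a small numerical companion to these definitions, the sketch below (our own illustration, not code from the paper; the function names are hypothetical) evaluates the Gaussian binomial exactly from the product formula above and sums it over all dimensions to obtain $|E(q,n)|$.

```python
def gaussian_binomial(n, r, q):
    """Number of r-dimensional subspaces of GF(q)^n, i.e. |E_r(q, n)|,
    from the product formula prod_{i=0}^{r-1} (q^n - q^i) / (q^r - q^i)."""
    if r < 0 or r > n:
        return 0
    numerator, denominator = 1, 1
    for i in range(r):
        numerator *= q**n - q**i
        denominator *= q**r - q**i
    # The quotient is an integer, so exact integer division loses nothing.
    return numerator // denominator


def projective_space_size(n, q):
    """|E(q, n)|: number of subspaces of GF(q)^n of every dimension 0..n."""
    return sum(gaussian_binomial(n, r, q) for r in range(n + 1))


if __name__ == "__main__":
    q, n = 2, 10
    print([gaussian_binomial(n, r, q) for r in range(n + 1)])
    print(projective_space_size(n, q))
```

For $q = 2$ and $n = 10$ the Grassmannian sizes are symmetric in $r$ and peak at $r = 5$, and their sum is $|E(2,10)| = 229{,}755{,}605$, the same number as the $d = 2$ upper-bound entry of Table I (for $d = 2$ the upper bound of Proposition 2 reduces to $|E(q,n)|$).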
A very instrumental result [17] about the Gaussian binomial is that for all $0 \le r \le n$,
$$q^{r(n-r)} \le {n \brack r} < K_q^{-1}\, q^{r(n-r)}, \tag{1}$$
where $K_q = \prod_{j=1}^{\infty}(1 - q^{-j})$ represents the ratio of nonsingular matrices in $\mathrm{GF}(q)^{n \times n}$ as $n$ tends to infinity. By definition, $K_q = \phi(q^{-1})$, where $\phi$ is the Euler function. Furthermore, by the pentagonal number theorem, $K_q = \sum_{n=-\infty}^{\infty}(-1)^n q^{(n-3n^2)/2}$ [18]. Finally, we also have $K_q^{-1} = \sum_{k=0}^{\infty} p(k)\, q^{-k}$, where $p(k)$ is the partition number of $k$ [16].

For $U, V \in E(q,n)$, both the subspace metric [3, (3)]
$$d_S(U,V) \stackrel{\text{def}}{=} \dim(U+V) - \dim(U \cap V)$$
and the injection metric [4, Def. 1]
$$d_I(U,V) \stackrel{\text{def}}{=} \tfrac{1}{2} d_S(U,V) + \tfrac{1}{2}\,|\dim(U) - \dim(V)|$$
$$= \max\{\dim(U), \dim(V)\} - \dim(U \cap V) \tag{2}$$
$$= \dim(U+V) - \min\{\dim(U), \dim(V)\} \tag{3}$$
$$\ge |\dim(U) - \dim(V)|$$
are metrics over $E(q,n)$. For all $U, V \in E(q,n)$,
$$\tfrac{1}{2} d_S(U,V) \le d_I(U,V) \le d_S(U,V), \tag{4}$$
and $d_I(U,V) = \frac{1}{2} d_S(U,V)$ if and only if $\dim(U) = \dim(V)$, and $d_I(U,V) = d_S(U,V)$ if and only if $U \subseteq V$ or $V \subseteq U$.

A subspace code is a nonempty subset of $E(q,n)$. The minimum subspace (respectively, injection) distance of a subspace code is the minimum subspace (respectively, injection) distance over all pairs of distinct codewords. A subset of $E_r(q,n)$ is called a constant-dimension code (CDC). A CDC is thus a subspace code whose codewords have the same dimension. Since $d_I(U,V) = \frac{1}{2} d_S(U,V)$ for CDCs, we focus on the injection metric when considering CDCs. We denote the maximum cardinality of a CDC in $E_r(q,n)$ with minimum injection distance $d$ as $A_C(q,n,r,d)$. We have $A_C(q,n,r,d) = A_C(q,n,n-r,d)$ and $A_C(q,n,r,1) = {n \brack r}$, and it is shown [7], [9] that for $r \le \lfloor n/2 \rfloor$ and $2 \le d \le r$,
$$q^{(n-r)(r-d+1)} + 1 \le A_C(q,n,r,d) \le \frac{{n \brack r-d+1}}{{r \brack r-d+1}} < K_q^{-1}\, q^{(n-r)(r-d+1)}. \tag{5}$$
The lower bound on $A_C(q,n,r,d)$ in (5) is implicit from the code construction in [7], and the upper bounds on $A_C(q,n,r,d)$ in (5) are from [3]. Thus, the CDCs in $E_r(q,n)$ ($r \le \lfloor n/2 \rfloor$) with minimum injection distance $d$ and cardinality $q^{(n-r)(r-d+1)}$ proposed in [3] are optimal up to a scalar; we refer to these CDCs as KK codes henceforth. The covering radius in $E_r(q,n)$ of a CDC $\mathcal{C}$ is defined as $\max_{U \in E_r(q,n)} d_I(U, \mathcal{C})$. We also denote the minimum cardinality of a CDC in $E_r(q,n)$ with covering radius $\rho$ as $K_C(q,n,r,\rho)$ [7]. It was shown in [7] that $K_C(q,n,r,\rho)$ is on the order of $q^{r(n-r) - \rho(n-\rho)}$, and an asymptotically optimal construction of covering CDCs is designed in [7, Proposition 12].

III. PACKING AND COVERING PROPERTIES OF SUBSPACE CODES WITH THE SUBSPACE METRIC

A. Properties of balls with subspace radii

We first investigate the properties of balls with subspace radii in $E(q,n)$, which will be instrumental in our study of packing and covering properties of subspace codes with the subspace metric. We first derive bounds on $|E(q,n)|$ below. In order to simplify notations, we denote $\theta(q) \stackrel{\text{def}}{=} \sum_{n=0}^{\infty} q^{-n^2}$, which is related to the Jacobi theta function $\vartheta_3(z,q) = \sum_{n=-\infty}^{\infty} q^{n^2} e^{2niz}$ by $\theta(q) = \frac{1}{2}\left[\vartheta_3(0, q^{-1}) + 1\right]$ [19]. We remark that $\theta(q) > 1$ for all $q \ge 2$, and that $\theta(q)$ is a decreasing function of $q$ and approaches 1 as $q$ tends to infinity.

Lemma 1: For all $n$, $q^{\lfloor n/2 \rfloor (n - \lfloor n/2 \rfloor)} \le |E(q,n)| < 2K_q^{-1}\theta(q)\, q^{\lfloor n/2 \rfloor (n - \lfloor n/2 \rfloor)}$.

Proof: We have $|E(q,n)| = \sum_{r=0}^{n} {n \brack r} \ge {n \brack \lfloor n/2 \rfloor} \ge q^{\lfloor n/2 \rfloor (n - \lfloor n/2 \rfloor)}$ by (1), which proves the lower bound. Also, ${n \brack n-r} = {n \brack r}$, and hence $\sum_{r=0}^{n} {n \brack r} \le 2\sum_{r=0}^{\lfloor n/2 \rfloor} {n \brack r} < 2K_q^{-1} \sum_{r=0}^{\lfloor n/2 \rfloor} q^{r(n-r)}$ by (1).
Therefore, writing $r = \lfloor n/2 \rfloor - i$ and using $n - 2\lfloor n/2 \rfloor \ge 0$,
$$|E(q,n)| < 2K_q^{-1}\, q^{\lfloor n/2 \rfloor (n - \lfloor n/2 \rfloor)} \sum_{i=0}^{\lfloor n/2 \rfloor} q^{-i(n - 2\lfloor n/2 \rfloor + i)} < 2K_q^{-1}\theta(q)\, q^{\lfloor n/2 \rfloor (n - \lfloor n/2 \rfloor)}.$$

We observe that by (1) and Lemma 1, $|E_r(q,n)|$ is the same as $|E(q,n)|$ up to a scalar when $r = \lfloor n/2 \rfloor$ or $r = n - \lfloor n/2 \rfloor$. That is, the volume of $E_{\lfloor n/2 \rfloor}(q,n)$, which is equal to that of $E_{n - \lfloor n/2 \rfloor}(q,n)$ when $\lfloor n/2 \rfloor \ne n - \lfloor n/2 \rfloor$, dominates the volumes of the other Grassmannians. This geometric property has significant implications for the packing properties of subspace codes.

We now determine the number of subspaces at a given subspace distance from a fixed subspace. Let us denote the number of subspaces with dimension $s$ at subspace distance $d$ from a subspace with dimension $r$ as $N_S(r,s,d)$.

Lemma 2: $N_S(r,s,d)$ is given by $q^{u(d-u)} {r \brack u} {n-r \brack d-u}$ when $u = \frac{r+d-s}{2}$ is an integer, and 0 otherwise.

Proof: For $U \in E_r(q,n)$ and $V \in E_s(q,n)$, $d_S(U,V) = d$ if and only if $\dim(U \cap V) = r - u$. Thus there are ${r \brack r-u} = {r \brack u}$ choices for $U \cap V$. The subspace $V$ is then completed in $q^{u(d-u)} {n-r \brack d-u}$ ways.

We remark that the result in Lemma 2 is implicitly contained in [10, Theorem 5] without an explicit proof. It is formally stated here because it is important to the results in this paper. We also denote the volume of a ball with subspace radius $t$ around a subspace with dimension $r$ as $V_S(r,t) \stackrel{\text{def}}{=} \sum_{d=0}^{t} \sum_{s=0}^{n} N_S(r,s,d)$. We now derive bounds on the volume of a ball with subspace radius. Since $V_S(r,t) = V_S(n-r,t)$ for all $r$ and $t$, we only consider $r \le \lfloor n/2 \rfloor$. Also, we assume $t \le \lfloor n/2 \rfloor$, since only this case will be needed in this paper.
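The metrics and Lemma 2 can be checked by brute force on a tiny example. The sketch below is our own illustration, not code from the paper, and assumes $q = 2$ so that vectors of $\mathrm{GF}(2)^n$ can be stored as $n$-bit integers with XOR as addition. It enumerates every subspace of $\mathrm{GF}(2)^4$ as a frozenset of vectors, computes $d_S$ and $d_I$ from $\dim(U \cap V)$, and compares the observed counts with the formula for $N_S(r,s,d)$; `v_s` sums that formula to give $V_S(r,t)$. All function names are hypothetical.

```python
from collections import Counter


def gaussian_binomial(n, r, q):
    if r < 0 or r > n:
        return 0
    num, den = 1, 1
    for i in range(r):
        num *= q**n - q**i
        den *= q**r - q**i
    return num // den


def all_subspaces(n):
    """Every subspace of GF(2)^n as a frozenset of n-bit integers (+ is XOR)."""
    zero = frozenset({0})
    seen, frontier = {zero}, [zero]
    while frontier:
        next_frontier = []
        for s in frontier:
            for v in range(1 << n):
                if v not in s:
                    t = s | frozenset(x ^ v for x in s)  # span of s and v
                    if t not in seen:
                        seen.add(t)
                        next_frontier.append(t)
        frontier = next_frontier
    return list(seen)


def dim(s):
    return len(s).bit_length() - 1  # a subspace of dimension k has 2^k vectors


def d_subspace(a, b):
    # dim(A+B) - dim(A∩B) = dim A + dim B - 2 dim(A∩B)
    return dim(a) + dim(b) - 2 * dim(a & b)


def d_injection(a, b):
    return max(dim(a), dim(b)) - dim(a & b)  # form (2)


def n_s(n, r, s, d, q=2):
    """N_S(r, s, d) as given by Lemma 2."""
    if (r + d - s) % 2:
        return 0
    u = (r + d - s) // 2
    if u < 0 or u > d:
        return 0
    return q ** (u * (d - u)) * gaussian_binomial(r, u, q) * gaussian_binomial(n - r, d - u, q)


def v_s(n, r, t, q=2):
    """V_S(r, t): volume of a ball of subspace radius t around an r-dim subspace."""
    return sum(n_s(n, r, s, d, q) for d in range(t + 1) for s in range(n + 1))


def check_lemma2(n):
    """Compare brute-force counts in GF(2)^n with Lemma 2 (tiny n only)."""
    spaces = all_subspaces(n)
    for a in spaces:
        counts = Counter((dim(b), d_subspace(a, b)) for b in spaces)
        for s in range(n + 1):
            for d in range(n + 1):
                if counts[(s, d)] != n_s(n, dim(a), s, d):
                    return False
    return True
```

The enumeration grows exponentially with $n$, so this is only a sanity check for very small parameters, not a practical way to evaluate $V_S(r,t)$.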
Proposition 1: For all $q$, $n$, $r \le \lfloor n/2 \rfloor$, and $t \le \lfloor n/2 \rfloor$,
$$q^{-\frac{3}{4}}\, q^{g(r,t)} \le V_S(r,t) \le 2\,\theta(q^3)\, K_q^{-2}\,(1 + q^{-\frac{4}{3}})\,\theta(q^{\frac{3}{4}})\, q^{g(r,t)},$$
where
$$g(r,t) = \begin{cases} t(n-r-t) & \text{for } t \le \frac{n-2r}{3}, \\ \frac{1}{12}(n-2r)^2 + \frac{1}{4}t(2n-t) & \text{for } \frac{n-2r}{3} < t \le \frac{n+4r}{3}, \\ (t-r)(n-t+r) & \text{for } \frac{n+4r}{3} < t \le \lfloor n/2 \rfloor. \end{cases}$$

The proof of Proposition 1 is given in Appendix A. We remark that the lower and upper bounds on $V_S(r,t)$ in Proposition 1 are tight up to a scalar, and that $g(r,t)$ depends on both $r$ and $t$. We also observe that $g(r,t)$ decreases with $r$ for $r \le \lfloor n/2 \rfloor$. That is, the volume of a ball around a subspace of dimension $r$ ($r \le \lfloor n/2 \rfloor$) decreases with $r$. This observation is significant to the covering properties of subspace codes with the subspace metric. Figure 1, where we show $\log_2[V_S(q,n,r,t)]$ for $q = 2$, $n = 10$, $0 \le r \le 5$, and $0 \le t \le 5$, illustrates this observation.

[Fig. 1. Volume of a ball of subspace radius in $E(2,10)$ as a function of the dimension of its center and of its radius: $\log_2 V_S(2,10,r,t)$ plotted against $t$ for $r = 0, 1, \dots, 5$.]

B. Packing properties of subspace codes with the subspace metric

We are interested in packing subspace codes used with the subspace metric. The maximum cardinality of a code in $E(q,n)$ with minimum subspace distance $d$ is denoted as $A_S(q,n,d)$. Since $A_S(q,n,1) = |E(q,n)|$, we assume $d \ge 2$ henceforth.

We can relate $A_S(q,n,d)$ to $A_C(q,n,r,d)$. First, we remark that $\max_{0 \le r \le n} A_C(q,n,r,d) = A_C(q,n,\lfloor n/2 \rfloor,d)$ for all $q$, $n$, and $d \le \lfloor n/2 \rfloor$. The claim is obvious for $d = 1$, and easily shown for $d > 1$ by using (1). We also remark that $A_C(q,n,\lfloor n/2 \rfloor,d) = A_C(q,n,\lceil n/2 \rceil,d)$. For all $J \subseteq \{0, 1, \dots, n\}$, we denote the maximum cardinality of a code with minimum subspace distance $d$ and codewords having dimensions in $J$ as $A_S(q,n,d,J)$. For $2 \le d \le 2\lfloor n/2 \rfloor$, let $R_d \stackrel{\text{def}}{=} \{\lceil d/2 \rceil, \lceil d/2 \rceil + 1, \dots, n - \lceil d/2 \rceil\}$. Proposition 2 below compares $A_S(q,n,d)$ to $A_C(q,n,\lfloor n/2 \rfloor,\lceil d/2 \rceil)$ and shows that $A_S(q,n,d,R_d)$ is a good approximation of $A_S(q,n,d)$.

Proposition 2: For $n = d = 2\lfloor n/2 \rfloor + 1$ (that is, $n$ odd and $d = n$), $A_S(q, 2\lfloor n/2 \rfloor + 1, 2\lfloor n/2 \rfloor + 1) = 2$, and for $2 \le d \le 2\lfloor n/2 \rfloor$, $A_S(q,n,d) \le A_S(q,n,d,R_d) + 2$. Also, we have
$$A_C(q,n,\lfloor n/2 \rfloor,\lceil d/2 \rceil) \le A_S(q,n,d) \le 2 + \sum_{r \in R_d} A_C(q,n,r,\lceil d/2 \rceil).$$

Proof: Let $\mathcal{C}$ be a code in $E(q,n)$ with minimum subspace distance $d$. For $C, D \in \mathcal{C}$, we have $\dim(C) + \dim(D) \ge d_S(C,D) \ge d$; therefore there is at most one codeword with dimension less than $d/2$. Similarly, $\dim(C) + \dim(D) \le 2n - d_S(C,D) \le 2n - d$; therefore there is at most one codeword with dimension greater than $(2n-d)/2$. Thus $A_S(q,n,d) \le A_S(q,n,d,R_d) + 2$ for $d \le 2\lfloor n/2 \rfloor$, and $A_S(q, 2\lfloor n/2 \rfloor + 1, 2\lfloor n/2 \rfloor + 1) \le 2$. Since the code $\{\{0\}, \mathrm{GF}(q)^{2\lfloor n/2 \rfloor + 1}\}$ has minimum subspace distance $2\lfloor n/2 \rfloor + 1$, we obtain $A_S(q, 2\lfloor n/2 \rfloor + 1, 2\lfloor n/2 \rfloor + 1) = 2$.

A CDC in $E_r(q,n)$ with minimum injection distance $\lceil d/2 \rceil$ has minimum subspace distance at least $d$, and hence $A_C(q,n,r,\lceil d/2 \rceil) \le A_S(q,n,d)$ for all $r$. Also, the codewords with dimension $r$ in a code with minimum subspace distance $d$ form a CDC in $E_r(q,n)$ with minimum injection distance at least $\lceil d/2 \rceil$, and hence $A_S(q,n,d) \le A_S(q,n,d,R_d) + 2 \le 2 + \sum_{r \in R_d} A_C(q,n,r,\lceil d/2 \rceil)$.

We compare our lower bound on $A_S(q,n,d)$ in Proposition 2 to the Gilbert bound in [10, Theorem 5]. The latter shows that $A_S(q,n,d) \ge \frac{|E(q,n)|}{\mathrm{avg}\{V_S(r,d-1)\}}$, where the average volume $\mathrm{avg}\{V_S(r,d-1)\}$ is taken over all subspaces in $E(q,n)$. Using the bounds on $V_S(r,d-1)$ in Proposition 1, it can be shown that this lower bound is at most $2K_q^{-1}\theta(q)\, q^{\frac{3}{4}}\, q^{\lfloor n/2 \rfloor (n - \lfloor n/2 \rfloor) - \frac{1}{4}(d-1)(2n-d+1)}$.
On the other hand, Proposition 2 and (5) yield $A_S(q,n,d) \ge q^{\lfloor n/2 \rfloor (n - \lfloor n/2 \rfloor) - \frac{1}{4}(d-1)(n+1)}$. The ratio between our lower bound and the Gilbert bound is hence at least $\frac{1}{2} K_q\, \theta(q)^{-1}\, q^{\frac{1}{4}(d-1)(n-d)} \ge 1$ for all $n$ and $d$. Therefore, our lower bound in Proposition 2 is tighter than the Gilbert bound in [10, Theorem 5]. The lower bound in Proposition 2 is further tightened below by considering the union of CDCs in different Grassmannians.

Proposition 3: For all $q$, $n$, and $2 \le d \le n$, we have $A_S(q,n,d) \ge \sum_{i=-z}^{z} A_C(q, n, \lfloor n/2 \rfloor - id, \lceil d/2 \rceil)$, where $z = \left\lfloor \frac{\lfloor n/2 \rfloor}{d} \right\rfloor$.

Proof: For $i = -z, -z+1, \dots, z$, let $\mathcal{C}_i$ be a CDC in $E_{\lfloor n/2 \rfloor - id}(q,n)$ with minimum subspace distance $2\lceil d/2 \rceil$ and cardinality $A_C(q, n, \lfloor n/2 \rfloor - id, \lceil d/2 \rceil)$, and let $\mathcal{C} = \bigcup_{i=-z}^{z} \mathcal{C}_i$. We have $|\mathcal{C}| = \sum_{i=-z}^{z} |\mathcal{C}_i|$, and we now prove that $\mathcal{C}$ has minimum subspace distance at least $d$ by considering two distinct codewords $C_i^j \in \mathcal{C}_i$ and $C_a^b \in \mathcal{C}_a$. First, if $i \ne a$, then $d_S(C_i^j, C_a^b) \ge |\dim(C_i^j) - \dim(C_a^b)| = |i - a|\,d \ge d$; second, if $i = a$ and $j \ne b$, then $d_S(C_a^j, C_a^b) \ge 2\lceil d/2 \rceil \ge d$ by the minimum distance of $\mathcal{C}_a$.

In order to characterize the rate loss incurred by using CDCs instead of subspace codes, we now compare the cardinalities of optimal subspace codes and optimal CDCs with the same minimum subspace distance $d$. Note that the bounds on the cardinalities of optimal CDCs in (5) assume the injection metric for CDCs. When $d$ is even, a CDC with minimum subspace distance $d$ has minimum injection distance $d/2$. When $d$ is odd, a CDC with minimum subspace distance $d+1$ has minimum injection distance $\frac{d+1}{2} = \lceil d/2 \rceil$. Thus, a CDC has minimum subspace distance at least $d$ if and only if it has minimum injection distance at least $\lceil d/2 \rceil$. Hence, we compare $A_S(q,n,d)$ and $A_C(q,n,r,\lceil d/2 \rceil)$ in Proposition 4 below.

Proposition 4: (Comparison between optimal subspace codes and CDCs in the subspace metric).
For $2 \le d \le 2\lfloor n/2 \rfloor$ and $\lceil d/2 \rceil \le r \le \lfloor n/2 \rfloor$,
$$K_q\, q^{(\lfloor n/2 \rfloor - r)(\lfloor n/2 \rfloor - r + \lceil d/2 \rceil - 1)} A_C(q,n,r,\lceil d/2 \rceil) < A_S(q,n,d) < 2K_q^{-1}\theta(q)\, q^{(\lfloor n/2 \rfloor - r)(\lfloor n/2 \rfloor - r + \lceil d/2 \rceil - 1)} A_C(q,n,r,\lceil d/2 \rceil). \tag{6}$$

Proof: By (1), Proposition 2, and (5), we have
$$A_S(q,n,d) \ge A_C(q,n,\lfloor n/2 \rfloor,\lceil d/2 \rceil) \ge q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \lceil d/2 \rceil + 1)} > K_q\, q^{(\lfloor n/2 \rfloor - r)(\lfloor n/2 \rfloor - r + \lceil d/2 \rceil - 1)} A_C(q,n,r,\lceil d/2 \rceil).$$
Proposition 2 and (1) also lead to
$$A_S(q,n,d) < 2 + 2K_q^{-1} \sum_{r=\lceil d/2 \rceil}^{\lfloor n/2 \rfloor} q^{(n-r)(r - \lceil d/2 \rceil + 1)} = 2 + 2K_q^{-1}\, q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \lceil d/2 \rceil + 1)} \sum_{i=0}^{\lfloor n/2 \rfloor - \lceil d/2 \rceil} q^{-i(n - 2\lfloor n/2 \rfloor + \lceil d/2 \rceil - 1 + i)},$$
where $i = \lfloor n/2 \rfloor - r$. Since $n - 2\lfloor n/2 \rfloor \ge 0$ and $\lceil d/2 \rceil - 1 \ge 0$, we obtain
$$A_S(q,n,d) < 2 + 2K_q^{-1}\theta(q)\, q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \lceil d/2 \rceil + 1)} \le 2K_q^{-1}\theta(q)\, q^{(\lfloor n/2 \rfloor - r)(\lfloor n/2 \rfloor - r + \lceil d/2 \rceil - 1)} A_C(q,n,r,\lceil d/2 \rceil), \tag{7}$$
where (7) follows from (5).

We now compare the relation between $A_S(q,n,d)$ and $A_C(q,n,r,\lceil d/2 \rceil)$ in Proposition 4 to the one determined in [11, Theorem 5]. The latter only provides the following lower bound on $A_S(q,n,d)$:
$$A_S(q,n,d) \ge \frac{q^{n+1-r} + q^r - 2}{q^{n+1} - 1}\, A_C(q, n+1, r, \lceil d/2 \rceil + 1).$$
The Singleton bound on CDCs [3] indicates that $A_C(q,n+1,r,d+1) \le A_C(q,n,r-1,d)$, which in turn satisfies $A_C(q,n,r-1,d) < K_q^{-1} q^{-(n-2r+d)} A_C(q,n,r,d)$ by (1). Hence the lower bound on $A_S(q,n,d)$ in [11, Theorem 5] is at most $2K_q^{-1} q^{-(n - r + \lceil d/2 \rceil - 1)} A_C(q,n,r,\lceil d/2 \rceil)$. The ratio between our lower bound in Proposition 4 and the lower bound in [11, Theorem 5] is at least $\frac{K_q^2}{2}\, q^{n - \lfloor n/2 \rfloor + (\lfloor n/2 \rfloor - r + 1)(\lfloor n/2 \rfloor - r + \lceil d/2 \rceil - 1)}$, and thus our lower bound in Proposition 4 is tighter than the bound in [11, Theorem 5] in all cases.

The bounds in Proposition 4 help us determine the asymptotic behavior of $A_S(q,n,d)$.
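The union construction in the proof of Proposition 3 only needs the dimension levels $\lfloor n/2 \rfloor - id$ and a CDC size at each level. The sketch below is our own illustration under an explicit assumption: instead of the best known CDCs used for Table I, it plugs in the lower bound of (5), $q^{(n-r)(r-\delta+1)} + 1$ with $\delta = \lceil d/2 \rceil$ and $r$ replaced by $\min\{r, n-r\}$; it uses ${n \brack r}$ when $\delta = 1$, and a single codeword when $\delta > \min\{r, n-r\}$ (two distinct $r$-dimensional subspaces are at injection distance at most $\min\{r, n-r\}$). The function names are hypothetical.

```python
def gaussian_binomial(n, r, q):
    if r < 0 or r > n:
        return 0
    num, den = 1, 1
    for i in range(r):
        num *= q**n - q**i
        den *= q**r - q**i
    return num // den


def cdc_size_lower_bound(q, n, r, delta):
    """A lower bound on A_C(q, n, r, delta); assumption: (5), not the best known codes."""
    m = min(r, n - r)  # A_C(q, n, r, delta) = A_C(q, n, n - r, delta)
    if delta == 1:
        return gaussian_binomial(n, r, q)  # the whole Grassmannian
    if delta > m:
        return 1  # distinct r-dim subspaces are within injection distance m
    return q ** ((n - m) * (m - delta + 1)) + 1  # lower bound in (5)


def prop3_levels(n, d):
    """Dimensions floor(n/2) - i*d for i = -z..z, z = floor(floor(n/2) / d)."""
    half = n // 2
    z = half // d
    return [half - i * d for i in range(-z, z + 1)]


def prop3_lower_bound(q, n, d):
    """Proposition 3 with each A_C replaced by cdc_size_lower_bound."""
    delta = -(-d // 2)  # ceil(d / 2)
    return sum(cdc_size_lower_bound(q, n, r, delta) for r in prop3_levels(n, d))


if __name__ == "__main__":
    for d in range(2, 11):
        print(d, prop3_levels(10, d), prop3_lower_bound(2, 10, d))
```

For $q = 2$, $n = 10$, and $d = 7, \dots, 10$, this gives 1,025 and 33, the same values as the Proposition 3 column of Table I; for $d = 3, \dots, 6$ the table relies on the constructions of [11], which give larger values than this sketch.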
We first define the rate of a subspace code $\mathcal{C} \subseteq E(q,n)$ as $\frac{\log_q |\mathcal{C}|}{\log_q |E(q,n)|}$. We note that this definition is combinatorial, and differs from the rate introduced in [3] for CDCs. The rate defined in [3] also accounts for the channel usage, but it seems appropriate for CDCs only. On the other hand, our rate depends only on the cardinality of the code, and hence is more appropriate for comparing general subspace codes, since all the subspaces are treated equally regardless of their dimension. Finally, the rate defined in [3] can be derived from our rate defined here. Using the normalized parameters $r' \stackrel{\text{def}}{=} \frac{r}{n}$ and $d'_S \stackrel{\text{def}}{=} \frac{d_S}{n}$, where $d_S$ is the minimum subspace distance of a code, the asymptotic rate of a subspace code
$$a_S(d'_S) = \limsup_{n \to \infty} \frac{\log_q A_S(q, n, \lceil n d'_S \rceil)}{\log_q |E(q,n)|}$$
and that of a CDC of given dimension
$$a_S(r', d'_S) = \limsup_{n \to \infty} \frac{\log_q A_C(q, n, n r', \lceil n d'_S / 2 \rceil)}{\log_q |E(q,n)|}$$
can be easily determined.

Proposition 5: (Asymptotic rate of packing subspace codes in the subspace metric). For $0 \le d'_S \le 1$, $a_S(d'_S) = 1 - d'_S$. For $0 \le r' \le \frac{d'_S}{2}$ or $1 - \frac{d'_S}{2} \le r' \le 1$, $a_S(r', d'_S) = 0$; for $\frac{d'_S}{2} \le r' \le \frac{1}{2}$, $a_S(r', d'_S) = 2(1 - r')(2r' - d'_S)$; for $\frac{1}{2} \le r' \le 1 - \frac{d'_S}{2}$, $a_S(r', d'_S) = 2r'(2 - 2r' - d'_S)$.

Proof: First, (5) and Lemma 1 yield $a_S(r', d'_S) = 2(1 - r')(2r' - d'_S)$ for $\frac{d'_S}{2} \le r' \le \frac{1}{2}$. Since $A_C(q,n,r,\lceil d/2 \rceil) = A_C(q,n,n-r,\lceil d/2 \rceil)$, we also obtain $a_S(r', d'_S) = 2r'(2 - 2r' - d'_S)$ for $\frac{1}{2} \le r' \le 1 - \frac{d'_S}{2}$. Moreover, two distinct subspaces of dimension $r$ are at injection distance at most $\min\{r, n-r\}$, so a CDC in $E_r(q,n)$ with minimum injection distance $\lceil d/2 \rceil > \min\{r, n-r\}$ has at most one codeword, which gives $a_S(r', d'_S) = 0$ in the remaining ranges. Second, (6) for $r = \lfloor n/2 \rfloor$ and (5) yield $a_S(d'_S) = a_S(\frac{1}{2}, d'_S) = 1 - d'_S$.

Propositions 4 and 5 provide several important insights. First, Proposition 4 indicates that optimal CDCs with dimension equal to half of the block length up to rounding ($r = \lfloor n/2 \rfloor$ and $r = n - \lfloor n/2 \rfloor$) are optimal subspace codes up to a scalar.
In this case, the optimal CDCs have a limited rate loss as opposed to optimal subspace codes with the same error correction capability. When $r < \lfloor n/2 \rfloor$, the rate loss suffered by optimal CDCs increases with $\lfloor n/2 \rfloor - r$. Proposition 5 indicates that using CDCs with dimension $\frac{d_S}{2} \le r < \frac{n}{2}$ leads to a decrease in rate on the order of $(1 - 2r')(d'_S + 1 - 2r')$, where $r' = \frac{r}{n}$. Since the rate loss increases with $1 - 2r'$, using a CDC with a dimension further from $\frac{n}{2}$ leads to a larger rate loss.

The conclusion above can be explained from a combinatorial perspective as well. When $r = \lfloor n/2 \rfloor$ or $r = n - \lfloor n/2 \rfloor$, by Lemma 1, $|E(q,n)|$ is the same as $|E_r(q,n)| = {n \brack r}$ up to a scalar. Thus it is not surprising that the optimal packings in $E(q,n)$ are the same as those in $E_r(q,n)$ up to a scalar.

We also comment that the asymptotic rates in Proposition 5 for subspace codes come from Singleton bounds. The asymptotic rate $a_S(r', d'_S)$ is achieved by KK codes. The asymptotic rate $a_S(d'_S)$ is similar to that for rank metric codes [20]. This can be explained by the fact that the asymptotic rate $a_S(d'_S)$ is also achieved by KK codes when $r = \lfloor n/2 \rfloor$, whose cardinalities are equal to those of optimal rank metric codes.

In Table I, we compare the bounds on $A_S(q,n,d)$ derived in this paper with each other and with existing bounds in the literature, for $q = 2$, $n = 10$, and $d$ ranging from 2 to 10. We consider the lower bound in Proposition 2, its refinement in Proposition 3, and the lower bounds in [10] and [11, Theorem 5] described above; the upper bound comes from Proposition 2. Note that Proposition 4 is not included in the comparison, since its purpose is to compare the cardinalities of optimal subspace codes and optimal CDCs with the same minimum subspace distance.
Since the bounds in Propositions 2 and 3 and [11, Theorem 5] depend on the cardinalities of either related CDCs or optimal CDCs, we use the cardinalities of CDCs with dimension $r = n/2 = 5$ proposed in [11] and [7] as lower bounds on $A_C(q,n,r,d)$, and the upper bound in [9] on $A_C(q,n,r,d)$, to derive the numbers in Table I. For example, the lower bound of Proposition 2 is simply given by the construction in [11] when $d = 3, 4, 5$, and 6, and by the construction in [7] for the other values of $d$. Table I illustrates that our lower bounds in Propositions 2 and 3 are tighter than those in [10] and [11, Theorem 5]. The cardinalities of CDCs with dimension $r = n/2$ in [11] and [7], displayed as the lower bound in Proposition 2, are quite close to the lower bound in Proposition 3, supporting our conclusion that the rate loss suffered by properly designed CDCs is smaller when the dimension is close to $n/2$. Also, the lower and upper bounds in Proposition 2 depend on $\lceil d/2 \rceil$, and hence the bounds for $d = 2l$ and $d = 2l - 1$ are the same. Finally, the tightness of the bounds improves as the minimum distance of the code increases, leading to very tight bounds for $d = n$.

C. Covering properties of subspace codes with the subspace metric

We now consider the covering properties of subspace codes with the subspace metric. The subspace covering radius in $E(q,n)$ of a code $\mathcal{C}$ is defined as $\max_{U \in E(q,n)} d_S(U, \mathcal{C})$. We denote the minimum cardinality of a subspace code in $E(q,n)$ with subspace covering radius $\rho$ as $K_S(q,n,\rho)$. Since $K_S(q,n,0) = |E(q,n)|$ and $K_S(q,n,n) = 1$, we assume $0 < \rho < n$ henceforth. We determine below the minimum cardinality of a code with subspace covering radius $\rho \ge \lfloor n/2 \rfloor$.

Proposition 6: For $\lfloor n/2 \rfloor \le \rho < n$, $K_S(q,n,\rho) = 2$.

Proof: For all $V \in E(q,n)$, there exists $\bar{V}$ such that $V \cap \bar{V} = \{0\}$ and $V + \bar{V} = \mathrm{GF}(q)^n$, and hence $d_S(V, \bar{V}) = n$.
Therefore, one subspace cannot cover the whole $E(q,n)$ with radius $\rho < n$, hence $K_S(q,n,\rho) > 1$. Let $\mathcal{C} = \{\{0\}, \mathrm{GF}(q)^n\}$; then for all $D \in E(q,n)$, $d_S(D, \mathcal{C}) = \min\{\dim(D), n - \dim(D)\} \le \lfloor n/2 \rfloor$. Thus $\mathcal{C}$ has covering radius $\lfloor n/2 \rfloor$, and $K_S(q,n,\rho) \le 2$ for all $\rho \ge \lfloor n/2 \rfloor$.

We thus consider $0 < \rho < \lfloor n/2 \rfloor$ henceforth. Proposition 7 below can be viewed as the sphere covering bound for subspace codes with the subspace metric, as it considers how a subspace code covers each Grassmannian $E_r(q,n)$ for $0 \le r \le n$.

Proposition 7: (Sphere covering bound for the subspace metric). For all $q$, $n$, and $0 < \rho < \lfloor n/2 \rfloor$, $K_S(q,n,\rho) \ge \min \sum_{i=0}^{n} A_i$, where the minimum is taken over all integer sequences $\{A_i\}$ satisfying $0 \le A_i \le {n \brack i}$ for all $0 \le i \le n$ and $\sum_{i=0}^{n} A_i \sum_{d=0}^{\rho} N_S(i,r,d) \ge {n \brack r}$ for $0 \le r \le n$.

Proof: Let $\mathcal{C}$ be a subspace code with covering radius $\rho$, and let $A_i$ denote the number of subspaces with dimension $i$ in $\mathcal{C}$. Then $0 \le A_i \le {n \brack i}$ for all $0 \le i \le n$. All subspaces with dimension $r$ are covered; however, a codeword with dimension $i$ covers exactly $\sum_{d=0}^{\rho} N_S(i,r,d)$ subspaces with dimension $r$, hence $\sum_{i=0}^{n} A_i \sum_{d=0}^{\rho} N_S(i,r,d) \ge {n \brack r}$ for $0 \le r \le n$.

We remark that the lower bound in Proposition 7 is based on the optimal solution to an integer linear program, and hence determining this lower bound is computationally infeasible for large parameter values.

We now derive upper bounds on $K_S(q,n,\rho)$. Since $|E_{\lfloor n/2 \rfloor}(q,n)|$ is equal to $|E(q,n)|$ up to a scalar, the main issue with designing covering subspace codes is to cover $E_{\lfloor n/2 \rfloor}(q,n)$. In Proposition 8, we use subspaces in $E_r(q,n)$ in order to cover the Grassmannian $E_{r+\rho}(q,n)$ for $r + \rho \le \lfloor n/2 \rfloor$; in particular, $E_{\lfloor n/2 \rfloor}(q,n)$ is covered using subspaces in $E_{\lfloor n/2 \rfloor - \rho}(q,n)$.
This choice is in fact asymptotically optimal, as we shall show in Proposition 10.

TABLE I
COMPARISON OF BOUNDS ON $A_S(2,10,d)$ FOR $d$ FROM 2 TO 10

| $d$ | Lower bound: Proposition 2 | Lower bound: Proposition 3 | Lower bound: [10] | Lower bound: [11, Theorem 5] | Upper bound: Proposition 2 |
|---|---|---|---|---|---|
| 2 | 52,494,849 | 59,058,177 | 3,181,506 | 3,073,032 | 229,755,605 |
| 3 | 1,167,327 | 1,167,967 | 64,047 | 88,163 | 2,616,760 |
| 4 | 1,167,327 | 1,167,329 | 64,047 | 4,650 | 2,616,760 |
| 5 | 32,841 | 32,843 | 1,986 | 397 | 50,708 |
| 6 | 32,841 | 32,841 | 1,986 | 54 | 50,708 |
| 7 | 1,025 | 1,025 | 63 | 12 | 1,260 |
| 8 | 1,025 | 1,025 | 63 | 4 | 1,260 |
| 9 | 33 | 33 | 2 | 2 | 35 |
| 10 | 33 | 33 | 2 | 2 | 35 |

The upper bound in Proposition 8 below is based on the universal greedy algorithm in [14, Theorem 12.2.1] for constructing covering codes, which we briefly review below for subspaces. The algorithm begins by selecting as the first codeword one of the subspaces that cover the most subspaces, and then keeps adding subspaces to the code. Each new codeword is selected so as to cover the most subspaces not yet covered by the code (if several subspaces cover the same number of subspaces, then the new codeword is chosen randomly). The algorithm eventually stops once all subspaces are covered. Although the cardinality of the code obtained by this algorithm is not fixed, an upper bound on its value is given in [14, Theorem 12.2.1]. The bound in Proposition 8 adapts this algorithm to cover each Grassmannian $E_{r+\rho}(q,n)$ for $r + \rho \le \lfloor n/2 \rfloor$ by subspaces in $E_r(q,n)$. We remark that the bound in Proposition 8 is only semi-constructive, as it determines an algorithm to construct covering subspace codes but does not design the actual codes. We also remark that the bound in Proposition 8 can be further tightened by using the bounds on the greedy algorithm derived in [21], [22].

Proposition 8: For all $q$, $n$, and $0 < \rho < \lfloor n/2 \rfloor$, $K_S(q,n,\rho) \le 2 + 2\sum_{r=\rho+1}^{\lfloor n/2 \rfloor} \lfloor k_r \rfloor$, where
$$k_r = \frac{{n \brack r}}{{n-r+\rho \brack \rho}} + \frac{{n \brack r-\rho}}{{r \brack \rho}} \ln {n-r+\rho \brack \rho}.$$
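The greedy procedure reviewed above can be illustrated on a toy instance before turning to the proof. The sketch below is ours, not the construction used in the paper, and makes two simplifying assumptions: $q = 2$ and $n = 4$, so that all 67 subspaces of $\mathrm{GF}(2)^4$ can be enumerated as sets of bit-vectors, and ties are broken by taking the first best candidate instead of a random one. The first call covers the whole projective space with radius 1; the second mirrors the setting of Proposition 8 by covering $E_2(2,4)$ with subspaces from $E_1(2,4)$, where each row of the matrix $A$ has ${2 \brack 1} = 3$ ones, each column has ${3 \brack 1} = 7$ ones, and $k_2 = 35/7 + (15/3)\ln 7 \approx 14.7$. Function names are hypothetical.

```python
def all_subspaces(n):
    """Every subspace of GF(2)^n as a frozenset of n-bit integers (+ is XOR)."""
    zero = frozenset({0})
    seen, frontier = {zero}, [zero]
    while frontier:
        next_frontier = []
        for s in frontier:
            for v in range(1 << n):
                if v not in s:
                    t = s | frozenset(x ^ v for x in s)  # span of s and v
                    if t not in seen:
                        seen.add(t)
                        next_frontier.append(t)
        frontier = next_frontier
    return list(seen)


def dim(s):
    return len(s).bit_length() - 1  # a subspace of dimension k has 2^k vectors


def d_subspace(a, b):
    return dim(a) + dim(b) - 2 * dim(a & b)


def greedy_cover(targets, candidates, radius):
    """Repeatedly add the candidate covering the most still-uncovered targets.
    Ties go to the first best candidate (the paper's version breaks ties at random)."""
    balls = [{i for i, t in enumerate(targets) if d_subspace(c, t) <= radius}
             for c in candidates]
    uncovered = set(range(len(targets)))
    code = []
    while uncovered:
        best = max(range(len(candidates)), key=lambda j: len(balls[j] & uncovered))
        if not balls[best] & uncovered:
            raise ValueError("the candidates cannot cover every target")
        code.append(candidates[best])
        uncovered -= balls[best]
    return code


def covering_radius(code, targets):
    return max(min(d_subspace(c, t) for c in code) for t in targets)


if __name__ == "__main__":
    spaces = all_subspaces(4)
    code = greedy_cover(spaces, spaces, radius=1)
    print(len(code), covering_radius(code, spaces))
    planes = [s for s in spaces if dim(s) == 2]
    lines = [s for s in spaces if dim(s) == 1]
    print(len(greedy_cover(planes, lines, radius=1)))
```

The exact code sizes depend on the enumeration order, so only the covering property and the count bounds are worth relying on here.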
Proof: We show that there exists a code with cardinality $2 + 2\sum_{r=\rho+1}^{\lfloor n/2 \rfloor} \lfloor k_r \rfloor$ and covering radius $\rho$. We choose $\{0\}$ to be in the code, hence all subspaces with dimension $0 \leq r \leq \rho$ are covered. For $\rho + 1 \leq r \leq \lfloor n/2 \rfloor$, let $A$ be the ${n \brack r}_q \times {n \brack r-\rho}_q$ binary matrix whose rows represent the subspaces $U_i \in E_r(q,n)$ and whose columns represent the subspaces $V_j \in E_{r-\rho}(q,n)$, where $a_{i,j} = 1$ if and only if $d_S(U_i, V_j) = \rho$. Then there are exactly $N_S(r, r-\rho, \rho) = {r \brack \rho}_q$ ones in each row and $N_S(r-\rho, r, \rho) = {n-r+\rho \brack \rho}_q$ ones in each column. By [14, Theorem 12.2.1], there exists an ${n \brack r}_q \times \lfloor k_r \rfloor$ submatrix of $A$ with no all-zero rows. Thus, all subspaces of dimension $r$ can be covered using $\lfloor k_r \rfloor$ codewords. Summing over all $r$, all subspaces with dimension $0 \leq r \leq \lfloor n/2 \rfloor$ can be covered with $1 + \sum_{r=\rho+1}^{\lfloor n/2 \rfloor} \lfloor k_r \rfloor$ subspaces. Similarly, it can be shown that all subspaces with dimension $\lfloor n/2 \rfloor + 1 \leq r \leq n$ can be covered with $1 + \sum_{r=\rho+1}^{\lfloor n/2 \rfloor} \lfloor k_r \rfloor$ subspaces.

In Proposition 9 below, we give an explicit construction of a covering subspace code by combining entire Grassmannians.

Proposition 9: For all $q$, $n$, and $0 < \rho < \lfloor n/2 \rfloor$, let $J_1 = \{0\} \cup \left\{\lfloor n/2 \rfloor - \rho - \left\lfloor \frac{\lfloor n/2 \rfloor - \rho}{2\rho+1} \right\rfloor (2\rho+1), \ldots, \lfloor n/2 \rfloor - 3\rho - 1, \lfloor n/2 \rfloor - \rho\right\}$ and $J_2 = \{i : n - i \in J_1\}$. Then the code $\bigcup_{r \in J_1 \cup J_2} E_r(q,n)$ has subspace covering radius $\rho$, and hence $K_S(q,n,\rho) \leq \sum_{r \in J_1 \cup J_2} {n \brack r}_q$.

Proof: We prove that $\bigcup_{r \in J_1} E_r(q,n)$ covers all subspaces with dimension at most $\lfloor n/2 \rfloor$. First, all subspaces $D_0 \in E(q,n)$ with dimension $0 \leq \dim(D_0) < \lfloor n/2 \rfloor - 2\rho - \left\lfloor \frac{\lfloor n/2 \rfloor - \rho}{2\rho+1} \right\rfloor (2\rho+1) \leq \rho$ are covered by the subspace with dimension 0. Second, for $0 \leq i \leq \left\lfloor \frac{\lfloor n/2 \rfloor - \rho}{2\rho+1} \right\rfloor$ and all $D_1 \in E(q,n)$ with dimension $\lfloor n/2 \rfloor - 2\rho - i(2\rho+1) \leq \dim(D_1) \leq \lfloor n/2 \rfloor - \rho - i(2\rho+1)$, there exists $C_1$ with dimension $\lfloor n/2 \rfloor - \rho - i(2\rho+1)$ such that $D_1 \subseteq C_1$. Thus $d_S(C_1, D_1) = \dim(C_1) - \dim(D_1) \leq \rho$.
Similarly, for all $D_2 \in E(q,n)$ with dimension $\lfloor n/2 \rfloor - \rho - i(2\rho+1) < \dim(D_2) < \lfloor n/2 \rfloor - 2\rho - (i-1)(2\rho+1)$, there exists $C_2$ with dimension $\lfloor n/2 \rfloor - \rho - i(2\rho+1)$ such that $C_2 \subset D_2$. Thus $d_S(C_2, D_2) = \dim(D_2) - \dim(C_2) \leq \rho$. Therefore, $\bigcup_{r \in J_1} E_r(q,n)$ covers all subspaces with dimension at most $\lfloor n/2 \rfloor$. Similarly, all the subspaces with dimension at least $n - \lfloor n/2 \rfloor$ are covered by $\bigcup_{r \in J_2} E_r(q,n)$.

Using the bounds derived above, we now determine the asymptotic behavior of $K_S(q,n,\rho)$. We define $k_S(\rho') = \liminf_{n \to \infty} \frac{\log_q K_S(q,n,\lfloor n\rho' \rfloor)}{\log_q |E(q,n)|}$, where $\rho' = \rho/n$. We note that this definition of asymptotic rate is again from a combinatorial perspective.

Proposition 10 (Asymptotic rate of covering subspace codes in the subspace metric): For $0 \leq \rho' \leq 1/2$, $k_S(\rho') = 1 - 2\rho'$. For $1/2 \leq \rho' \leq 1$, $k_S(\rho') = 0$.

Proof: By Proposition 6, $k_S(\rho') = 0$ for $1/2 \leq \rho' \leq 1$. Let $\mathcal{C}$ be a KK code in $E_{\lfloor n/2 \rfloor}(q,n)$ with minimum subspace distance at least $2\rho + 1$ and cardinality $q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \rho)}$. Then any code $\mathcal{D} \subseteq E(q,n)$ with subspace covering radius $\rho$ and cardinality $K_S(q,n,\rho)$ covers all codewords in $\mathcal{C}$; however, any codeword in $\mathcal{D}$ covers at most one codeword in $\mathcal{C}$. Hence $K_S(q,n,\rho) \geq q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \rho)}$, which asymptotically becomes $k_S(\rho') \geq 1 - 2\rho'$. Also, by Proposition 8, it can be easily shown that
$$K_S(q,n,\rho) \leq 2 + (n+1)\left[1 - \ln K_q + \rho(n-\rho-1)\ln q\right] K_q^{-1} q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \rho)},$$
which asymptotically becomes $k_S(\rho') \leq 1 - 2\rho'$.

The proof of Proposition 10 indicates that the minimum cardinality $K_S(q,n,\rho)$ of a covering subspace code is on the order of $q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \rho)}$.
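The greedy procedure behind Proposition 8 and the Grassmannian-union construction of Proposition 9 can both be checked by brute force over $\mathrm{GF}(2)^4$. The Python sketch below is illustrative only: it represents a subspace as the frozenset of its vectors (integers used as bit vectors), breaks ties in a fixed order rather than at random, and greedily covers $E_2(2,4)$ by subspaces of $E_1(2,4)$ for $\rho = 1$, to be compared with $\lfloor k_2 \rfloor$. All helper names are our own.

```python
import math


def all_subspaces(n):
    """All subspaces of GF(2)^n; each is a frozenset of integers used as bit vectors."""
    found = {frozenset({0})}
    frontier = list(found)
    while frontier:
        new = []
        for sub in frontier:
            for v in range(1 << n):
                if v not in sub:
                    ext = sub | {x ^ v for x in sub}  # span of sub and v
                    if ext not in found:
                        found.add(ext)
                        new.append(ext)
        frontier = new
    return found


def dim(sub):
    """Dimension of a GF(2) subspace with 2**k elements."""
    return len(sub).bit_length() - 1


def d_s(u, v):
    """Subspace distance dim U + dim V - 2 dim(U ∩ V)."""
    return dim(u) + dim(v) - 2 * dim(u & v)


def greedy_cover(targets, candidates, rho):
    """Keep adding the candidate that covers the most uncovered targets.
    Ties are broken by a fixed order instead of at random."""
    candidates = sorted(candidates, key=sorted)
    uncovered, code = set(targets), []
    while uncovered:
        best = max(candidates, key=lambda c: sum(d_s(c, t) <= rho for t in uncovered))
        code.append(best)
        uncovered = {t for t in uncovered if d_s(best, t) > rho}
    return code


def prop9_dims(n, rho):
    """The dimension set J1 ∪ J2 of Proposition 9."""
    h = n // 2
    top = (h - rho) // (2 * rho + 1)
    j1 = {0} | {h - rho - i * (2 * rho + 1) for i in range(top + 1)}
    return j1 | {n - j for j in j1}


def union_radius(n, dims):
    """Subspace covering radius of the union of whole Grassmannians E_r, r in dims.
    An s-subspace is at distance |s - r| from some r-subspace (one containing
    the other) and never closer, so only the dimensions matter."""
    return max(min(abs(s - r) for r in dims) for s in range(n + 1))


if __name__ == "__main__":
    n, rho, r = 4, 1, 2
    subs = all_subspaces(n)
    targets = [s for s in subs if dim(s) == r]
    candidates = [s for s in subs if dim(s) == r - rho]
    code = greedy_cover(targets, candidates, rho)
    # k_r of Proposition 8 for q = 2, n = 4, r = 2, rho = 1:
    # [4,2]_2 / [3,1]_2 + [4,1]_2 / [2,1]_2 * ln [3,1]_2 = 35/7 + 15/3 * ln 7
    k_r = 35 / 7 + 15 / 3 * math.log(7)
    print("greedy size:", len(code), "floor(k_r):", math.floor(k_r))
    union = [s for s in subs if dim(s) in prop9_dims(n, rho)]
    print("Prop. 9 radius:", max(min(d_s(u, c) for c in union) for u in subs))
```

The brute-force radius of the union $E_0 \cup E_1 \cup E_3 \cup E_4$ printed last is 1, matching Proposition 9; the dimension-only function `union_radius` allows the same check for much larger $n$.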
However, a covering subspace code is easily obtained by taking the union of optimal covering CDCs (in their respective Grassmannians) for all dimensions, leading to a code with cardinality $2 + \sum_{r=\rho+1}^{n-\rho-1} K_C(q,n,r,\lfloor \rho/2 \rfloor)$. By [7, Proposition 11], $K_C(q,n,r,\lfloor \rho/2 \rfloor)$ is on the order of $q^{r(n-r) - \lfloor \rho/2 \rfloor(n - \lfloor \rho/2 \rfloor)}$. Hence the code has a cardinality on the order of $q^{\lfloor n/2 \rfloor(n - \lfloor n/2 \rfloor) - \lfloor \rho/2 \rfloor(n - \lfloor \rho/2 \rfloor)}$, which is greater than $q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - \rho)}$. Thus, a union of optimal covering CDCs (in their respective Grassmannians) does not result in asymptotically optimal covering subspace codes with the subspace metric.

IV. PACKING AND COVERING PROPERTIES OF SUBSPACE CODES WITH THE INJECTION METRIC

A. Properties of balls with injection radii

We first investigate the properties of balls with injection radii in $E(q,n)$, which will be instrumental in our study of the packing and covering properties of subspace codes with the injection distance. We denote the number of subspaces with dimension $s$ at injection distance $d$ from a subspace with dimension $r$ as $N_I(r,s,d)$.

Lemma 3: $N_I(r,s,d) = N_S(r,s,2d - |r-s|)$. Hence, $N_I(r,s,d) = q^{d(d+s-r)} {r \brack d}_q {n-r \brack d+s-r}_q$ for $r \geq s$ and $N_I(r,s,d) = q^{d(d+r-s)} {r \brack d+r-s}_q {n-r \brack d}_q$ for $r \leq s$.

Proof: If $U \in E_r(q,n)$ and $V \in E_s(q,n)$, then $d_I(U,V) = d$ if and only if $d_S(U,V) = 2d - |r-s|$. Therefore, $N_I(r,s,d) = N_S(r,s,2d-|r-s|)$, and the formula for $N_I(r,s,d)$ is easily obtained from Lemma 2.

Lemma 3 indicates that the injection metric satisfies a strengthened triangle inequality: for any $U \in E_r(q,n)$ and $V \in E_s(q,n)$, we have $d_I(U,V) \leq \max\{r,s\}$. We denote the volume of a ball with injection radius $t$ around a subspace with dimension $r$ as $V_I(r,t) \stackrel{\mathrm{def}}{=} \sum_{d=0}^{t} \sum_{s=0}^{n} N_I(r,s,d)$.
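Lemma 3 and the bound $d_I(U,V) \leq \max\{r,s\}$ can be sanity-checked by exhaustive enumeration for small parameters. The Python sketch below assumes the injection distance in the form $d_I(U,V) = \max\{\dim U, \dim V\} - \dim(U \cap V)$ (consistent with the identities used in the proof of Proposition 12), works over $\mathrm{GF}(2)^4$ with subspaces stored as frozensets of bit vectors, and compares the closed form of Lemma 3 with direct counts; the helper names are hypothetical.

```python
from collections import Counter


def gauss_binom(n, k, q):
    """Gaussian binomial coefficient [n, k]_q; zero when k is out of range."""
    if k < 0 or k > n:
        return 0
    num, den = 1, 1
    for i in range(k):
        num *= q ** (n - i) - 1
        den *= q ** (i + 1) - 1
    return num // den


def all_subspaces(n):
    """All subspaces of GF(2)^n; each is a frozenset of integers used as bit vectors."""
    found = {frozenset({0})}
    frontier = list(found)
    while frontier:
        new = []
        for sub in frontier:
            for v in range(1 << n):
                if v not in sub:
                    ext = sub | {x ^ v for x in sub}  # span of sub and v
                    if ext not in found:
                        found.add(ext)
                        new.append(ext)
        frontier = new
    return found


def dim(sub):
    return len(sub).bit_length() - 1


def n_i_formula(n, q, r, s, d):
    """Closed form of N_I(r, s, d) from Lemma 3."""
    if d < abs(r - s):
        return 0  # the injection distance is at least |r - s|
    if r >= s:
        return q ** (d * (d + s - r)) * gauss_binom(r, d, q) * gauss_binom(n - r, d + s - r, q)
    return q ** (d * (d + r - s)) * gauss_binom(r, d + r - s, q) * gauss_binom(n - r, d, q)


def check_lemma3(n):
    """Exhaustively compare Lemma 3 with direct counts over GF(2)^n."""
    subs = all_subspaces(n)
    for u in subs:
        for v in subs:
            r, s, k = dim(u), dim(v), dim(u & v)
            d_s, d_i = r + s - 2 * k, max(r, s) - k
            assert d_s == 2 * d_i - abs(r - s)  # d_S = 2 d_I - |r - s|
            assert d_i <= max(r, s)             # strengthened bound on d_I
    for r in range(n + 1):
        center = next(x for x in subs if dim(x) == r)
        counts = Counter((dim(v), max(r, dim(v)) - dim(center & v)) for v in subs)
        for s in range(n + 1):
            for d in range(n + 1):
                assert counts[(s, d)] == n_i_formula(n, 2, r, s, d), (r, s, d)
    return len(subs)


if __name__ == "__main__":
    print(check_lemma3(4))  # number of subspaces of GF(2)^4 that were checked
```

Running it prints 67, the number of subspaces of $\mathrm{GF}(2)^4$, after all pairwise and counting checks pass.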
Although the volume $V_I(r,t)$ of a ball depends on its radius $t$ and on the dimension $r$ of its center, we derive below bounds on $V_I(r,t)$ which only depend on its radius.

Proposition 11: For all $q$, $n$, $r$, and $t \leq \lfloor n/2 \rfloor$, $q^{t(n-t)} \leq V_I(r,t) < \theta(q)(2\theta(q)-1)K_q^{-2} q^{t(n-t)}$.

The proof of Proposition 11 is given in Appendix B. We remark that the bounds in Proposition 11 are tight up to a scalar, which will greatly facilitate our asymptotic study of subspace codes with the injection metric. Unlike the bounds on the volume of a ball with subspace radius in Proposition 1, the lower and upper bounds in Proposition 11 do not depend on $r$. This illustrates a clear geometric distinction between the subspace and injection metrics.

B. Packing properties of subspace codes with the injection metric

We are interested in packing subspace codes used with the injection metric. The maximum cardinality of a code in $E(q,n)$ with minimum injection distance $d$ is denoted as $A_I(q,n,d)$. Since $A_I(q,n,1) = |E(q,n)|$, we assume $d \geq 2$ henceforth. For all $J \subseteq \{0, 1, \ldots, n\}$, we denote the maximum cardinality of a code with minimum injection distance $d$ and codewords having dimensions in $J$ as $A_I(q,n,d,J)$. For $2 \leq d \leq \lfloor n/2 \rfloor$, we denote $Q_d = \{d, d+1, \ldots, n-d\}$. Proposition 12 below determines $A_I(q,n,d)$ for $d > \lfloor n/2 \rfloor$, together with a code achieving it, and shows that, for $2 \leq d \leq \lfloor n/2 \rfloor$, determining $A_I(q,n,d,Q_d)$ is equivalent to determining $A_I(q,n,d)$.

Proposition 12: For $d > \lfloor n/2 \rfloor$, $A_I(q,n,d) = 2$, and for $2 \leq d \leq \lfloor n/2 \rfloor$, $A_I(q,n,d) = A_I(q,n,d,Q_d) + 2$.

Proof: Let $\mathcal{C}$ be a code in $E(q,n)$ with minimum injection distance $d$ and let $C, D \in \mathcal{C}$.
We have $\max\{\dim(C), \dim(D)\} = d_I(C,D) + \dim(C \cap D) \geq d$; therefore there is at most one codeword with dimension less than $d$. Also, $\min\{\dim(C), \dim(D)\} = \dim(C + D) - d_I(C,D) \leq n - d$; therefore there is at most one codeword with dimension greater than $n - d$. Thus $A_I(q,n,d) \leq 2$ for $d > \lfloor n/2 \rfloor$ and $A_I(q,n,d) \leq A_I(q,n,d,Q_d) + 2$ for $d \leq \lfloor n/2 \rfloor$. Also, adding $\{0\}$ and $\mathrm{GF}(q)^n$ to a code with minimum injection distance $d \leq \lfloor n/2 \rfloor$ and codewords of dimensions in $Q_d$ does not decrease the minimum distance. Thus $A_I(q,n,d) = A_I(q,n,d,Q_d) + 2$ for $d \leq \lfloor n/2 \rfloor$. When $d > \lfloor n/2 \rfloor$, we have $n - d < d$, and thus $A_I(q,n,d) = 2$, which is achieved by $\{\{0\}, \mathrm{GF}(q)^n\}$.

Proposition 13 below relates $A_I(q,n,d)$ to $A_S(q,n,d)$ and $A_C(q,n,r,d)$.

Proposition 13: For all $q$, $n$, and $2 \leq d \leq \lfloor n/2 \rfloor$, $A_S(q,n,2d-1) \leq A_I(q,n,d) \leq A_S(q,n,d)$; furthermore, when $d \geq n/3$, $A_I(q,n,d) \leq A_S(q,n,4d-n,Q_d) + 2$. Also, $A_C(q,n,\lfloor n/2 \rfloor,d) \leq A_I(q,n,d) \leq 2 + \sum_{r=d}^{n-d} A_C(q,n,r,d)$.

Proof: A code with minimum subspace distance $2d-1$ has minimum injection distance at least $d$ by (4), and hence $A_S(q,n,2d-1) \leq A_I(q,n,d)$. Similarly, a code with minimum injection distance $d$ has minimum subspace distance at least $d$, and hence $A_I(q,n,d) \leq A_S(q,n,d)$. Let $\mathcal{C}$ be a code with minimum injection distance $d$ whose codewords have dimensions in $Q_d$. For all codewords $U$ and $V$, $d_S(U,V) = 2d_I(U,V) - |\dim(U) - \dim(V)| \geq 2d - (n-2d)$. Thus $\mathcal{C}$ has minimum subspace distance at least $4d-n \geq d$ for $d \geq n/3$, and hence $A_I(q,n,d,Q_d) \leq A_S(q,n,4d-n,Q_d)$. Proposition 12 finally yields $A_I(q,n,d) = A_I(q,n,d,Q_d) + 2 \leq A_S(q,n,4d-n,Q_d) + 2$. Any CDC in $E_r(q,n)$ with minimum injection distance $d$ is a subspace code with minimum injection distance $d$, hence $A_C(q,n,r,d) \leq A_I(q,n,d)$ for all $r$.
Also, the codewords with dimension $r$ in a subspace code with minimum injection distance $d$ form a CDC in $E_r(q,n)$ with minimum injection distance at least $d$; hence $A_I(q,n,d) = A_I(q,n,d,Q_d) + 2 \leq 2 + \sum_{r=d}^{n-d} A_C(q,n,r,d)$.

We now derive more bounds on $A_I(q,n,d)$. Proposition 14 below is the analogue of Proposition 3 for the injection metric, and its proof is hence omitted.

Proposition 14: For all $q$, $n$, and $2 \leq d \leq \lfloor n/2 \rfloor$, we have $A_I(q,n,d) \geq 2 + \sum_{i=-z+1}^{z-1} A_C(q,n,\lfloor n/2 \rfloor - id, d)$, where $z = \left\lfloor \lfloor n/2 \rfloor / d \right\rfloor$.

By extending the puncturing of subspaces introduced in [3], we finally derive below a Singleton bound for injection metric codes.

Proposition 15 (Singleton bound for subspace codes in the injection metric): For all $q$, $n$, and $2 \leq d \leq \lfloor n/2 \rfloor$, $A_I(q,n,d) \leq A_I(q,n-1,d-1) \leq \sum_{r=0}^{n-d+1} {n-d+1 \brack r}_q$.

Proof: Let $W \in E_{n-1}(q,n)$. We define the puncturing $H_W(V)$ from $E(q,n)$ to $E(q,n-1)$ as follows. If $\dim(V) = 0$, then $H_W(V) = \{0\}$; otherwise, if $\dim(V) = r > 0$, then $H_W(V)$ is a fixed $(r-1)$-subspace of $V \cap W$. For all $U, V \in E(q,n)$, it is easily shown that $d_I(H_W(U), H_W(V)) \geq d_I(U,V) - 1$, and hence $H_W(U) \neq H_W(V)$ if $d_I(U,V) \geq 2$. Therefore, if $\mathcal{C}$ is a code in $E(q,n)$ with minimum injection distance $d \geq 2$, then $\{H_W(V) : V \in \mathcal{C}\}$ is a code in $E(q,n-1)$ with minimum injection distance at least $d-1$ and cardinality $|\mathcal{C}|$. The first inequality follows. Applying it $d-1$ times yields $A_I(q,n,d) \leq A_I(q,n-d+1,1) = \sum_{r=0}^{n-d+1} {n-d+1 \brack r}_q$.

We remark that although the puncturing defined in the proof of Proposition 15 depends on $W$, the bounds in Proposition 15 do not. We now compare the cardinalities of optimal subspace codes and optimal CDCs with the same minimum injection distance $d$. We first establish the relation between $A_I(q,n,d)$ and $A_C(q,n,r,d)$ in Proposition 16 below.
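The key step in the proof of Proposition 15, $d_I(H_W(U), H_W(V)) \geq d_I(U,V) - 1$, can be verified exhaustively for small $n$. The Python sketch below works over $\mathrm{GF}(2)^4$, takes $W$ to be the hyperplane of vectors whose top bit is zero, and resolves the "fixed $(r-1)$-subspace of $V \cap W$" by a hypothetical rule of our own (the lexicographically smallest one); the proof allows any such fixed choice.

```python
def all_subspaces(n):
    """All subspaces of GF(2)^n; each is a frozenset of integers used as bit vectors."""
    found = {frozenset({0})}
    frontier = list(found)
    while frontier:
        new = []
        for sub in frontier:
            for v in range(1 << n):
                if v not in sub:
                    ext = sub | {x ^ v for x in sub}  # span of sub and v
                    if ext not in found:
                        found.add(ext)
                        new.append(ext)
        frontier = new
    return found


def dim(sub):
    return len(sub).bit_length() - 1


def d_i(u, v):
    """Injection distance max{dim U, dim V} - dim(U ∩ V)."""
    return max(dim(u), dim(v)) - dim(u & v)


def puncture_all(subs, w):
    """Hypothetical choice of H_W: {0} -> {0}; otherwise V -> the lexicographically
    smallest (dim V - 1)-subspace of V ∩ W."""
    image = {}
    for v in subs:
        r = dim(v)
        if r == 0:
            image[v] = v
        else:
            meet = v & w  # has dimension r or r - 1 since W is a hyperplane
            image[v] = min((s for s in subs if dim(s) == r - 1 and s <= meet), key=sorted)
    return image


def check_puncturing(n):
    subs = all_subspaces(n)
    w = frozenset(range(1 << (n - 1)))  # hyperplane W: vectors whose top bit is 0
    h = puncture_all(subs, w)
    for u in subs:
        assert h[u] <= w and dim(h[u]) == max(dim(u) - 1, 0)
        for v in subs:
            assert d_i(h[u], h[v]) >= d_i(u, v) - 1
            if d_i(u, v) >= 2:
                assert h[u] != h[v]  # distinct codewords stay distinct
    return True


if __name__ == "__main__":
    print(check_puncturing(4))
```

The check passes because $H_W(U) \subseteq U$ loses exactly one dimension (unless $U = \{0\}$), so the maximum dimension drops by at most one while the intersection cannot grow.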
Proposition 16 (Comparison between optimal subspace codes and CDCs in the injection metric): For $2 \leq d \leq r \leq \lfloor n/2 \rfloor$,
$$q^{(\lfloor n/2 \rfloor - r)(r-d+1)} A_C(q,n,r,d) \leq A_I(q,n,d) < 2K_q^{-1}\theta(q)\, q^{(\lfloor n/2 \rfloor - r)(r-d+1)} A_C(q,n,r,d).$$

The proof of Proposition 16 is similar to that of Proposition 4 and is hence omitted. We also obtain another relation between $A_I(q,n,d)$ and $A_S(q,n,d)$.

Corollary 1: For $2 \leq d \leq \lfloor n/2 \rfloor$, $A_S(q,n,2d) \leq A_S(q,n,2d-1) \leq A_I(q,n,d) < 2K_q^{-1}\theta(q) A_S(q,n,2d)$. Also, $A_I(q,n,d) < 2K_q^{-2}\theta(q)\, q^{(n - \lfloor n/2 \rfloor)(\lfloor n/2 \rfloor - d + 1)}$.

Proof: The lower bounds on $A_I(q,n,d)$ follow from Proposition 13. Furthermore, by choosing $r = \lfloor n/2 \rfloor$ in Proposition 16, we have $A_I(q,n,d) < 2K_q^{-1}\theta(q) A_C(q,n,\lfloor n/2 \rfloor,d)$. Since $A_C(q,n,\lfloor n/2 \rfloor,d) \leq A_S(q,n,2d)$, we obtain $A_I(q,n,d) < 2K_q^{-1}\theta(q) A_S(q,n,2d)$. The last inequality follows from (5).

Corollary 1 provides several interesting insights. First, the upper and lower bounds are all tight up to a scalar. Second, optimal (or nearly optimal) subspace codes with minimum subspace distance $2d$ have, up to a scalar, the same cardinality $A_I(q,n,d)$ as optimal subspace codes with minimum injection distance $d$. Third, the last inequality in Corollary 1 implies that such nearly optimal subspace codes with minimum subspace distance $2d$ exist: KK codes in $E_{\lfloor n/2 \rfloor}(q,n)$ are such codes.

Based on Proposition 16, we now determine the asymptotic rates of subspace codes and CDCs with the injection metric.
Let us use the normalized parameters $r' = r/n$ defined earlier and $d_I' \stackrel{\mathrm{def}}{=} d_I/n$, where $d_I$ is the minimum injection distance of a code, and define the asymptotic maximum rate $a_I(d_I') = \limsup_{n \to \infty} \frac{\log_q A_I(q,n,\lceil nd_I' \rceil)}{\log_q |E(q,n)|}$ for a subspace code with the injection metric and the asymptotic rate $a_I(r', d_I') = \limsup_{n \to \infty} \frac{\log_q A_C(q,n,nr',\lceil nd_I' \rceil)}{\log_q |E(q,n)|}$ for a CDC.

Proposition 17 (Asymptotic rate of packing subspace codes in the injection metric): For $1/2 \leq d_I' \leq 1$, $a_I(d_I') = 0$; for $0 \leq d_I' \leq 1/2$, $a_I(d_I') = 1 - 2d_I'$. For $0 \leq r' \leq d_I'$ or $1 - d_I' \leq r' \leq 1$, $a_I(r', d_I') = 0$; for $d_I' \leq r' \leq 1/2$, $a_I(r', d_I') = 4(1-r')(r'-d_I')$; for $1/2 \leq r' \leq 1 - d_I'$, $a_I(r', d_I') = 4r'(1-r'-d_I')$.

The proof of Proposition 17 is similar to that of Proposition 5 and is hence omitted.

Propositions 16 and 17 provide several important insights into the design of subspace codes with the injection metric. First, Proposition 16 indicates that optimal CDCs with dimension equal to half of the block length up to rounding ($r = \lfloor n/2 \rfloor$ and $r = n - \lfloor n/2 \rfloor$) are optimal subspace codes with the injection metric up to a scalar. In this case, the optimal CDCs suffer only a limited rate loss compared with optimal subspace codes with the same error correction capability. When $r < \lfloor n/2 \rfloor$, the rate loss suffered by optimal CDCs increases with $\lfloor n/2 \rfloor - r$. Proposition 17 indicates that using CDCs with dimension $d_I \leq r < \lfloor n/2 \rfloor$ leads to a decrease in rate on the order of $(1-2r')(2d_I'+1-2r')$. As with the subspace metric, the rate loss for CDCs with the injection metric increases with $1 - 2r'$. Hence using a CDC with a dimension further from $n/2$ leads to a higher rate loss. The combinatorial explanation in Section III-B also applies in this case.

We also note that the asymptotic rates in Proposition 17 for subspace codes come from Singleton bounds.
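The piecewise rates of Proposition 17 and the rate-loss expression quoted above can be evaluated numerically. The Python sketch below simply transcribes the formulas of Proposition 17, with the relative parameters $r'$ and $d_I'$ passed as floats, and is meant for checking that the gap $a_I(d_I') - a_I(r', d_I')$ equals $(1-2r')(2d_I'+1-2r')$ for $d_I' \leq r' \leq 1/2$; the function names are illustrative.

```python
import math


def a_i_subspace(d):
    """a_I(d'_I): asymptotic rate of optimal subspace codes with the injection metric."""
    return 1.0 - 2.0 * d if d <= 0.5 else 0.0


def a_i_cdc(r, d):
    """a_I(r', d'_I): asymptotic rate of optimal CDCs of relative dimension r'."""
    if r <= d or r >= 1.0 - d:
        return 0.0
    if r <= 0.5:
        return 4.0 * (1.0 - r) * (r - d)
    return 4.0 * r * (1.0 - r - d)


def rate_loss(r, d):
    """Rate lost by restricting to a CDC of relative dimension r'."""
    return a_i_subspace(d) - a_i_cdc(r, d)


if __name__ == "__main__":
    for r in (0.2, 0.3, 0.4, 0.5):
        print(f"r' = {r:.1f}: a_I(r', 0.1) = {a_i_cdc(r, 0.1):.3f}, "
              f"loss = {rate_loss(r, 0.1):.3f}, "
              f"(1-2r')(2d'+1-2r') = {(1 - 2 * r) * (2 * 0.1 + 1 - 2 * r):.3f}")
```

For $d_I' = 0.1$ the loss shrinks from 0.48 at $r' = 0.2$ to 0 at $r' = 1/2$, where the CDC rate coincides with $a_I(d_I') = 1 - 2d_I'$.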
The asymptotic rate $a_I(r', d_I')$ is achieved by KK codes, and the asymptotic rate $a_I(d_I')$ is also achieved by KK codes when $r = \lfloor n/2 \rfloor$.

Proposition 17 also highlights the difference between the asymptotic rates of subspace codes with the subspace and injection metrics. Although $a_S(d_S')$ and $a_I(d_I')$ are different, the optimal subspace codes with the two metrics have similar asymptotic behavior. We note that a CDC with minimum injection distance $d_I$ has minimum subspace distance $d_S = 2d_I$, which implies that $a_S(r', d_S') = a_I(r', d_I')$ as long as $d_S' = 2d_I'$. Also, as shown above, CDCs in $E_{\lfloor n/2 \rfloor}(q,n)$ with minimum injection distance $d_I$ are both asymptotically optimal subspace codes with minimum subspace distance $d_S = 2d_I$ and asymptotically optimal subspace codes with minimum injection distance $d_I$. Finally, when the asymptotic rate is fixed, the relative subspace distance $d_S'$ of optimal subspace codes is twice the relative injection distance $d_I'$. The implication of this on the error correction capability also depends on the decoding method.

In Table II, we compare the bounds on $A_I(q,n,d)$ derived in this paper with each other for $q = 2$, $n = 10$, and $d$ ranging from 2 to 5 (by Proposition 12, $A_I(2,10,d) = 2$ for $6 \leq d \leq 10$). We consider the lower bound in Proposition 13 and its refinement in Proposition 14, while the upper bound comes from Proposition 13. Note that Proposition 16 is not included in the comparison since its primary purpose is to compare the cardinalities of optimal subspace codes and optimal CDCs with the same minimum injection distance.
Although some bounds rely on A C ( q , n, r, d ) whose values are unkno wn in general, the values in T able II are obtained 9 Lower bounds Upper bound d Proposition 13 Proposition 14 Proposition 13 2 1,167,967 1,202,145 2,616,760 3 32,843 32,843 50,708 4 1,025 1,027 1,260 5 33 35 35 T ABLE II C O MPA R IS O N O F B O U ND S O N A I (2 , 10 , d ) F O R d F RO M 2 T O 5 by using constructions in [11] and [7] as lower bounds on A C ( q , n, r, d ) and the upper bound on A C ( q , n, r, d ) in [9]. The cardinalities of constant-dimension codes with dimension r = n/ 2 in [11] and [7] are quite close to the lower bound in Proposition 14, again supporting our conclusion that the rate loss suffered by properly designed CDCs is smaller when the dimension is close to n/ 2 . Finally , similar to the subspace distance case, the tightness of the bounds improves as the minimum distance of the code increases, leading to very tight bounds for d = n 2 . C. Covering pr operties of subspace codes with the injection metric W e now consider the covering properties of subspace codes with the injection metric. The injection covering radius in E ( q , n ) of C is defined as max U ∈ E ( q ,n ) d I ( U, C ) . W e denote the minimum cardinality of a subspace code with injection cov ering radius ρ in E ( q , n ) as K I ( q , n, ρ ) . Since K I ( q , n, 0) = | E ( q , n ) | and K I ( q , n, n ) = 1 , we assume 0 < ρ < n henceforth. W e first determine the minimum cardinality of a code with injection covering radius ρ when ρ ≥ n 2 . Pr oposition 18: For n − n 2 ≤ ρ < n , K I ( q , n, ρ ) = 1 . If n = 2 n 2 + 1 , then K I ( q , 2 n 2 + 1 , n 2 ) = 2 . Pr oof: Let C be a subspace with dimension n 2 . Then for all D 1 with dim( D 1 ) ≤ dim( C ) , we have d I ( C, D 1 ) ≤ dim( C ) = n 2 by (2); similarly , for all D 2 with dim( D 2 ) ≥ dim( C ) + 1 , we have d I ( C, D 2 ) ≤ n − dim( C ) = n − n 2 by (3). Thus C covers E ( q , n ) with radius n − n 2 and K I ( q , n, ρ ) = 1 for n − n 2 ≤ ρ < n . 
If $n = 2\lfloor n/2 \rfloor + 1$, then it is easily shown that $\{C, C^\perp\}$ has covering radius $\lfloor n/2 \rfloor$, and hence $K_I(q, 2\lfloor n/2 \rfloor + 1, \lfloor n/2 \rfloor) \leq 2$. However, for any $D \in E(q, 2\lfloor n/2 \rfloor + 1)$, either $d_I(\{0\}, D) = \dim(D) > \lfloor n/2 \rfloor$ or $d_I(\mathrm{GF}(q)^n, D) = n - \dim(D) > \lfloor n/2 \rfloor$. Thus no single subspace can cover the projective space with radius $\lfloor n/2 \rfloor$, and $K_I(q, 2\lfloor n/2 \rfloor + 1, \lfloor n/2 \rfloor) \geq 2$.

We thus consider $0 < \rho < \lfloor n/2 \rfloor$ henceforth. Lemma 4 relates $K_I(q,n,\rho)$ to $K_S(q,n,\rho)$ and $K_C(q,n,r,\rho)$.

Lemma 4: For all $q$, $n$, and $0 < \rho < \lfloor n/2 \rfloor$, $K_S(q,n,2\rho) \leq K_I(q,n,\rho) \leq K_S(q,n,\rho)$ and $K_I(q,n,\rho) \leq 2 + \sum_{r=\rho+1}^{n-\rho-1} K_C(q,n,r,\rho)$.

Proof: A code with injection covering radius $\rho$ has subspace covering radius at most $2\rho$, hence $K_S(q,n,2\rho) \leq K_I(q,n,\rho)$. Also, a code with subspace covering radius $\rho$ has injection covering radius at most $\rho$, hence $K_I(q,n,\rho) \leq K_S(q,n,\rho)$. For $\rho + 1 \leq r \leq n - \rho - 1$, let $\mathcal{C}_r$ be a CDC in $E_r(q,n)$ with covering radius $\rho$ and cardinality $K_C(q,n,r,\rho)$, and let $\mathcal{C} = \bigcup_{r=\rho+1}^{n-\rho-1} \mathcal{C}_r \cup \{\{0\}, \mathrm{GF}(q)^n\}$. Then $\mathcal{C}$ is a subspace code with injection covering radius $\rho$ and cardinality $2 + \sum_{r=\rho+1}^{n-\rho-1} K_C(q,n,r,\rho)$.

Proposition 19 below is the analogue of Proposition 7 for the injection metric.

Proposition 19 (Sphere covering bound for subspace codes in the injection metric): For all $q$, $n$, and $0 < \rho < \lfloor n/2 \rfloor$, $K_I(q,n,\rho) \geq \min \sum_{i=0}^{n} A_i$, where the minimum is taken over all integer sequences $\{A_i\}$ satisfying $0 \leq A_i \leq {n \brack i}_q$ for all $0 \leq i \leq n$ and $\sum_{i=0}^{n} A_i \sum_{d=0}^{\rho} N_I(i,r,d) \geq {n \brack r}_q$ for $0 \leq r \leq n$.

The lower bound in Proposition 19 is again the optimal value of an integer linear program, and hence determining the lower bound is computationally infeasible for large parameter values.
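For tiny parameters, the integer program of Proposition 19 can be brute-forced exactly like that of Proposition 7. In the Python sketch below, $N_I$ is obtained from $N_S$ through the identity $N_I(r,s,d) = N_S(r,s,2d-|r-s|)$ of Lemma 3, and the same exhaustive search is run with either count so that the two sphere covering bounds can be compared; the helper names are our own, and the search is only feasible for very small $n$.

```python
from itertools import product


def gauss_binom(n, k, q):
    """Gaussian binomial coefficient [n, k]_q; zero when k is out of range."""
    if k < 0 or k > n:
        return 0
    num, den = 1, 1
    for i in range(k):
        num *= q ** (n - i) - 1
        den *= q ** (i + 1) - 1
    return num // den


def n_s(n, q, r, s, d):
    """N_S(r, s, d) from Lemma 2 with u = (r + d - s) / 2."""
    if d < 0 or (r + d - s) % 2:
        return 0
    u = (r + d - s) // 2
    if u < 0 or u > min(r, d):
        return 0
    return q ** (u * (d - u)) * gauss_binom(r, u, q) * gauss_binom(n - r, d - u, q)


def n_i(n, q, r, s, d):
    """N_I(r, s, d) = N_S(r, s, 2d - |r - s|) (Lemma 3); zero when d < |r - s|."""
    if d < abs(r - s):
        return 0
    return n_s(n, q, r, s, 2 * d - abs(r - s))


def covering_ilp_bound(n, q, rho, count):
    """Minimum of sum(A_i) under the sphere covering constraints, by exhaustive search."""
    sizes = [gauss_binom(n, i, q) for i in range(n + 1)]
    cover = [[sum(count(n, q, i, r, d) for d in range(rho + 1)) for r in range(n + 1)]
             for i in range(n + 1)]
    best = None
    for a in product(*(range(m + 1) for m in sizes)):
        total = sum(a)
        if best is not None and total >= best:
            continue  # cannot improve on the current minimum
        if all(sum(a[i] * cover[i][r] for i in range(n + 1)) >= sizes[r]
               for r in range(n + 1)):
            best = total
    return best


if __name__ == "__main__":
    print("injection metric:", covering_ilp_bound(4, 2, 1, n_i))
    print("subspace metric: ", covering_ilp_bound(4, 2, 1, n_s))
```

With $q = 2$, $n = 4$, and $\rho = 1$ the script prints 4 for the injection metric and 7 for the subspace metric, reflecting that a ball with injection radius $\rho$ contains the ball with subspace radius $\rho$ around the same center.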
Proposition 20 below determines an upper bound on $K_I(q,n,\rho)$ by applying the universal greedy algorithm in [14, Theorem 12.2.1] to construct covering codes in the injection metric. Proposition 20 is a direct application of the bound derived in [14, Theorem 12.2.1] on the cardinality of a code returned by this algorithm. We remark that this bound is only semi-constructive: it specifies an algorithm to construct covering subspace codes but does not design the actual codes.

Proposition 20 (Greedy bound for covering codes in the injection metric): For all $q$, $n$, and $\rho$,
$$K_I(q,n,\rho) \leq \frac{|E(q,n)|}{\min_{0 \leq r \leq n} V_I(r,\rho)} \left[1 + \ln\left(\max_{0 \leq r \leq n} V_I(r,\rho)\right)\right].$$

We finally determine the asymptotic behavior of $K_I(q,n,\rho)$ by using the asymptotic rate $k_I(\rho') = \liminf_{n \to \infty} \frac{\log_q K_I(q,n,\lfloor n\rho' \rfloor)}{\log_q |E(q,n)|}$. According to Proposition 11, the volume of a ball with injection radius is constant up to a scalar. The consequence of this geometric result is that the greedy algorithm used to prove Proposition 20 above produces asymptotically optimal covering codes in the injection metric. However, since the volume of balls in the subspace metric does depend on the center (see Proposition 1), a direct application of the greedy algorithm for the subspace metric does not necessarily produce asymptotically optimal covering codes in the subspace metric.

Proposition 21 (Asymptotic rate of covering subspace codes in the injection metric): For $0 \leq \rho' \leq 1/2$, $k_I(\rho') = (1-2\rho')^2$. For $1/2 \leq \rho' \leq 1$, $k_I(\rho') = 0$.

Proof: By Proposition 18, $k_I(\rho') = 0$ for $1/2 \leq \rho' \leq 1$. We have
$$K_I(q,n,\rho) \geq \frac{|E(q,n)|}{\max_{0 \leq r \leq n} V_I(r,\rho)} > \frac{K_q^2}{\theta(q)(2\theta(q)-1)}\, q^{\lfloor n/2 \rfloor(n - \lfloor n/2 \rfloor) - \rho(n-\rho)}$$
by Lemma 1 and Proposition 11. This asymptotically becomes $k_I(\rho') \geq (1-2\rho')^2$ for $0 \leq \rho' \leq 1/2$.
Similarly, Proposition 20, Lemma 1, and Proposition 11 yield
$$K_I(q,n,\rho) < 2K_q^{-1}\theta(q)\, q^{\lfloor n/2 \rfloor(n - \lfloor n/2 \rfloor) - \rho(n-\rho)} \left[1 + \ln\left(\theta(q)(2\theta(q)-1)K_q^{-2}\right) + \rho(n-\rho)\ln q\right],$$
which asymptotically becomes $k_I(\rho') \leq (1-2\rho')^2$ for $0 \leq \rho' \leq 1/2$.

The proof of Proposition 21 indicates that the minimum cardinality $K_I(q,n,\rho)$ of a covering subspace code with the injection metric is on the order of $q^{\lfloor n/2 \rfloor(n - \lfloor n/2 \rfloor) - \rho(n-\rho)}$. A covering subspace code is easily obtained by taking the union of optimal covering CDCs for all constant dimensions, leading to a code with cardinality $2 + \sum_{r=\rho+1}^{n-\rho-1} K_C(q,n,r,\rho)$. By [7], the cardinality of the union is on the order of $q^{\lfloor n/2 \rfloor(n - \lfloor n/2 \rfloor) - \rho(n-\rho)}$. Thus, a union of optimal covering CDCs (in their respective Grassmannians) results in asymptotically optimal covering subspace codes with the injection metric.

Propositions 10 and 21 as well as their implications illustrate the differences between the subspace and injection metrics. First, the asymptotic rates of optimal covering subspace codes with the two metrics are different. Second, a union of optimal covering CDCs (in their respective Grassmannians) results in asymptotically optimal covering subspace codes with the injection metric only, not with the subspace metric.

TABLE III
SUMMARY OF RESULTS

| Properties | | Subspace metric | Injection metric |
|---|---|---|---|
| Packing | Asymptotic rates | $a_S(d_S') = 1 - d_S'$ | $a_I(d_I') = 1 - 2d_I'$ |
| Packing | Optimality of CDCs with $r = n/2$ | Optimal up to a scalar | Optimal up to a scalar |
| Packing | Optimal construction | Optimal up to a scalar: KK codes | Optimal up to a scalar: KK codes |
| Covering | Asymptotic rates | $k_S(\rho') = 1 - 2\rho'$ | $k_I(\rho') = (1-2\rho')^2$ |
| Covering | Optimality of union of CDCs | Not asymptotically optimal | Asymptotically optimal |
| Covering | Optimal construction | Asymptotically optimal semi-constructive bound: Prop. 8 | Asymptotically optimal semi-constructive bound: Prop. 20 |
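The differences just listed are traced below to the volumes of balls in the two metrics, and these volumes can be computed exactly for small parameters. The Python sketch below evaluates $V_S(r,t)$ and $V_I(r,t)$ from the counts of Lemmas 2 and 3 for the illustrative choice $q = 2$, $n = 10$, $t = 2$. Since $d_I(U,V) \leq d_S(U,V)$, every ball with subspace radius $t$ lies inside the ball with injection radius $t$ around the same center; the function names are our own.

```python
def gauss_binom(n, k, q):
    """Gaussian binomial coefficient [n, k]_q; zero when k is out of range."""
    if k < 0 or k > n:
        return 0
    num, den = 1, 1
    for i in range(k):
        num *= q ** (n - i) - 1
        den *= q ** (i + 1) - 1
    return num // den


def n_s(n, q, r, s, d):
    """N_S(r, s, d) from Lemma 2 with u = (r + d - s) / 2."""
    if d < 0 or (r + d - s) % 2:
        return 0
    u = (r + d - s) // 2
    if u < 0 or u > min(r, d):
        return 0
    return q ** (u * (d - u)) * gauss_binom(r, u, q) * gauss_binom(n - r, d - u, q)


def n_i(n, q, r, s, d):
    """N_I(r, s, d) = N_S(r, s, 2d - |r - s|) from Lemma 3."""
    if d < abs(r - s):
        return 0
    return n_s(n, q, r, s, 2 * d - abs(r - s))


def v_s(n, q, r, t):
    """Volume of the ball with subspace radius t around an r-subspace."""
    return sum(n_s(n, q, r, s, d) for s in range(n + 1) for d in range(t + 1))


def v_i(n, q, r, t):
    """Volume of the ball with injection radius t around an r-subspace."""
    return sum(n_i(n, q, r, s, d) for s in range(n + 1) for d in range(t + 1))


if __name__ == "__main__":
    n, q, t = 10, 2, 2
    for r in range(n // 2 + 1):
        vs, vi = v_s(n, q, r, t), v_i(n, q, r, t)
        print(f"r = {r}: V_S = {vs}, V_I = {vi}, V_I / q^(t(n-t)) = {vi / q ** (t * (n - t)):.2f}")
```

Both volumes equal 175,275 at $r = 0$, but at $r = 5$ the script reports $V_S(5,2) = 2295$ against $V_I(5,2) = 425{,}135$, while $V_I(r,2)$ never drops below $q^{t(n-t)} = 2^{16}$, as Proposition 11 predicts.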
These differences can be attributed to the different behaviors of the volume of a ball with subspace and injection radius. Although $V_S(0,t) = V_I(0,t)$, Proposition 1 indicates that $V_S(r,t)$ decreases with $r$ (for $r \leq \lfloor n/2 \rfloor$), while according to Proposition 11, $V_I(r,t)$ remains asymptotically constant. Hence, for $\lfloor n/2 \rfloor - \rho \leq r \leq \lfloor n/2 \rfloor$, the balls with subspace radius $\rho$ centered at a subspace with dimension $r$ have significantly smaller volumes than their counterparts with an injection radius. Therefore, covering the subspaces with dimension $\lfloor n/2 \rfloor$ requires more balls with subspace radius $\rho$ than balls with injection radius $\rho$, which explains the different rates $k_S(\rho')$ and $k_I(\rho')$. Also, since the volume of a ball with subspace radius reaches its minimum for $r = \lfloor n/2 \rfloor$ and $E_{\lfloor n/2 \rfloor}(q,n)$ has the largest cardinality among all Grassmannians, using covering CDCs of dimension $\lfloor n/2 \rfloor$ to cover $E_{\lfloor n/2 \rfloor}(q,n)$ is not advantageous. Thus, a union of covering CDCs does not lead to an asymptotically optimal covering subspace code in the subspace metric.

V. CONCLUSION

In this paper, we derive packing and covering properties of subspace codes for the subspace and the injection metrics. We determine the asymptotic rates of packing and covering codes for both metrics, compare the performance of constant-dimension codes to that of general subspace codes, and provide constructions or semi-constructive bounds of nearly optimal codes in all four cases. These results are briefly summarized in Table III.

Despite these results, some open problems remain for subspace codes. First of all, our bounds on the volumes of balls derived in Lemma 1 and Propositions 1 and 11 may be tightened. Although the ratio between the upper and lower bounds is a function of the field size $q$ which tends to 1 as $q$ tends to infinity, it is unknown whether this ratio is the smallest that can be established.
This issue also applies to the bounds on packing subspace codes in Propositions 4 and 16, where the ratios between upper and lower bounds are similar functions of $q$. Also, we only considered balls with radii up to $\lfloor n/2 \rfloor$, as only this case was useful for our derivations; the case where the radius is above $\lfloor n/2 \rfloor$ remains unexplored. Second, the bounds on covering codes in both the subspace and the injection metrics derived in this paper are only asymptotically optimal. It remains unknown whether any of these bounds is tight up to a scalar. Third, the design of packing and covering subspace codes is an important topic for future work. This is especially the case for covering codes in the subspace metric, as no asymptotically optimal construction is known so far. Finally, the aim of this paper was to derive simple bounds on subspace codes which are good for all parameter values, especially large values. On the other hand, a wealth of ad hoc bounds and heuristics can be used to tighten our results for small parameter values.

VI. ACKNOWLEDGMENT

The authors are grateful to the anonymous reviewers and the associate editor Dr. Mario Blaum for their constructive comments, which have helped to improve this paper.

APPENDIX

A. Proof of Proposition 1

Proof: When $r = 0$, we have $g(0,t) = t(n-t)$ and $V_S(0,t) = \sum_{i=0}^{t} {n \brack i}_q$ for all $t \leq \lfloor n/2 \rfloor$. Hence $V_S(0,t) \geq {n \brack t}_q \geq q^{t(n-t)}$ by (1), which proves the lower bound. Also, $V_S(0,t) < K_q^{-1} \sum_{i=0}^{t} q^{i(n-i)} < K_q^{-1} q^{t(n-t)} \sum_{j=0}^{\infty} q^{-j^2}$ by (1), which proves the upper bound.

We now prove the bounds on $V_S(r,t)$ for $r \geq 1$. By definition, $V_S(r,t) = \sum_{s=0}^{n} \sum_{d=0}^{t} N_S(r,s,d)$ is a double summation of exponential terms. The main idea of the proof is to determine the largest term in the summation: this not only gives a good lower bound, but the whole summation can also be upper bounded by that term times a constant.
First, by Lemma 2, $N_S(r,s,d) = q^{u(d-u)} {r \brack u}_q {n-r \brack d-u}_q$, where $u = \frac{r+d-s}{2}$ satisfies $0 \leq u \leq \min\{r,d\}$. Thus $q^{f(u)} \leq N_S(r,s,d) < K_q^{-2} q^{f(u)}$ by (1), where $f(u) = u(2r+3d-n-3u) + d(n-r-d)$. Hence, $\sum_{d=0}^{t} S(d) \leq V_S(r,t) < K_q^{-2} \sum_{d=0}^{t} S(d)$, where $S(d) = \sum_{u=0}^{\min\{r,d\}} q^{f(u)}$. Since $f$ is maximized for $u = u_0 \stackrel{\mathrm{def}}{=} \frac{2r+3d-n}{6} \leq d$, we need to consider the following three cases.

• Case I: $0 \leq d \leq \frac{n-2r}{3}$. We have $u_0 \leq 0$ and hence $f$ is maximized for $u = 0$: $f(0) = g(r,d) = d(n-r-d)$. Thus $S(d) \geq q^{g(r,d)}$, and it is easy to show that $S(d) = q^{g(r,d)} \sum_{u=0}^{\min\{r,d\}} q^{-u(n-2r-3d+3u)} < \theta(q^3)\, q^{g(r,d)}$ since $n - 2r - 3d \geq 0$.
• Case II: $\frac{n-2r}{3} \leq d \leq \min\left\{\frac{n+4r}{3}, \lfloor n/2 \rfloor\right\}$. We have $0 \leq u_0 \leq r$ and hence $f$ is maximized for $u = u_0$: $f(u_0) = g(r,d) = \frac{1}{12}(n-2r)^2 + \frac{1}{4}d(2n-d)$. It is easily shown that $f(u) = f(u_0) - 3(u-u_0)^2$ for all $u$, and hence $S(d) \geq \max\{q^{f(\lfloor u_0 \rfloor)}, q^{f(\lceil u_0 \rceil)}\} \geq q^{g(r,d) - 3/4}$. We also obtain $S(d) = q^{g(r,d)} \sum_{u=0}^{\min\{r,d\}} q^{-3(u-u_0)^2} < 2\theta(q^3)\, q^{g(r,d)}$.
• Case III: $\frac{n+4r}{3} \leq d \leq \lfloor n/2 \rfloor$. We have $u_0 \geq r$ and hence $f$ is maximized for $u = r$: $f(r) = g(r,d) = (d-r)(n-d+r)$. Thus $S(d) \geq q^{g(r,d)}$, and it is easy to show that $S(d) = q^{g(r,d)} \sum_{i=0}^{r} q^{-i(3d-4r-n+3i)} < \theta(q^3)\, q^{g(r,d)}$ since $3d - 4r - n \geq 0$.

From the discussion above, we obtain $V_S(r,t) \geq S(t) \geq q^{g(r,t) - 3/4}$, which proves the lower bound, and $V_S(r,t) < K_q^{-2} \sum_{d=0}^{t} S(d) < 2\theta(q^3) K_q^{-2} \sum_{d=0}^{t} q^{g(r,d)}$. We now show that $R(t) = \sum_{d=0}^{t} q^{g(r,d)} < (1 + q^{-4/3})\,\theta(q^{3/4})\, q^{g(r,t)}$ by distinguishing the following three cases.
First, if $t \le \frac{n-2r}{3}$, then $R(t) = \sum_{d=0}^{t} q^{d(n-r-d)} = q^{t(n-r-t)} \sum_{i=0}^{t} q^{-i(n-r-2t+i)} < \theta(q) q^{g(r,t)}$ since $n-2r-2t \ge 0$. Second, if $\frac{n-2r}{3} < t \le \frac{n+4r}{3}$, we have $\frac{1}{12}(n-2r-3d)^2 = \frac{1}{12}(n-2r)^2 + \frac{1}{4}d(2n-d) - d(n-r-d)$, and hence $\frac{1}{12}(n-2r)^2 + \frac{1}{4}d(2n-d) \ge d(n-r-d)$ for all $d$. We obtain
$$R(t) = \sum_{d=0}^{\lfloor \frac{n-2r}{3} \rfloor} q^{d(n-r-d)} + \sum_{d=\lfloor \frac{n-2r}{3} \rfloor + 1}^{t} q^{\frac{1}{12}(n-2r)^2 + \frac{1}{4}d(2n-d)} \le \sum_{d=0}^{t} q^{\frac{1}{12}(n-2r)^2 + \frac{1}{4}d(2n-d)},$$
and hence $R(t) \le q^{g(r,t)} \sum_{i=0}^{t} q^{-\frac{1}{4}i(2n-2t+i)} < \theta(q^{3/4}) q^{g(r,t)}$ since $2n-2t \ge 2t$. Third, if $\frac{n+4r}{3} < t \le \frac{n}{2}$, which implies $1 \le r < \frac{n}{8}$, it can be shown that $g\left(r, \frac{n+4r}{3}\right) \le g\left(r, \frac{n+4r}{3} + 1\right) - \frac{n-2r}{3} + 1 \le g(r,t) - \frac{4}{3}$. Hence
$$R(t) = R\left(\left\lfloor \tfrac{n+4r}{3} \right\rfloor\right) + q^{g(r,t)} \sum_{j=0}^{t - \lfloor \frac{n+4r}{3} \rfloor - 1} q^{-j(n-2t+2r+j)} < q^{g(r,t) - \frac{4}{3}} \theta(q^{3/4}) + q^{g(r,t)} \theta(q).$$
Since $\theta(q) < \theta(q^{3/4})$, we obtain $V_S(r,t) < 2\theta(q^3)(1+q^{-4/3})\theta(q^{3/4}) K_q^{-2} q^{g(r,t)}$.

B. Proof of Proposition 11

Proof: First, $V_I(r,t) \ge N_I(r,r,t) \ge q^{t(n-t)}$. We now prove the upper bound by determining the largest term in the double summation of $V_I(r,t)$. Since $V_I(r,t) = V_I(n-r,t)$, we assume $r \le \frac{n}{2}$ without loss of generality. The triangle inequality implies that $N_I(r,s,d) = 0$ if $|r-s| > d$, i.e., if $s < r-d$ or $s > r+d$; also, by the definition of the injection distance, $N_I(r,s,d) = 0$ if $d > \max\{r,s\}$. We can hence restrict the range of parameters in the summation formula of $V_I(r,t)$ as follows:
$$V_I(r,t) = \sum_{d=0}^{r} \sum_{s=r-d}^{d+r} N_I(r,s,d) + \sum_{d=r+1}^{t} \sum_{s=d}^{d+r} N_I(r,s,d). \qquad (8)$$
By Lemmas 1 and 3, we have $N_I(r,s,d) < K_q^{-2} q^{s(n-d+r-s) - (r-d)(n-d)}$ for $s \le r$ and $N_I(r,s,d) < K_q^{-2} q^{s(r-s+d) + d(n-r-d)}$ for $s \ge r$, which together with (8) yields
$$K_q^2 V_I(r,t) < \sum_{d=0}^{r} \left\{ \sum_{s=r-d}^{r} q^{s(n-d+r-s) - (r-d)(n-d)} + \sum_{s=r+1}^{r+d} q^{s(r-s+d) + d(n-r-d)} \right\} + \sum_{d=r+1}^{t} \sum_{s=d}^{d+r} q^{s(r-s+d) + d(n-r-d)}$$
$$= \sum_{d=0}^{r} q^{d(n-d)} \left\{ \sum_{i=0}^{d} q^{-i(n-d-r+i)} + \sum_{j=1}^{d} q^{-j(r-d+j)} \right\} + \sum_{d=r+1}^{t} q^{d(n-d)} \sum_{k=0}^{r} q^{-k(d-r+k)}, \qquad (9)$$
where we make the changes of variables $i = r-s$, $j = s-r$, and $k = s-d$ in (9). Since $n-d-r \ge 0$, we have $\sum_{i=0}^{d} q^{-i(n-d-r+i)} < \theta(q)$. Also, $r-d \ge 0$ for $d \le r$, and hence $\sum_{j=1}^{d} q^{-j(r-d+j)} < \theta(q) - 1$; similarly, we obtain $\sum_{k=0}^{r} q^{-k(d-r+k)} < \theta(q)$. Hence, (9) leads to
$$K_q^2 V_I(r,t) < (2\theta(q)-1) \sum_{d=0}^{r} q^{d(n-d)} + \theta(q) \sum_{d=r+1}^{t} q^{d(n-d)} < (2\theta(q)-1) q^{t(n-t)} \sum_{l=0}^{t} q^{-l(n-2t+l)} \qquad (10)$$
$$< (2\theta(q)-1)\theta(q) q^{t(n-t)},$$
where we set $l = t-d$ and use $n \ge 2r$ in (10).

REFERENCES

[1] T. Ho, M. Médard, R. Koetter, D. R. Karger, M. Effros, J. Shi, and B. Leong, “A random linear network coding approach to multicast,” IEEE Trans. Info. Theory, vol. 52, no. 10, pp. 4413–4430, October 2006.
[2] T. Ho and D. S. Lun, Network Coding: An Introduction. New York, NY: Cambridge University Press, 2008.
[3] R. Koetter and F. R. Kschischang, “Coding for errors and erasures in random network coding,” IEEE Trans. Info. Theory, vol. 54, no. 8, pp. 3579–3591, August 2008.
[4] D. Silva and F. R. Kschischang, “On metrics for error correction in network coding,” IEEE Trans. Info. Theory, vol. 55, no. 12, pp. 5479–5490, December 2009.
[5] D. Silva, F. R. Kschischang, and R. Koetter, “A rank-metric approach to error control in random network coding,” IEEE Trans. Info.
Theory, vol. 54, no. 9, pp. 3951–3967, September 2008.
[6] V. Skachek, “Recursive code construction for random networks,” 2008, available at http://arxiv.org/abs/0806.3650v1.
[7] M. Gadouleau and Z. Yan, “Construction and covering properties of constant-dimension codes,” submitted to IEEE Trans. Info. Theory, 2009, available at http://arxiv.org/abs/0903.2675.
[8] A. Kohnert and S. Kurz, “Construction of large constant dimension codes with a prescribed minimum distance,” Mathematical Methods in Computer Science, LNCS, vol. 5393, pp. 31–42, December 2008.
[9] S.-T. Xia and F.-W. Fu, “Johnson type bounds on constant dimension codes,” Designs, Codes and Cryptography, vol. 50, no. 2, pp. 163–172, February 2009.
[10] T. Etzion and A. Vardy, “Error-correcting codes in projective space,” in Proc. IEEE Int. Symp. Information Theory, Toronto, ON, July 2008, pp. 871–875.
[11] T. Etzion and N. Silberstein, “Error-correcting codes in projective spaces via rank-metric codes and Ferrers diagrams,” IEEE Trans. Info. Theory, vol. 55, no. 7, pp. 2909–2919, July 2009.
[12] E. M. Gabidulin and M. Bossert, “Codes for network coding,” in Proc. IEEE Int. Symp. on Information Theory, Toronto, ON, July 2008, pp. 867–870.
[13] V. Pless and W. Huffman, Eds., Handbook of Coding Theory. Elsevier, 1998.
[14] G. D. Cohen, I. Honkala, S. Litsyn, and A. C. Lobstein, Covering Codes. Elsevier, 1997.
[15] I. Honkala and A. Klapper, “Bounds for the multicovering radii of Reed-Muller codes with applications to stream ciphers,” Designs, Codes, and Cryptography, vol. 23, no. 2, pp. 131–145, July 2001.
[16] G. E. Andrews, The Theory of Partitions, ser. Encyclopedia of Mathematics and its Applications, G.-C. Rota, Ed. Reading, MA: Addison-Wesley, 1976, vol. 2.
[17] M. Gadouleau and Z. Yan, “On the decoder error probability of bounded rank-distance decoders for maximum rank distance codes,” IEEE Trans. Info. Theory, vol. 54, no. 7, pp. 3202–3206, July 2008.
[18] G. Gasper and M. Rahman, Basic Hypergeometric Series, 2nd ed., ser. Encyclopedia of Mathematics and its Applications. Cambridge University Press, 2004, vol. 96.
[19] M. Abramowitz and I. A. Stegun, Handbook of Mathematical Functions: with Formulas, Graphs, and Mathematical Tables. Dover Publications, 1965.
[20] M. Gadouleau and Z. Yan, “Packing and covering properties of rank metric codes,” IEEE Trans. Info. Theory, vol. 54, no. 9, pp. 3873–3883, September 2008.
[21] W. E. Clark and L. A. Dunning, “Tight upper bounds for the domination numbers of graphs with given order and minimum degree,” The Electronic Journal of Combinatorics, vol. 4, 1997.
[22] M. Gadouleau and Z. Yan, “Bounds on covering codes with the rank metric,” IEEE Communications Letters, vol. 13, no. 9, pp. 691–693, September 2009.
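The ball-size bounds on $V_S(r,t)$ and $V_I(r,t)$ derived in the proofs above can be spot-checked numerically for small parameters. The Python sketch below is a hedged illustration, not the authors' code, and rests on stated assumptions: it takes $K_q = \prod_{i \ge 1}(1-q^{-i})$ and $\theta(x) = \sum_{i \ge 0} x^{-i^2}$ (neither is defined in this excerpt; these definitions are assumed because they match how the constants are used), and it counts subspaces with the standard formula $q^{(r-m)(s-m)} \begin{bmatrix} r \\ m \end{bmatrix} \begin{bmatrix} n-r \\ s-m \end{bmatrix}$ for the number of $s$-dimensional subspaces of $\mathrm{GF}(q)^n$ meeting a fixed $r$-dimensional subspace in dimension $m$, which is assumed to agree with Lemmas 2 and 3. The helper names `gauss_binom`, `n_meet`, `ball`, `K`, `theta`, `g`, and `check` are hypothetical. The sketch enumerates both balls exactly for $n \le 8$ and $r, t \le n/2$ (the range treated by the case analysis) and asserts the lower bounds $q^{g(r,t)-3/4}$ and $q^{t(n-t)}$ together with the upper bounds $2\theta(q^3)(1+q^{-4/3})\theta(q^{3/4})K_q^{-2}q^{g(r,t)}$ and $(2\theta(q)-1)\theta(q)K_q^{-2}q^{t(n-t)}$.

```python
# Hypothetical spot-check of the ball-size bounds on V_S(r, t) and V_I(r, t).
# Assumed definitions (not given in this excerpt):
#   K_q = prod_{i>=1} (1 - q^{-i}),   theta(x) = sum_{i>=0} x^{-i^2}
# Both series are truncated; the neglected tails are far below the slack tested.


def gauss_binom(n, k, q):
    """Gaussian binomial coefficient [n choose k]_q as an exact integer."""
    if k < 0 or k > n:
        return 0
    num = den = 1
    for i in range(k):
        num *= q ** (n - i) - 1
        den *= q ** (i + 1) - 1
    return num // den


def n_meet(n, r, s, m, q):
    """Number of s-dim subspaces of GF(q)^n meeting a fixed r-dim subspace in dim m."""
    if not 0 <= m <= min(r, s) or s > n:
        return 0
    return q ** ((r - m) * (s - m)) * gauss_binom(r, m, q) * gauss_binom(n - r, s - m, q)


def ball(n, r, t, q, metric):
    """Number of subspaces within distance t of a fixed r-dim subspace."""
    total = 0
    for s in range(n + 1):
        for m in range(min(r, s) + 1):
            # m is the intersection dimension; both distances depend only on (r, s, m)
            if metric == "subspace":
                dist = r + s - 2 * m
            else:  # injection distance
                dist = max(r, s) - m
            if dist <= t:
                total += n_meet(n, r, s, m, q)
    return total


def K(q, terms=60):
    """Truncated product prod_{i=1}^{terms} (1 - q^{-i})."""
    prod = 1.0
    for i in range(1, terms + 1):
        prod *= 1 - q ** -i
    return prod


def theta(x, terms=12):
    """Truncated series sum_{i=0}^{terms-1} x^{-i^2}."""
    return sum(x ** -(i * i) for i in range(terms))


def g(n, r, d):
    """Exponent g(r, d) from Cases I-III (intended for r, d <= n/2)."""
    if 3 * d <= n - 2 * r:  # Case I
        return d * (n - r - d)
    if 3 * d <= n + 4 * r:  # Case II
        return (n - 2 * r) ** 2 / 12 + d * (2 * n - d) / 4
    return (d - r) * (n - d + r)  # Case III


def check(q, n_max=8):
    """Assert both pairs of bounds for all n <= n_max and r, t <= n/2."""
    k2 = K(q) ** -2
    c_s = 2 * theta(q ** 3) * (1 + q ** (-4 / 3)) * theta(q ** 0.75) * k2
    c_i = (2 * theta(q) - 1) * theta(q) * k2
    for n in range(1, n_max + 1):
        for r in range(n // 2 + 1):
            for t in range(n // 2 + 1):
                vs = ball(n, r, t, q, "subspace")
                vi = ball(n, r, t, q, "injection")
                assert q ** (g(n, r, t) - 0.75) <= vs < c_s * q ** g(n, r, t)
                assert q ** (t * (n - t)) <= vi < c_i * q ** (t * (n - t))
    return True


print(check(2), check(3))
```

The script prints `True True` when every assertion holds. Because the constants are loose (roughly 65 and 40 for $q = 2$), the check exercises the direction of the bounds rather than their tightness; as a further sanity check, `ball(n, r, n, q, metric)` should equal the total number of subspaces $\sum_k \begin{bmatrix} n \\ k \end{bmatrix}$, since both distances never exceed $n$.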
