Automatic analysis of distance bounding protocols

Distance bounding protocols are used by nodes in wireless networks to calculate upper bounds on their distances to other nodes. However, dishonest nodes that participate in protocol executions can make these calculations both illegitimate and inaccurate. It is therefore important to analyze protocols for the possibility of such violations. Past efforts to analyze distance bounding protocols have been purely manual. Automated approaches are important because they are quite likely to find flaws that manual approaches miss, as the literature on the analysis of key establishment protocols demonstrates. In this paper, we use the constraint solver tool to automatically analyze distance bounding protocols. We first formulate a new trace property called Secure Distance Bounding (SDB) that protocol executions must satisfy. We then classify the scenarios in which these protocols can operate, considering the (dis)honesty of nodes and the location of the attacker in the network. Finally, we extend the constraint solver so that it can test protocols for violations of SDB in these scenarios, and we illustrate our technique on some published protocols.

💡 Research Summary

The paper addresses the problem of verifying the security of distance‑bounding (DB) protocols, which are used in wireless networks to compute an upper bound on the physical distance between a verifier and a prover. Traditional analyses of DB protocols have been manual, which is problematic because attacks often depend on subtle timing interactions that are easy to overlook. To overcome this, the authors propose a fully automated method based on a constraint‑solving engine, extending the Millen‑Shmatikov constraint solver for Dolev‑Yao attackers with explicit timing information.

The core contribution is the definition of a new trace property called Secure Distance Bounding (SDB). SDB compares the time of flight (ToF) t measured in an ideal execution (where the verifier and prover are honest, positions are fixed, message‑creation times are deterministic, and no attacker is present) with the ToF t′ measured in a real execution that may involve a malicious penetrator. The property holds if the real ToF is not smaller than the ideal ToF (t ≤ t′); a violation (t > t′) indicates that the attacker has managed to make the verifier believe the prover is closer than it actually is.
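The SDB comparison itself is a one-line predicate. The minimal Python sketch below, with hypothetical names `sdb_holds` and `distance_bound`, encodes it together with the standard round-trip conversion from a time of flight to a distance bound, d = c·(ToF − processing delay)/2. The signal speed (speed of light) and the subtraction of a known processing delay are assumptions for illustration, not details taken from the paper.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s; assumed radio signal speed


def sdb_holds(t_ideal: float, t_real: float) -> bool:
    """Secure Distance Bounding: the real ToF t' must not be smaller than
    the ideal ToF t, i.e. t <= t'. Returns False on a violation."""
    return t_ideal <= t_real


def distance_bound(tof: float, processing_delay: float = 0.0,
                   speed: float = SPEED_OF_LIGHT) -> float:
    """Upper bound on distance (m) from a round-trip ToF (s), after removing
    an assumed known processing delay. Halved because the signal goes out and back."""
    return speed * (tof - processing_delay) / 2.0


# An honest prover 100 m away yields a 100 m bound.
t_honest = 2 * 100.0 / SPEED_OF_LIGHT
print(distance_bound(t_honest))
# A real run that is faster than the ideal one violates SDB.
print(sdb_holds(t_ideal=t_honest, t_real=t_honest / 2))
```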

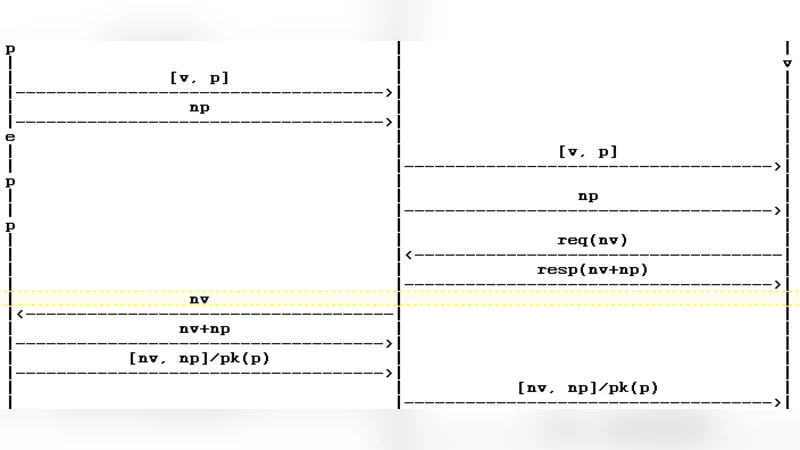

To reason about timing, the authors extend the strand‑space model with a third field, “time”, for each node, turning a strand into a sequence of timed events (send ‘+’ and receive ‘−’, each with an associated timestamp). Edges between nodes carry weights equal to the time elapsed between the connected events. Message‑transmission edges (+ → −) are weighted according to signal speed, message length, and physical distance, while strand edges (⇒), which link consecutive events of the same principal, are weighted by the time required for internal computation such as cryptographic operations. The attacker is modeled as a single penetrator strand that can perform any Dolev‑Yao operation; each operation corresponds to a reduction rule in the constraint solver, and the cost of applying that rule contributes to the edge weight.
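To illustrate how weighted edges yield a ToF, the sketch below assigns a transmission weight (distance / signal speed + message bits / bit rate) to each → edge and a fixed processing time to each ⇒ edge, then computes each event's time as the latest arrival over its incoming edges. The max-over-predecessors rule, the bit rate, the distance, the 5 ns processing time, and node labels such as `v1+` are all assumptions made for this example; the paper's exact weighting function may differ.

```python
from graphlib import TopologicalSorter

SPEED_OF_LIGHT = 299_792_458.0  # m/s; assumed signal speed


def transmission_weight(distance_m, message_bits, bitrate_bps, speed=SPEED_OF_LIGHT):
    """Weight of a + -> - edge: propagation delay plus time to put the bits on air."""
    return distance_m / speed + message_bits / bitrate_bps


def event_times(edges):
    """edges maps (src, dst) -> weight in seconds. Events with no incoming edge
    start at t = 0; otherwise time(dst) = max(time(src) + weight) over incoming edges."""
    preds = {}
    for src, dst in edges:
        preds.setdefault(src, set())
        preds.setdefault(dst, set()).add(src)
    times = {}
    # static_order() yields every predecessor before the node that depends on it.
    for node in TopologicalSorter(preds).static_order():
        incoming = [times[s] + w for (s, d), w in edges.items() if d == node]
        times[node] = max(incoming) if incoming else 0.0
    return times


# Hypothetical single-bit challenge/response: prover 30 m away, 1 Gbit/s, 5 ns processing.
hop = transmission_weight(30.0, 1, 1e9)
edges = {
    ("v1+", "p1-"): hop,    # challenge in flight (->)
    ("p1-", "p2+"): 5e-9,   # prover computes its response (=>)
    ("p2+", "v2-"): hop,    # response in flight (->)
    ("v1+", "v2-"): 0.0,    # verifier strand order (=>); waiting costs nothing
}
times = event_times(edges)
tof = times["v2-"] - times["v1+"]
print(f"ToF = {tof * 1e9:.2f} ns")
```

The verifier's measured ToF is the difference between its own receive and send events; moving the prover farther away or slowing its computation can only increase it.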

A “bundle” is a directed acyclic graph of strand nodes joined by the timed edges. An “ideal bundle” contains only honest strands and simple relays (zero‑cost attacker actions), whereas a “real bundle” may contain arbitrary attacker actions. The constraint‑solving procedure generates a constraint sequence from a semi‑bundle (a partially instantiated set of strands) and checks whether some substitution σ satisfies it. If all constraints are satisfiable, the semi‑bundle can be completed to a real bundle; the solver also records the cumulative time cost of each rule application, which allows the ToF between any pair of nodes to be calculated.
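The idea of accumulating a time cost per rule can be illustrated with a much simpler ground version of the problem. The Python sketch below is not the Millen‑Shmatikov solver, which works symbolically with variables and unification; it only asks, for variable-free terms, whether the penetrator can derive a target message from its knowledge and what the cheapest derivation costs. Terms are atoms (strings) or tuples `("pair", a, b)` and `("enc", m, k)` for symmetric encryption; the function name `derive_cost` and the per-rule costs in `COST` are hypothetical placeholders.

```python
# Hypothetical per-rule time costs (ns); the real values depend on hardware.
COST = {"pair": 1.0, "unpair": 1.0, "enc": 5.0, "dec": 5.0}


def subterms(t):
    """Yield t and all of its subterms."""
    yield t
    if isinstance(t, tuple):
        for s in t[1:]:
            yield from subterms(s)


def derive_cost(target, knowledge):
    """Minimal cumulative rule cost for the penetrator to produce target from
    knowledge (ground terms only), or None if target is not derivable."""
    universe = set()
    for t in list(knowledge) + [target]:
        universe.update(subterms(t))
    cost = {t: 0.0 for t in knowledge}  # initial knowledge is free
    changed = True
    while changed:  # fixpoint iteration: costs only ever decrease
        changed = False
        for t in universe:
            candidates = []
            if isinstance(t, tuple):  # synthesis: build a pair or ciphertext
                op, a, b = t
                if a in cost and b in cost:
                    candidates.append(cost[a] + cost[b] + COST[op])
            for u in list(cost):  # analysis: take t apart from a known term u
                if isinstance(u, tuple):
                    op, a, b = u
                    if op == "pair" and t in (a, b):
                        candidates.append(cost[u] + COST["unpair"])
                    elif op == "enc" and t == a and b in cost:
                        candidates.append(cost[u] + cost[b] + COST["dec"])
            if candidates and min(candidates) < cost.get(t, float("inf")):
                cost[t] = min(candidates)
                changed = True
    return cost.get(target)


ciphertext = ("enc", "Nv", "K")
print(derive_cost("Nv", {ciphertext, "K"}))  # 5.0: one decryption
print(derive_cost("Nv", {ciphertext}))       # None: key unknown
```

Costs are summed over the derivation tree, so a key used in several steps is charged once per use; this keeps the sketch simple at the price of overestimating shared subderivations.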

The authors further classify execution scenarios along two dimensions: (1) the honesty of the prover (honest vs. dishonest/colluding) and (2) the physical location of the attacker relative to verifier and prover. This classification determines which bundle should be considered “ideal” for a given scenario, because when the attacker is closer to the verifier than the prover, the ideal reference point shifts.

The methodology is implemented in an extended version of the Millen‑Shmatikov solver (named PB in the paper). The tool automatically constructs timed bundles, computes the ideal and real ToF, and reports SDB violations. The authors evaluate the approach on several published DB protocols, including an extended Echo protocol, Brands‑Chaum variants, and adaptations of the NSPK protocol for distance bounding. The automated analysis discovers new attacks, such as man‑in‑the‑middle relays that reduce ToF, and collusion attacks where a dishonest prover shares secret material with a nearby attacker.
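The collusion attack described above can be checked with back-of-the-envelope numbers. In this hypothetical scenario (all distances and delays are assumptions), a dishonest prover 100 m from the verifier gives its secret to an accomplice 10 m away, and the accomplice answers the challenge. The ideal ToF is taken from the prover's true position, so the much shorter real ToF violates SDB. The helper `round_trip_tof` is illustrative, not part of the paper's tool.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s; assumed signal speed


def round_trip_tof(distance_m, processing_s, speed=SPEED_OF_LIGHT):
    """Round-trip time of flight: out and back plus the responder's processing."""
    return 2.0 * distance_m / speed + processing_s


def sdb_holds(t_ideal, t_real):
    """SDB: the real ToF must not be smaller than the ideal ToF."""
    return t_ideal <= t_real


# Both responders are assumed to need 5 ns to compute an answer.
t_ideal = round_trip_tof(100.0, 5e-9)  # prover answering from its true position
t_real = round_trip_tof(10.0, 5e-9)    # accomplice answering with the prover's secret
print(f"ideal {t_ideal * 1e9:.1f} ns, real {t_real * 1e9:.1f} ns, "
      f"SDB holds: {sdb_holds(t_ideal, t_real)}")
# prints: ideal 672.1 ns, real 71.7 ns, SDB holds: False
```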

Key strengths of the work are: (i) a rigorous integration of physical timing into a formal symbolic model, (ii) minimal extensions to an existing, well‑understood constraint solver, making the approach relatively easy to adopt, and (iii) the ability to automatically uncover attacks that would be difficult to find manually. Limitations include the reliance on accurate environmental parameters (signal speed, distance, processing delays) for weight calculations; inaccurate parameters could lead to false positives or negatives. Moreover, the model assumes a single powerful attacker; extending to multiple coordinated attackers would require additional work.
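The sensitivity to environmental parameters is easy to quantify: misestimating a round-trip delay by Δt shifts the implied distance by c·Δt/2, roughly 15 cm per nanosecond at the speed of light. The sketch below (hypothetical helper `distance_error`, assumed signal speed c) tabulates this. If the ideal bundle's processing delays are underestimated by Δt, an attack that shortens the real ToF by less than Δt goes unreported (a false negative); an overestimate makes even honest runs appear faster than ideal (a false positive).

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s; assumed signal speed


def distance_error(delay_error_s, speed=SPEED_OF_LIGHT):
    """Distance error (m) caused by misestimating a round-trip delay by delay_error_s."""
    return speed * delay_error_s / 2.0


for err_ns in (1, 10, 100):
    print(f"{err_ns:>3} ns timing error -> {distance_error(err_ns * 1e-9):6.2f} m")
```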

In conclusion, the paper presents a novel, automated framework for analyzing distance‑bounding protocols. By defining Secure Distance Bounding as a trace property and embedding timing into constraint solving, it provides a practical tool for protocol designers to verify that their schemes resist distance‑fraud attacks, thereby enhancing the security of location‑based services, RFID authentication, and other wireless applications where physical proximity is a critical security factor.
