Destriping CMB temperature and polarization maps

We study destriping as a map-making method for temperature-and-polarization data for cosmic microwave background observations. We present a particular implementation of destriping and study the residual error in output maps, using simulated data corresponding to the 70 GHz channel of the Planck satellite, but assuming idealized detector and beam properties. The relevant residual map is the difference between the output map and a binned map obtained from the signal + white noise part of the data stream. For destriping it can be divided into six components: unmodeled correlated noise, white noise reference baselines, reference baselines of the pixelization noise from the signal, and baseline errors from correlated noise, white noise, and signal. These six components contribute differently to the different angular scales in the maps. We derive analytical results for the first three components. This study is related to Planck LFI activities.

💡 Research Summary

This paper presents a thorough investigation of the destriping map‑making technique as applied to temperature and polarization data from cosmic microwave background (CMB) observations, using simulated data that mimic the 70 GHz channel of the Planck satellite. The authors adopt an idealized detector and beam model but retain the realistic scanning strategy of Planck (spin rate, repointing, and sky coverage). The simulated time‑ordered data (TOD) consist of three components: a CMB signal, white noise, and correlated 1/f noise.
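As a rough illustration of the three-component data model described above, the following sketch builds a toy TOD from a sky signal, white noise, and 1/f noise. Everything in it is an assumption made for illustration: the function name `simulate_tod`, the random pointing (not Planck's scanning strategy), and the values of `sigma`, `f_knee`, and `f_samp` are hypothetical and are not taken from the paper.

```python
import numpy as np

def simulate_tod(n_samples=4096, n_pix=64, sigma=1.0, f_knee=0.05, f_samp=1.0, seed=0):
    """Toy TOD with signal, white noise and 1/f noise (all values hypothetical)."""
    rng = np.random.default_rng(seed)
    sky = rng.normal(size=n_pix)                        # stand-in for a CMB map
    pointing = rng.integers(0, n_pix, size=n_samples)   # random scan, not Planck's
    signal = sky[pointing]
    white = sigma * rng.normal(size=n_samples)
    # Correlated noise: filter white noise in Fourier space so that
    # its power spectrum scales as f_knee / f.
    freqs = np.fft.rfftfreq(n_samples, d=1.0 / f_samp)
    shape = np.zeros_like(freqs)
    shape[1:] = np.sqrt(f_knee / freqs[1:])             # DC mode stays at zero
    seed_noise = sigma * rng.normal(size=n_samples)
    corr = np.fft.irfft(np.fft.rfft(seed_noise) * shape, n=n_samples)
    return {"sky": sky, "pointing": pointing,
            "signal": signal, "white": white, "corr": corr}

parts = simulate_tod()
tod = parts["signal"] + parts["white"] + parts["corr"]
```

Keeping the three streams separate, rather than returning only their sum, makes it possible to study how each one propagates into the map.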

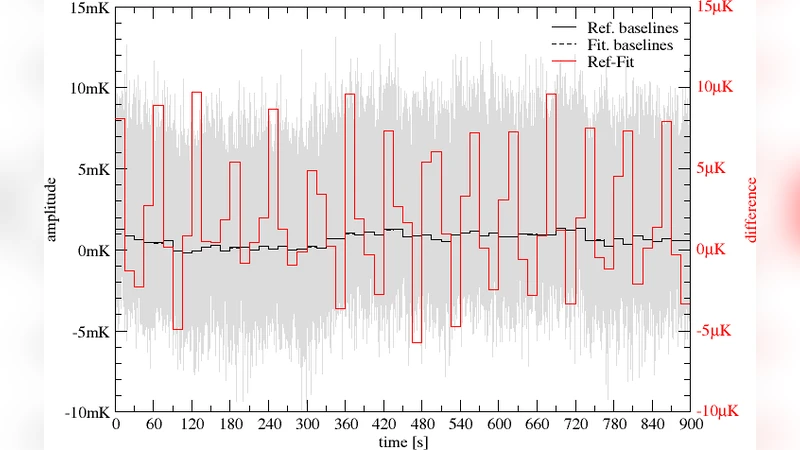

Destriping models the low‑frequency part of the correlated noise as a sequence of constant offsets (baselines), one for each short segment of the TOD. The baseline amplitudes are estimated from the data by solving a linear system, and subtracting them removes most of the low‑frequency correlated noise. After baseline subtraction, the cleaned TOD is binned into sky pixels to form the final map. The authors define the residual map as the difference between this output map and a reference “binned” map constructed only from the signal plus white‑noise part of the TOD.
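The baseline fit and binning steps can be sketched for a temperature-only toy case as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: it assumes uniform white noise, no prior on the baseline amplitudes, and dense matrices that are only practical for small problems. The names `bin_map`, `destripe`, and `n_base` are hypothetical. The fit solves the standard least-squares destriping equation FᵀZF a = FᵀZ y, where F spreads baselines into the time domain and Z removes from a stream whatever a binned map can describe.

```python
import numpy as np

def bin_map(tod, pointing, n_pix):
    """Average all samples falling in each pixel (unhit pixels are set to 0)."""
    hits = np.bincount(pointing, minlength=n_pix)
    total = np.bincount(pointing, weights=tod, minlength=n_pix)
    return total / np.maximum(hits, 1)

def destripe(tod, pointing, n_pix, n_base):
    """Fit one offset per n_base samples, subtract it, and bin.

    Returns (output_map, fitted_baselines)."""
    n = tod.size
    if n % n_base:
        raise ValueError("TOD length must be a multiple of n_base")
    seg = np.arange(n) // n_base          # baseline index of each sample
    n_seg = n // n_base
    F = np.zeros((n, n_seg))
    F[np.arange(n), seg] = 1.0
    # Apply Z to every column of F: Z x = x - (binned map of x, read back along the scan).
    ZF = np.empty_like(F)
    for j in range(n_seg):
        ZF[:, j] = F[:, j] - bin_map(F[:, j], pointing, n_pix)[pointing]
    A = F.T @ ZF                          # F^T Z F (singular: a global offset is free)
    b = ZF.T @ tod                        # F^T Z y, using Z = Z^T
    a_hat = np.linalg.lstsq(A, b, rcond=1e-10)[0]   # minimum-norm solution
    cleaned = tod - a_hat[seg]
    return bin_map(cleaned, pointing, n_pix), a_hat

# Noise-free check: sky signal plus known offsets.
rng = np.random.default_rng(1)
n_pix, n_base, n_seg = 32, 64, 32
pointing = rng.integers(0, n_pix, size=n_base * n_seg)
sky = rng.normal(size=n_pix)
offsets = rng.normal(size=n_seg)
tod = sky[pointing] + np.repeat(offsets, n_base)
out_map, a_hat = destripe(tod, pointing, n_pix, n_base)
```

In this noise-free check the output map should agree with `sky` up to a single additive constant, because a common offset added to all baselines cannot be distinguished from the map monopole.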

Crucially, they decompose the residual into six components: (1) unmodeled correlated noise (the part of the 1/f noise that varies on time scales shorter than the baseline length and therefore cannot be captured by constant offsets), (2) white‑noise reference baselines, (3) reference baselines arising from pixelization noise of the signal, (4) baseline‑estimation error due to correlated noise, (5) baseline‑estimation error due to white noise, and (6) baseline‑estimation error due to the signal itself. The first three terms are analytically tractable; the authors derive closed‑form expressions for their angular power spectra under the assumption of Gaussian statistics.
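A decomposition of this kind is possible because a destriper without a noise prior is linear in the TOD, so the residual map is the sum of the residuals produced by each input stream separately. The sketch below checks that numerically for a toy scan. It is an illustration under assumptions, not the paper's procedure: it stops at a three-way split by input stream (correlated noise, white noise, signal) and does not attempt the further division into reference baselines and baseline errors. The names `make_destriper` and `stripe_map`, the random scan, and the sub-pixel factor `sub` used to create pixelization noise are all hypothetical.

```python
import numpy as np

def make_destriper(pointing, n_pix, n_base):
    """Return (bin_map, stripe_map) for a fixed scan; both are linear in the TOD."""
    n = pointing.size
    seg = np.arange(n) // n_base
    n_seg = n // n_base
    hits = np.maximum(np.bincount(pointing, minlength=n_pix), 1)

    def bin_map(x):
        return np.bincount(pointing, weights=x, minlength=n_pix) / hits

    F = np.zeros((n, n_seg))
    F[np.arange(n), seg] = 1.0
    ZF = np.stack([F[:, j] - bin_map(F[:, j])[pointing] for j in range(n_seg)], axis=1)
    A = F.T @ ZF

    def stripe_map(x):
        """Binned map of the baselines fitted to the stream x."""
        a_hat = np.linalg.lstsq(A, ZF.T @ x, rcond=1e-10)[0]
        return bin_map(a_hat[seg])

    return bin_map, stripe_map

rng = np.random.default_rng(2)
n_pix, n_base, n_seg, sub = 32, 64, 32, 4
n = n_base * n_seg
fine_pointing = rng.integers(0, n_pix * sub, size=n)
pointing = fine_pointing // sub                        # coarse map pixel of each sample
signal = rng.normal(size=n_pix * sub)[fine_pointing]   # varies inside a map pixel
white = rng.normal(size=n)
freqs = np.fft.rfftfreq(n)
shape = np.zeros_like(freqs)
shape[1:] = np.sqrt(0.05 / freqs[1:])                  # toy 1/f spectrum
corr = np.fft.irfft(np.fft.rfft(rng.normal(size=n)) * shape, n=n)

bin_map, stripe_map = make_destriper(pointing, n_pix, n_base)
tod = signal + white + corr
output_map = bin_map(tod) - stripe_map(tod)
residual = output_map - bin_map(signal + white)        # residual as defined above

from_corr = bin_map(corr) - stripe_map(corr)   # correlated noise left after destriping
from_white = -stripe_map(white)                # baselines fitted to white noise
from_signal = -stripe_map(signal)              # baselines fitted to pixelization noise
```

Here `residual` should equal `from_corr + from_white + from_signal` to numerical precision. The signal term is nonzero only because the toy signal varies inside a map pixel; a signal that is exactly constant within each pixel would produce no baselines at all.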

The analytical results reveal distinct scale‑dependent behaviours. Unmodeled correlated noise dominates the largest angular scales (ℓ ≲ 30) and decays rapidly with increasing ℓ. White‑noise reference baselines produce a flat “noise floor” that is most apparent at intermediate multipoles (ℓ ≈ 100–300). Pixelization‑noise baselines become significant at high multipoles (ℓ ≳ 500), reflecting the interplay between pixel size and the scanning pattern. Baseline‑estimation errors (components 4–6) depend sensitively on the chosen baseline length and on the accuracy of the prior noise model. In particular, if the baseline length is shorter than the optimal value, the correlated‑noise estimation error grows sharply, contaminating a broad range of ℓ.

The authors validate the analytical predictions against full Monte‑Carlo simulations. The agreement for the first three components is excellent, confirming that destriping efficiently separates the primary noise contributions. The residual errors associated with baseline estimation show a more complex pattern, reflecting non‑idealities such as scan‑pattern asymmetries and finite‑sample effects. Nevertheless, the simulations demonstrate that a baseline length of roughly one second, combined with an accurate prior estimate of the 1/f spectrum, yields residuals well below the instrumental noise level across the entire multipole range.

From a practical standpoint, the study provides concrete guidelines for the Planck Low‑Frequency Instrument (LFI) data processing pipeline. It shows that destriping can be applied to both temperature and polarization (Q, U) maps with comparable effectiveness, although the signal‑induced baseline error is slightly larger for polarization. The work also highlights the importance of modelling pixelization noise when high‑resolution polarization maps are required.

Finally, the paper outlines directions for future research: incorporating realistic detector non‑linearities, beam asymmetries, and more complex scanning strategies; extending the analytical framework to include these effects; and developing optimized baseline‑length selection algorithms that balance computational cost against residual error. The results are directly relevant to ongoing Planck LFI analyses and to upcoming CMB experiments that will rely on destriping or similar map‑making techniques.
